特征选择的目标是在给定评价标准下选择非冗余的特征子集,其作为一项重要的数据预处理步骤,能够有效地提高数据分析模型的准确性和高效性,在数据挖掘与知识发现中起着重要的作用[1]。

数据中存在一些缺失值是一种非常普遍的现象,缺失值给数据中的分类知识带来了不一致性问题[2]。粗糙集理论是一种能够有效应对不精确、不一致信息的数据建模与知识获取工具。近年来,人们基于粗糙集理论针对不完备数据的特征选择问题进行了深入的分析和讨论。Kryszkiewicz[3]认为不完备数据中缺失值应是已有值域中的某一特征值,进而提出了一种基于广义差别矩阵的特征选择方法。Parthalin等[4]为了保留分类所产生的不一致决策区域,研究了基于容差粗糙集的特征选择方法。Meng等[5]讨论了不一致不完备决策系统中基于区分矩阵的特征选择方法。Grzymala-Busse等[6]将缺失值考虑为丢失值和不在乎值,提出了基于广义特征关系粗糙集模型的特征选择方法。Qian等[7]提出了一种高效的正向近似加速器,用于加速不完备数据特征选择的启发式特征搜索过程。Dai[8]为了处理不完备数值型数据,建立了一种新的容差模糊粗糙集模型,并提出了基于差别矩阵的特征选择方法。Yang等[9]定义了多准则决策系统中相似优势关系的概念,提出了4种基于差别矩阵的近似分布约简方法。Liang等[10]提出了一种不完备信息系统中基于粗糙熵的启发式特征选择算法。Qian等[11]基于不完备信息系统中的最大一致块概念,提出了一种新的组合信息熵用于度量信息系统的不可分辨能力。Dai等[12]在不完备决策系统中提出一种新的满足单调性约束的条件信息熵。Zhao等[13]提出了一种新的邻域容差条件熵,并将其应用于混合不完备数据中的特征选择问题。

另一方面,实际应用中数据随时间的推移呈现出动态更新的变化趋势,数据的采集与分析是一个不断优化升级的动态过程。面向动态数据的高效特征选择方法成为了当前人们普遍关注的一个研究热点。增量技术可以利用已有计算结果进行特征选择增量计算,以发现新的特征子集,从而避免重新计算整个特征空间以获取新的特征子集[14-15]。近年来,许多学者通过将增量学习技术引入到特征选择问题中,对动态数据环境下的高效特征选择方法进行了广泛深入的研究。Xu等[16]将特征选择问题转化为0-1整数规划问题,提出了一种对象更新条件下的动态特征选择方法。Qian等[17]设计了一种新的基于相对不可辨识对象对的属性重要度度量方式,并提出了动态粒度空间下的基于序贯三支决策模型的增量特征选择方法。Yang等[18]分析了对象动态变化时相对可辨识关系的增量更新机制,提出了基于模糊粗糙集的动态特征选择算法。Lang等[19]提出了覆盖信息系统中基于相关族的动态特征选择方法。Wei等[20]设计了基于辨识矩阵和压缩辨识矩阵的增量特征选择算法,以获得数据动态变化时最优的特征子集。Zeng等[21]基于高斯核模糊粗糙集模型,研究了混合信息系统的动态特征选择方法。Liang等[22]提出了信息熵的批增量递推计算机制,可用于多个数据集之间信息熵的高效融合。Shu等[23]针对含有缺失值的不完备数据,提出了基于正域的增量特征选择算法。Xie等[24]提出了3种不完备数据中相容类的更新策略,并设计了相应的增量特征选择算法。考虑到动态数据中特征值存在频繁的修改和更新操作,Wang等[25]针对完备数据集研究了特征值动态更新时信息熵的增量更新机制,进一步设计了相应的动态特征选择算法。刘吉超等[26]针对不完备数据中数据集维数动态增加的情形,分析了互补信息熵的更新机制,进而提出了一种增量特征约简算法。钱进等[27]提出一种基于正域处理面向成组对象集的增量式特征选择算法。综合上文所述,大部分研究者针对完备决策系统的动态更新特征选择问题进行深入的研究,鲜有对不完备决策系统动态更新特征问题研究。基于正域处理不完备决策系统的特征选择存在无法处理边界域中的样本分类的不确定性问题。信息熵作为度量信息不确定性的度量标准,有助于不完备数据特征选择问题研究,而引入增量计算机制可以加速特征选择过程,有效减少计算时间。本文针对不完备决策系统,设计了一种面向特征值动态更新的特征选择算法。文中首先分析了特征值更新时不完备决策系统中相容类和决策类的动态变化模式,并以此给出了条件信息熵的增量计算机制,进而设计了基于增量条件信息熵的动态特征算法,最后通过实验验证进一步说明了算法的有效性和高效性。

1 基本概念粗糙集理论中,信息系统表示为一个四元组

完备信息系统中条件特征的任何子集

| ${\rm{IND}}(P) = \{ (x,y) \in U \times U|\forall a \in P,f(x,a) = f(y,a)\} $ |

| $\begin{array}{l} T(P) = \{ (x,y) \in U \times U|\forall a \in P,f(x,a) = f(y,a) \vee \\ \quad\quad\quad f(x,a) = * \vee f(y,a) = *\} \end{array} $ |

| $\begin{array}{l} \quad\underline {{\rm{apr}}} ({D_i}) = \{ x \in U|{T_P}(x) \subseteq {D_i}\} \\ \overline {{\rm{apr}}} ({D_i}) = \{ x \in U|{T_P}(x) \cap {D_i} \ne \text{Ø}\} \end{array} $ |

基于粗糙集理论的特征选择方法根据特征重要度的不同度量标准,可笼统地归纳为依赖性度量、一致性度量、距离度量和信息度量。前面3种度量方法都局限于数据的实际值,对含有噪声或缺失值的数据处理十分敏感。而基于信息论的度量方法仅关注随机变量的概率分布,不关注其实际值,成为了高维数据中常用的特征重要度度量方式。借鉴香农熵的传统定义形式,Dai等[8,12]定义了一种新的满足单调性的条件熵来度量不完备决策系统协调程度的不确定性。给定一个不完备决策系统

| $H(d|P) = - \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^m {\frac{{|{T_P}({x_i}) \cap {D_j}|}}{{|U|}}{\log_2} } } \frac{{|{T_P}({x_i}) \cap {D_j}|}}{{|{T_P}({x_i})|}}$ | (1) |

根据式(1),通过从特征子集

| ${\rm{sig}}(a,P,d) = H(d|P) - H(d|P - \{ a\} )$ |

当不完备决策系统中特征值发生动态更新时,由特征子集所诱导的相容关系和由决策特征所诱导的等价关系会随之变化,进而使得特征度量准则条件熵发生变化。下面,首先分析一组对象的特征值发生更新时相容类和决策类的变化情况。由于条件熵的计算与相容类和决策类中的对象顺序无关,为了方便阐述,下文中假设决策系统中发生特征值修改的对象集合为

| $\begin{array}{l} U/T'(P) = \{ {{T'}_P}({x_i})|i = 1,2, \cdots ,p,p + 1, \cdots ,\\ \quad\quad\quad q,q + 1, \cdots ,k, k + 1, \cdots ,n\} \end{array} $ |

式中:

不完备决策系统中决策特征值发生变化后决策类的更新为

| $U/d = \{ {D'_j}|j = 1,2, \cdots ,{q_1},{q_1} + 1, \cdots ,{q_2},{q_2} + 1, \cdots ,m\} $ |

式中:

根据上文中不完备决策系统中特征值动态修改后相容类和决策类的更新模式,下面进一步可得相容类与决策类交集的动态更新方式。

对于任意对象

对于任意对象

| $ \begin{array}{l}\quad\quad\quad\quad\quad\quad\quad \left|{{T}^{\prime }}_{P}({x}_{i})\cap {D}^{\prime }\right|=\\ \left\{\begin{array}{*{20}{l}}|{T}_{P}({x}_{i})\cap {D}_{j}|,\quad 1\leqslant j\leqslant {q}_{1}\\ \begin{array}{l}|{T}_{P}({x}_{i})\cap {D}_{j}|-|{T}_{P}^-({x}_{i})\cap {D}_{j}|-\\ |{T}_{P}({x}_{i})\cap {D}_{j}^-|+|{T}_{P}^-({x}_{i})\cap {D}_{j}^-|,\end{array}\quad {{q}_{1}+1\leqslant j\leqslant {q}_{2}}\\ |{T}_{P}({x}_{i})\cap {D}_{j}|-|{T}_{P}^-({x}_{i})\cap {D}_{j}|-\\ \begin{array}{l}|{T}_{P}({x}_{i})\cap {T}_{P}^-({x}_{i})|+|{T}_{P}^-({x}_{i})\cap {D}_{j}^-|+\\ |{T}_{P}^+({x}_{i})\cap {D}_{j}^+|\end{array},\quad{q}_{2}+1\leqslant j\leqslant m \end{array}\right.\end{array}$ |

对于任意对象

| $\begin{array}{l} \quad\quad\quad\quad\quad\quad\quad|{{T'}_P}({x_i}) \cap {{D'}_j}| = \\ \left\{ {\begin{array}{*{20}{l}} {|{T_P}({x_i}) \cap {D_j}|,}\quad{1 \leqslant j \leqslant {q_1}} \\ \begin{array}{l} \!\!\!\!\!|{T_P}({x_i}) \cap {D_j}| - |T_P^ - ({x_i}) \cap {D_j}| - \\ \!\!\!\!\!|{T_P}({x_i}) \cap D_j^ - | + |T_P^ - ({x_i}) \cap D_j^ - |, \\ \end{array} \quad{{q_1} + 1 \leqslant j \leqslant m} \end{array}} \right. \end{array} $ |

通过分析不完备决策系统中相容类和决策类,以及其交集的动态更新模式,可得特征值发生修改时决策特征

| ${H'_U}(d|P) = {H_U}(d|P) + \varDelta $ |

其中

| $ \begin{split}\varDelta =-{\displaystyle\sum\limits_{i=p+1}^{q}{\displaystyle\sum\limits_{j=1}^{m}\dfrac{|{{T}^{\prime }}_{P}({x}_{i})\cap {{D}^{\prime }}_{j}|}{\left|U\right|}\mathrm{log_2}\dfrac{|{{T}^{\prime }}_{P}({x}_{i})\cap {{D}^{\prime }}_{j}|}{|{{T}^{\prime }}_{P}({{x}^{\prime }}_{i})|}+}}\\ {\displaystyle\sum\limits_{i=p+1}^{n}{\displaystyle\sum\limits_{j=1}^{m}\dfrac{|{T}_{P}({x}_{i})\cap {D}_{j}|}{\left|U\right|}}} \mathrm{log_2}\dfrac{|{T}_{P}({x}_{i})\cap {D}_{j}|}{|{T}_{P}({x}_{i})|}+\quad\quad\\ {\displaystyle\sum\limits_{i=q+1}^{n}{\displaystyle\sum\limits_{j={q}_{1}+1}^{m}\Bigg(\frac{|{T}_{P}^-({x}_{i})\cap {D}_{j}|+|{T}_{P}({x}_{i})\cap {D}_{j}^-|}{\left|U\right|}}}- \quad\quad\\ \dfrac{|{T}_{P}^-({x}_{i})\cap {D}_{j}^-|+|{T}_{P}({x}_{i})\cap {D}_{j}|+|{T}_{P}^+({x}_{i})\cap {D}_{j}^+|}{\left|U\right|}\Bigg) \cdot \quad\\ \mathrm{log_2}\dfrac{|{{T}^{\prime }}_{P}({x}_{i})\cap {D}^{\prime }|}{|{{T}^{\prime }}_{P}({x}_{i})|}\quad\quad\quad\quad\quad\quad\quad\quad\end{split}$ |

基于上述分析,算法1给出了不完备决策系统中特征值更新时基于条件熵的增量式特征选择算法来计算新的特征子集。

算法1 不完备决策系统中基于条件熵的增量式特征选择算法(IFS-CE-IDS)

输入 不完备决策系统

输出 特征选择后的特征子集

1)初始化特征子集

2)根据特征值更新对象集合

3)计算

4)如果

5)当

6)对任意特征

7)返回A。

该算法中条件熵的计算时间是

本文选取了9组UCI数据集进行性能测试,数据集详细信息如表1所示。对于完备数据集Car和kr-vs-kp,随机删除原始数据集中5%的已知特征值变为缺失值,使原始完备数据集变为不完备数据集。对含有数值型数据的数据集Hepatitis、Wisconsin、Dermatology和Ozone,将数值型特征进行了离散化处理。如数据集Hepatitis包含19个特征,其中6个为数值型特征;数据集Wisconsin含有1个数值型特征;数据集Dermatology包含1个数值型特征;数据集Ozone都是数值型特征。实验环境配置为:Intel(R)Core(TM)i5-4210M CPU 2.60 GHz,8 GB内存,操作系统为Windows 10,程序开发平台为IntelliJ IDEA,编程语言为Java。

| 表 1 数据集描述 Tab.1 Description of the datasets |

为验证本文所提出算法IFS-CE-IDS处理数据集特征值更新问题具有高效性和可行性,使用传统批量式特征选择算法HFS-CE-IDS与算法IFS-CE-IDS在9组UCI数据集上进行测试,从分类精度、决策性能以及计算效率三方面对传统批量式特征选择算法HFS-CE-IDS和IFS-CE-IDS进行比较。

3.1 分类精度分析为比较算法HFS-CE-IDS与算法IFS-CE-IDS所得特征子集的分类精度,对表1中9组数据集选择其中50%对象,并且更新其特征值,然后分别运行传统批量式算法HFS-CE-IDS和增量式算法IFS-CE-IDS对特征值更新数据集进行特征选择。使用决策树J48、Naïve Bayes、SVM(support vector machines)分类器验证这两种算法的分类性能。实验结果如表2~4所示。

| 表 2 J48分类精度比较 Tab.2 J48 classification accuracy comparison |

| 表 3 Naïve Bayes分类精度比较 Tab.3 Naïve Bayes classification accuracy comparison |

| 表 4 SVM分类精度比较 Tab.4 SVM classification accuracy comparison |

见表2,从两种算法在J48分类器的分类精度比较可知,算法IFS-CE-IDS在数据集Hepatitis、Audiology和Soybean上所得的分类精度相较算法HFS-CE-IDS所得分类精度差一些,而在其他6个数据集上算法IFS-CE-IDS所得分类精度与算法HFS-CE-IDS所得分类精度相同甚至更好。从表3可知,在Naïve Bayes分类器中,算法IFS-CE-IDS在9个数据集上的分类精度结果表明新提出算法在大部分数据集上的分类精度不比算法HFS-CE-IDS的分类精度差,例如在数据集Cancer、Car和kr-vs-kp上两种算法的分类精度基本相同。

从表4可知,在SVM分类器中,与算法HFS-CE-IDS相比,新提出算法的分类精度在Hepatitis、Audiology、Cancer、Soybean、Dermatology、Wisconsin、Car、Ozone、kr-vs-kp等7个数据集上相等甚至更好。

实验结果表明,算法IFS-CE-IDS在大部分数据集上能够在特征子集和分类精度上取得和算法HFS-CE-IDS相接近的,甚至更好的结果,可以证明算法IFS-CE-IDS是一种有效的特征选择算法。

3.2 决策性能分析为检验算法IFS-CE-IDS的决策性能,本文使用文献[28]中对不完备数据进行评估所提出的6种评估函数评估算法HFS-CE-IDS以及算法IFS-CE-IDS计算的特征子集的决策性能。

6种评估函数中,特征集合

| ${a_C}(F) = \frac{{\displaystyle\sum_{{D_j} \in U/D} {|{{\underline {{\rm{apr}}} }_C}({D_j})|} }}{{\displaystyle\sum_{{D_j} \in U/D} {|{{\overline {{\rm{apr}}} }_C}({D_j})|} }}$ |

式中:

不完备决策系统

| ${c_C}(D) = \frac{{\displaystyle\sum\nolimits_{{D_j} \in U/D} {|{{\underline {{\rm{apr}}} }_C}({D_j})|} }}{{|U|}}$ |

不完备决策系统

| $\alpha ({\rm{IDS}}) = \frac{1}{m}\sum\limits_{{N_i}}^m {\frac{1}{{{N_i}}}} \sum\limits_{j = 1}^{{N_i}} {\frac{{|{X_i} \cap {D_j}|}}{{|{X_i}|}}} $ |

式中:

不完备决策系统

| $ \beta ({\rm{IDS}})=\frac{1}{m}[1-\frac{4}{\left|{X}_{i}\right|}{\sum\limits_{j=1}^{{N}_{i}}|{X}_{i}\cap {D}_{j}|\mu ({Z}_{ij})(1-\mu ({Z}_{ij}))}]$ |

式中:

不完备决策系统

| $\gamma ({\rm{IDS}}) = \sum\limits_{j = 1}^n {\frac{{|{D_j}|}}{{{N_j}|U|}}\sum\limits_{k = 1}^{{N_j}} {\frac{{|{X_k} \cap {D_j}|}}{{|U|}}} } $ |

式中

不完备决策系统

| $\vartheta ({\rm{IDS}}) = \frac{1}{{|U|}}\sum\limits_{i = 1}^m {\frac{{|{X_i}|}}{{|U|}}} $ |

选择表1中每组数据集中50%数据对象,更新其特征值,然后分别运行算法HFS-CE-IDS和算法IFS-CE-IDS对特征值更新数据集进行特征选择。并且使用上述6种评估函数评估每组数据集更新后两种算法特征选择的特征子集的决策性能,实验结果如表5所示。

| 表 5 HFS-CE-IDS与IFS-CE-IDS的度量比较 Tab.5 Measurement comparison of HFS-CE-IDS with IFS-CE-IDS |

从表5的实验结果可知,算法IFS-CE-IDS与算法HFS-CE-IDS相比,在近似准确评估

结合两种算法在6种评估函数下的结果,表明算法IFS-CE-IDS在大部分数据集下能够取得与算法HFS-CE-IDS相同的决策性能。

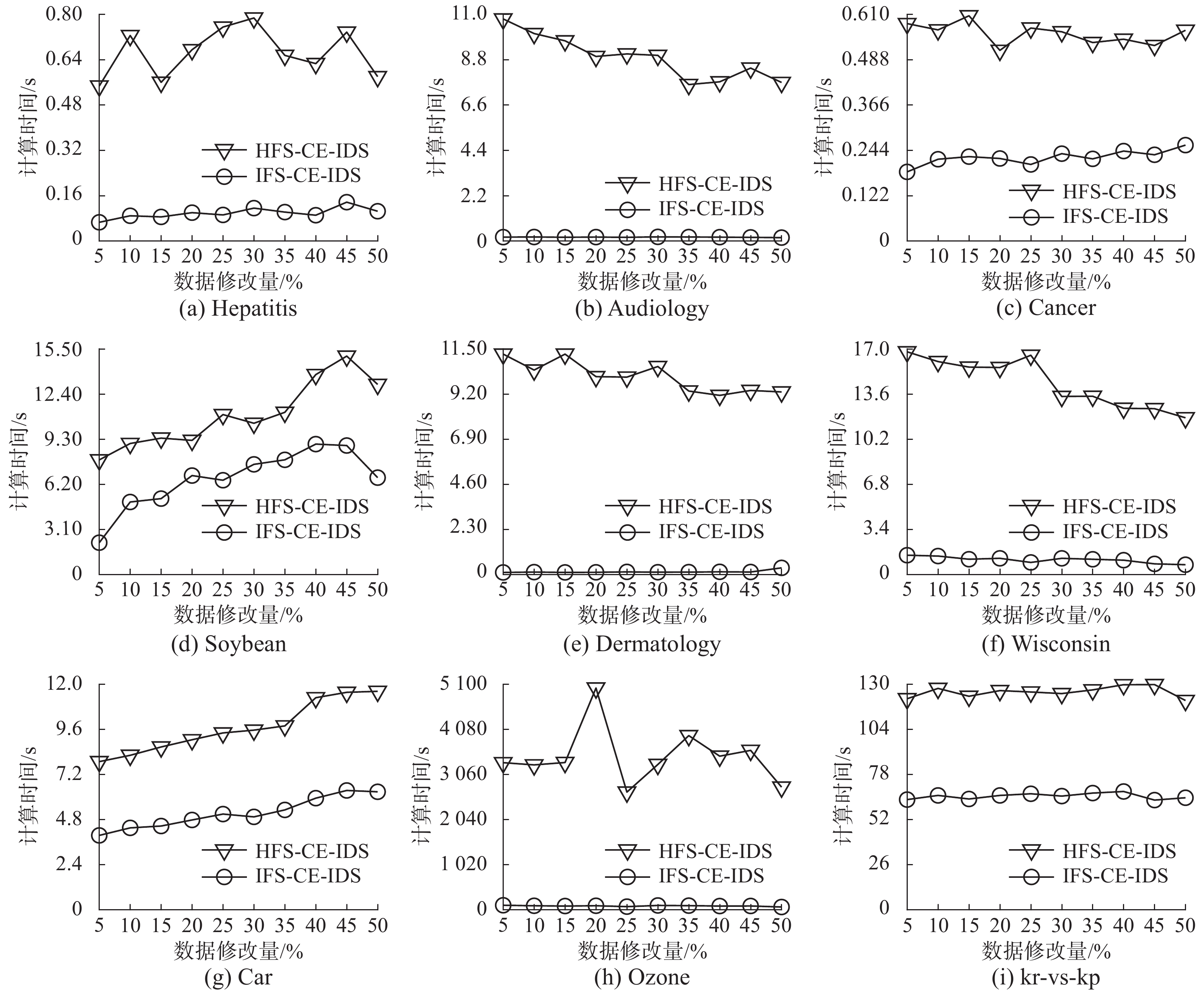

3.3 效率分析为验证本文提出的增量式特征选择算法IFS-CE-IDS的高效性,采用传统的批量式特征选择算法HFS-CE-IDS作比较,该算法是一种与所提出算法基于相同特征评估准则的非增量方法。对表1中的每组数据集,依次选择其中的5%, 10%, 15%, ···, 50%数据对象并更新其对象特征值。同时发生变化的特征值取决于对象特征的值域。当有不同规模的数据对象特征值发生更新,分别使用增量式特征选择算法IFS-CE-IDS和传统批量式特征选择算法HFS-CE-IDS对数据集进行特征选择,求解特征选择结果。计算时间的比较结果如图1所示,图中详细给出两种算法计算时间随数据对象特征值更新规模的变化而发生的变化。

|

Download:

|

| 图 1 算法HFS-CE-IDS与算法IFS-CE-IDS计算时间比较 Fig. 1 Computational time comparison between HFS-CE-IDS and IFS-CE-IDS | |

从图1可知,当不同规模的数据对象特征值发生更新后,传统批量式特征选择算法HFS-CE-IDS比增量式特征选择算法IFS-CE-IDS花费更多时间来选择特征值更新后的特征子集,主要的原因是增量式算法IFS-CE-IDS能够避免重复的计算,可以利用之前已有的计算结果,从而使得特征选择的计算效率得以提高。算法IFS-CE-IDS在9组数据集上的计算效率普遍比算法HFS-CE-IDS高,尤其是在一些数据规模较大的数据集上,算法IFS-CE-IDS的高效性更加明显。比如在Ozone数据集上算法IFS-CE-IDS的计算效率远优于算法HFS-CE-IDS的计算效率。图1中两种特征选择算法的计算时间都存在一些波动,如数据集Ozone中对象数据对象特征值更新20%时,算法HFS-CE-IDS的计算时间突然变得比其他比例耗时更大,因为数据集的数值对象特征值发生更新后,它的相容类与决策类因数据对象特征更新而产生变化,从而导致计算时间发生波动。

在实验分析中,通过算法HFS-CE-IDS与算法IFS-CE-IDS的分类精度、决策性能和计算效率三部分实验结果可知,算法IFS-CE-IDS与算法HFS-CE-IDS相比,在大部分数据集上进行特征选择所得特征子集数量相接近,两种算法分类精度和决策性能基本相同,但算法IFS-CE-IDS的计算时间小于算法HFS-CE-IDS,尤其在数据规模较大的数据集上计算时间的优势更加明显。通过本节分类精度、决策性能和计算效率三部分实验分析,证明IFS-CE-IDS是一种高效的处理数据对象特征值更新问题的增量式特征选择算法。

4 结束语本文提出了不完备决策系统中面向特征值动态更新的增量式特征选择算法。通过分析不完备决策系统中条件特征值和决策特征值同时更新时相容类和决策类的动态更新模式,构造了条件信息熵的增量计算机制,并进一步设计了一种基于动态不完备决策系统的增量式特征选择算法。实验选取了9组UCI公共数据集,并通过分类精度、决策性能以及计算效率3个方面与传统批量式特征选择算法进行了性能对比。实验结果表明,本文算法所选择的特征子集与批量式算法在分类精度和决策性能具有基本一致的性能表现。同时,在面对不完备数据中特征值的动态变化环境下,本文算法的计算效率远优于传统批量式算法,可在较短时间内计算出一个可行的特征子集。实验中部分数据集使用算法IFS-CE-IDS需要进行特征转化,导致失去部分有效信息,降低算法结果质量,未来将致力于寻求更有效处理混合数据的增量特征算法。

| [1] |

KWAK N, CHOI C H. Input feature selection by mutual information based on Parzen window[J]. IEEE transactions on pattern analysis and machine intelligence, 2002, 24(12): 1667-1671. DOI:10.1109/TPAMI.2002.1114861 ( 0) 0)

|

| [2] |

SLOWINSKI R, VANDERPOOTEN D. A generalized definition of rough approximations based on similarity[J]. IEEE transactions on knowledge and data engineering, 2000, 12(2): 331-336. DOI:10.1109/69.842271 ( 0) 0)

|

| [3] |

KRYSZKIEWICZ M. Rough set approach to incomplete information systems[J]. Information sciences, 1998, 112(1/2/3/4): 39-49. ( 0) 0)

|

| [4] |

PARTHALÁIN N M, SHEN Qiang. Exploring the boundary region of tolerance rough sets for feature selection[J]. Pattern recognition, 2009, 42(5): 655-667. DOI:10.1016/j.patcog.2008.08.029 ( 0) 0)

|

| [5] |

MENG Zuqiang, SHI Zhongzhi. Extended rough set-based attribute reduction in inconsistent incomplete decision systems[J]. Information sciences, 2012, 204: 44-69. DOI:10.1016/j.ins.2012.04.004 ( 0) 0)

|

| [6] |

GRZYMALA-BUSSE J W, CLARK P G, KUEHNHAUSEN M. Generalized probabilistic approximations of incomplete data[J]. International journal of approximate reasoning, 2014, 55(1): 180-196. DOI:10.1016/j.ijar.2013.04.007 ( 0) 0)

|

| [7] |

QIAN Yuhua, LIANG Jiye, PEDRYCZ W, et al. An efficient accelerator for attribute reduction from incomplete data in rough set framework[J]. Pattern recognition, 2011, 44(8): 1658-1670. DOI:10.1016/j.patcog.2011.02.020 ( 0) 0)

|

| [8] |

DAI Jianhua. Rough set approach to incomplete numerical data[J]. Information sciences, 2013, 241: 43-57. DOI:10.1016/j.ins.2013.04.023 ( 0) 0)

|

| [9] |

YANG Xibei, YANG Jingyu, WU Chen, et al. Dominance-based rough set approach and knowledge reductions in incomplete ordered information system[J]. Information sciences, 2008, 178(4): 1219-1234. DOI:10.1016/j.ins.2007.09.019 ( 0) 0)

|

| [10] |

LIANG Jiye, XU Zongben. The algorithm on knowledge reduction in incomplete information systems[J]. International journal of uncertainty, fuzziness and knowledge-based systems, 2002, 10(1): 95-103. DOI:10.1142/S021848850200134X ( 0) 0)

|

| [11] |

QIAN Yuhua, LIANG Jiye, WANG Feng. A new method for measuring the uncertainty in incomplete information systems[J]. International journal of uncertainty, fuzziness and knowledge-based systems, 2009, 17(6): 855-880. DOI:10.1142/S0218488509006303 ( 0) 0)

|

| [12] |

DAI Jianhua, WANG Wentao, XU Qing. An uncertainty measure for incomplete decision tables and its applications[J]. IEEE transactions on cybernetics, 2013, 43(4): 1277-1289. DOI:10.1109/TSMCB.2012.2228480 ( 0) 0)

|

| [13] |

ZHAO Hua, QIN Keyun. Mixed feature selection in incomplete decision table[J]. Knowledge-based systems, 2014, 57: 181-190. DOI:10.1016/j.knosys.2013.12.018 ( 0) 0)

|

| [14] |

RAGHAVAN V, HAFEZ A. Dynamic data mining[C]//Proceedings of the 13th International Conference on Industrial and Engineering Applications of Artificial Intelligence and Expert Systems. New Orleans, Louisiana, USA, 2000.

( 0) 0)

|

| [15] |

LI Tianrui, RUAN Da, GEERT W, et al. A rough sets based characteristic relation approach for dynamic attribute generalization in data mining[J]. Knowledge-based systems, 2007, 20(5): 485-494. DOI:10.1016/j.knosys.2007.01.002 ( 0) 0)

|

| [16] |

XU Yitian, WANG Laisheng, ZHANG Ruiyan. A dynamic attribute reduction algorithm based on 0-1 integer programming[J]. Knowledge-based systems, 2011, 24(8): 1341-1347. DOI:10.1016/j.knosys.2011.06.007 ( 0) 0)

|

| [17] |

QIAN Jin, DANG Chuangyin, YUE Xiaodong, et al. Attribute reduction for sequential three-way decisions under dynamic granulation[J]. International journal of approximate reasoning, 2017, 85: 196-216. DOI:10.1016/j.ijar.2017.03.009 ( 0) 0)

|

| [18] |

YANG Yanyan, CHEN Degang, WANG Hui, et al. Incremental perspective for feature selection based on fuzzy rough sets[J]. IEEE transactions on fuzzy systems, 2018, 26(3): 1257-1273. DOI:10.1109/TFUZZ.2017.2718492 ( 0) 0)

|

| [19] |

LANG Guangming, CAI Mingjie, FUJITA H, et al. Related families-based attribute reduction of dynamic covering decision information systems[J]. Knowledge-based systems, 2018, 162: 161-173. DOI:10.1016/j.knosys.2018.05.019 ( 0) 0)

|

| [20] |

WEI Wei, WU Xiaoying, LIANG Jiye, et al. Discernibility matrix based incremental attribute reduction for dynamic data[J]. Knowledge-based systems, 2018, 140: 142-157. DOI:10.1016/j.knosys.2017.10.033 ( 0) 0)

|

| [21] |

ZENG Anping, LI Tianrui, LIU Dun, et al. A fuzzy rough set approach for incremental feature selection on hybrid information systems[J]. Fuzzy sets and systems, 2015, 258: 39-60. DOI:10.1016/j.fss.2014.08.014 ( 0) 0)

|

| [22] |

LIANG Jiye, WANG Feng, DANG Chuangyin, et al. A group incremental approach to feature selection applying rough set technique[J]. IEEE transactions on knowledge and data engineering, 2014, 26(2): 294-308. DOI:10.1109/TKDE.2012.146 ( 0) 0)

|

| [23] |

SHU Wenhao, SHEN Hong. Incremental feature selection based on rough set in dynamic incomplete data[J]. Pattern recognition, 2014, 47(12): 3890-3906. DOI:10.1016/j.patcog.2014.06.002 ( 0) 0)

|

| [24] |

XIE Xiaojun, QIN Xiaolin. A novel incremental attribute reduction approach for dynamic incomplete decision systems[J]. International journal of approximate reasoning, 2018, 93: 443-462. DOI:10.1016/j.ijar.2017.12.002 ( 0) 0)

|

| [25] |

WANG Feng, LIANG Jiye, DANG Chuangyin. Attribute reduction for dynamic data sets[J]. Applied soft computing, 2013, 13(1): 676-689. DOI:10.1016/j.asoc.2012.07.018 ( 0) 0)

|

| [26] |

刘吉超, 王锋, 宋鹏. 缺失数据的维数增量式特征选择[J]. 计算机工程与应用, 2019, 55(17): 95-99. LIU Jichao, WANG Feng, SONG Peng. Dimension incremental feature selection algorithm for missing data[J]. Computer engineering and applications, 2019, 55(17): 95-99. DOI:10.3778/j.issn.1002-8331.1806-0029 (  0) 0)

|

| [27] |

钱进, 朱亚炎. 面向成组对象集的增量式属性约简算法[J]. 智能系统学报, 2016, 11(4): 496-502. QIAN Jin, ZHU Yayan. An incremental attribute reduction algorithm for group objects[J]. CAAI transactions on intelligent systems, 2016, 11(4): 496-502. (  0) 0)

|

| [28] |

QIAN Yuhua, DANG Chuangyin, LIANG Jiye, et al. On the evaluation of the decision performance of an incomplete decision table[J]. Data & knowledge engineering, 2008, 65(3): 373-400. ( 0) 0)

|

2021, Vol. 16

2021, Vol. 16