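In recent years, with the rise of deep learning, human action recognition has attracted wide attention. Traditional action recognition methods such as iDT[1] are computationally expensive and slow. Advances in deep learning and convolutional neural networks have driven progress in action recognition. Mainstream deep network models such as AlexNet[2], VGG-Net[3], GoogLeNet[4], ResNet[5], and DenseNet[6] have achieved good results on 2D image data.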

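Deep-learning-based human action recognition currently falls into two main schools: 3D spatiotemporal convolution (3D ConvNets) and two-stream convolutional networks (Two-Stream). Both are mainly built on the ResNet architecture.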

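This paper adopts DenseNet as the network architecture and learns spatiotemporal information through 2D convolution, proposing a new video-based action recognition method: the 2D spatiotemporal dense connected convolutional network (2DSDCN). Frames that characterize the action are first selected from the video, organized in spatiotemporal order into BGR-format data, and fed into 2DSDCN for recognition. 2DSDCN adds spatiotemporal information extraction layers on top of DenseNet and improves accuracy on the UCF101[7] dataset by about 1% over plain DenseNet. Without multi-stream fusion or fusion with iDT information, the method reaches a best accuracy of 94.46% on UCF101.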

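This paper proposes a new 2D-convolution-based action recognition method that extracts spatiotemporal information with 2D convolution; introduces DenseNet as the network architecture for action recognition and analyzes how it promotes spatiotemporal information extraction; and proposes a new BGR-image-based method for organizing and extracting spatiotemporal relations.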

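1 Related work

1.1 Convolutional networks

A convolutional neural network consists of alternately stacked convolutional layers, pooling layers, and fully connected layers. AlexNet, LeNet[8], and VGG-Net do not differ much structurally; they deepen the model by arranging convolutional, pooling, and fully connected layers appropriately. GoogLeNet introduces the Inception structure, which concatenates feature maps and enriches the extracted features through multiple resolutions.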

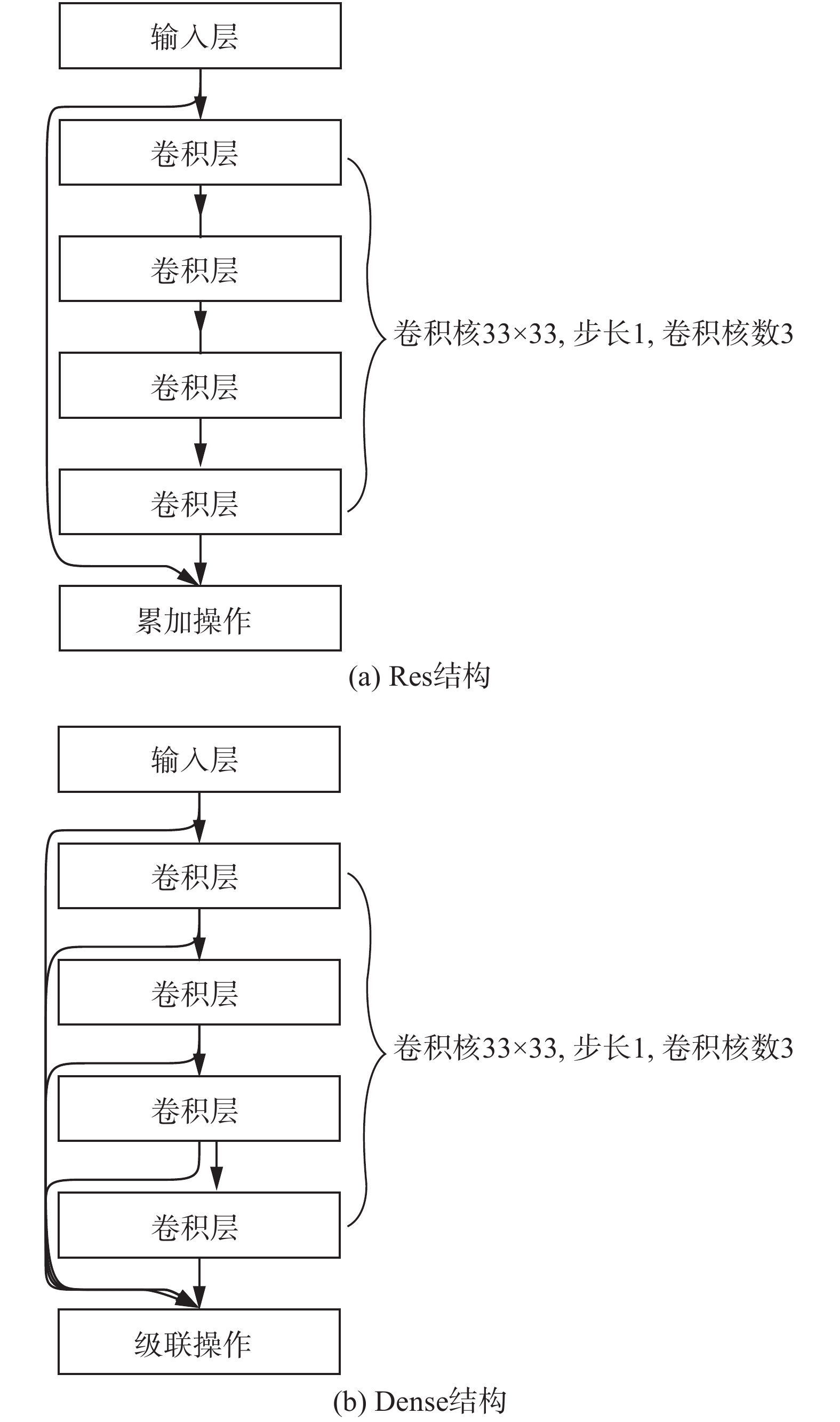

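ResNet introduces the residual block, which adds a direct (shortcut) connection that passes the current output to later layers while bypassing the nonlinear transformation. Gradients can flow directly to earlier layers, which helps to alleviate vanishing and exploding gradients. Its drawback is that combining a layer's output with its convolved output by element-wise addition may impede the information flow in the network[5-6].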

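DenseNet proposes a different connection pattern on the basis of ResNet. Within a dense block it connects each layer to all subsequent layers, so the input of each layer is the feature maps of all preceding layers. Unlike ResNet, which accumulates values, DenseNet accumulates along the channel dimension, so it overcomes this drawback of ResNet and improves information flow. A DenseNet consists of dense blocks with a transition layer between every two of them. The structure inside a dense block follows ResNet's bottleneck design, while the transition layer contains a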

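For a convolutional network, the width, height, and number of channels of the input data, as well as the distribution of the data, strongly affect the network's actual performance. The native input of these networks is a 3-channel RGB image whose values lie in 0~255 before normalization. Therefore, to make full use of these networks, this paper organizes the spatiotemporal information as a BGR image and uses that as the input data format.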
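As a minimal sketch of the input convention described above, the snippet below converts an H x W x 3 RGB array with unnormalized 8-bit values into BGR channel order by reversing the channel axis. The function name `rgb_to_bgr` and the 2 x 2 test image are hypothetical and only serve as illustration.

```python
import numpy as np


def rgb_to_bgr(image_rgb: np.ndarray) -> np.ndarray:
    """Reverse the channel axis of an H x W x 3 image (RGB -> BGR)."""
    if image_rgb.ndim != 3 or image_rgb.shape[-1] != 3:
        raise ValueError("expected an H x W x 3 array")
    # Copy so the result does not share memory with the input.
    return image_rgb[..., ::-1].copy()


# Hypothetical 2 x 2 image with unnormalized values in the range 0~255.
demo_rgb = np.array(
    [[[255, 0, 0], [0, 255, 0]],
     [[0, 0, 255], [10, 20, 30]]],
    dtype=np.uint8,
)
demo_bgr = rgb_to_bgr(demo_rgb)
```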

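1.2 Action recognition algorithms

According to their characteristics, action recognition methods can be roughly divided into two classes: algorithms based on feature engineering and algorithms based on deep learning.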

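Feature-engineering algorithms are the traditional approach; the most classic is improved dense trajectories (iDT)[9-13]. iDT improves on the dense trajectories (DT) algorithm. The main idea is to use the optical flow field to obtain trajectories in the video sequence, extract HOF, HOG, and MBH features along them, encode the features with bag of features (BOF), and classify the encoded result with an SVM. iDT removes the effect of camera motion and refines the optical flow. In addition, it applies L1 normalization to the HOF, HOG, and MBH features followed by a square root of each dimension, and uses Fisher vector encoding. These changes raise accuracy on UCF50 from 84.5% to 91.2% and on HMDB51 from 46.6% to 57.2%[1].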
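The descriptor normalization mentioned above (L1 normalization followed by a per-dimension square root) can be sketched as follows. This is an illustration only, not the iDT implementation; the function name `l1_root_normalize`, the small `eps` guard, and the example histogram are assumptions.

```python
import numpy as np


def l1_root_normalize(descriptor, eps=1e-12):
    """L1-normalize a descriptor, then take the square root of each dimension."""
    v = np.asarray(descriptor, dtype=np.float64)
    # eps (an assumption) avoids division by zero for an all-zero descriptor.
    v = v / (np.abs(v).sum() + eps)
    # Sign-preserving root; histogram descriptors are usually non-negative.
    return np.sign(v) * np.sqrt(np.abs(v))


# Hypothetical 4-bin histogram whose entries sum to 16.
demo_hist = [1.0, 3.0, 0.0, 12.0]
demo_normalized = l1_root_normalize(demo_hist)
```

After this step the squared entries of a non-negative descriptor sum to approximately 1, so the result has unit L2 norm.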

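Deep-learning algorithms fall into three classes: convolution-based algorithms[14], Two-Stream-based algorithms[15-18], and algorithms based on human skeleton sequences[19-20]. The first two recognize actions at the pixel level of the video, whereas the last relies on per-frame keypoints or skeleton information and performs recognition over time.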

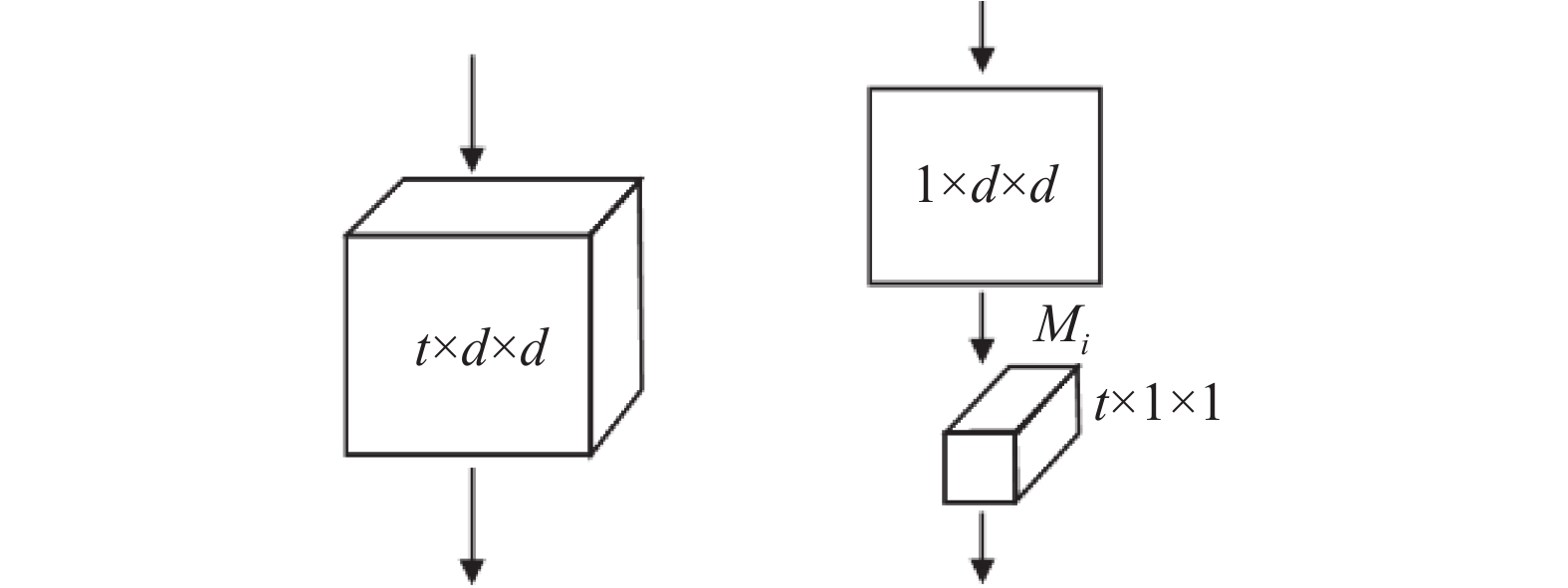

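The most classic convolution-based algorithm is C3D. The basic idea of C3D (3D ConvNets), proposed by TRAN Du et al.[21], is to extend 2D convolution to three dimensions and use 3D convolution to extract spatiotemporal features. Inspired by C3D, 3D versions of a series of 2D convolutional architectures have been applied to action recognition, for example 3D ResNets[22], P3D[23], and T3D[24]. To address the training difficulty caused by redundant parameters in 3D convolution, TRAN Du and WANG Heng, inspired by FSTCN (factorized spatio-temporal convolutional networks)[25], proposed the R(2+1)D[26] network, which combines 2D and 3D convolution. Figure 1 compares the (2+1)D kernel with the 3D kernel: R(2+1)D decomposes the 3D spatiotemporal convolution into a 2D spatial convolution and a 1D temporal convolution, which separates spatial from temporal information and makes it easier to optimize each.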

Fig. 1 (2+1)D vs 3D convolution
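To make the factorization concrete, the sketch below counts the weights of a full t x d x d 3D convolution and of its (2+1)D counterpart, a 1 x d x d spatial convolution followed by a t x 1 x 1 temporal convolution. Biases are ignored, and the intermediate channel count `c_mid` is a free parameter chosen here purely for illustration; the function names are hypothetical.

```python
def conv3d_params(c_in, c_out, t, d):
    """Weights of one full 3D convolution with a t x d x d kernel."""
    return c_in * c_out * t * d * d


def conv2plus1d_params(c_in, c_mid, c_out, t, d):
    """Weights of a 1 x d x d spatial conv followed by a t x 1 x 1 temporal conv."""
    spatial = c_in * c_mid * d * d   # 2D spatial part
    temporal = c_mid * c_out * t     # 1D temporal part
    return spatial + temporal


# Hypothetical layer: 64 -> 64 channels, 3 x 3 x 3 kernel, c_mid = 64.
full_3d = conv3d_params(64, 64, t=3, d=3)
factorized = conv2plus1d_params(64, 64, 64, t=3, d=3)
```

With these example values the full 3D kernel has 110592 weights and the factorized pair has 49152; choosing a larger `c_mid` narrows the gap.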

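Two-Stream[27] algorithms typically learn spatial and temporal information in separate streams and then fuse the features for recognition. The classic example is the Two-Stream Network proposed by Simonyan et al.[27], which trains two CNNs, one on 2D RGB frames and one on optical flow, and finally fuses the outputs of the two classifiers.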
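One simple way to fuse the two classifiers is a weighted average of their softmax scores. The sketch below assumes this averaging scheme; the function names, the `temporal_weight` parameter, and the example logits are hypothetical and not taken from the cited work.

```python
import numpy as np


def softmax(logits):
    """Numerically stable softmax over the last axis."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def late_fusion(spatial_logits, temporal_logits, temporal_weight=0.5):
    """Weighted average of the class probabilities of the two streams."""
    p_spatial = softmax(np.asarray(spatial_logits, dtype=np.float64))
    p_temporal = softmax(np.asarray(temporal_logits, dtype=np.float64))
    return (1.0 - temporal_weight) * p_spatial + temporal_weight * p_temporal


# Hypothetical 3-class scores: the RGB stream favors class 0,
# the optical-flow stream favors class 1 more strongly.
fused = late_fusion([2.0, 1.0, 0.1], [0.5, 2.5, 0.2])
predicted_class = int(np.argmax(fused))
```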

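Skeleton-sequence algorithms use methods such as recurrent neural networks and recognize actions from keypoints that represent the human body over a time series. Current research mainly combines skeleton information with different recurrent neural networks.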

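The mainstream data formats today are RGB images and optical flow images. Optical flow usually represents motion better than RGB, but computing it costs time, so new data formats need to be explored. This paper therefore uses RGB frames tiled in temporal order as the data format and extracts spatiotemporal information with 2D convolution.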

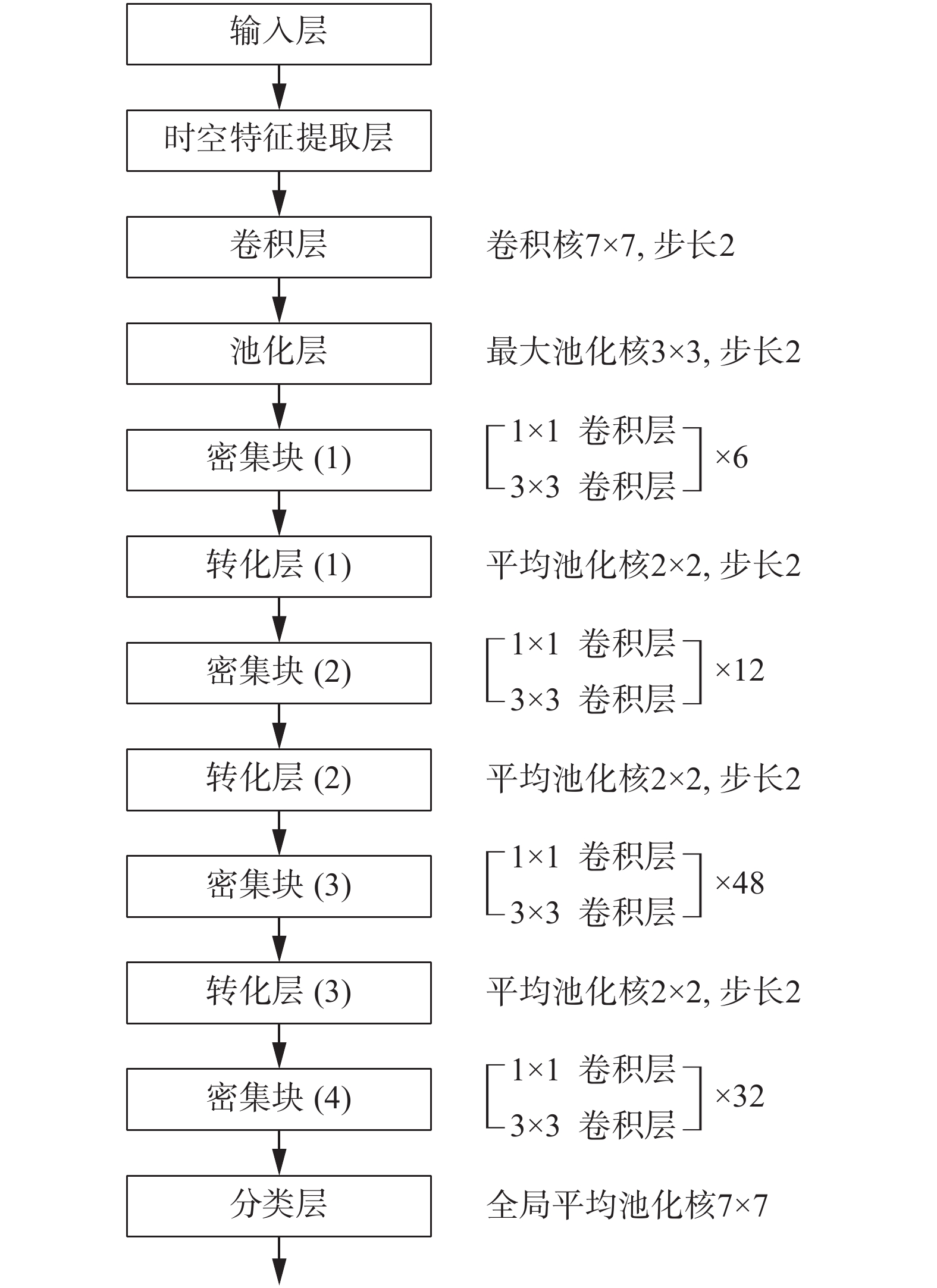

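2 2D spatiotemporal convolution design and organization of spatiotemporal features

This section analyzes the feasibility of using 2D convolution for spatiotemporal feature extraction, designs an input data format suited to 2D convolution, analyzes how DenseNet promotes spatiotemporal feature extraction, and presents the final design, shown in Fig. 2.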

Fig. 2 Structure of 2DSDCN

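A convolutional neural network (CNN) organizes and extracts features mainly through two operations: convolution and pooling. Convolution uses a convolution kernel to gather the information in a lower-layer receptive field into the corresponding pixel of the higher layer. A higher-layer pixel

$$ d=\frac{2\times w}{k} \tag{1} $$

If, during convolution, at suitable positions one uses

$$ d=\frac{2\times w}{k\times {f}^{n}} \tag{2} $$

It can be seen that pooling considerably speeds up the building of relations between low-level pixels, so that the model can obtain a representation of the input image in as few layers as possible.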
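The effect of pooling in Eqs. (1) and (2) can be checked numerically. The sketch below reads w as the input width, k as the kernel size, f as the pooling factor, n as the number of pooling operations, and d as the resulting depth; these readings are assumptions, since the symbol definitions are not available here. The function name `depth_to_span` is hypothetical.

```python
def depth_to_span(width, kernel, pool_factor=1, num_pools=0):
    """Evaluate d = 2w / (k * f**n); with num_pools = 0 this is Eq. (1)."""
    return 2 * width / (kernel * pool_factor ** num_pools)


# Hypothetical input width of 224 pixels.
d_no_pool = depth_to_span(224, kernel=3)                                # Eq. (1)
d_with_pool = depth_to_span(224, kernel=3, pool_factor=2, num_pools=2)  # Eq. (2)
```

For any f > 1, each pooling operation divides d by f, which matches the observation that pooling accelerates the linking of distant pixels.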

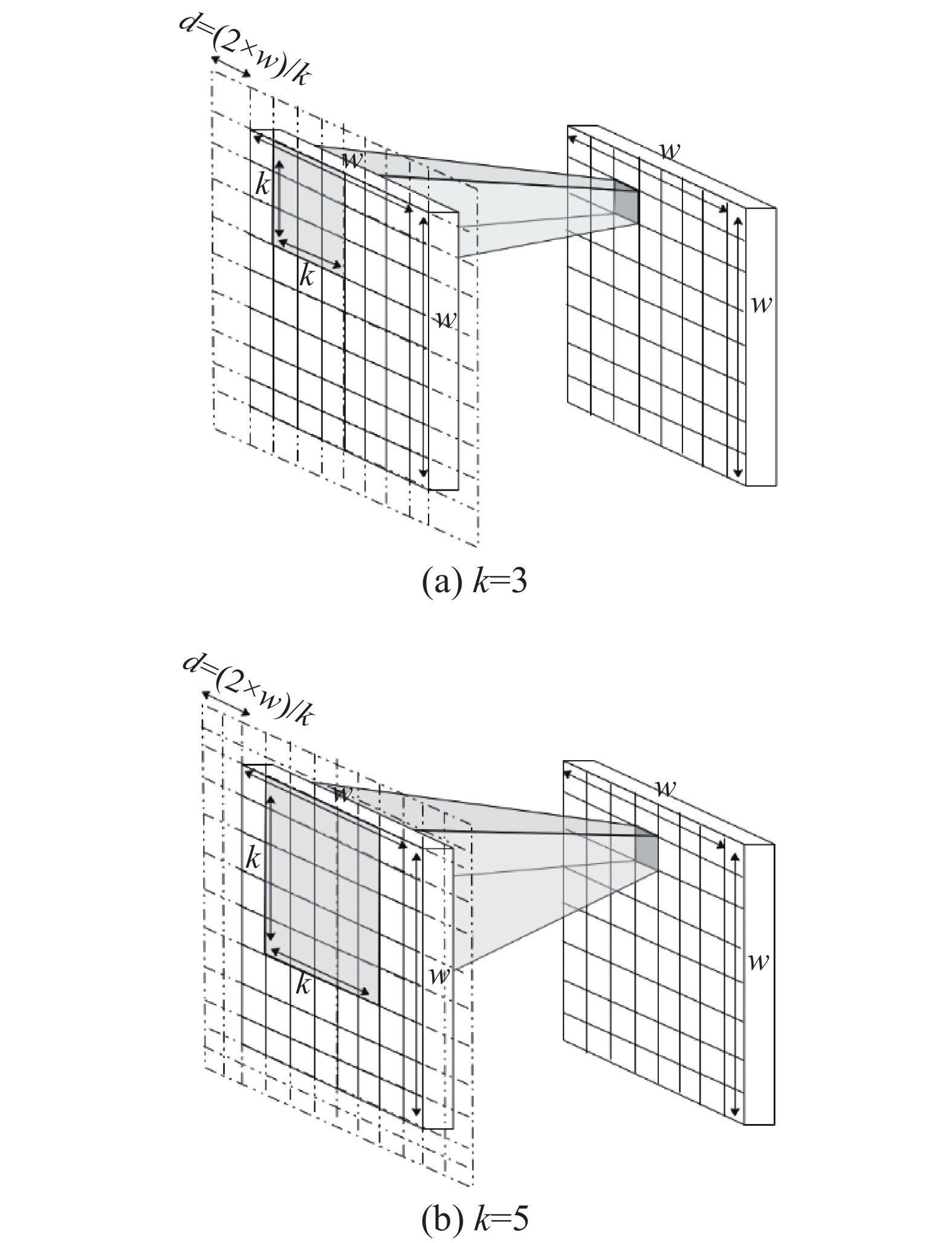

For a convolution kernel of size

$$ A_{n,i,j} = R\begin{bmatrix} A_{n-1,\,i-\frac{k-1}{2},\,j-\frac{k-1}{2}} & \cdots & A_{n-1,\,i-\frac{k-1}{2},\,j+\frac{k-1}{2}} \\ \vdots & & \vdots \\ A_{n-1,\,i+\frac{k-1}{2},\,j-\frac{k-1}{2}} & \cdots & A_{n-1,\,i+\frac{k-1}{2},\,j+\frac{k-1}{2}} \end{bmatrix} \tag{3} $$

With further convolution,

$$ {r}_{n}=\left({r}_{n-1}+k-1\right)\times {f}_{n-1} \tag{4} $$

where:

Fig. 3 Comparison of different convolution kernels
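The recurrence in Eq. (4) can be evaluated layer by layer. The sketch below applies the formula literally and assumes an initial value r_0 = 1 and that f_{n-1} is the pooling factor applied at that step (1 when there is no pooling); both are assumptions, because the symbol definitions are not available here. The function name `receptive_sizes` is hypothetical.

```python
def receptive_sizes(kernel, pool_factors, r0=1):
    """Iterate r_n = (r_{n-1} + k - 1) * f_{n-1} and return [r_1, ..., r_N].

    pool_factors[n - 1] plays the role of f_{n-1} (0-based list index).
    """
    sizes = []
    r = r0
    for f in pool_factors:
        r = (r + kernel - 1) * f
        sizes.append(r)
    return sizes


# Three layers, pooling by 2 only at the last step (hypothetical schedule).
small_kernel = receptive_sizes(kernel=3, pool_factors=[1, 1, 2])
large_kernel = receptive_sizes(kernel=7, pool_factors=[1, 1, 2])
```

Under these assumptions a 7 x 7 kernel covers a much larger range than a 3 x 3 kernel at the same depth, which is the motivation for large kernels at frame boundaries.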

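For a single frame, 2D convolution can extract rich spatial features, which are represented by the relations between each pixel and the other pixels of the frame. This paper arranges multiple temporally related frames along the spatial dimensions, so that 2D convolution builds relations both between pixels of the same frame and between pixels of different frames, and thereby extracts spatial (within-frame) and temporal (across-frame) relations. On this basis, this paper proposes a method for extracting video spatiotemporal information based on RGB images and 2D convolution.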

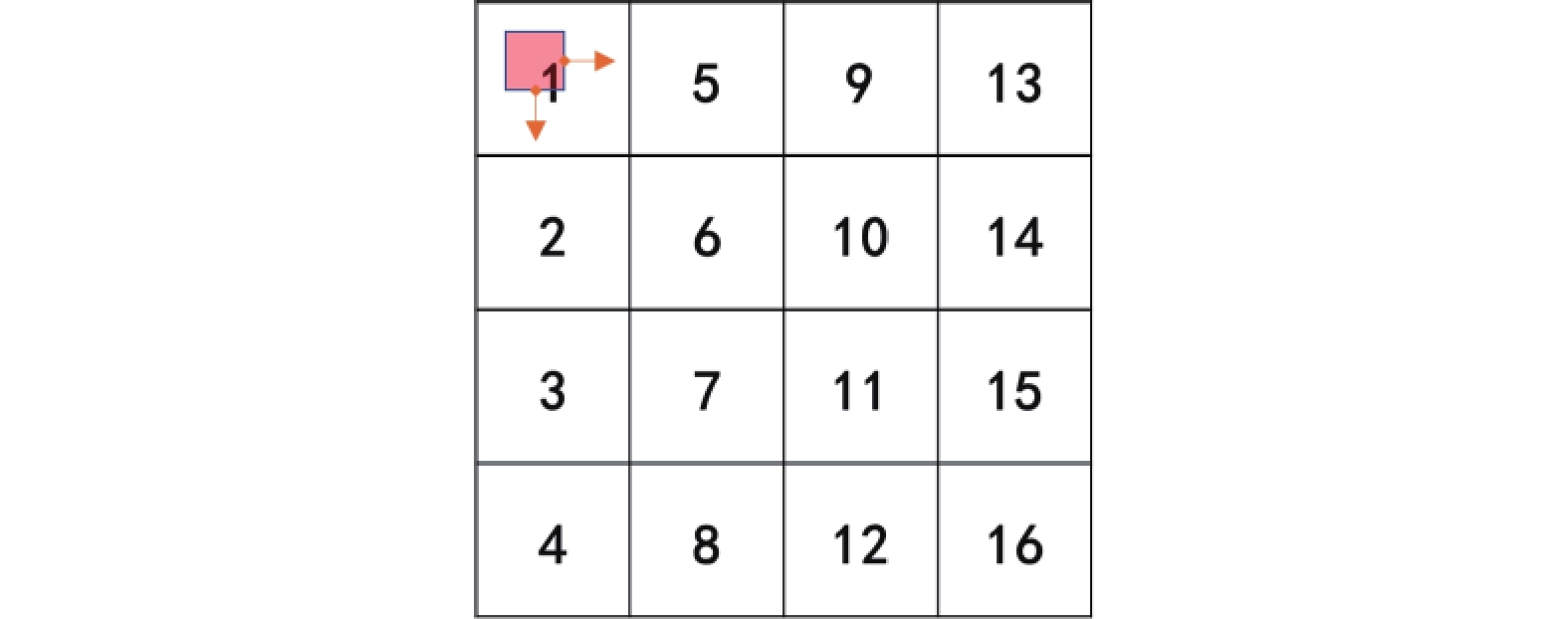

2.2 Frame selection and mosaicking

From a video clip, this paper extracts 16 frames of

Fig. 4 Image mosaicking
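The mosaicking step can be sketched with NumPy as below. The sketch assumes the 16 frames are laid out row by row in temporal order on a 4 x 4 grid; the grid shape, the ordering, and the function name `tile_frames` are assumptions for illustration.

```python
import numpy as np


def tile_frames(frames, grid=(4, 4)):
    """Tile N = rows * cols frames of shape (H, W, C) into one (rows*H, cols*W, C) image."""
    frames = np.asarray(frames)
    rows, cols = grid
    n, h, w, c = frames.shape
    if n != rows * cols:
        raise ValueError("number of frames must equal rows * cols")
    # (rows, cols, H, W, C) -> (rows, H, cols, W, C) -> (rows*H, cols*W, C)
    tiled = frames.reshape(rows, cols, h, w, c).transpose(0, 2, 1, 3, 4)
    return tiled.reshape(rows * h, cols * w, c)


# Hypothetical clip: 16 constant frames of size 2 x 3, frame i filled with value i.
demo_frames = np.stack([np.full((2, 3, 3), i, dtype=np.uint8) for i in range(16)])
mosaic = tile_frames(demo_frames)
```

Frame i ends up in grid row i // 4 and grid column i % 4, so temporally adjacent frames are spatial neighbours within a row.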

Fig. 5 Convolution diagram

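Simple mosaicking quickly extracts relations between adjacent frames, but when building relations between spatially adjacent pixels in different frames, 2D convolution lags behind 3D convolution: as shown in Fig. 6, only when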

Fig. 6 Disadvantages of simple mosaicking

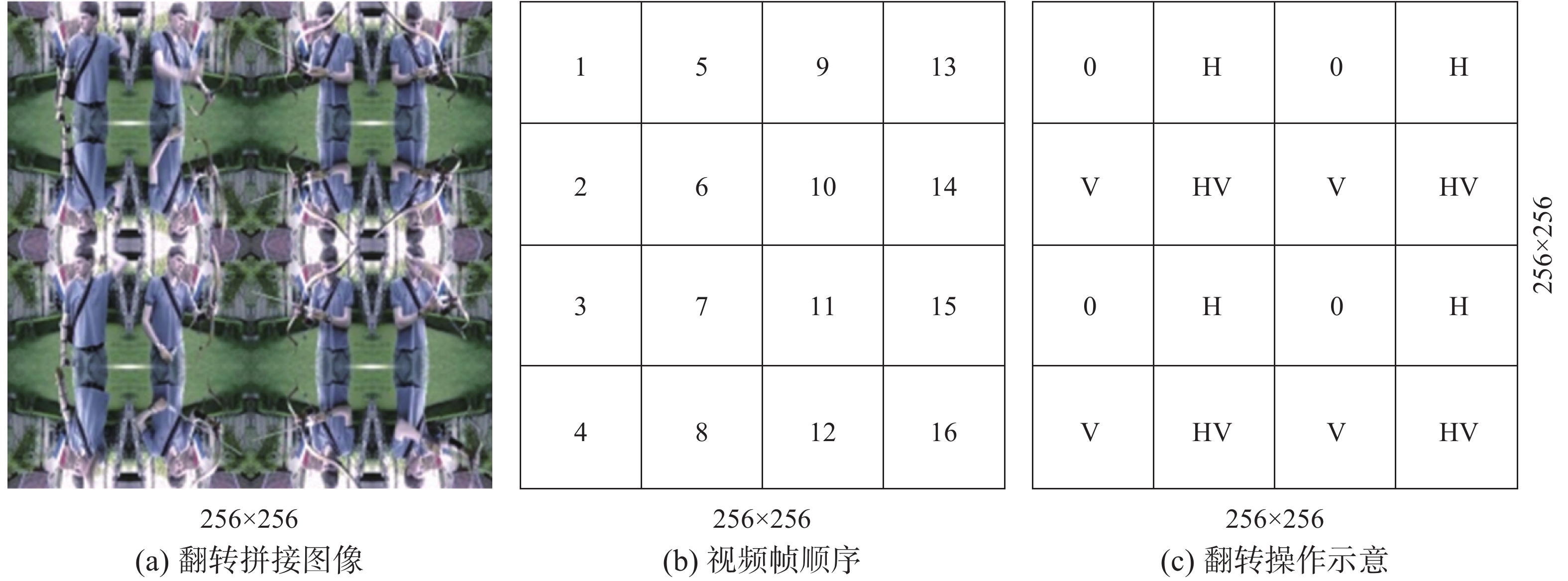

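To speed up the building of relations between spatially adjacent pixels in different frames, this paper flips the 16 frames as shown in Fig. 7, where H denotes a horizontal flip and V denotes a vertical flip.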

Fig. 7 Image reversal design
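A flip-based mosaic can be sketched as follows. The exact H/V pattern is the one defined in Fig. 7; the sketch below assumes one plausible mirror layout, in which tiles in odd grid columns are flipped horizontally (H) and tiles in odd grid rows are flipped vertically (V), so that neighbouring tiles mirror each other across their shared border. The pattern, the 4 x 4 grid, and the function name are assumptions.

```python
import numpy as np


def tile_frames_with_flips(frames, grid=(4, 4)):
    """Tile frames on a grid, mirroring tiles so shared borders hold matching pixels."""
    frames = np.asarray(frames)
    rows, cols = grid
    n, h, w, c = frames.shape
    if n != rows * cols:
        raise ValueError("number of frames must equal rows * cols")
    mosaic = np.empty((rows * h, cols * w, c), dtype=frames.dtype)
    for idx in range(n):
        r, col = divmod(idx, cols)
        tile = frames[idx]
        if col % 2 == 1:
            tile = tile[:, ::-1]   # H: horizontal flip (assumed pattern)
        if r % 2 == 1:
            tile = tile[::-1, :]   # V: vertical flip (assumed pattern)
        mosaic[r * h:(r + 1) * h, col * w:(col + 1) * w] = tile
    return mosaic


# Hypothetical random clip: 16 frames of size 4 x 5 with 3 channels.
rng = np.random.default_rng(0)
demo_clip = rng.integers(0, 256, size=(16, 4, 5, 3), dtype=np.uint8)
flipped_mosaic = tile_frames_with_flips(demo_clip)
```

With this layout, the last column of frame 0 sits directly next to the last column of frame 1, so a kernel centred on the border sees the same spatial position in both frames during the first convolution.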

When the convolution kernel lies at the junction of several frames, the very first convolution can already establish a range

Fig. 8 Pixel point coverage

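This operation speeds up the extraction of relations between corresponding pixels of some adjacent frames, so that spatiotemporal relations between adjacent frames are established at lower layers. Compared with the unflipped layout, it extracts spatiotemporal information better at the same depth.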

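2.4 Choice of DenseNet

DenseNet won the best paper award at CVPR 2017. Unlike earlier networks that improved width (the Inception structure) or depth (the resblock structure), it improves the feature dimension of the model: features extracted at different convolution stages are densely concatenated along the channel dimension, which preserves richer information. DenseNet densely connects each layer in a denseblock to all later layers, so the input of each layer is the feature maps of all preceding layers; the

$$ {x}_{l}={H}_{l}\left(\left\{{x}_{0},{x}_{1},\cdots, {x}_{l-1}\right\}\right) \tag{5} $$

In Eq. (5):

For the method proposed in this paper, the features extracted at different convolution stages

Combining Section 2.1, which proposes adding 4 of size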

Fig. 9 Structure of spatiotemporal convolutional layer
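The dense connectivity of Eq. (5) can be illustrated with a toy NumPy block in which every layer receives the channel-wise concatenation of all earlier feature maps. Here H_l is replaced by a random per-pixel linear map followed by ReLU, which is a stand-in chosen for brevity and not the composite function used in DenseNet; the names `dense_block` and `growth_rate` and the tensor sizes are assumptions.

```python
import numpy as np


def dense_block(x0, num_layers, growth_rate, rng):
    """Toy dense block: x_l = H_l({x_0, ..., x_{l-1}}) with channel concatenation."""
    features = [x0]
    for _ in range(num_layers):
        stacked = np.concatenate(features, axis=-1)       # {x_0, ..., x_{l-1}}
        weights = 0.1 * rng.standard_normal((stacked.shape[-1], growth_rate))
        new_map = np.maximum(stacked @ weights, 0.0)      # stand-in for H_l
        features.append(new_map)
    return np.concatenate(features, axis=-1)


rng_dense = np.random.default_rng(0)
x_in = rng_dense.standard_normal((8, 8, 16))              # H x W x C feature map
x_out = dense_block(x_in, num_layers=4, growth_rate=12, rng=rng_dense)
```

The output keeps the 16 input channels unchanged and appends 12 channels per layer, giving 16 + 4 x 12 = 64 channels. A residual block would instead add the new features to the input, leaving the channel count unchanged.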

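This paper first validates each part of the 2DSDCN design through comparative experiments, and then applies different frame selection schemes to the input video to verify the robustness of the model. The experiments use the UCF101 dataset and the Keras framework with a TensorFlow backend. Training is accelerated with two GTX 1080Ti GPUs (Pascal architecture) in single precision.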

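3.1 Validation of the flipping operation

Each video is sampled uniformly to obtain 16 frames: if a video has Fl frames in total, the sampling interval is Fl/16. The 16 frames are then mosaicked with or without flipping, giving directly mosaicked BGR images and flip-mosaicked BGR images respectively. The video dataset is shuffled with the same random seed and split into training and validation sets at a ratio of 8:2. The resulting datasets are fed directly into a DenseNet-201 network without pre-training and trained for 100 epochs. The training results are shown in Fig. 10.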

Fig. 10 Accuracy comparison between no flipping operation and flipping operation

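The model trained without flipping reaches 92.5% accuracy, while the model trained with flipping reaches 93.4%, an improvement of about 1%. This indicates that speeding up the building of relations between corresponding pixels of adjacent frames helps the model learn spatiotemporal information.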
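The sampling and splitting procedure of Section 3.1 can be sketched as follows: 16 frame indices are taken at an interval of Fl/16, and the video list is shuffled with a fixed seed before an 8:2 split. Rounding the fractional positions down, the seed value, and the function names are assumptions.

```python
import random


def uniform_sample_indices(total_frames, num_samples=16):
    """Indices of num_samples frames taken at an interval of total_frames / num_samples."""
    if total_frames < num_samples:
        raise ValueError("video is shorter than the number of samples")
    step = total_frames / num_samples
    # Flooring the fractional positions is an assumption.
    return [int(i * step) for i in range(num_samples)]


def split_train_val(items, train_ratio=0.8, seed=0):
    """Shuffle with a fixed seed, then split into training and validation lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]


indices_160 = uniform_sample_indices(160)        # interval of 10 frames
train_ids, val_ids = split_train_val(range(10))  # 10 hypothetical video ids
```

Using the same seed for both the flipped and the unflipped datasets keeps the two experiments on identical training and validation videos.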

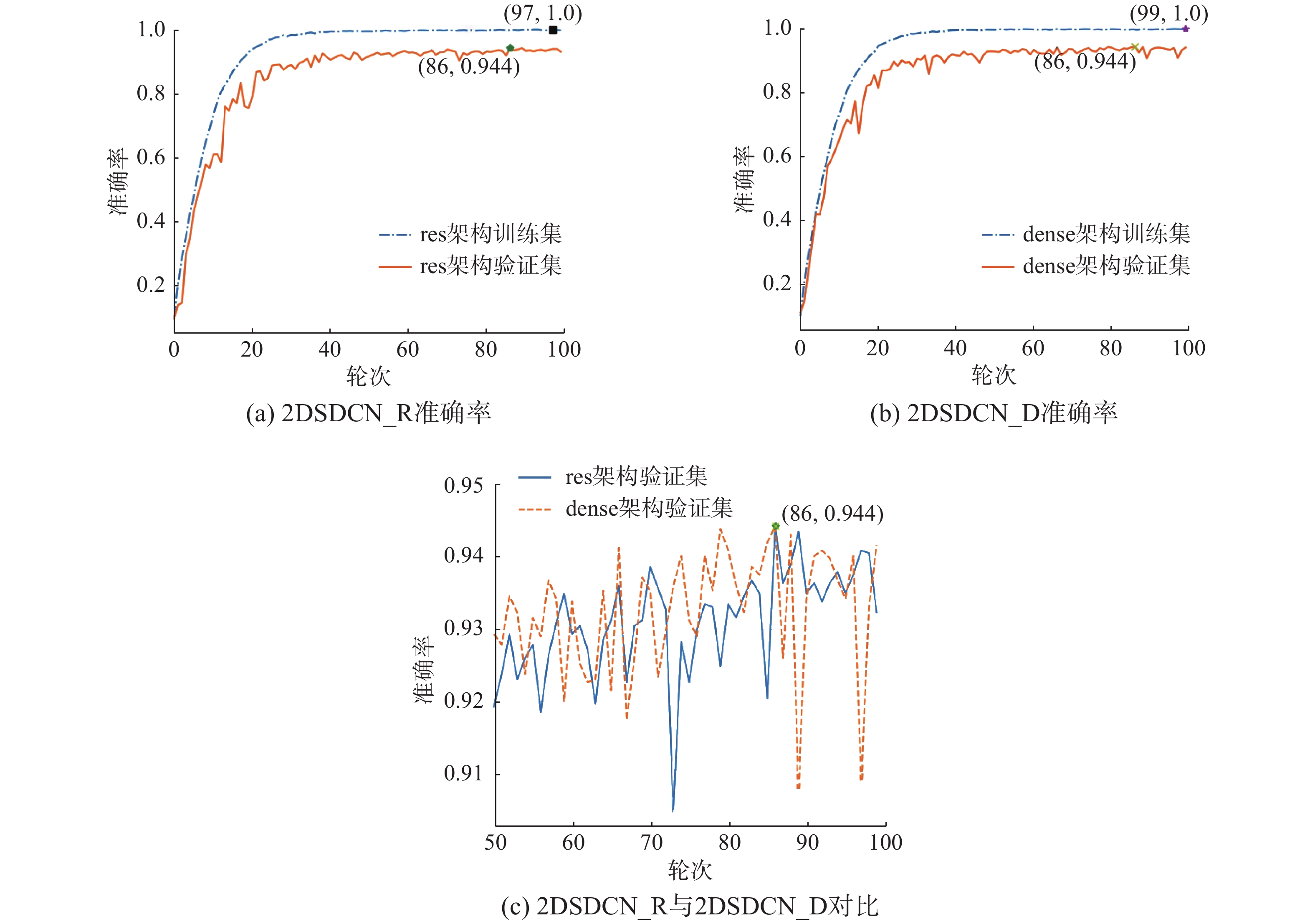

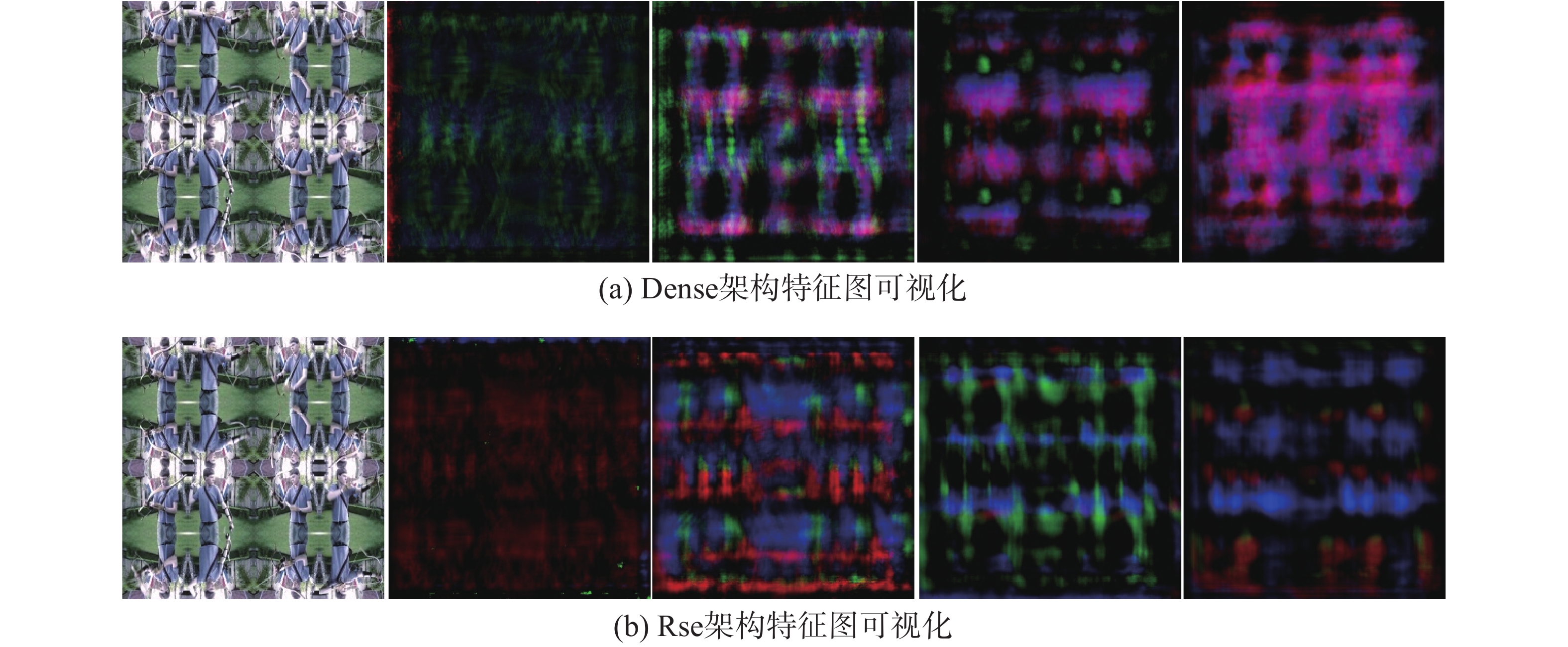

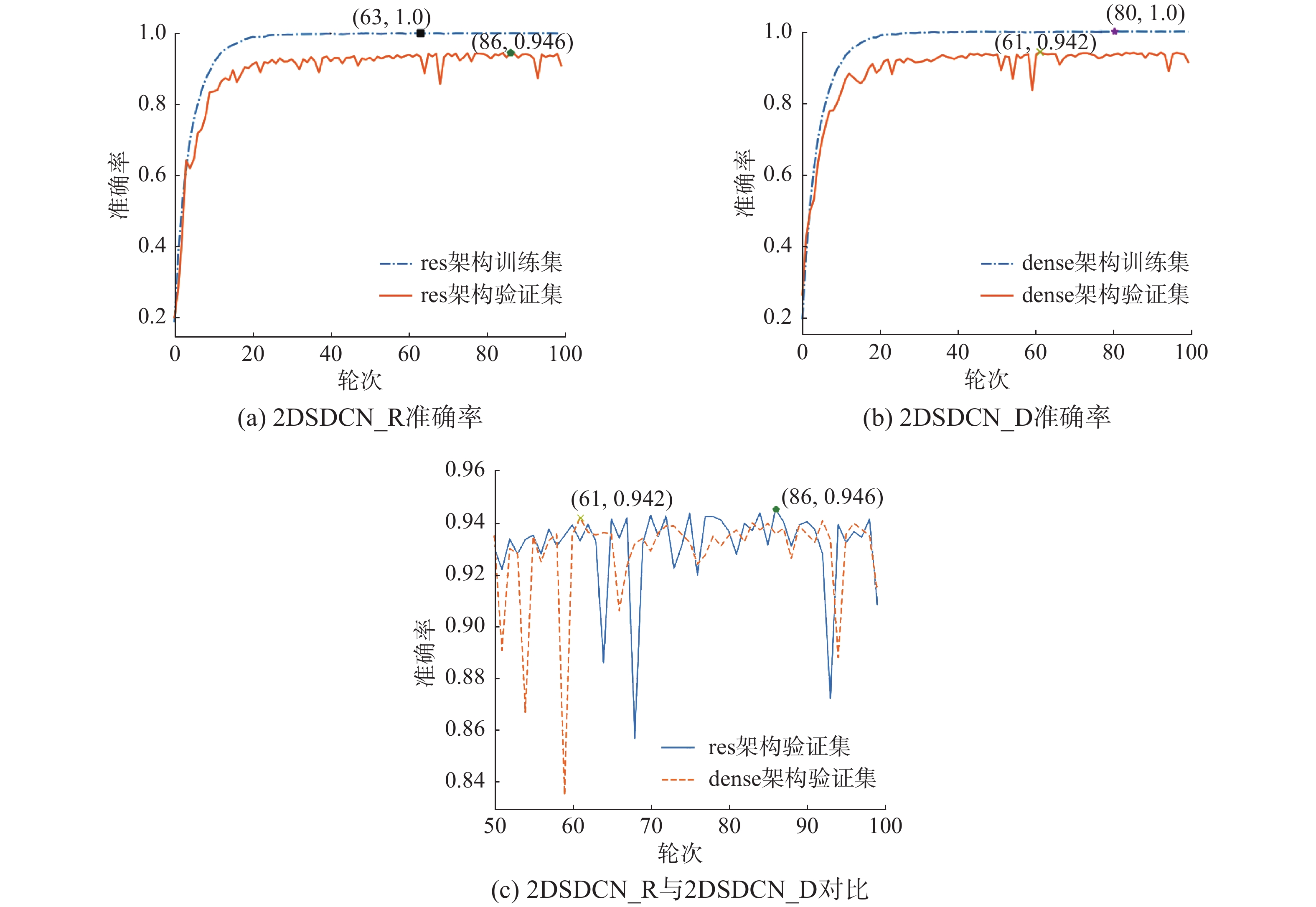

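3.2 Improvement from the spatiotemporal convolutional layer and feature visualization

Based on resblock and denseblock, this paper designs and implements two different networks as the spatiotemporal convolutional layer, and trains each on the flip-mosaicked BGR dataset for 100 epochs. The results are shown in Fig. 11. Aided by the large-kernel convolutions, the two perform similarly: both reach 94.4% accuracy, about 1% higher than the original DenseNet-201. To show the effect of the spatiotemporal convolutional layer directly, the output of each layer is visualized in Fig. 12.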

Fig. 11 Accuracy comparison between 2DSDCN_R and 2DSDCN_D

Fig. 12 Feature visualization of denseblock and resblock

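In addition to the previous frame selection scheme, this paper also selects 16 frames from the same video clip by taking one frame every 5 frames, splits the videos randomly, and trains the two networks designed in Section 3.2. This scheme is chosen because in practice video is usually acquired continuously; action information obtained this way is evenly spaced in time, which matches the sampling pattern of real-time recognition. The results are shown in Fig. 13: accuracy reaches 94.2% with the denseblock design and 94.6% with the resblock design.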

Fig. 13 Accuracy comparison between 2DSDCN_R and 2DSDCN_D with sampling every 5 frames
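The fixed-interval scheme (one frame every 5 frames, 16 frames per sample) can be sketched as below. How successive clips are cut from a long video is not specified in the text, so the non-overlapping windows used here, like the function names, are assumptions.

```python
def strided_clip_indices(start, stride=5, num_samples=16):
    """Frame indices of one clip: num_samples frames, one every `stride` frames."""
    return [start + i * stride for i in range(num_samples)]


def clip_starts(total_frames, stride=5, num_samples=16):
    """Start indices of non-overlapping clips that fit inside the video (assumed policy)."""
    span = (num_samples - 1) * stride + 1   # frames covered by one clip
    return list(range(0, total_frames - span + 1, span))


first_clip = strided_clip_indices(0)        # 0, 5, ..., 75
starts_200 = clip_starts(200)               # clips that fit in a 200-frame video
```

One clip spans 76 frames, so a 200-frame video yields two complete clips under this policy, and a video shorter than 76 frames yields none.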

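Table 1 summarizes the preceding experiments. Compared with no additional operation, 2DSDCN combined with the flipped, tiled data format achieves higher accuracy and faster convergence. The two spatiotemporal feature extraction structures designed in this paper reach the same level in the experiments and keep stable accuracy under different video sampling schemes, which indicates that large-kernel spatiotemporal feature extraction on the 2D flattened spatiotemporal image effectively accelerates the building of spatiotemporal relations.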

Table 1 Experiment results

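Building on a review of previous action recognition work, especially convolution-based algorithms, this paper proposes 2DSDCN, a new video-based action recognition algorithm using 2D convolution, together with a new way of organizing spatiotemporal data. It analyzes 2D convolution at the pixel level and introduces the dense structure for spatiotemporal information extraction.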

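Starting from the good support that basic CNN classification networks provide for BGR image input, and by organizing the input data appropriately and exploiting the properties of CNNs, this paper designs two networks with stable performance, 2DSDCN_R and 2DSDCN_D, which reach a best accuracy of 94.6% on the UCF101 dataset.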

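There is a technical gap between image recognition and video action recognition. By organizing data appropriately, this paper attempts to unify the two, which to some extent promotes their joint development. Further research is still needed to support directions such as video segmentation and video semantic understanding.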

[1] WANG H, SCHMID C. Action recognition with improved trajectories[C]//2013 IEEE International Conference on Computer Vision. Sydney, AUS, 2013: 3551-3558.

[2] KRIZHEVSKY A, SUTSKEVER I, HINTON G. ImageNet classification with deep convolutional neural networks[C]//Proceedings of the 25th International Conference on Neural Information Processing Systems. Lake Tahoe, USA, 2012: 1097-1105.

[3] RUSSAKOVSKY O, DENG J, SU H, et al. ImageNet large scale visual recognition challenge[J]. International journal of computer vision, 2014, 115(3): 211-252.

[4] SZEGEDY C, LIU W, JIA Y, et al. Going deeper with convolutions[C]//2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, USA, 2015: 1-9.

[5] HE K, ZHANG X, REN S, et al. Deep residual learning for image recognition[C]//2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA, 2016: 770-778.

[6] HUANG G, LIU Z, VAN DER MAATEN L, et al. Densely connected convolutional networks[C]//2017 IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, USA, 2017: 2261-2269.

[7] SOOMRO K, ZAMIR A R, SHAH M. UCF101: A dataset of 101 human actions classes from videos in the wild[J]. arXiv: 1212.0402, 2012.

[8] LECUN Y, BOTTOU L, BENGIO Y, et al. Gradient-based learning applied to document recognition[J]. Proceedings of the IEEE, 1998, 86(11): 2278-2324. DOI:10.1109/5.726791

[9] CHEN P H, LIN C J, SCHÖLKOPF B. A tutorial on v-support vector machines[J]. Applied stochastic models in business and industry, 2005, 21(2): 111-136. DOI:10.1002/asmb.537

[10] DALAL N, TRIGGS B. Histograms of oriented gradients for human detection[C]//2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. San Diego, USA, 2005, 1: 886-893.

[11] CHAUDHRY R, RAVICHANDRAN A, HAGER G, et al. Histograms of oriented optical flow and binet-cauchy kernels on nonlinear dynamical systems for the recognition of human actions[C]//2009 IEEE Conference on Computer Vision and Pattern Recognition. Miami, USA, 2009: 1932-1939.

[12] DALAL N, TRIGGS B, SCHMID C. Human detection using oriented histograms of flow and appearance[C]//European Conference on Computer Vision. Graz, Austria, 2006: 428-441.

[13] WANG H, KLÄSER A, SCHMID C, et al. Action recognition by dense trajectories[C]//Proceedings of the IEEE International Conference on Computer Vision. Colorado Springs, USA, 2011: 3169-3176.

[14] CARREIRA J, ZISSERMAN A. Quo vadis, action recognition? a new model and the kinetics dataset[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Venice, Italy, 2017: 6299-6308.

[15] FEICHTENHOFER C, PINZ A, ZISSERMAN A. Convolutional two-stream network fusion for video action recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA, 2016: 1933-1941.

[16] NG Y H, HAUSKNECHT M, VIJAYANARASIMHAN S, et al. Beyond short snippets: Deep networks for video classification[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Boston, USA, 2015: 4694-4702.

[17] WANG L, XIONG Y, WANG Z, et al. Temporal segment networks: Towards good practices for deep action recognition[C]//European Conference on Computer Vision. Amsterdam, The Netherlands, 2016: 20-36.

[18] LAN Z, ZHU Y, HAUPTMANN A G, et al. Deep local video feature for action recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops. Venice, Italy, 2017: 1-7.

[19] ZHANG Peihao. Research on action recognition based on pose estimation[D]. Nanjing: Nanjing University of Aeronautics and Astronautics, 2015.

[20] MA Miao. Study on human pose estimation, tracking and human action recognition in videos[D]. Shandong: Shandong University, 2017.

[21] TRAN D, BOURDEV L, FERGUS R, et al. Learning spatiotemporal features with 3D convolutional networks[C]//Proceedings of the IEEE International Conference on Computer Vision. Santiago, Chile, 2015: 4489-4497.

[22] HARA K, KATAOKA H, SATOH Y. Learning spatio-temporal features with 3D residual networks for action recognition[C]//Proceedings of the IEEE International Conference on Computer Vision Workshops. Venice, Italy, 2017: 3154-3160.

[23] QIU Z, YAO T, MEI T. Learning spatio-temporal representation with pseudo-3d residual networks[C]//Proceedings of the IEEE International Conference on Computer Vision. Venice, Italy, 2017: 5533-5541.

[24] DIBA A, FAYYAZ M, SHARMA V, et al. Temporal 3d convnets: new architecture and transfer learning for video classification[J]. arXiv preprint arXiv: 1711.08200, 2017.

[25] SUN L, JIA K, YEUNG D Y, et al. Human action recognition using factorized spatio-temporal convolutional networks[C]//Proceedings of the IEEE International Conference on Computer Vision. Santiago, Chile, 2015: 4597-4605.

[26] TRAN D, WANG H, TORRESANI L, et al. A closer look at spatiotemporal convolutions for action recognition[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Salt Lake City, USA, 2018: 6450-6459.

[27] SIMONYAN K, ZISSERMAN A. Two-stream convolutional networks for action recognition in videos[C]//Advances in Neural Information Processing Systems. Montreal, Canada, 2014: 568-576.

2020, Vol. 15
