2. 铜陵学院 数学与计算机学院,安徽 铜陵 244061

2. School of Mathematics and Computer Science, Tongling University, Tongling 244061, China

研究人员常用网络(图)描述不同学科领域中实体间的交互关系,例如生物学领域中的蛋白质互联网络,社会学领域中的社交网络,语言学领域中的词共现网络。结合不同的分析任务对网络进行研究、探索,进而挖掘出隐藏在网络数据中的信息,使之服务于人类。不过,真实场景中的网络数据通常具有稀疏、高维等特质,直接利用这样的数据进行分析通常有较高的计算复杂度,使得许多先进的研究成果无法直接应用到现实的网络环境中。

网络表示学习[1](network representation learning,NRL)是解决上述问题的有效方法,旨在保留结构信息的前提下,为网络中的每个节点学习一个低维、稠密的向量表示。如此,网络被映射到一个向量空间中,并可通过许多经典的基于向量的机器学习技术处理许多网络分析任务,如节点分类[2]、链接预测[3]、节点聚类[4]、可视化[5]、推荐[6]等。

网络表示学习不仅要解决网络数据的高维和稀疏的问题,还需使学习到的节点特征表示能够保留丰富的网络结构信息。网络中的节点结构大致分为三类:1)微观结构,即局部相似性,例如:一阶相似性(相邻)、二阶相似性(有共同的邻居)。2)中观结构,例如:角色相似性(承担相同的功能)、社团相似性(属于同一社团)。3)宏观结构,即全局网络特性,例如:无标度(度分布符合幂律分布)特性、小世界(高聚类系数)特性。

为获取有效的节点表示,结合最先进的机器学习、深度学习等技术,已提出各种各样的网络表示学习方法。DeepWalk[7]和Node2Vec[8]通过随机游走获取节点的局部相似性。GraRep[9]和Walklets[10]通过将邻接矩阵提升到

最近,图卷积网络[17](graph convolutional networks,GCN)越来越受到关注,已经显示GCN对网络分析任务性能的改进有着显著的效果。GCN通过卷积层聚合网络中每个节点及其邻居节点的特征,输出聚合结果的加权均值用于该节点新的特征表示。通过卷积层的不断叠加,节点能够整合

为克服这种限制,受商空间[20]中的分层递阶[21]思想的启发,提出一种基于多粒度结构的网络表示学习方法(network representation learning based on multi-granularity structure,Multi-GS)。Multi-GS首先基于模块度[22]和粒计算[23]的思想,利用网络自身的层次结构,即社团结构,通过使用局部模块度增量迭代地移动和合并网络中的节点,构造网络的粗粒度结构。利用粗粒度的结构生成更粗粒度的结构,反复多次,最终获得分层递阶的多粒度网络结构。在多粒度结构中,不同粗细的粒能够反映节点在不同粒度空间上的社团内邻近关系。然后,Multi-GS使用无监督的GCN模型分别学习不同粒度空间中粒的特征表示向量,学习到的特征能够反映不同粒度下粒间的邻近关系,不同粗细粒度中的粒间关系能够表示不同阶的节点关系,粒度越粗阶数越高。最后,Multi-GS将不同粒度空间中学习到的粒特征表示按照由粗到细的顺序进行逐层细化拼接,输出最细粒度空间中拼接后的粒特征表示作为初始网络的节点特征表示。实验结果表明,结合多粒度结构能使GCN有效地捕获网络的高阶特征,学习的节点表示可提升诸如节点分类任务的性能。

1 问题定义定义1 设网络

定义 2 网络的模块度[22]

| $Q = \frac{1}{{2m}}\sum\limits_{c \in C} {\left( {\frac{{l_c}}{{2m}} - {{\left( {\frac{{D_c}}{{2m}}} \right)}^2}} \right)} $ | (1) |

式中:

基于式(1),可以推导出两个社团合并后的局部模块度增量

| $\begin{gathered} \Delta Q_{pq} = Q_k - Q_p - Q_q = \\ \displaystyle \frac{{{{\left( {l_p + l_q} \right)}^2}}}{{2m}} - {\left( {\frac{{D_p + D_q}}{{2m}}} \right)^2} - \frac{{l_p}}{{2m}} + {\left( {\frac{{D_p}}{{2m}}} \right)^2} - \\ \displaystyle \frac{{l_q}}{{2m}} + {\left( {\frac{{D_q}}{{2m}}} \right)^2} = \frac{{l_p}}{{2m}} + \frac{{l_{pq}}}{m} + \frac{{l_q}}{{2m}} - {\left( {\frac{{D_p}}{{2m}}} \right)^2} - \\ \displaystyle \frac{{D_pD_q}}{{2{m^2}}} - {\left( {\frac{{D_q}}{{2m}}} \right)^2} - \frac{{l_p}}{{2m}} + {\left( {\frac{{D_p}}{{2m}}} \right)^2} - \frac{{l_q}}{{2m}} + {\left( {\frac{{D_q}}{{2m}}} \right)^2} = \\ \displaystyle \frac{{l_{pq}}}{m} - \frac{{D_pD_q}}{{2{m^2}}} \\ \end{gathered} $ | (2) |

其中,

定义3 给定初始网络

将初始网络结构视为由基本粒构成的最细粒度的粒层结构。粒化分层是通过聚合和粒化操作,迭代地形成粒度由细到粗的多粒层结构。具体地说,通过不断聚合不同粒层中的粒和边,

|

Download:

|

| 图 1 网络拓扑的粒化分层示例 Fig. 1 An example of hierarchical view of network topology | |

定义4 给定网络

Multi-GS首先基于模块度构建多粒度的网络分层结构;接着使用GCN模型依次学习不同粒层中所有粒的特征向量;然后自底向上逐层对粒的特征向量进行映射、拼接;最后输出最终的结果作为初始网络中节点的特征表示,如图2所示。

|

Download:

|

| 图 2 Multi-GS方法的框架 Fig. 2 Framework of Multi-GS approach | |

本小节介绍Multi-GS的粒化分层操作。主要包含两个步骤:1)粒的移动与合并:移动和合并的决定取决于局部模块度增量计算结果。2)粒化:生成更粗粒度的粒层结构。相关细节见算法1。

算法1 网络粒化分层(Graphgranular)

输入 网络

输出 由细到粗的粒层

1)level = 0;

2)granuleGraph = copy_Graph(G);/*复制图结构*/

3)Qnew=modularity();/*按式(1)计算模块度*/

4)repeat

5)

6)

7)repeat

8)Qcur= Qnew;

9)for each vi in granuleGraph do

10)将粒

11)for

12)按式(2)计算粒

13)end for

14)将粒

15)end for

16)Qnew=modularity();

17)untill (Qcur = Qnew)

18)

19)

20)

21)

23)untill (level>k)

24)return

算法1的主要步骤如下:

粒的移动与合并:首先将当前粒层中的每个粒放入不同的集合中(第6行)。其中,子集合的数量等于当前粒层中粒的数量。遍历所有的粒(第9行),将当前粒移出自身所在的集合(第10行),依次移入一个相邻粒的集合中,并依据式(2)计算移入后的局部模块度增量

在第2个步骤中,新粒间的边权由两个对应集合中的粒间的边权和确定。同一集合中粒间的内部边视为新粒的自边。

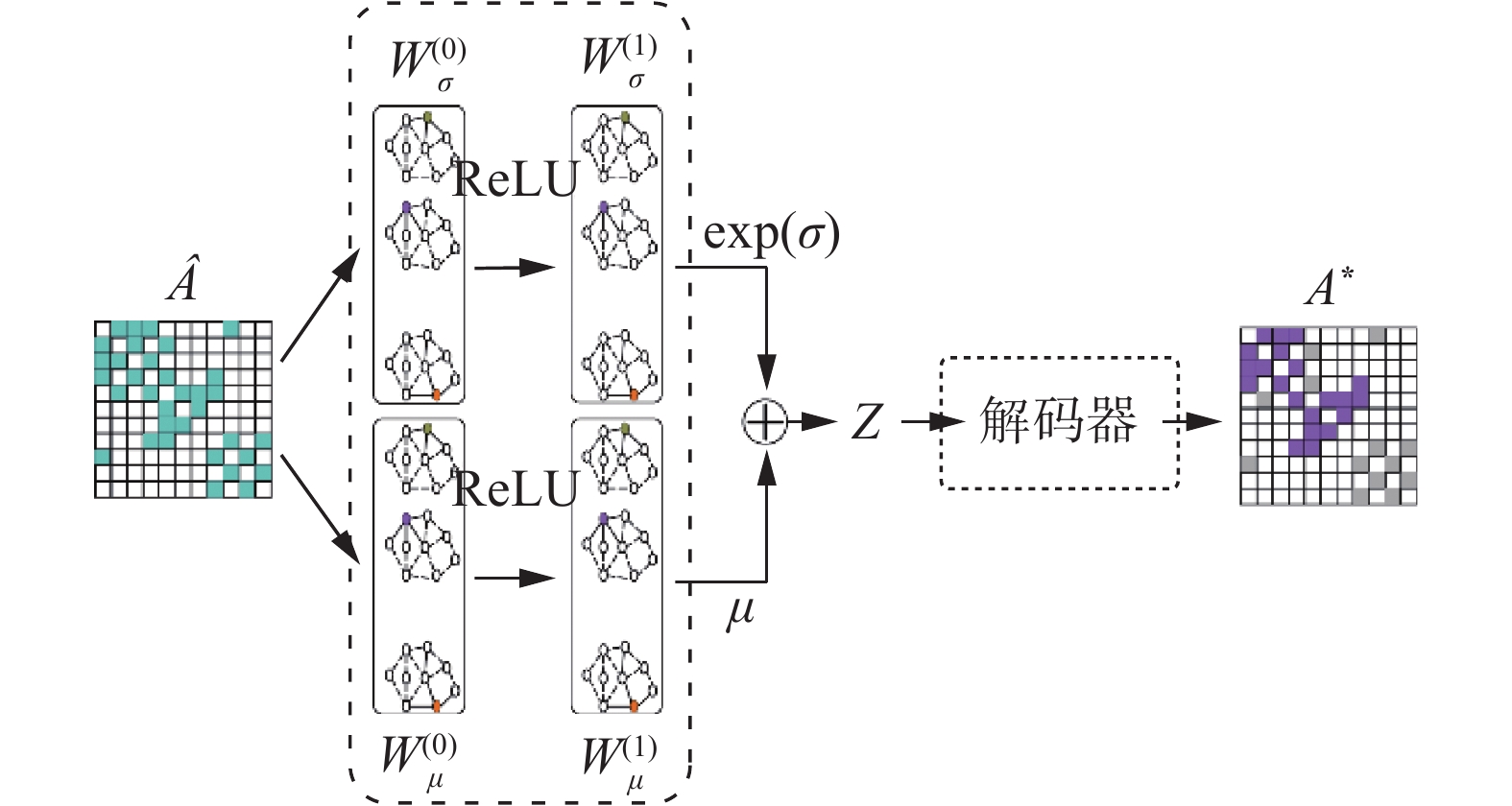

2.2 基于图卷积网络的粒特征表示学习本小节介绍Multi-GS的GCN模型结构,包括两个部分,编码器和解码器,如图3所示。GCN模型借鉴VGAE[24]的设计,Multi-GS不使用节点的辅助信息,仅利用网络的拓扑结构学习节点的特征表示,因此,最终的GCN模型在设计上与VGAE有所区别。

|

Download:

|

| 图 3 图卷积神经网络模型结构 Fig. 3 The structure of GCN model | |

GCN模型的输入是不同分层中的粒关系矩阵

| $\widehat {{A}} = {{{D}}^{ - \frac{1}{2}}}\widetilde {{A}}{{{D}}^{ - \frac{1}{2}}}$ | (3) |

其中,

| $\mu = f\left( {\widehat {{A}}f\left( {\widehat {{A}}{{W}}_\mu ^{\left( 0 \right)}} \right){{W}}_\mu ^{\left( 1 \right)}} \right)$ | (4) |

| $\sigma = f\left( {\widehat {{A}}f\left( {\widehat {{A}}{{W}}_\sigma ^{\left( 0 \right)}} \right){{W}}_\sigma ^{\left( 1 \right)}} \right)$ | (5) |

| ${{Z}} \sim {\cal{N}}\left( {\mu ,\exp (\sigma )} \right)$ | (6) |

| ${{{A}}^ * } = {\rm{sigmoid}}\left( {{{Z}} * {{{Z}}^{\rm{T}}}} \right)$ | (7) |

其中,

基于变分推断的编码器,其变分下界的优化目标函数如下:

| ${{\cal{L}}_{\rm latent}} = - \left( {\frac{{0.5}}{N}} \right) \cdot {\rm{mean}}\left( {\sum\limits_{i = 1}^d {\left( {1 + 2 \log \left( \sigma \right) - {\mu ^2} - {\sigma ^2}} \right)} } \right)$ | (8) |

解码器使用式(7)重构关系矩阵

| $\begin{gathered} {\rm{Loss}} = {{A}} \cdot \left[ - \log \left( {{\rm{sigmoid}}\left( {{{{A}}^ * }} \right)} \right) * {{{W}}^{(1)}}\right] + \\ \left( {1 - {{A}}} \right) \cdot \left[ - \log \left( {1 - {\rm{sigmoid}}\left( {{{{A}}^ * }} \right)} \right) \right] \\ \end{gathered} $ | (9) |

| ${{{W}}^{(1)}} = {{\left( {N \cdot N - \sum\limits_{i = 1}^N {{A_i}} } \right)} \Big/ {\sum\limits_{i = 1}^N {{A_i}} }}$ | (10) |

| ${{{W}}^{(2)}} = {{N \cdot N} \Big/ {\left( {N \cdot N - \sum\limits_{i = 1}^N {{A_i}} } \right)}}$ | (11) |

| ${{\cal{L}}_{\rm reconst}} = {{{W}}^{(2)}}{\rm{mean}}\left( {{\rm{Loss}}\left( {{{A}},{{{A}}^ * },{{{W}}^{(1)}}} \right)} \right)$ | (12) |

结合式(8)和式(12),GCN模型最终的目标函数如下:

| ${\cal{L}} = {{\cal{L}}_{\rm latent}} + {{\cal{L}}_{\rm reconst}}$ | (13) |

本小节介绍基于多粒度结构的网络表示学习方法Multi-GS,主要包括3个步骤:利用局部模块度增量

算法2 基于多粒度结构的网络表示学习(Multi-GS)

输入 网络G,粒化层数k,节点表示向量维度d,GCN参数

输出 网络表示Z。

1)

2)for m = 0 to k do

3)

4)end for

5)for n = k to 1 do

6)

7)

8)end for

9)Z←Z(0);

10)return Z

首先,Multi-GS算法的输入包括3个部分:网络

本节通过节点分类和链接预测任务,在真实数据集上与4个具有代表性的网络表示学习方法进行对比,验证Multi-GS的有效性。实验环境为:Windows10操作系统,Intel i7-4790 3.6 GHz CPU,8 GB内存。通过Python语言和TensorFlow实现Multi-GS。

3.1 实验设定1) 数据集。

实验使用5个真实数据集,包括引文网络、生物网络、词共现网络和社交网络,详细信息见表1。Cora[25]是引文网络。其中,节点代表论文,根据论文的不同主题分为7类。边代表论文间的引用关系。该网络包含2 708个节点和5 278条边。Citeseer[25]同样是引文网络。该网络包含6类的3 312种出版物。边代表不同出版物间的引用关系,共有4 660条边。PPI[26]是生物网络。该网络包含3 890个节点和38 739条边。其中,节点代表蛋白质,根据不同的生物状态分为50类,边代表蛋白质间的相互作用。WiKi[27]是维基百科数据库中单词的共现网络。该网络包含4 777个节点,92 517条边,以及40种不同的词性标签。BlogCatalog[28]是来自BlogCatalog网站的社交网络。节点代表博主,并根据博主的个人兴趣划分为39类,边代表博主间的友谊关系。该网络包含10 312个节点和333 983条边。

| 表 1 数据集信息 Tab.1 Datasets information |

2) 对比算法。

实验选择4种具有代表性的网络表示学习算法作为对比算法,包括DeepWalk、Node2Vec、GraRep、DNGR。关于这些方法的简要描述如下:

DeepWalk[7]:使用随机游走获取节点序列,通过SkipGram方法学习节点表示。

Node2Vec[8]:类似于DeepWalk,但是使用有偏向的随机游走获取节点序列。

GraRep[9]:通过构造

DNGR[11]:使用随机冲浪方法获取节点的高阶相似性,利用深度神经网络学习节点表示。

3) 参数设定。

对于Multi-GS方法中的GCN模型,使用Adam优化器更新训练中的参数,学习率设为0.05。对于DeepWalk和Node2Vec,节点游走次数设为10,窗口大小设为10,随机游走的长度设为80。Node2Vec的参数p=0.25、q=4。对于GraRep,kstep=4。对于DNGR的随机冲浪方法,迭代次数设为4,重启概率

利用节点分类任务比较Multi-GS和对比算法的性能差异。实验挑选4种不同领域数据集,包括Citeseer、PPI、WiKi和BlogCatalog。首先各自使用网络中所有节点学习节点的特征表示,对于Multi-GS,为比较不同的粒化层次对方法性能的影响,针对不同的数据集,分别设置5组实验,在每组实验中,将粒化层次从0设到4(k为0~4)。k=0表示Multi-GS不使用多粒度结构进行联合学习表示,仅利用原始网络通过GCN模型学习节点的表示。针对节点分类,使用Logistic回归分类器,随机从不同数据集中分别选择{10%,50%,90%}节点训练分类器,在其余节点上评估分类器的性能。为衡量分类性能,实验采用Micro-F1[29]和Macro-F1[29]作为评价指标。两个指标越大,分类性能越好。所有的分类实验重复10次,报告平均结果。表2~5分别展示在Citeseer、PPI、WiKi和BlogCatalog数据集上的节点分类Micro-F1和Macro-F1的均值,其中,粗体表示性能最好的结果,下划线表示对比算法中性能最优的结果。

| 表 2 Citeseer数据集上的Micro-F1和Macro-F1结果 Tab.2 Micro-F1 and Macro-F1 results on Citeseer dataset |

| 表 3 PPI数据集上的Micro-F1和Macro-F1结果 Tab.3 Micro-F1 and Macro-F1 results on PPI dataset |

| 表 4 WiKi数据集上的Micro-F1和Macro-F1结果 Tab.4 Micro-F1 and Macro-F1 results on WiKi dataset |

| 表 5 BlogCatalog数据集上的Micro-F1和Macro-F1结果 Tab.5 Micro-F1 and Macro-F1 results on BlogCatalog dataset |

实验结果显示,在对比算法中,GreRap表现出强有力的竞争力,Node2Vec也表现不俗。因无法获取节点的一阶相似性,故DNGR表现较差。针对Multi-GS,可以发现,相对于不使用联合学习(k=0)的情形,使用多粒度结构在多数情况下可提升方法的性能,说明保留节点的高阶相似性可提升节点分类任务的性能。对于相同的数据集,Multi-GS在不同的粒化层次下存在差异。具体来说,在Citeseer数据集上,随着粒化层数的增加,Multi-GS的Micro-F1和Macro-F1值逐渐增大。在PPI和WiKi数据集上,最佳的结果出现在k=3时。在BlogCatalog数据集上,当k=2时方法性能最好。依据表1中不同数据集平均度的统计结果,可以看出,Citeseer数据集的平均度是3.898 1,说明该网络是一个弱关系网络,BlogCatalog数据集的平均度高达64.775 6,是一个强关系网络。在弱关系网络中,由于不同社团间的联系较弱,使得不同粒层中粒的粒度差异较小,而对于强关系网络,由于社团内部边的密度与不同社团间的边密度差异较小,使得小社团快速合并成大社团,导致不同粒层间的粒度差异会非常大。在强关系网络中,随着粒化层数的增加,各粒层中相应粒的特征趋于雷同,若拼接过多类似的特征将导致节点自身的特征被弱化,导致Multi-GS的性能会有先提升再降低的情况。因此,针对不同的数据集,如何设置一个合理的粒化层数是Multi-GS需要考虑的问题。

3.3 链接预测链接预测任务是预测网络中给定节点间是否存在边。通过链接预测可以显示不同网络表示方法的链接预测能力。对于链接预测任务,仍然选择Citeseer、PPI、WiKi和BlogCatalog作为验证数据集,分别从不同数据集中随机移除现有链接的50%。使用剩余网络,利用不同的方法学习节点表示。另外,将被移除边中的节点对作为正样本,同时随机采样相同数量未连接的节点对作为负样本,使正样本和负样本构成平衡数据集。实验中,首先基于给定样本中的节点对,通过表示向量计算其余弦相似度得分,然后使用Logistic回归分类器进行分类,并通过曲线下面积[29](area under curve,AUC)评估标签间的一致性和样本的相似性得分。对于Multi-GS,k为0~4。表6显示链接预测任务中,不同算法在Citeseer、PPI、WiKi和BlogCatalog数据集上的AUC值,其中,粗体表示性能最好的结果,下划线表示对比算法中性能最优的结果。

| 表 6 链接预测任务中不同数据集上的AUC结果 Tab.6 AUC score on all datasets |

表6的结果显示,在对比算法中,GraRep表现依然最好。对于Multi-GS,当不使用联合学习框架时,方法的性能是最优的。以AUC为评价标准,相对于对比算法中的最优结果,在Citeseer数据集上相对提高45.24%,在WiKi数据集上相对提高15.4%,在PPI数据集上相对提高39.14%,在BlogCatalog数据集上相对提高20.66%。但是,随着粒化层数的增加,对于链接预测任务,方法的性能会越来越差,下降速度会随着网络的密度成正比。综合来看,在链接预测任务中,利用多粒度结构联合学习到的节点表示无法提升链接预测能力,说明该类任务需要更多节点自身的特征,节点低阶相似性比高阶相似性更重要。虽然融合多粒度结构中节点的高阶特征会导致Multi-GS性能下降,但可以看出,在较低的k 值下,仅利用节点的拓扑结构信息,Multi-GS的链接预测结果十分理想,说明Multi-GS中GCN模型能有效地捕获节点的低阶相似性。

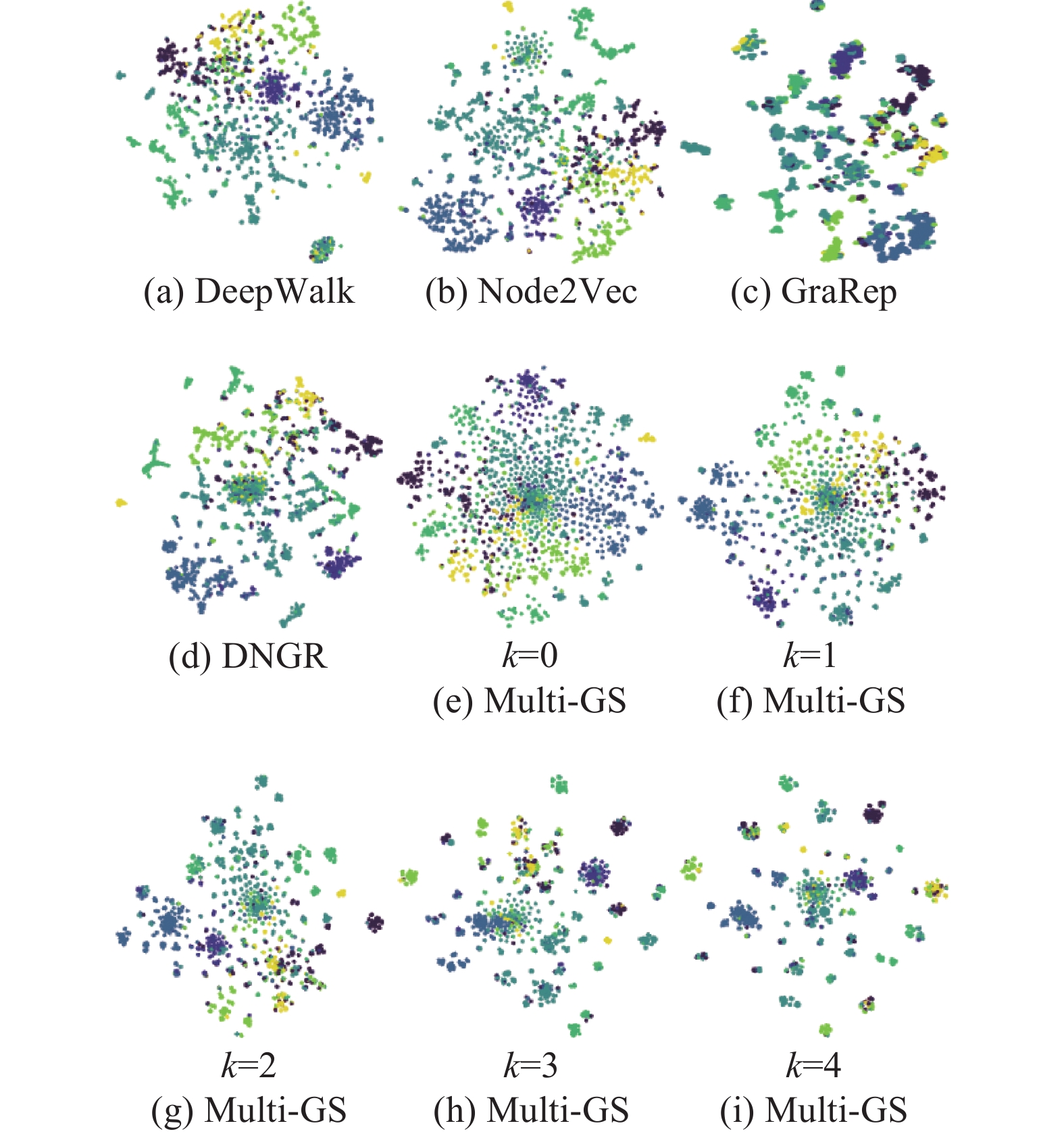

3.4 可视化在本小节中,对Multi-GS和对比算法学习的节点表示利用可视化进行比较。由于空间限制,实验选择节点数较少的Cora作为可视化数据集。其中,每个节点代表一篇机器学习论文,所有节点按照论文的主题分为7类。实验通过t-SNE[27]工具,将所有方法的节点表示投影到二维空间中,不同类别的节点用不同的颜色显示。可视化结果如图4所示。

|

Download:

|

| 图 4 Cora数据集的可视化结果 Fig. 4 The visualization of Cora dataset | |

在图4中,DeepWalk、Node2Vec的表示结果较差,节点散布在整个空间中,不同类别的节点相互混在一起,无法观察到分组结构,意味着算法无法将相似节点组合在一起。通过GreRap的可视化结果,能够看出节点间的分组结构。对于Multi-GS,在图4(e)中,不同分类的节点相互混合,这种现象在图的中心尤其明显。意味着仅保留低阶相似性的节点表示无法区分不同分类的节点。图4(f)显示结果与图4(e)相似。在图4(g)~图4(i)中,可以看到节点逐渐开始呈现紧凑的分组结构,而且不同组之间的距离越来越大,随着层数的增加,Multi-GS可以将相似结构的节点进行分组并推到一起。因此,在节点分类任务中,利用多粒度结构使Multi-GS获得更好的结果。

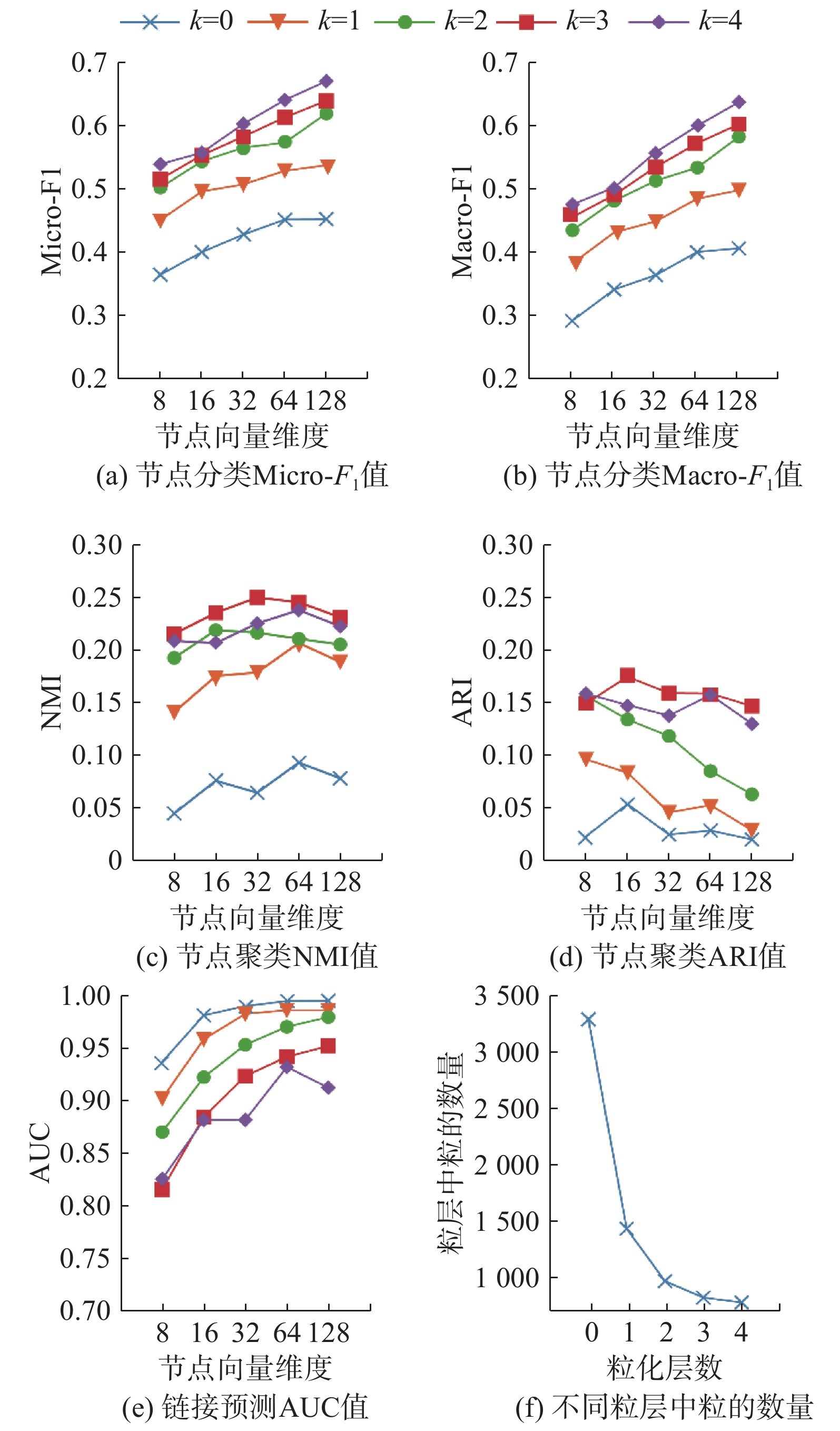

3.5 参数敏感性分析本节进行参数敏感性实验,主要分析不同的特征维度和粒化层数对Multi-GS性能的影响。实验针对Citeseer数据集,利用Multi-GS在不同粒化层数下学习到的不同维度的节点表示,通过节点分类、节点聚类和链接预测任务对Multi-GS进行评估,并报告相关的实验结果。对于节点分类任务,随机选择50%节点训练分类器。采用Micro-F1和Macro-F1作为评价指标。对于节点聚类任务,采用NMI[30]和ARI[30]作为评价指标。对于链接预测任务,移除50%的链接,采用AUC作为评价指标。参数k表示最终节点的特征表示融合的粒化层数,若k=0,表示仅选取最细粒度的粒特征表示作为最终的节点特征表示,k=1,表示用第0层和第1层的粒学习到的特征表示进行拼接后的向量作为最终的节点特征表示,以此类推。其中,0表示最细粒度,k值越大,表示粒度越粗。对于所有任务,重复实验10次并报告平均结果,实验结果如图5所示。

|

Download:

|

| 图 5 参数敏感性分析 Fig. 5 Parametric sensitivity analysis | |

图5的结果表明,对于节点分类任务,Micro-F1和Macro-F1指标随着特征维度的上升而上升。因为一个更大的特征维度可以保留网络中更多的信息。随着粒化层数的增加,Micro-F1和Macro-F1指标也逐渐提升,但是可以看出,这样的提升效果会随着粒化层数的增加而越变越小,甚至退化。对于节点聚类任务,NMI和ARI的最优结果都出现在k=3时,继续增加层数,结果会下降。对于链接预测任务,AUC指标随着特征维度的上升而上升,这是合理的情形。但是当k=4时,AUC指标发生波动,说明叠加更多的层次会导致学习的特征表示发生信息变化,这是需要避免的情况。通过图5(f)中显示的各层中粒的数量的变化曲线,可以看出,不同层间的粒度差异会随着层数的增加而减少。在第3层和第4层间,这种粒度差异几乎很小,意味着节点在第3层和第4层中的特征极为相似,若拼接过多类似的高阶特征向量,导致节点自身的特征被弱化,使得最终Multi-GS的输出表示不能在网络分析任务中发挥出方法优势。

4 结束语在网络表示学习中,如何让学习到的节点特征表示能够保留网络结构的局部和全局特征,仍是一个开放和重要的研究课题。本文结合分层递阶的思想,提出一种无监督网络表示学习方法Multi-GS,通过构建网络的深度结构解决GCN无法有效捕获节点高阶相似性特征的问题。该方法首先利用模块度增量逐步构建网络的多粒度分层结构,然后利用GCN模型学习不同粒度空间中粒的特征表示,最后将已学习的粒特征向量逐层映射拼接为原始网络的节点表示。利用Multi-GS可捕获多种网络结构信息,包括一阶和二阶相似性、社团内相似性(高阶结构)和社团间相似性(全局结构)。

为验证Multi-GS方法的性能,通过在4个真实数据集上进行节点分类任务和链接预测任务,并与几个经典的网络表示学习方法进行比较。从实验结果上看,针对节点分类任务,使用多粒度结构的Multi-GS能够改进节点的特征表示,提升GCN模型的节点分类性能。但是由于网络结构的多样性和复杂性,Multi-GS的粒化层数无法固定,必须根据不同结构的网络进行调整。针对链接预测任务,使用多粒度结构Multi-GS对GCN模型的性能造成损害。说明节点间的低阶邻近关系对链接预测任务是至关重要的。尽管如此,在不使用多粒度结构的情况下,以AUC为评价指标,相对于对比算法,Multi-GS的性能优势非常明显。针对Multi-GS超参数敏感性的实验结果可以看出,面对不同的网络分析任务,融合不同粒度的粒特征对Multi-GS的性能有着不同程度的影响。

未来工作方向包括探索其他网络粒化分层技术和继续深入研究不同的层和不同粗细的粒以及不同类型的网络结构对Multi-GS的影响。

| [1] |

涂存超, 杨成, 刘知远, 等. 网络表示学习综述[J]. 中国科学: 信息科学, 2017, 47(8): 980-996. TU Cunchao, YANG Cheng, LIU Zhiyuan, et al. Network representation learning: an overview[J]. Scientia sinica informationis, 2017, 47(8): 980-996. (  0) 0)

|

| [2] |

SHEIKH N, KEFATO Z T, MONTRESOR M. Semi-supervised heterogeneous information network embedding for node classification using 1D-CNN[C]//Proceedings of the Fifth International Conference on Social Networks Analysis, Management and Security. Valencia, Spain, 2018: 177–181.

( 0) 0)

|

| [3] |

XU Guangluan, WANG Xiaoke, WANG Yang, et al. Edge-nodes representation neural machine for link prediction[J]. Algorithms, 2019, 12(1): 12. DOI:10.3390/a12010012 ( 0) 0)

|

| [4] |

HU Xuegang, HE Wei, LI Lei, et al. An efficient and fast algorithm for community detection based on node role analysis[J]. International journal of machine learning and cybernetics, 2019, 10(4): 641-654. DOI:10.1007/s13042-017-0745-x ( 0) 0)

|

| [5] |

PEREDA M, ESTRADA E. Visualization and machine learning analysis of complex networks in hyperspherical space[J]. Pattern recognition, 2019, 86: 320-331. DOI:10.1016/j.patcog.2018.09.018 ( 0) 0)

|

| [6] |

SHI Chuan, HU Binbin, ZHAO W X, et al. Heterogeneous information network embedding for recommendation[J]. IEEE transactions on knowledge and data engineering, 2019, 31(2): 357-370. DOI:10.1109/TKDE.2018.2833443 ( 0) 0)

|

| [7] |

PEROZZI B, AL-RFOU R, SKIENA S. DeepWalk: online learning of social representations[C]//Proceedings of the 20th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. New York, USA, 2014: 701–710.

( 0) 0)

|

| [8] |

GROVER A, LESKOVEC J. node2vec: Scalable feature learning for networks[C]//Proceedings of the 22th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. San Francisco, California, USA, 2016: 855–864.

( 0) 0)

|

| [9] |

CAO Shaosheng, LU Wei, XU Qiongkai. GraRep: learning graph representations with global structural information[C]// Proceedings of the 24th ACM International on Conference on Information and Knowledge Management. Melbourne, Australia, 2015: 891–900.

( 0) 0)

|

| [10] |

PEROZZI B, KULKARNI V, CHEN Haochen, et al. Don't walk, Skip!: Online learning of multi-scale network embeddings[C]//Proceedings of the 2017 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining 2017. Sydney, Australia, 2017: 258–265.

( 0) 0)

|

| [11] |

CAO Shaosheng, LU Wei, XU Qiongkai. Deep neural networks for learning graph representations[C]//Proceedings of the 30th AAAI Conference on Artificial Intelligence. Phoenix, USA, 2016: 1145–1152.

( 0) 0)

|

| [12] |

RIBEIRO L F R, SAVERESE P H P, FIGUEIREDO D R. struc2vec: Learning node representations from structural identity[C]//Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. Halifax, Canada, 2017: 385–394.

( 0) 0)

|

| [13] |

WANG Xiao, CUI Peng, WANG Jing, et al. Community preserving network embedding[C]//Proceedings of the 31th AAAI Conference on Artificial Intelligence. San Francisco, California, USA, 2017: 203–209.

( 0) 0)

|

| [14] |

方莲娣, 张燕平, 陈洁, 等. 基于三支决策的非重叠社团划分[J]. 智能系统学报, 2017, 12(3): 293-300. FANG Liandi, ZHANG Yanping, CHEN Jie, et al. Three-way decision based on non-overlapping community division[J]. CAAI transactions on intelligent systems, 2017, 12(3): 293-300. (  0) 0)

|

| [15] |

DONNAT C, ZITNIK M, HALLAC D, et al. Learning structural node embeddings via diffusion wavelets[C]//Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. London, UK, 2018: 1320–1329.

( 0) 0)

|

| [16] |

CHEN Haochen, PEROZZI B, HU Hifan, et al. HARP: hierarchical representation learning for networks[C]// Proceedings of the 32th AAAI Conference on Artificial Intelligence. New Orleans, Louisiana, USA, 2018: 2127–2134.

( 0) 0)

|

| [17] |

ZHANG Si, TONG Hanghang, XU Jiejun, et al. Graph convolutional networks: algorithms, applications and open challenges[C]//Proceedings of the 7th International Conference on Computational Data and Social Networks. Shanghai, China, 2018: 79–91.

( 0) 0)

|

| [18] |

KIPF T N, WELLING M. Semi-supervised classification with graph convolutional networks[C/OL]. [2019-01-28]. https://arxiv.org/pdf/1609.02907.pdf.

( 0) 0)

|

| [19] |

LI Qimai, HAN Zhichao, WU Xiaoming. Deeper insights into graph convolutional networks for semi-supervised learning[C]//Proceedings of the 32th AAAI Conference on Artificial Intelligence. New Orleans, Louisiana, USA, 2018: 3538–3545.

( 0) 0)

|

| [20] |

张燕平, 张铃, 吴涛. 不同粒度世界的描述法——商空间法[J]. 计算机学报, 2004, 27(3): 328-333. ZHANG Yanping, ZHANG Ling, WU Tao. The representation of different granular worlds: a quotient space[J]. Chinese journal of computers, 2004, 27(3): 328-333. DOI:10.3321/j.issn:0254-4164.2004.03.006 (  0) 0)

|

| [21] |

赵姝, 柯望, 陈洁, 等. 基于聚类粒化的社团发现算法[J]. 计算机应用, 2014, 34(10): 2812-2815. ZHAO Shu, KE Wang, CHEN Jie, et al. Community detection algorithm based on clustering granulation[J]. Journal of computer applications, 2014, 34(10): 2812-2815. DOI:10.11772/j.issn.1001-9081.2014.10.2812 (  0) 0)

|

| [22] |

NEWMAN M E J. Fast algorithm for detecting community structure in networks[J]. Physical review E, 2003, 69(6): 066133. ( 0) 0)

|

| [23] |

赵姝, 赵晖, 陈洁, 等. 基于社团结构的多粒度结构洞占据者发现及分析[J]. 智能系统学报, 2016, 11(3): 343-351. ZHAO Shu, ZHAO Hui, CHEN Jie, et al. Recognition and analysis of structural hole spanner in multi-granularity based on community structure[J]. CAAI transactions on intelligent systems, 2016, 11(3): 343-351. (  0) 0)

|

| [24] |

KIPF T N, WELLING M. Variational graph auto-encoders[C/OL]. [2019-01-28]. https://arxiv.org/pdf/1611.07308.pdf.

( 0) 0)

|

| [25] |

MCCALLUM A K, NIGAM K, RENNIE J, et al. Automating the construction of internet portals with machine learning[J]. Information retrieval, 2000, 3(2): 127-163. DOI:10.1023/A:1009953814988 ( 0) 0)

|

| [26] |

BREITKREUTZ B J, STARK C, REGULY T, et al. The BioGRID interaction database: 2008 update[J]. Nucleic acids research, 2008, 36: D637-D640. ( 0) 0)

|

| [27] |

GAO Hongchang, HUANG Heng. Self-paced network embedding[C]//Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. London, UK, 2018: 1406–1415.

( 0) 0)

|

| [28] |

TANG Lei, LIU Huan. Relational learning via latent social dimensions[C]//Proceedings of the 15th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. Paris, France, 2009: 817–826.

( 0) 0)

|

| [29] |

FAWCETT T. An introduction to ROC analysis[J]. Pattern recognition letters, 2006, 27(8): 861-874. DOI:10.1016/j.patrec.2005.10.010 ( 0) 0)

|

| [30] |

FAHAD A, ALSHATRI N, TARI Z, et al. A survey of clustering algorithms for big data: Taxonomy and empirical analysis[J]. IEEE transactions on emerging topics in computing, 2014, 2(3): 267-279. DOI:10.1109/TETC.2014.2330519 ( 0) 0)

|

2019, Vol. 14

2019, Vol. 14