有效的数据低维表示不仅能够发现数据集中的潜在信息,还能去除原始数据中的冗余特征,快速处理高维数据,其中最具代表性的方法包括主成分分析[1]、线性判别分析[2]及非负矩阵分解[3]。然而PCA和LDA得到的数据低维表示中可能会包含负值元素,这在实际的应用中缺少合理的解释;而NMF是一种非负约束下的矩阵分解方法,仅允许原始数据的加法而非减法组合,因此它有利于稀疏的且基于部分的表示,比非稀疏的全局表示更稳健。

经典的NMF是一种无监督学习算法,不能充分利用原始数据的类别信息。为了提高NMF的判别能力,Wang等[4]提出了Fisher-NMF(FNMF),而Zafeiriou等[5]和Kotsia等[6]在NMF的目标函数中增加了散度差项,建立起判别子空间法。由于LDA[2]中Fisher准则的比值形式很难处理,集成在NMF模型中会出现“小样本问题”。为此,可考虑最大间距准则(max margin criterion,MMC)[7-8],该准则是以类中心及类散度为度量依据,使得降维后不同类样本之间距离越远而同类样本之间距离越近,在充分使用原始数据类别信息的基础上使得改进后的NMF(如NDMF[9])具有良好的判别性质。

另外,为了揭示和利用数据的内在几何结构,出现了许多基于流形特征的NMF,例如GNMF[10]、GDNMF[11]、LCGNMF[12]和NLMF[13]等,这些方法通过添加图拉普拉斯正则化项达到了提高图像聚类或分类性能的目的。Shang等[14]同时考虑数据流形和特征流形,提出了图对偶正则化NMF(DNMF)。而Meng等[15]则在图对偶模型中加入了稀疏性(DSNMF)和正交性的约束[16],有效地简化了高维计算,揭示数据和特征之间的双流形结构。

由于NDMF[9]具有良好的判别性质,而NLMF[13]又利用了数据的图结构来提升其分类性能,故本文将两种方法结合起来提出一种基于图正则化的稀疏判别非负矩阵分解算法(GSDNMF-L2,1),在充分利用数据的类别信息的同时,不仅保持了数据样本之间的局部流形结构,而且能提取稀疏的特征,进而提升了其分类性能。若干图像数据集上的实验结果表明,该方法在特征提取与分类性能等方面优于其他各对比算法。

1 相关工作 1.1 非负矩阵分解(NMF)假设由

| $\begin{array}{l} \mathop {\min }\limits_{{\bf{W}},{\bf{H}}} \begin{array}{*{20}{c}} {} \end{array}\left\| {{{X}} - {{WH}}} \right\|_F^2 \\ {\rm{s.t.}}\begin{array}{*{20}{c}} {} \end{array}{{W}} \geqslant 0,{{H}} \geqslant 0 \\ \end{array} $ | (1) |

其中

| $\begin{gathered} {{{W}}_{{{ik}}}} \leftarrow {{{W}}_{{{ik}}}}\dfrac{{{{\left( {{{X}}{{{H}}^{\rm{T}}}} \right)}_{{{ik}}}}}}{{{{\left( {{{WH}}{{{H}}^{\rm{T}}}} \right)}_{{{ik}}}}}},{{{H}}_{{{kj}}}} \leftarrow {{{H}}_{{{kj}}}}\dfrac{{{{\left( {{{{W}}^{\rm{T}}}{{X}}} \right)}_{{{kj}}}}}}{{{{\left( {{{{W}}^{\rm{T}}}{{WH}}} \right)}_{{{kj}}}}}}\\ \left( {i = 1,2, \cdots ,m{\text{;}}k = 1,2, \cdots ,r{\text{;}}j = 1,2, \cdots ,n} \right) \end{gathered}$ | (2) |

图正则化非负矩阵分解(graph regularized non-negative matrix factorization,GNMF)[9]考虑了数据样本之间的流形结构,希望数据样本在低维空间中尽可能多地保持其近邻结构,从而寻找数据在低维空间中的紧致嵌入。该问题可用以下优化模型表示:

| $\begin{array}{l} \mathop {\min}\limits_{{W},{{H}}} \begin{array}{*{20}{c}} {} \end{array}\left\| {{{X}} - {{WH}}} \right\|_F^2 +{\textit{λ}}{\rm{tr}}\left( {{{HL}}{{{H}}^{\rm{T}}}} \right)\\ {\rm{s.t.}}\begin{array}{*{20}{c}} {} \end{array}{{W}} \geqslant {{0}},{{H}} \geqslant {{0}} \end{array}$ | (3) |

其中

求解该问题的乘性迭代规则为

| $\begin{gathered} {{{W}}_{{{ik}}}} \leftarrow {{{W}}_{{{ik}}}}\dfrac{{{{\left( {{{X}}{{{H}}^{\rm{T}}}} \right)}_{{{ik}}}}}}{{{{\left( {{{WH}}{{{H}}^{\rm{T}}}} \right)}_{{{ik}}}}}},{{{H}}_{{{kj}}}} \leftarrow {{{H}}_{{{kj}}}}\dfrac{{{{\left( {{{{W}}^{\rm{T}}}{{X}} + \lambda {{H}}{{{S}}_{{H}}}} \right)}_{{{kj}}}}}}{{{{\left( {{{{W}}^{\rm{T}}}{{WH}} + \lambda {{H}}{{{D}}_{{H}}}} \right)}_{{{kj}}}}}}\\ \left( {i = 1,2, \cdots ,m{\text{;}}k = 1,2, \cdots ,r{\text{;}}j = 1,2, \cdots ,n} \right) \end{gathered} $ | (4) |

设有

| $\max {\rm{tr}}\left( {{{{P}}^{\rm{T}}}({{{S}}_{{b}}} - {{{S}}_{{w}}}){{P}}} \right)$ | (5) |

式中:

根据流形学习的图嵌入思想[17-19],数据在高维空间中的局部邻域关系在低维样本空间中应得到保持,同时也希望原始数据集的散度关系得到保持,因此在NMF的目标函数中通过添加图正则化约束和判别约束可达到提高算法分类性能的目的。

2.1 构建权重矩阵基于图嵌入的流形学习法其前提是构建相应的邻接图及权矩阵。传统的流形学习方法通常是利用k-近邻法或ε-邻域法来构建图和权矩阵,但此类方法的性能依赖于参数k或ε。本文利用基于同类样本的稀疏表示方法选择邻域样本,该方法是自适应且与参数无关的,对噪声数据具有鲁棒性。

图1比较了两种方法选择的邻域样本。其中,k-近邻法选择的邻域样本包含了数据集中不同类别的人脸,人脸角度变化与标注图像一致。相比而言,本文方法所选的样本不仅和标注人脸具有相似角度,而且最接近其正面的人脸图像,且相应的稀疏表示系数越大,越接近标注样本。所以,基于同类样本的稀疏表示方法构建的权重矩阵不仅包含了样本的类别信息,而且还包含了样本间的局部邻域结构,从而具有良好的判别性质。

|

Download:

|

| 图 1 k-近邻法与稀疏表示法确定的近邻 Fig. 1 Neighbor samples determined by k-nearest neighbor method and sparse representation | |

对于某一类数据样本

| $\begin{array}{l} \min \begin{array}{*{20}{c}} {} \end{array}{\left\| {{{s}}_{{i}}^{{c}}} \right\|_1}\\ {\rm{s.t.}}\begin{array}{*{20}{c}} {} \end{array}{{{x}}_{{i}}} = {{{X}}_{{c}}}{{s}}_{{i}}^{{c}},c\left( {{{{x}}_{{i}}}} \right) = c \end{array}$ | (6) |

式中:

| ${{{S}}_{{H}}} = {\left( {{{{S}}_{{ij}}}} \right)_{n \times n}} = {\rm diag}\left\{ {{{{S}}^{{1}}},{{{S}}^{{2}}}, \cdots ,{{{S}}^{{C}}}} \right\}$ | (7) |

其中:子矩阵

图正则化是基于邻域保持嵌入的,它将训练样本之间的局部邻域关系通过权矩阵S在由NMF的系数矩阵H的列向量hj所张成的子空间中得到保持,因此得到以下图正则化约束项:

| $ \begin{split} \frac{1}{2}\sum\limits_{i,j = 1}^n {\left\| {{{{h}}_{{i}}} - {{{h}}_{{j}}}} \right\|_2^2{{{S}}_{{{ij}}}}} = & {\rm{tr}}\left( {{{H}}{{{D}}_{{H}}}{{{H}}^{\rm{T}}}} \right) - {\rm{tr}}\left( {{{H}}{{{S}}_{{H}}}{{{H}}^{\rm{T}}}} \right) =\\ & {\rm{tr}}\left( {{{H}}{{{L}}_{{H}}}{{{H}}^{\rm{T}}}} \right) \end{split}$ | (8) |

式中:

以NMF中的基矩阵W为投影矩阵,使得投影后同类样本之间的散度最小而不同类样本之间的散度最大[19],以保留训练样本的判别特征,从而有以下基于MMC准则的判别约束项:

| ${\rm{tr}}\left( {{{{W}}^{\rm{T}}}\left( {{{{S}}_{{B}}} - {{{S}}_{{W}}}} \right){{W}}} \right)$ | (9) |

为满足NMF的非负约束的要求,式(9)可改写为

| ${\rm{tr}}\left( {{{{W}}^{\rm{T}}}\left( {\left( {{{S}}_{{B}}^ + - {{S}}_{\left| {{B}} \right|}^ - } \right) - \left( {{{S}}_{{W}}^ + - {{S}}_{\left| {{W}} \right|}^ - } \right)} \right){{W}}} \right)$ | (10) |

式中:

对于图像数据,其特征维度和冗余程度都很高,基于稀疏编码的思想[20],将样本

| ${{{x}}_{{i}}} \approx {{{w}}_{{1}}}{{{h}}_{{{1,i}}}} + {{{w}}_{{2}}}{{h}}{}_{{{2,i}}} + \cdots + {{{w}}_{{r}}}{{{h}}_{{{r,i}}}}$ | (11) |

通常使用

| ${\left\| {{H}} \right\|_1} = \sum\limits_{i = 1}^r {\sum\limits_{j = 1}^n {\left| {{{{h}}_{{{ij}}}}} \right|} } $ | (12) |

系数矩阵

| $\left\| {{{{H}}^{\bf{T}}}} \right\|_{2,1}^{} = \sum\limits_{j = 1}^n {{{\left\| {{{{h}}_{{j}}}} \right\|}_2} = } \sum\limits_{j = 1}^n {\sqrt {\sum\limits_{{\rm{i}} = 1}^r {{{h}}_{{{ij}}}^2} } } $ | (13) |

其中

由以上分析,利用图正则化约束来保持样本之间的邻域结构;以最大间距准则保持数据集的判别性;以

| $\begin{split} & min \begin{array}{*{20}{c}} {} \end{array}\left\| {{{X}} - {{WH}}} \right\|_F^2 + \lambda {\rm{tr}}\left( {{{H}}{{{L}}_{{H}}}{{{H}}^{\rm{T}}}} \right) + \\ & \beta {\rm{tr}}\left( {{{{W}}^{\rm{T}}}\left( {\left( {{{S}}_{{W}}^ + - {{S}}_{\left| {{W}} \right|}^ - } \right) - \left( {{{S}}_{{B}}^ + - {{S}}_{\left| {{B}} \right|}^ - } \right)} \right){{W}}} \right) + \mu \left\| {{{{H}}^{\rm{T}}}} \right\|_{2,1}^{} \\& {\rm{s.t.}}\begin{array}{*{20}{c}} {} \end{array}{{W}} \geqslant 0,{{H}} \geqslant 0 \end{split} $ | (14) |

令

| $\begin{gathered} L = \left\| {{{X}} - {{WH}}} \right\|_F^2 +{\textit{λ}} {\rm{tr}}\left( {{{H}}{{{L}}_{{H}}}{{{H}}^{\rm{T}}}} \right) + \\ \beta {\rm{tr}}\left( {{{{W}}^{\rm{T}}}\left( {\left( {{{S}}_{{W}}^ + - {{S}}_{\left| {{W}} \right|}^ - } \right) - \left( {{{S}}_{{B}}^ + - {{S}}_{\left| {{B}} \right|}^ - } \right)} \right){{W}}} \right) + \\ \mu {\rm{tr}}\left( {{{{H}}^{\rm{T}}}{{QH}}} \right) - {\rm{tr}}\left( {\varPhi {{{W}}^{\rm{T}}}} \right) - {\rm{tr}}\left( {\varPsi {{H}}} \right) \end{gathered} $ | (15) |

式中:

使用交替迭代方法求解。先固定

| $\begin{gathered} \frac{{\partial L}}{{\partial {{W}}}} = - 2{{X}}{{{H}}^{\rm{T}}} + 2{{WH}}{{{H}}^{\rm{T}}} + \\ 2\beta \left( {\left( {{{S}}_{{W}}^ + - {{S}}_{\left| {{W}} \right|}^ - } \right) - \left( {{{S}}_{{B}}^ + - {{S}}_{\left| {{B}} \right|}^ + } \right)} \right){{W}} - {{\varPhi }} = 0 \end{gathered} $ | (16) |

由互补松驰条件

| ${\left( { - {{X}}{{{H}}^{\rm{T}}} + {{WH}}{{{H}}^{\rm{T}}} + \beta \left( {\left( {{{S}}_{{W}}^ + - {{S}}_{\left| {{W}} \right|}^ - } \right) - \left( {{{S}}_{{B}}^ + - {{S}}_{\left| {{B}} \right|}^ - } \right)} \right){{W}}} \right)_{ik}}{{{W}}_{{{ik}}}} = 0$ | (17) |

于是,有以下关于

| ${{W}}_{{{ik}}}^{t + 1} \leftarrow {{W}}_{{{ik}}}^t\frac{{{{\left( {{{X}}{{{H}}^{\rm{T}}} + \beta \left( {{{S}}_{\left| {{W}} \right|}^ - + {{S}}_{{B}}^ + } \right)} \right)}_{ik}}}}{{{{\left( {{{WH}}{{{H}}^{\rm{T}}} + \beta \left( {{{S}}_{{W}}^ + + {{S}}_{\left| {{B}} \right|}^ - } \right)} \right)}_{ik}}}}$ | (18) |

同理,得到关于

| $ \begin{gathered} {{H}}_{{{kj}}}^{t + 1} \leftarrow \\ {{H}}_{{{kj}}}^t\frac{{{{\left( {{{{W}}^{\rm{T}}}{{X}} +{\textit{λ}} {{H}}{{{S}}_{{H}}} + \beta {\nabla _{{H}}}{\rm{tr}}\left( {{{W}}\left( {{{S}}_{\left| {{W}} \right|}^ - + {{S}}_{{B}}^ + } \right){{{W}}^{\rm{T}}}} \right)} \right)}_{kj}}}}{{{{\left( {{{{W}}^{\rm{T}}}{{WH}} + {\textit{λ}} {{H}}{{{D}}_{{H}}} + \beta {\nabla _{{H}}}{\rm{tr}}\left( {{{W}}\left( {{{S}}_{{W}}^ + + {{S}}_{\left| {{B}} \right|}^ - } \right){{{W}}^{\rm{T}}}} \right) + \mu {{HQ}}} \right)}_{kj}}}} \end{gathered}$ | (19) |

在每次迭代得到基图像矩阵

综合以上讨论,得到图正则化稀疏判别非负矩阵分解法:

算法1 图正则化稀疏判别非负矩阵分解算法(GSDNMF-L2,1)

输入 训练样本

1)利用式(6)和式(7)计算权重矩阵

2)利用式(9)计算类内散度

3)随机初始化

4)

Fort= 1:

利用式(18)得到更新后的

End

输出 基矩阵

设

为了说明GSDNMF-L2,1算法在图像特征提取中的有效性,分别在ORL和AR人脸数据集和COIL20实物数据集上进行实验,并将之与NMF[3]、GNMF[10]、NDMF[9]等算法进行比较,同时比较利用L1范数进行稀疏约束的模型(GSDNMF-L1)。将数据集按0.5的比例随机划分为训练集和测试集,利用训练集进行特征提取,获得基矩阵

ORL人脸数据库包含了40个人的人脸图像,每个人有10张,图像具有不同面部表情(睁/闭眼,笑/不笑)、面部细节(眼镜/无眼镜)及不同光照条件。

AR数据集包含了120个人的人脸图像,每个人有26张图像,图像具有不同的面部表情、照明条件和遮挡(太阳眼镜/围巾)。

COIL20数据集包含了20个不同的物体(玩具小鸭、招财猫、杯子等),其中,每个物体在水平面上每隔5°拍摄一张图片,因此每个物体一共有72幅图片,整个数据集共计l 440幅图片。

实验中,所有图像均压缩成32×32大小的灰度图,将其每列相连构成大小为1 024维的向量,并做归一化处理。如图2所示。

|

Download:

|

| 图 2 数据集示例 Fig. 2 The instances of datasets | |

GSDNMF-L2,1算法包含了图正则化约束参数λ、判别约束参数

|

Download:

|

| 图 3 参数对识别率的影响 Fig. 3 The influence of parameters | |

由图3可知,图正则化参数λ对识别率的影响较大,在[0,0.01]的范围内,识别率随参数的增大而增大,在[0.01,1]的范围内,识别率随参数的增大而减少。判别约束参数和稀疏参数对识别率的影响较小,在[0,1]范围内一直保持较高识别率。经过多次实验,最终确定算法的最优参数组合为λ=0.005,β=1,μ=0.5。

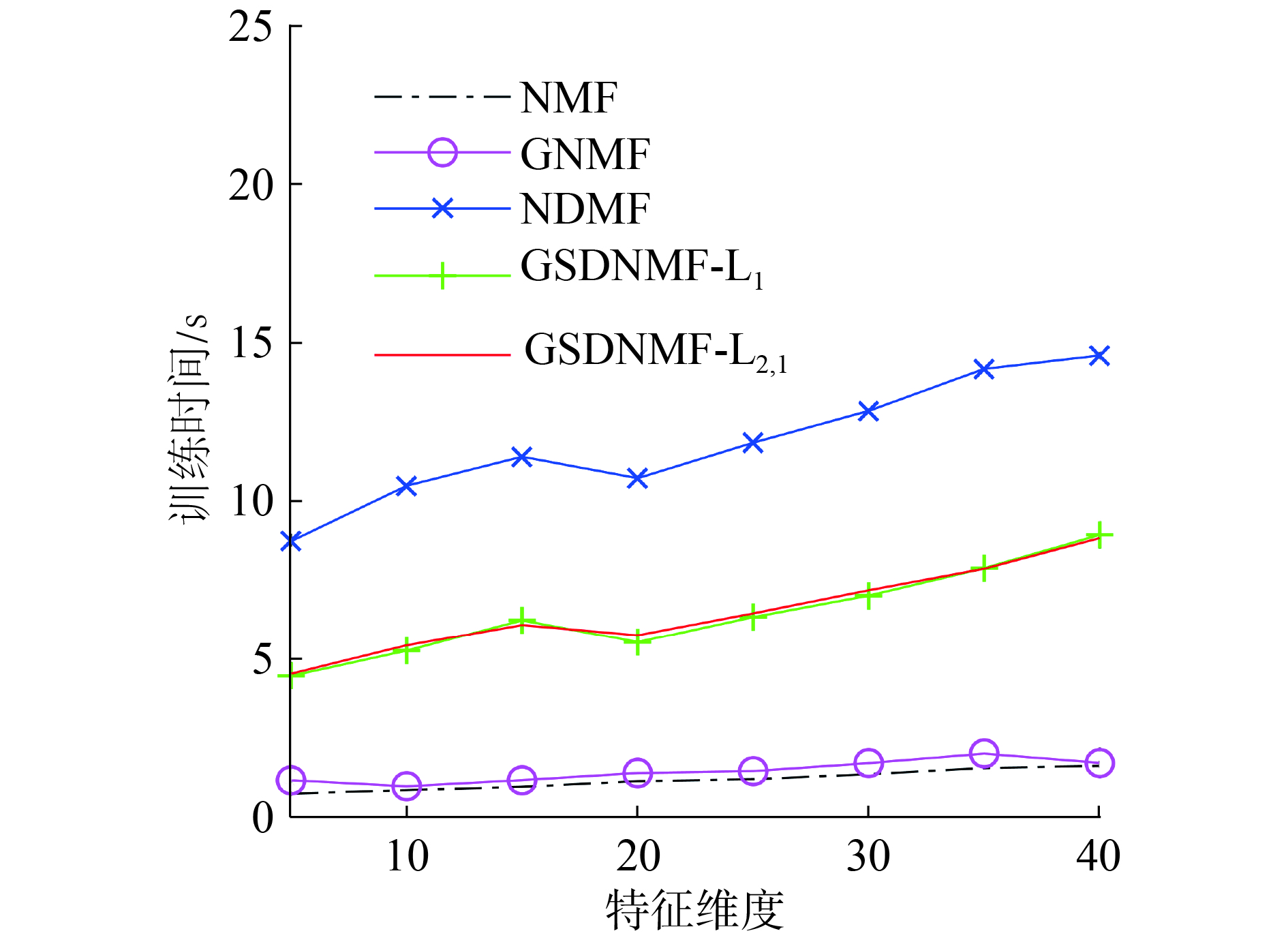

取基图数分别为3、4、5、6、7、8的平方,在3种数据集上独立随机地进行20次实验,计算平均识别率及方差。表1~3给出了5种算法在3种数据集上的平均识别率随基图像个数的增加而变化的情况。在大多数情况下,GSDNMF-L2,1的识别率要优于其他几种算法,且方差更小,说明在NMF的目标函数中结合图正则化约束和判别约束能够有效提高图像特征提取的准确率和稳定性。图4比较了在ORL数据集上5种算法的训练时间(取类别数40作为基图数目),可以发现NMF和GNMF的训练时间较短,NDMF训练时间最长,GSDNMF-L2,1和GSDNMF-L1比NDMF时间短。可知,利用稀疏编码的思想对系数矩阵H添加稀疏性约束,在每一迭代更新的过程中能够尽可能少地选择基图像重构训练样本,提高了运算速度。

| 表 1 ORL数据集上的平均识别率(方差) Tab.1 Average recognition rate (variance) on ORL dataset |

| 表 2 AR数据集上的平均识别率(方差) Tab.2 Average recognition rate (variance) on AR dataset |

| 表 3 COIL20数据集上的平均识别率(方差) Tab.3 Average recognition rate (variance) on COIL20 dataset |

|

Download:

|

| 图 4 训练时间随基图数变化的曲线 Fig. 4 Variation curve of training time | |

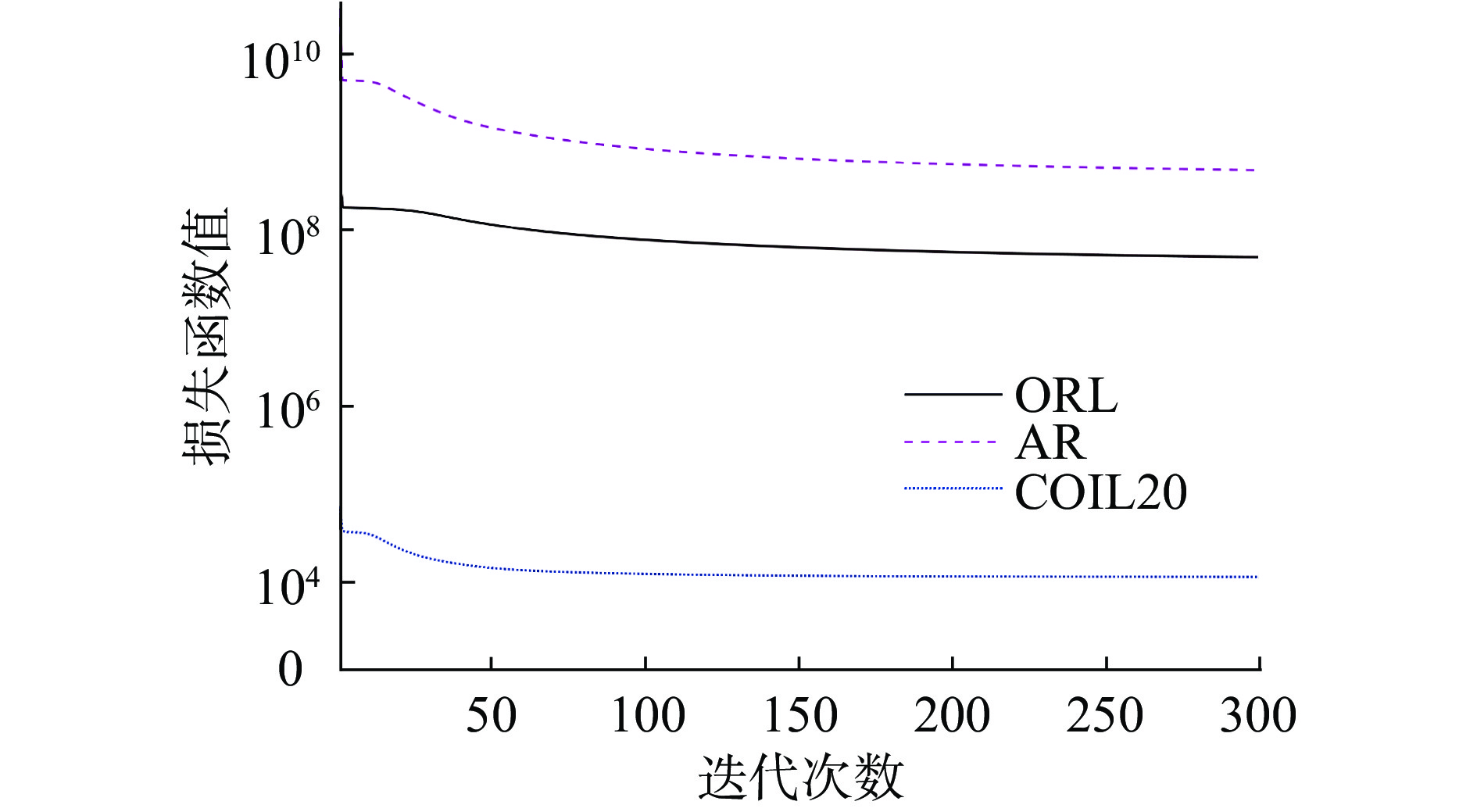

图5给出了GSDNMF-L2,1算法在3种数据集上的收敛情况,当迭代次数小于10次时损失函数值迅速下降;而当迭代次数大于100时,随着迭代次数的增加其损失函数值的下降趋于平缓,并收敛于一个固定值。本文所有实验均设置最大迭代次数为300次,以保证算法收敛。

|

Download:

|

| 图 5 损失函数的变化曲线 Fig. 5 Variation curve of loss function | |

为了刻画和评价基图像矩阵的特点,Hoyer[20]提出采用向量的

| $s(x) = \frac{{\sqrt n - \left( {\displaystyle\sum\limits_i {{{{x}}_{{i}}}} /\sqrt {\displaystyle\sum\limits_i {{{x}}_{{i}}^2} } } \right)}}{{\sqrt n - 1}}$ | (16) |

其中向量

| 表 4 比较基图像W的稀疏度 Tab.4 Comparison of sparsity of the base matrix W |

|

Download:

|

| 图 6 比较NDMF,GSDNMF-L1和GSDNMF-L2,1算法在ORL、AR和COIL20数据集上得到的基图 Fig. 6 Comparison of basic images computed by NDMF, GSDNMF-L1 and GSDNMF-L2,1 algorithm on ORL,AR and COIL20 dataset | |

本文给出了一种新的图正则化稀疏判别NMF算法(GSDNMF-L2,1)。该方法在对图像进行特征提取时,充分利用了数据集的标签信息来构造权重矩阵和判别约束项。与已有的图正则化方法不同的是,GSDNMF-L2,1算法用同类样本的稀疏线性组合来构建权重矩阵,很好地保持了样本内的相似性和样本间的差异性,在最大间距准则下使得类内离散性最小而类间分离性最大,从而获得很强的判别能力。另外,GSDNMF-L2,1在稀疏性约束条件下得到了稀疏系数矩阵,从而提高了矩阵分解的速度。下一步研究的方向是如何处理新样本,及自动选择最佳特征数量,使算法更加完善。

| [1] |

WOLD S, ESBENSEN K, GELADI P. Principal component analysis[J]. Chemometrics and intelligent laboratory systems, 1987, 2(1/2/3): 37-52. ( 0) 0)

|

| [2] |

BELHUMEUR P N, HESPANHA J P, KRIEGMAN D J. Eigenfaces vs. Fisherfaces: recognition using class specific linear projection[C]//Proceedings of the 4th European Conference on Computer Vision. Cambridge, UK, 1996: 45-58.

( 0) 0)

|

| [3] |

LEE D D, SEUNG H S. Learning the parts of objects by non-negative matrix factorization[J]. Nature, 1999, 401(6755): 788-791. DOI:10.1038/44565 ( 0) 0)

|

| [4] |

WANG Yuan, JIA Yunde, HU Changbo, et al. Fisher non-negative matrix factorization for learning local features[C]//Proceedings of the 6th Asian Conference on Computer Vision. Jeju, Korea, 2004.

( 0) 0)

|

| [5] |

ZAFEIRIOU S, TEFAS A, BUCIU I, et al. Exploiting discriminant information in nonnegative matrix factorization with application to frontal face verification[J]. IEEE transactions on neural networks, 2006, 17(3): 683-695. DOI:10.1109/TNN.2006.873291 ( 0) 0)

|

| [6] |

KOTSIA I, ZAFEIRIOU S, PITAS I. A novel discriminant non-negative matrix factorization algorithm with applications to facial image characterization problems[J]. IEEE transactions on information forensics and security, 2007, 2(3): 588-595. DOI:10.1109/TIFS.2007.902017 ( 0) 0)

|

| [7] |

GU Quanquan, ZHOU Jie. Two dimensional maximum margin criterion[C]// IEEE International Conference on Acoustics, Speech and Signal Processing. Taipei, Taiwan, 2009: 1621−1624.

( 0) 0)

|

| [8] |

LI Haifeng, JIANG Tao, ZHANG Keshu. Efficient and robust feature extraction by maximum margin criterion[J]. IEEE transactions on neural networks, 2006, 17(1): 157-165. DOI:10.1109/TNN.2005.860852 ( 0) 0)

|

| [9] |

LU Yuwu, LAI Zhihui, XU Yong, et al. Nonnegative discriminant matrix factorization[J]. IEEE transactions on circuits and systems for video technology, 2017, 27(7): 1392-1405. DOI:10.1109/TCSVT.2016.2539779 ( 0) 0)

|

| [10] |

CAI Deng, HE Xiaofei, HAN Jiawei, et al. Graph regularized nonnegative matrix factorization for data representation[J]. IEEE transactions on pattern analysis and machine intelligence, 2011, 33(8): 1548-1560. DOI:10.1109/TPAMI.2010.231 ( 0) 0)

|

| [11] |

LONG Xianzhong, LU Hongtao, PENG Yong, et al. Graph regularized discriminative non-negative matrix factorization for face recognition[J]. Multimedia tools and applications, 2014, 72(3): 2679-2699. DOI:10.1007/s11042-013-1572-z ( 0) 0)

|

| [12] |

LIAO Qing, ZHANG Qian. Local coordinate based graph-regularized NMF for image representation[J]. Signal processing, 2016, 124: 103-114. DOI:10.1016/j.sigpro.2015.09.038 ( 0) 0)

|

| [13] |

LI Xuelong, CUI Guosheng, DONG Yongsheng. Graph regularized non-negative low-rank matrix factorization for image clustering[J]. IEEE transactions on cybernetics, 2017, 47(11): 3840-3853. DOI:10.1109/TCYB.2016.2585355 ( 0) 0)

|

| [14] |

SHANG Fanhua, JIAO L C, WANG Fei. Graph dual regularization non-negative matrix factorization for co-clustering[J]. Pattern recognition, 2012, 45(6): 2237-2250. DOI:10.1016/j.patcog.2011.12.015 ( 0) 0)

|

| [15] |

MENG Yang, SHANG Ronghua, JIAO Licheng, et al. Feature selection based dual-graph sparse non-negative matrix factorization for local discriminative clustering[J]. Neurocomputing, 2018, 290: 87-99. DOI:10.1016/j.neucom.2018.02.044 ( 0) 0)

|

| [16] |

EGGERT J, KORNER E. Sparse coding and NMF[C]//Proceedings of 2004 IEEE International Joint Conference on Neural Networks. Budapest, Hungary, 2004: 2529-2533.

( 0) 0)

|

| [17] |

BELKIN M, NIYOGI P, SINDHWANI V. Manifold regularization: a geometric framework for learning from labeled and unlabeled examples[J]. Journal of machine learning research, 2006, 7(1): 2399-2434. ( 0) 0)

|

| [18] |

HOU C, JING W, YI W, et al. Local linear transformation embedding[J]. Neurocomputing, 2009, 72(10-12): 2368-2378. DOI:10.1016/j.neucom.2008.12.002 ( 0) 0)

|

| [19] |

LI H, LIU D, WANG D. Manifold regularized reinforcement learning[J]. IEEE transactions on neural networks & learning systems, 2017, 29(4): 932-943. ( 0) 0)

|

| [20] |

HOYER P O. Non-negative matrix factorization with sparseness constraints[J]. Journal of machine learning research, 2004, 5: 1457-1469. ( 0) 0)

|

| [21] |

NIE Feiping, HUANG Heng, CAI Xiao, et al. Efficient and robust feature selection via joint ℓ2, 1-norms minimization[C]//Proceedings of the 23rd International Conference on Neural Information Processing Systems. Vancouver, British Columbia, Canada, 2010: 1813-1821.

( 0) 0)

|

2019, Vol. 14

2019, Vol. 14