2. College of Transportation Engineering, Central South University, Changsha 410000, China;

3. Department of Computer Science and Technology, Tongji University, Shanghai 201804, China

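Unlike traditional single-label learning, multi-label learning considers objects that are associated with several class labels at once. For example, a gene may have several functions simultaneously, such as metabolism, transcription, and protein synthesis; a piece of music may convey several kinds of information, such as piano, classical music, and Mozart; and an image may belong to several categories at once, such as motor, person, and car. Early research on multi-label learning focused mainly on the ambiguity encountered in text classification. After nearly a decade of development, multi-label learning has become one of the active research topics in machine learning and plays an increasingly important role in practical applications such as emotion classification [1], semantic annotation of images and videos [2], bioinformatics [3], and personalized recommendation [4]. As these applications mature and their requirements grow, large-scale deployment of multi-label learning still faces many problems and challenges. At present, feature representations in multi-label learning are mostly hand-designed, such as SIFT and HOG. These features can achieve good recognition results for specific types of objects, but they are low-level features: they have a low level of abstraction, contain insufficient discriminative information, and provide little useful semantic information for classification, which limits classification accuracy. How to make a multi-label system identify the discriminative factors hidden in the underlying data, and automatically learn more abstract and effective features, has become a bottleneck for further progress in multi-label learning research.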

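In recent years, deep learning has achieved breakthroughs in areas such as image classification and object detection and has become one of the most effective methods for automatic feature learning. Reference [5] compared traditional hand-designed features with features learned by deep neural networks and found that the latter do more to improve the performance of automatic image annotation algorithms. Deep learning models have strong representation and modeling capabilities: trained in a supervised or unsupervised manner, they learn feature representations of the target layer by layer, passing the raw data through a series of nonlinear transformations to produce high-level abstract representations, which avoids the tedious and inefficient manual design of features. To address the low level of feature abstraction in multi-label learning, this paper uses a deep convolutional neural network with multiple hidden layers to learn hierarchical features directly from the raw input, forming more abstract high-level representations so that the original information is expressed with as few and as effective features as possible. In addition, because convolutional neural networks achieve high prediction accuracy but run slowly, we use transfer learning and dual-channel neurons to reduce the number of network parameters and speed up training, partly compensating for the heavy computation and low speed of convolutional neural networks.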

1 Related Work

1.1 Multi-label Learning

For ease of exposition, we first give a formal definition of the multi-label problem. Let

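Depending on how they approach the problem, current multi-label classification algorithms fall into two categories [6]: problem transformation and algorithm adaptation. Problem transformation methods convert a multi-label classification problem into several single-label classification problems, as in BR (binary relevance) [7] and LP (label powerset) [8], and then solve them with single-label classification methods. Algorithm adaptation methods modify existing single-label classification algorithms so that they handle multi-label classification directly, as in BSVM (biased support vector machine) [9] and ML-KNN (multi-label k-nearest neighbor) [10]. With the rise of deep learning, some researchers have begun to study multi-label classification with deep models. Zhang [11] derived ML-RBF, a neural-network-based multi-label learning algorithm, from the traditional radial basis function (RBF) network. Wang et al. [12] combined a convolutional neural network (CNN) with a recurrent neural network (RNN) into a unified framework for multi-label image classification. However, both the accuracy and the time complexity of these algorithms leave room for improvement.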
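The two problem-transformation strategies named above can be illustrated with a short plain-Python sketch. It assumes the labels are given as a 0/1 indicator matrix `Y` (rows are samples, columns are labels); the function names are hypothetical and are not taken from [7] or [8].

```python
from typing import Dict, List, Tuple


def binary_relevance(Y: List[List[int]]) -> List[List[int]]:
    """BR: split the problem into one independent binary task per label.

    Returns one 0/1 target vector per label (column j of Y)."""
    num_labels = len(Y[0])
    return [[row[j] for row in Y] for j in range(num_labels)]


def label_powerset(Y: List[List[int]]) -> Tuple[List[int], Dict[Tuple[int, ...], int]]:
    """LP: treat each distinct label set as one class of a single-label task."""
    class_ids: Dict[Tuple[int, ...], int] = {}
    targets: List[int] = []
    for row in Y:
        key = tuple(row)
        if key not in class_ids:
            class_ids[key] = len(class_ids)  # unseen label combination -> new class
        targets.append(class_ids[key])
    return targets, class_ids


# Four samples, three labels (e.g. person, car, motor).
Y = [[1, 1, 0],
     [0, 1, 0],
     [1, 1, 0],
     [1, 0, 0]]
print(binary_relevance(Y))   # [[1, 0, 1, 1], [1, 1, 1, 0], [0, 0, 0, 0]]
print(label_powerset(Y)[0])  # [0, 1, 0, 2]
```

BR ignores correlations between labels, while LP keeps them at the cost of a class count that can grow with the number of distinct label combinations (up to 2^q for q labels).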

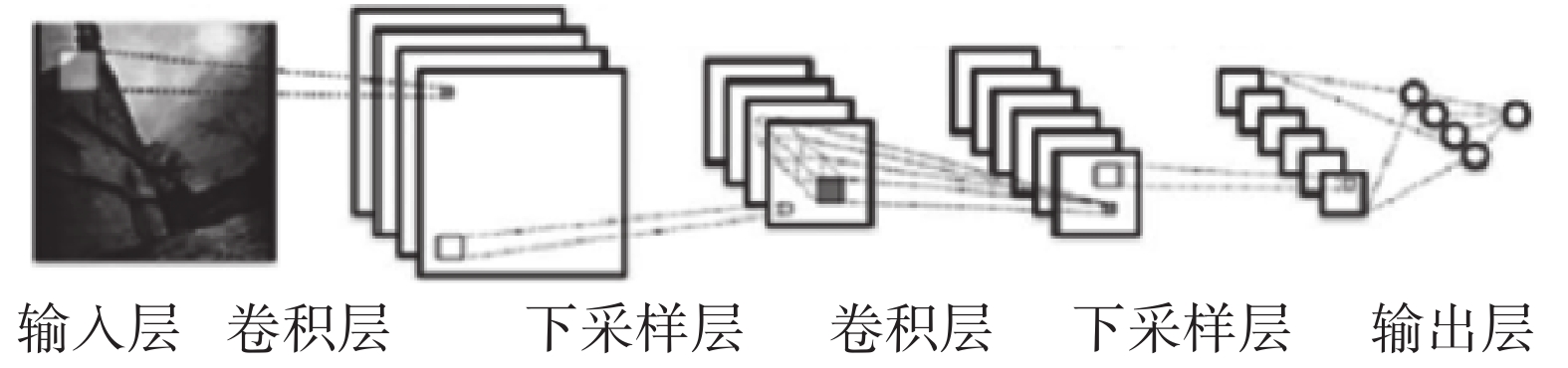

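1.2 Convolutional Neural Networks

A convolutional neural network (CNN) is a deep neural network model composed mainly of convolutional layers, pooling layers, and fully connected layers, as shown in Fig. 1. The convolutional layers extract image features, the pooling layers reduce dimensionality and provide a degree of invariance (for example, to small translations), and the fully connected layers act as the classifier. Convolutional and pooling layers usually appear as pairs repeated several times, but they can also be arranged flexibly to suit the task, as in AlexNet [13] and VGG [14].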

Fig. 1 Convolutional neural network structure

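Compared with traditional feature extraction methods, a convolutional neural network does not require features to be specified by hand in advance; instead, the network learns feature representations automatically from large amounts of data. Through multiple layers of nonlinear mappings, information is extracted layer by layer: the lowest layers learn filters from pixel-level raw data that capture local edges and textures; the middle layers combine these edge filters to describe different types of local features; and the highest layers describe global features of the whole image.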
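The lowest-level step described above (a filter responding to a local edge, followed by a nonlinearity and pooling) can be sketched in plain Python. The edge filter here is hand-picked purely for illustration, whereas a CNN would learn its filters from data; the image and all function names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """Stride-1, no-padding 2D cross-correlation (what CNN conv layers compute)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu(x: Matrix) -> Matrix:
    """Element-wise nonlinearity applied after the convolution."""
    return [[max(0.0, v) for v in row] for row in x]


def max_pool_2x2(x: Matrix) -> Matrix:
    """Non-overlapping 2x2 max pooling; an odd trailing row/column is dropped."""
    return [[max(x[i][j], x[i][j + 1], x[i + 1][j], x[i + 1][j + 1])
             for j in range(0, len(x[0]) - 1, 2)]
            for i in range(0, len(x) - 1, 2)]


# A 5x6 image: dark on the left, bright on the right (one vertical edge).
image = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
# Hand-picked vertical-edge filter (illustrative, not learned).
edge_filter = [[-1.0, 1.0],
               [-1.0, 1.0]]

feature_map = relu(conv2d_valid(image, edge_filter))  # 4x5, non-zero only at the edge
pooled = max_pool_2x2(feature_map)                     # 2x2, edge response survives
print(pooled)  # [[0.0, 2.0], [0.0, 2.0]]
```

Pooling halves each spatial dimension while keeping the strongest edge response, which is the dimensionality reduction and local invariance attributed to pooling layers in Section 1.2.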

1.3 Transfer Learning

The basic idea of transfer learning is to apply knowledge learned in one environment to learning tasks in a new environment.

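Transfer learning has been widely applied in many fields. For example, in document classification, Dai et al. [15] proposed a co-clustering method that transfers knowledge through word features shared across domains; in automated planning, Zhuo et al. [16] proposed a transfer learning framework, TRAMP, which transfers knowledge by building a structural mapping between the source and target domains in order to acquire action models for AI planning.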

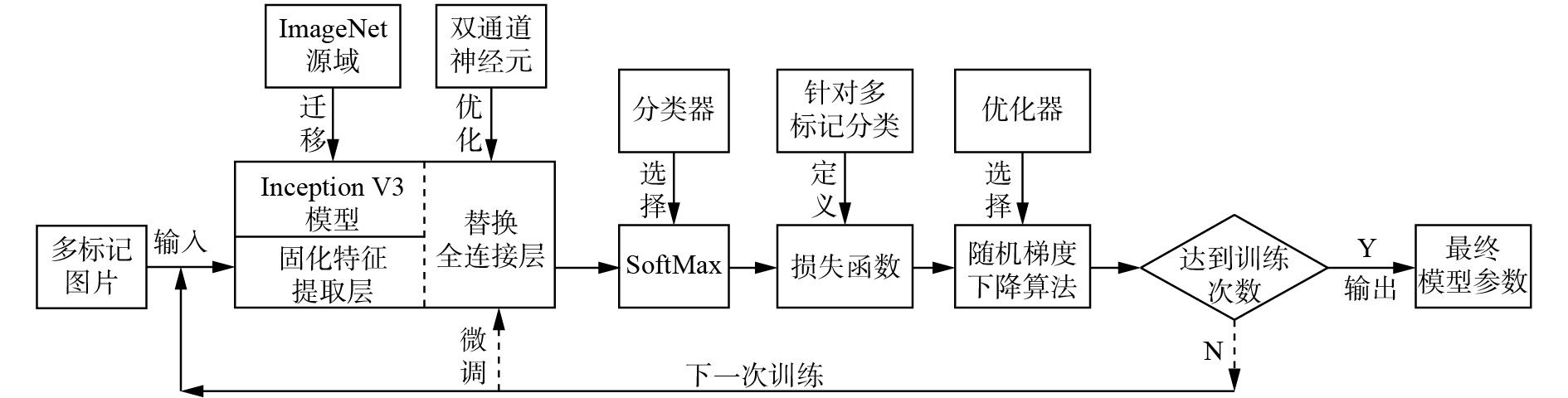

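2 Multi-label Classification Algorithm Based on an Improved CNN

2.1 Algorithm Framework

Because the low-level mechanisms by which images convey information are shared across image domains, transfer learning can be applied: a network model trained on a source domain shares its parameters with the target task, so that it also has a useful feature extraction capability in the target domain. This paper uses the Inception V3 [18] model trained on the ImageNet [17] dataset to extract image features. This model introduces the idea of "factorization into small convolutions", which splits a larger two-dimensional convolution kernel into two smaller one-dimensional kernels, for example, splitting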
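The saving from factorizing an n×n convolution into a 1×n convolution followed by an n×1 convolution can be checked with simple arithmetic. This sketch assumes the channel count stays the same through both factorized layers and ignores biases; the width of 192 channels is an arbitrary illustrative value, not one taken from the paper. Under these assumptions the factorized pair needs 2n/n² = 2/n of the original weights.

```python
def conv_weights(kh: int, kw: int, c_in: int, c_out: int) -> int:
    """Weights in one conv layer with a kh x kw kernel (biases ignored)."""
    return kh * kw * c_in * c_out


def factorized_weights(n: int, channels: int) -> int:
    """A 1 x n conv followed by an n x 1 conv, channel count kept constant (assumption)."""
    return conv_weights(1, n, channels, channels) + conv_weights(n, 1, channels, channels)


channels = 192  # illustrative channel width, not from the paper
for n in (3, 7):
    full = conv_weights(n, n, channels, channels)
    fact = factorized_weights(n, channels)
    print(f"{n}x{n}: {full} weights -> 1x{n} + {n}x1: {fact} ({fact / full:.3f} of the original)")
```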

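To further reduce the number of parameters in the fully connected layer, this paper modifies the fully connected layer of the Inception V3 model by introducing dual-channel neurons to optimize the network structure and, combining this with transfer learning, proposes the multi-label classification model ML_DCCNN. Finally, the output of the fully connected layer is fed into a SoftMax classifier to obtain the predicted probability of each label, and the multi-label classification loss is computed from these probabilities.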

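During backpropagation, the feature extraction layers of the Inception V3 model are retained, i.e., their weights and biases are frozen, and the original fully connected layer is replaced with a fully connected layer of 20 neurons whose initial weights and biases are set to 0. The learning rate is set to 0.001 and the batch size to 100. Stochastic gradient descent is then used to fine-tune the network parameters on the PASCAL Visual Object Classes Challenge (VOC) dataset [19] so that the model adapts to the new dataset. The overall procedure is shown in Fig. 2.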
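The fine-tuning setup above (frozen extractor, new zero-initialized 20-unit head, learning rate 0.001, batch size 100, SGD) can be sketched in NumPy. This is not the authors' TensorFlow 1.2.1 code: the 2048-dimensional inputs are synthetic stand-ins for the frozen Inception V3 pooled features, the loss is the one defined in Section 2.3 averaged over the batch, and all names are hypothetical. Because the extractor is frozen, only `W` and `b` are ever updated.

```python
import numpy as np

NUM_FEATURES = 2048   # width of Inception V3's pooled features
NUM_LABELS = 20       # PASCAL VOC classes
LEARNING_RATE = 0.001
BATCH_SIZE = 100


def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def target_distribution(y: np.ndarray) -> np.ndarray:
    """Spread probability mass 1/c+ uniformly over each image's positive labels."""
    return y / y.sum(axis=1, keepdims=True)


def batch_loss(W, b, feats, y) -> float:
    p = softmax(feats @ W + b)
    return float(-(target_distribution(y) * np.log(p)).sum(axis=1).mean())


def sgd_step(W, b, feats, y, lr=LEARNING_RATE):
    """Update only the new FC head; the frozen extractor contributes no parameters."""
    p = softmax(feats @ W + b)
    grad_z = (p - target_distribution(y)) / len(feats)  # gradient of the batch-mean loss
    return W - lr * feats.T @ grad_z, b - lr * grad_z.sum(axis=0)


rng = np.random.default_rng(0)
# Synthetic stand-ins for features produced by the frozen extractor.
feats = rng.standard_normal((BATCH_SIZE, NUM_FEATURES))
y = (rng.random((BATCH_SIZE, NUM_LABELS)) < 0.1).astype(float)
y[y.sum(axis=1) == 0, 0] = 1.0  # every image needs at least one positive label

W = np.zeros((NUM_FEATURES, NUM_LABELS))  # new head, zero-initialised
b = np.zeros(NUM_LABELS)
start = batch_loss(W, b, feats, y)        # equals log(20) at zero initialisation
for _ in range(10):
    W, b = sgd_step(W, b, feats, y)
print(start, batch_loss(W, b, feats, y))
```

Zero initialization is harmless here because the head is a single linear layer: the softmax gradient differs for each label, so the columns of `W` do not stay identical.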

Fig. 2 Framework of the multi-label classification algorithm based on an improved convolutional neural network

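In a convolutional neural network, operations such as convolution, pooling, and activation functions map the raw data into a hidden feature space, while the fully connected layers map the learned distributed feature representation into the label space; that is, the fully connected layers act as the "classifier" of the whole network. However, fully connected layers often contain a large number of parameters, which slows down the network. Although the fully convolutional network (FCN) [20] removes the fully connected layers and thus avoids their drawbacks, Zhang et al. [21] found that fully connected layers act as a "firewall" when a model's representation ability is transferred, helping to preserve that ability in the new task. Therefore, to reduce the number of network parameters while keeping the fully connected layer, this paper proposes the concept of the dual-channel neuron.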

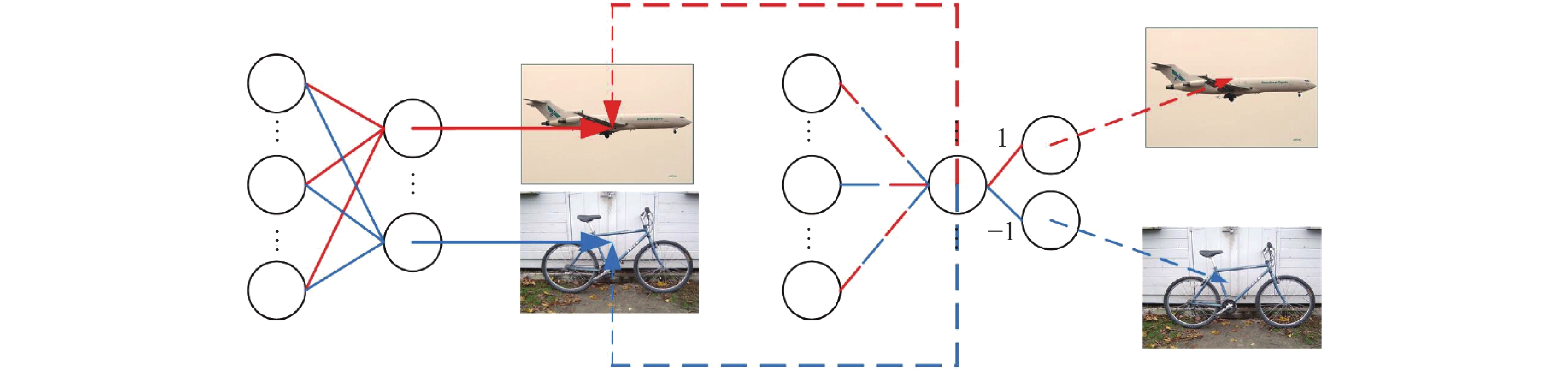

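2.2.1 Basic Structure

In this paper, a neuron in the fully connected layer that can receive feature information for only one label is called an ordinary neuron, as shown in Fig. 3(a). The number of neurons in the last fully connected layer equals the total number of labels in the classification problem; for example, if a dataset has a total of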

Fig. 3 Fully connected layer

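A neuron that can receive feature information for two labels is called a dual-channel neuron. A dual-channel neuron is equivalent to merging two ordinary neurons; it modifies the fully connected layer and reduces the number of parameters in that layer. To separate the two merged labels after the feature information has been received, each dual-channel neuron is followed by two further neurons representing the corresponding labels, and the weights connecting it to these two neurons are fixed at 1 and −1, respectively, as shown in Fig. 3(b).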
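The parameter saving can be made concrete with a small counting sketch. Because the variable definitions after Eq. (1) below are missing from this text, the interpretation here is an assumption: m is the number of inputs to the layer (plus one bias), d the number of dual-channel neurons, e the number of remaining ordinary neurons, and n = 2d + e the number of labels, with the 2d fixed unpacking weights counted as in Eq. (1). The input width of 2048 is illustrative.

```python
def ordinary_fc_params(m: int, n: int) -> int:
    """Fig. 3(a): m inputs plus a bias, fully connected to n label neurons."""
    return (m + 1) * n


def dual_channel_fc_params(m: int, d: int, e: int) -> int:
    """Fig. 3(b): d dual-channel + e ordinary neurons, plus 2 unpacking weights
    (fixed at 1 and -1) per dual-channel neuron, counted as in Eq. (1)."""
    return (m + 1) * (d + e) + 2 * d


def param_ratio(m: int, d: int, e: int) -> float:
    n = 2 * d + e  # assumption: each dual-channel neuron carries two labels
    return dual_channel_fc_params(m, d, e) / ordinary_fc_params(m, n)


m = 2048  # illustrative input width (Inception V3 pooled features)
for d in (0, 5, 10):  # 20 labels in total, as in PASCAL VOC
    e = 20 - 2 * d
    # The ratio falls from 1.0 toward 1/2 as more labels are paired.
    print(d, e, round(param_ratio(m, d, e), 4))
```

Under this reading the ratio equals 1 when no labels are paired and approaches 1/2 from above when all labels are paired, matching the bounds stated in Eq. (1).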

In Fig. 3(a), suppose the number of class labels is

In Fig. 3(b), suppose the fully connected layer has

| $\frac{1}{2} \leqslant \frac{{\left( {m + 1} \right)\left( {d + e} \right) + 2d}}{{\left( {m + 1} \right)n}} \leqslant 1$ | (1) |

where

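Packing and unpacking are the core ideas of the dual-channel neuron. Packing combines two labels in one neuron: each neuron in the last fully connected layer can represent two labels and receive feature information for both. For example, if the labels airplane and bicycle are packed together and output by a single neuron, the weights of that neuron respond only to airplane and bicycle features. With a single output neuron, however, it is impossible to tell whether the output refers to an airplane or a bicycle, so an unpacking step is needed, in which the neuron "splits" into two neurons, as shown in Fig. 4.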

Fig. 4 Packing and unpacking diagram

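The left side of Fig. 4 shows the neurons of an ordinary fully connected layer, each sensitive to the features of only one label: the upper neuron responds only to airplane features and the lower neuron only to bicycle features. The right side of Fig. 4 uses a dual-channel neuron that is sensitive to the features of two classes, here both airplane and bicycle. After the airplane and bicycle features have been extracted, the neuron splits into two neurons representing the corresponding labels: the one connected with weight 1 represents airplane, and the one connected with weight −1 represents bicycle.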
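The packing and unpacking steps can be sketched as a NumPy forward pass. In this sketch each of the d trainable neurons produces one score z for a label pair, and the fixed weights 1 and −1 turn it into the two logits (z, −z) that are passed on to the SoftMax classifier. The pairing below is hypothetical and for illustration only (the paper's actual pairing is given in Table 1), and the function name is not from the paper.

```python
import numpy as np


def dual_channel_forward(h: np.ndarray, W: np.ndarray, b: np.ndarray) -> np.ndarray:
    """h: (batch, m) features; W: (m, d); b: (d,).

    Packing: each of the d neurons produces one score z_k for a label pair.
    Unpacking: fixed weights 1 and -1 turn z_k into logits (z_k, -z_k)."""
    z = h @ W + b                                            # (batch, d)
    unpack = np.array([1.0, -1.0])                           # fixed, never trained
    return (z[:, :, None] * unpack).reshape(h.shape[0], -1)  # (batch, 2d)


# Hypothetical pairing for illustration only.
pairs = [("aeroplane", "bicycle"), ("bird", "boat")]
labels = [name for pair in pairs for name in pair]

h = np.array([[1.0, 2.0]])        # one image, m = 2 features
W = np.array([[1.0, 0.0],
              [0.5, -1.0]])       # m x d with d = 2
b = np.zeros(2)
logits = dual_channel_forward(h, W, b)
print(dict(zip(labels, logits[0])))  # aeroplane and boat positive, bicycle and bird negative
```

If the two unpacked neurons carry no bias of their own (as assumed here), the logits of a pair always sum to zero, so raising the score of one label lowers the score of its partner; this is worth keeping in mind when deciding which labels to pair.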

2.3 Loss Function

Let

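The SoftMax classifier can handle not only single-label but also multi-label classification. In this paper, the output of the last fully connected layer is fed into the SoftMax classifier to obtain the probability that an image contains each label; for example, the probability that image $x_i$ contains label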

| ${p_{ij}}{\rm{ = }}\frac{{\exp ({f_j}({{{x}}_i}))}}{{\sum\limits_{k = 1}^c {\exp ({f_k}({{{x}}_i}))} }}$ | (2) |

where $f_j(x_i)$ denotes the output of the last fully connected layer for image $x_i$ and label

| $J = - \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^q {\overline {{p_{ij}}} \log ({p_{ij}})} } $ | (3) |

where

| $\overline {{p_{ij}}} {\rm{ = }}\left\{ {\begin{array}{*{20}{c}} {\displaystyle\frac{1}{{{c_ + }}}},&{ {{y_{ij}} = 1} } \\ 0,&{ {{y_{ij}} = 0} } \end{array}} \right.$ | (4) |

From Eqs. (3) and (4), it follows that:

| $J = - \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^{{c_ + }} {\frac{1}{{{c_ + }}}\log ({p_{ij}})} } $ | (5) |

where
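The step from Eq. (3) to Eq. (5) can be checked numerically with a plain-Python sketch: with the target of Eq. (4), the terms for negative labels vanish and the remaining terms each carry the weight 1/c+. The function names are hypothetical, and the per-sample losses are summed over images to give J.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Eq. (2), with the max subtracted for numerical stability."""
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [x / total for x in exps]


def target(y: Sequence[int]) -> List[float]:
    """Eq. (4): 1/c+ on each positive label, 0 elsewhere."""
    c_pos = sum(y)
    return [yj / c_pos for yj in y]


def loss_eq3(logits: Sequence[float], y: Sequence[int]) -> float:
    """One image's term of Eq. (3): cross-entropy summed over all labels."""
    return -sum(t * math.log(p) for t, p in zip(target(y), softmax(logits)))


def loss_eq5(logits: Sequence[float], y: Sequence[int]) -> float:
    """One image's term of Eq. (5): average -log p over the positive labels only."""
    c_pos = sum(y)
    return -sum(math.log(p) for p, yj in zip(softmax(logits), y) if yj == 1) / c_pos


def total_loss(batch_logits, batch_y) -> float:
    """J: sum of the per-image losses over the n images."""
    return sum(loss_eq5(z, y) for z, y in zip(batch_logits, batch_y))


logits, y = [2.0, 0.5, 1.0, -1.0], [1, 0, 1, 0]
print(loss_eq3(logits, y), loss_eq5(logits, y))  # identical up to rounding
```

Because this is a cross-entropy against a soft target, an image with c+ positive labels has a per-image loss bounded below by log c+ (the entropy of the target), so J does not fall to zero on multi-label images.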

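The experiments were run on a Windows PC with an Intel i5-3210M processor, and the convolutional neural network was implemented in TensorFlow 1.2.1. Two multi-label datasets were used, PASCAL VOC2007 and PASCAL VOC2012, each with 20 class labels. PASCAL VOC2007 contains 9,963 images, of which 5,011 are in the train/val set and 4,952 in the test set; PASCAL VOC2012 contains 33,260 images, of which 17,125 are in the train/val set and 16,135 in the test set.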

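To verify that dual-channel neurons are usable, we compared the classification performance of an ordinary fully connected layer with that of a fully connected layer using dual-channel neurons; the pairwise label merging used for the dual-channel neurons is given in Table 1. Table 2 shows the SoftMax classifier outputs after 2,000 training steps for one multi-label image from the PASCAL VOC dataset (Fig. 5), using an ordinary fully connected layer and a fully connected layer with 10 dual-channel neurons, respectively. Here FC denotes the ordinary fully connected layer, DC (Dual_Channel) denotes the fully connected layer with dual-channel neurons, and GT denotes the ground truth. The DC used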

Fig. 5 Multi-label image

Tab. 1 Label merging scheme

Tab. 2 Comparison of classification results of the two fully connected layers

Tab. 3 Comparison of the average classification performance of the two fully connected layers

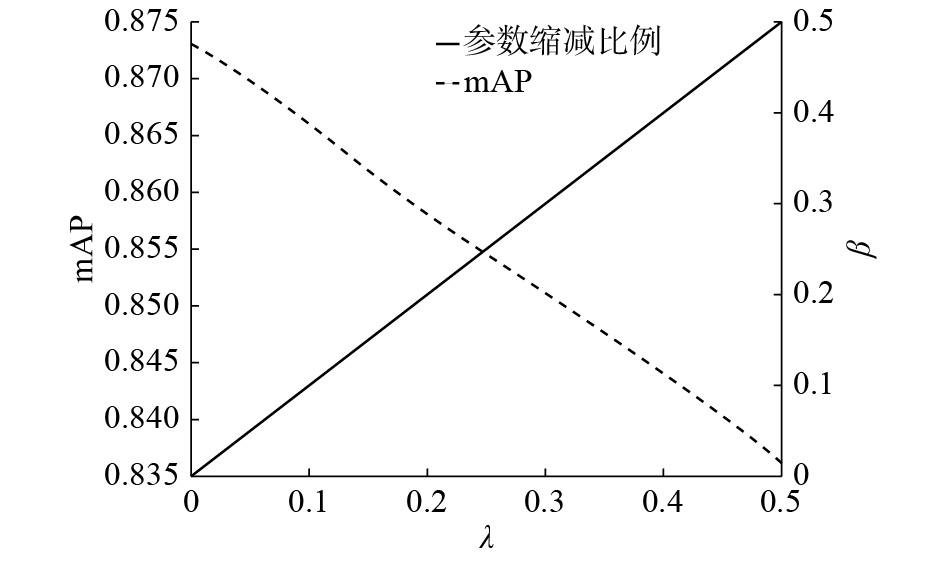

To show how the number of dual-channel neurons affects classification performance, we compared on the PASCAL VOC2007 dataset the number of dual-channel neurons

Tab. 4 AP on the PASCAL VOC2007 dataset for different values of d

Fig. 6 Proportion of dual-channel neurons

To evaluate the classification performance of the ML_DCCNN model, we ran experiments on PASCAL VOC2007 and PASCAL VOC2012, comparing ML_DCCNN with the ordinary fully connected model CNN-SoftMax, the traditional multi-label classification algorithms INRIA [22], FV [23], and GS-MKL [24], and the CNN-based multi-label classification models PRE-1000C [25] and HCP-1000 [26]. The evaluation metric is average precision (AP), and the number of dual-channel neurons

Tab. 5 AP of different classification algorithms on the PASCAL VOC2007 dataset

Tab. 6 AP of different classification algorithms on the PASCAL VOC2012 dataset
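The AP metric used in Tables 5 and 6 can be sketched in plain Python as the non-interpolated average of the precision at the rank of each positive image, computed per class and then averaged into mAP. This is an assumption-laden sketch: the official PASCAL VOC development kit uses an interpolated variant (11-point interpolation for VOC2007), so values from this code can differ slightly from reported results. The function names are hypothetical.

```python
from typing import List, Sequence


def average_precision(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Non-interpolated AP for one class: mean precision at the rank of each positive."""
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions) if precisions else 0.0


def mean_average_precision(score_rows: List[List[float]], label_rows: List[List[int]]) -> float:
    """mAP: average the per-class APs (rows are images, columns are classes)."""
    num_classes = len(score_rows[0])
    aps = [average_precision([r[j] for r in score_rows], [r[j] for r in label_rows])
           for j in range(num_classes)]
    return sum(aps) / num_classes


print(average_precision([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 0]))  # (1 + 2/3) / 2
```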

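In summary, the experiments show that dual-channel neurons can reduce the parameters of the fully connected layer by up to about half (Eq. (1)). Because the fully connected layer's parameters are often the only parameters trained during transfer learning, this reduction amounts, to a large extent, to a reduction in the trainable parameters of the whole model. Although dual-channel neurons incur some loss of accuracy, overall performance remains within an acceptable range. Dual-channel neurons thus offer a choice among different trade-offs between parameter reduction and performance, which adds some flexibility to the network model.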

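4 Conclusion

This paper proposes a multi-label classification method based on convolutional neural networks, defines a loss function for multi-label classification, and validates the method on two multi-label datasets, PASCAL VOC2007 and PASCAL VOC2012. Compared with previous methods, the proposed method, which combines transfer learning with dual-channel neurons, reduces the number of network parameters and saves computational resources while maintaining acceptable accuracy. Given the current emphasis on balancing accuracy against computational cost, the method is well suited to practical use. However, owing to limitations in data and hardware, we did not run further experiments on the performance of the classification algorithm under label-correlation constraints. Future work will therefore focus on using deep learning models to capture the dependencies among labels and on training multi-label convolutional neural networks under label-dependency constraints.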

[1] TROHIDIS K, TSOUMAKAS G, KALLIRIS G, et al. Multilabel classification of music into emotions[C]//Proceedings of 2008 International Conference on Music Information Retrieval (ISMIR 2008). Philadelphia, USA, 2008: 325–330.

[2] WU Baoyuan, LYU S, HU Baogang, et al. Multi-label learning with missing labels for image annotation and facial action unit recognition[J]. Pattern recognition, 2015, 48(7): 2279–2289. DOI:10.1016/j.patcog.2015.01.022

[3] JIANG J Q, MCQUAY L J. Predicting protein function by multi-label correlated semi-supervised learning[J]. IEEE/ACM transactions on computational biology and bioinformatics, 2012, 9(4): 1059–1069. DOI:10.1109/TCBB.2011.156

[4] OZONAT K, YOUNG D. Towards a universal marketplace over the web: statistical multi-label classification of service provider forms with simulated annealing[C]//Proceedings of the 15th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. Paris, France, 2009: 1295–1304.

[5] GONG Y, JIA Y, LEUNG T, et al. Deep convolutional ranking for multilabel image annotation[C]//2nd International Conference on Learning Representations (ICLR 2014). Banff, Canada, 2014: 1312–1320.

[6] ZHANG Minling, ZHOU Zhihua. A review on multi-label learning algorithms[J]. IEEE transactions on knowledge and data engineering, 2014, 26(8): 1819–1837. DOI:10.1109/TKDE.2013.39

[7] LUACES O, DÍEZ J, BARRANQUERO J, et al. Binary relevance efficacy for multilabel classification[J]. Progress in artificial intelligence, 2012, 1(4): 303–313. DOI:10.1007/s13748-012-0030-x

[8] READ J, PFAHRINGER B, HOLMES G. Multi-label classification using ensembles of pruned sets[C]//ICDM'08: Eighth IEEE International Conference on Data Mining. Pisa, Italy, 2008: 995–1000.

[9] WAN Shupeng, XU Jianhua. A multi-label classification algorithm based on triple class support vector machine[C]//Proceedings of 2007 International Conference on Wavelet Analysis and Pattern Recognition. Beijing, China, 2008: 1447–1452.

[10] ZHANG Minling. An improved multi-label lazy learning approach[J]. Journal of computer research and development, 2012, 49(11): 2271–2282. (in Chinese)

[11] ZHANG Minling. ML-RBF: RBF neural networks for multi-label learning[J]. Neural processing letters, 2009, 29(2): 61–74. DOI:10.1007/s11063-009-9095-3

[12] WANG Jiang, YANG Yi, MAO Junhua, et al. CNN-RNN: a unified framework for multi-label image classification[C]//Proceedings of 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA, 2016: 2285–2294.

[13] KRIZHEVSKY A, SUTSKEVER I, HINTON G E. ImageNet classification with deep convolutional neural networks[C]//Proceedings of the 25th International Conference on Neural Information Processing Systems. Lake Tahoe, USA, 2012: 1097–1105.

[14] SIMONYAN K, ZISSERMAN A. Very deep convolutional networks for large-scale image recognition[C]//3rd International Conference on Learning Representations (ICLR 2015). San Diego, USA, 2015: 1409–1422.

[15] DAI Wenyuan, XUE Guirong, YANG Qiang, et al. Co-clustering based classification for out-of-domain documents[C]//Proceedings of the 13th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. San Jose, USA, 2007: 210–219.

[16] ZHUO H H, YANG Qiang. Action-model acquisition for planning via transfer learning[J]. Artificial intelligence, 2014, 212: 80–103. DOI:10.1016/j.artint.2014.03.004

[17] DENG Jia, DONG Wei, SOCHER R, et al. ImageNet: a large-scale hierarchical image database[C]//Proceedings of 2009 IEEE Conference on Computer Vision and Pattern Recognition. Miami, USA, 2009: 248–255.

[18] SZEGEDY C, VANHOUCKE V, IOFFE S, et al. Rethinking the inception architecture for computer vision[C]//Proceedings of 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA, 2016: 2818–2826.

[19] EVERINGHAM M, VAN GOOL L, WILLIAMS C K I, et al. The PASCAL visual object classes (VOC) challenge[J]. International journal of computer vision, 2010, 88(2): 303–338. DOI:10.1007/s11263-009-0275-4

[20] SHELHAMER E, LONG J, DARRELL T. Fully convolutional networks for semantic segmentation[J]. IEEE transactions on pattern analysis and machine intelligence, 2017, 39(4): 640–651. DOI:10.1109/TPAMI.2016.2572683

[21] ZHANG Chenlin, LUO Jianhao, WEI Xiushen, et al. In defense of fully connected layers in visual representation transfer[C]//Proceedings of the 18th Pacific-Rim Conference on Multimedia on Advances in Multimedia Information Processing. Harbin, China, 2017: 807–817.

[22] HARZALLAH H, JURIE F, SCHMID C. Combining efficient object localization and image classification[C]//Proceedings of 2009 IEEE International Conference on Computer Vision. Kyoto, Japan, 2009: 237–244.

[23] PERRONNIN F, SÁNCHEZ J, MENSINK T. Improving the fisher kernel for large-scale image classification[C]//Proceedings of the 11th European Conference on Computer Vision. Heraklion, Greece, 2010: 143–156.

[24] YANG Jingjing, LI Yuanning, TIAN Yonghong, et al. Group-sensitive multiple kernel learning for object categorization[C]//Proceedings of 2009 IEEE International Conference on Computer Vision. Kyoto, Japan, 2009: 436–443.

[25] OQUAB M, BOTTOU L, LAPTEV I, et al. Learning and transferring mid-level image representations using convolutional neural networks[C]//Proceedings of 2014 IEEE Conference on Computer Vision and Pattern Recognition. Columbus, USA, 2014: 1717–1724.

[26] WEI Y, XIA W, LIN M, et al. HCP: a flexible CNN framework for multi-label image classification[J]. IEEE transactions on pattern analysis and machine intelligence, 2016, 38(9): 1901–1907.

2019, Vol. 14