粒子群优化算法是Kennedy等[1]在1995年提出的一种群体搜索的随机优化算法。由于PSO算法的参数少而且易操作,所以在实际问题中得到了广泛的应用。对PSO算法的研究主要有以下几个方面:1)对PSO算法自身参数的改进,这方面的工作主要关于惯性权重的自适应改进[2-9]和对学习因子的改进[9-11];2)将其他进化算法与PSO相结合,比如,遗传算法与PSO算法结合[12-13],差分进化算法与PSO算法结合[14],模拟退火算法与PSO算法结合[15];3)在PSO算法引入一些改进PSO算法性能的其他算子,比如,把高斯扰动策略加入粒子群优化算法中[16],把均匀设计方法引入到粒子群优化算法中[17],把变异策略[18]、精英策略[19]、局部搜索策略[20]及邻域搜索策略[21]引入到粒子群优化算法中;4)PSO算法的理论分析,比如,基于线性系统理论研究PSO收敛性[22-25],基于随机过程研究PSO收敛性[26],但是粒子群算法不依概率收敛[26]。本文给出了一种依概率1收敛的PSO算法,该算法在标准粒子群优化算法实施位置更新后按一定概率对较好的粒子实施具有开发能力的变异操作,对较差的粒子实施具有探索能力的变异操作,从而平衡了算法的全局搜索能力和局部搜索能力,提高了算法的效率,另外,证明了该算法依概率1收敛到ε-最优解。实验结果表明,提出的算法能够提高全局搜索能力,算法的收敛速度明显加快。

1 标准PSO算法标准粒子群算法中,粒子群由Np个粒子组成,在时刻k,第i个粒子在d维空间中速度和位置的更新公式为

| $ v_{id}^{k + 1} = \omega v_{id}^k + {c_1}{r_1}\left( {p_{id}^k - x_{id}^k} \right) + {c_2}{r_2}\left( {p_{gd}^k - x_{id}^k} \right) $ | (1) |

| $ x_{id}^{k + 1} = x_{id}^k + v_{id}^{k + 1} $ | (2) |

式中:i=1, 2, …, Np,d=1, 2, …, D,D为决策变量的维数,ω为惯性权重,

| $ {\omega _k} = \left( {{\omega _{{\rm{start}}}} - {\omega _{{\rm{end}}}}} \right)\left( {\frac{{{K_{\max }} - k}}{{{K_{\max }}}}} \right) + {\omega _{{\rm{end}}}} $ | (3) |

式中:ωstart、ωend分别为最初和最终的惯性权重,Kmax是最大迭代步。

2 改进的PSO算法提高PSO算法性能的有效方法之一就是平衡算法的探索能力和开发能力。我们的目的是对粒子所经历的最好位置进行操作,一方面能够使其跳出局部最优;另一方面使其具有一定的局部搜索能力。为此,给出两个变异算子:

| $ \bar P_{id}^k = P_{id}^k \cdot {\rho _1}N\left( {0,{\sigma ^2}} \right) $ | (4) |

| $ \bar P_{id}^k = P_{id}^k + {\rho _2}N\left( {0,{\sigma ^2}} \right) $ | (5) |

式中:ρ1>1和ρ2>1是常数,N(0, σ2)是具有时变标准差σ的高斯分布的随机变量,σ表示为

| $ \sigma = {\sigma _{\max }} - \left( {{\sigma _{\max }} - {\sigma _{\min }}} \right)\frac{k}{{{K_{\max }}}} $ | (6) |

式中:σmax和σmin是标准差σ的最终和初始值,k是当前迭代步,Kmax是最大迭代步。因为ρ1N(0, σ2)的值比1大或比1小,又因为

如果每次都对

| $ {f_{{\rm{ave}}}} = \frac{1}{{{N_p}}}\sum\limits_{j = 1}^{{N_p}} {f\left( {\mathit{\boldsymbol{P}}_j^k} \right)} , $ | (7) |

| $ \mu = \left\{ \begin{array}{l} \frac{{\alpha \left( {{f_{{\rm{ave}}}} - f\left( {\mathit{\boldsymbol{P}}_i^k} \right)} \right)}}{{{f_{{\rm{ave}}}} - f\left( {\mathit{\boldsymbol{P}}_g^k} \right)}},\;\;\;\;f\left( {\mathit{\boldsymbol{P}}_i^k} \right) \le {f_{{\rm{ave}}}}\\ \frac{{\alpha \left( {f\left( {P_i^k} \right) - {f_{{\rm{ave}}}}} \right)}}{{{f_{\max }} - {f_{{\rm{ave}}}}}},\;\;\;\;\;f\left( {\mathit{\boldsymbol{P}}_i^k} \right) > {f_{{\rm{ave}}}} \end{array} \right. $ | (8) |

式中:fmax=

| $ \alpha = 1 - 0.8\frac{k}{{{K_{\max }}}} $ | (9) |

由式(8)知,对适应值较好的粒子实施局部搜索的概率较大,而对适应值较差的粒子实施全局搜索的概率较大。由于当算法迭代到后期,粒子比较集中,从而导致

位置与速度越界处理:

| $ x_{id}^{k + 1} = \left\{ \begin{array}{l} x_{id}^{k + 1},\;\;\;\;\;{l_d} \le x_{id}^{k + 1} \le {u_d}\\ {l_d} + {\rm{rand}}\left( \; \right) \cdot \left( {{u_d} - {l_d}} \right),\;\;\;\;\;其他 \end{array} \right. $ | (10) |

| $ v_{id}^{k + 1} = \left\{ \begin{array}{l} v_{id}^{k + 1},\;\;\;{v_{\min }} \le v_{id}^{k + 1} \le {v_{\max }}\\ {v_{\min }},\;\;\;\;v_{id}^{k + 1} < {v_{\min }}\\ {v_{\max }},\;\;\;\;v_{id}^{k + 1} > {v_{\max }} \end{array} \right. $ | (11) |

式中:

改进PSO算法的流程如下:

1) 设置参数,随机初始化粒子的位置和速度,计算各粒子的适应度,确定每个粒子的经历过的最优位置及全局最优位置,令k=1;

2)按式(3)计算惯性权重,并按式(1)和式(2)进行速度与位置更新;

3) 按式(10)和式(11)进行越界处理;

4) 对粒子i(i=1, 2, …, Np)计算其适应值,更新其经历过的最优位置

5)若

6)若

7)令

| $ \mathit{\boldsymbol{P}}_i^{k + 1}\left( {{\rm{new}}} \right) = \left\{ \begin{array}{l} \mathit{\boldsymbol{\bar P}}_i^{k + 1},\;\;f\left( {\mathit{\boldsymbol{\bar P}}_i^{k + 1}} \right) < f\left( {\mathit{\boldsymbol{P}}_i^{k + 1}} \right)\\ \mathit{\boldsymbol{P}}_i^{k + 1},\;\;f\left( {\mathit{\boldsymbol{\bar P}}_i^{k + 1}} \right) \ge f\left( {\mathit{\boldsymbol{P}}_i^{k + 1}} \right) \end{array} \right.; $ |

8)令

9)判断是否满足终止条件(本文采用最大迭代步),若满足则输出结果,否则令k=k+1,转2)。

3 收敛性分析本文考虑如下全局优化问题:

| $ \begin{array}{*{20}{c}} {\min {\rm{imize}}\;f\left( x \right)}\\ {{\rm{subject}}\;{\rm{to}}\;x \in S} \end{array} $ |

式中:f:S→R是实值函数, S={xmin≤x≤xmax},xmin=(l1, l2, …, lD),xmax=(u1, u2, …, uD)。

定义1 设f(x)是实值函数,x*∈S,若对任意x∈S,

| $ f\left( {{x^ * }} \right) \le f\left( x \right) $ |

则称x*为S的全局最优解。

定义2 对任意ε>0,设

| $ {B_\varepsilon } = \left\{ {x \in S\left| {\left| {f\left( x \right) - f\left( {{x^ * }} \right)} \right| < \varepsilon } \right|} \right\} $ |

则称Bε为ε最优解集,x∈Bε被称为ε-最优解。

本文假设μ(Bε)>0, 其中μ为勒贝格测度。

由定义1和2得:

| $ x_{id}^{k + 1} = x_{id}^k + {\omega _k}v_{id}^k + {c_1}{r_1}\left( {p_{id}^k - x_{id}^k} \right) + {c_2}{r_2}\left( {p_{gd}^k - x_{id}^k} \right) $ | (12) |

由式(12)可看出,在第k+1代粒子群中第i个粒子的状态由

定义3 设

定义4 设Ω={ω|ω=(ω1, ω2, ω3, …), Φk∈ΦNp,

由改进算法可知,

引理1 对

引理2 对

证明 如果

引理3

| $ {\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \in {\mathit{\boldsymbol{B}}_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) \ge \rho $ |

式中pr表示概率测度。

证明 如果

| $ {\rm{pr}}\left( {\chi \left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1}} \right) = 1} \right) = \alpha ,{\rm{pr}}\left( {\chi \left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1}} \right) = 0} \right) = 1 - \alpha $ |

于是

| $ \begin{array}{*{20}{c}} {{\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) \ge {\rm{pr}}\left( {\chi \left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1}} \right) = 1} \right) \cdot }\\ {{\rm{pr}}\left( {\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right)\left| {\chi \left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1}} \right) = 1} \right.} \right) = }\\ {\alpha \cdot {\rm{pr}}\left( {\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right)\left| {\chi \left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1}} \right) = 1} \right.} \right) = }\\ {\alpha \cdot {\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1}\left( {{\rm{new}}} \right) \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right)} \end{array} $ |

由引理2有

| $ {\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) \ge \alpha \cdot {\rm{pr}}\left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) $ |

由于

| $ g\left( {{x_1},{x_2}, \cdots ,{x_D}} \right) = {\left( {\frac{1}{{\sqrt {2{\rm{\pi }}} \sigma {\rho _2}}}} \right)^D}\exp \left( { - \sum\limits_{d = 1}^D {\frac{{{{\left( {{x_d} - p_{gd}^{k + 1}} \right)}^2}}}{{2{\sigma ^2}\rho _2^2}}} } \right) $ |

于是

| $ \begin{array}{*{20}{c}} {{\rm{pr}}\left( {\mathit{\boldsymbol{\bar P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) = }\\ {\int_{{B_\varepsilon }} {g\left( {{x_1},{x_2}, \cdots ,{x_D}} \right){\rm{d}}{x_1},{\rm{d}}{x_2}, \cdots ,{\rm{d}}{x_D}} \ge }\\ {{{\left( {\frac{1}{{\sqrt {2{\rm{ \mathsf{ π} }}} {\sigma _{\max }}{\rho _2}}}} \right)}^D}\exp \left( { - D\frac{{2{m^2}}}{{\sigma _{\max }^2\rho _2^2}}} \right)\mu \left( {{\mathit{\boldsymbol{B}}_\varepsilon }} \right)} \end{array} $ |

式中

令

| $ {\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \in {B_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_k} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) \ge \rho > 0。$ |

定理1 设(Φ1, Φ2, Φ3, …)∈Ω是由算法任意生成的状态序列,则序列{

| $ \mathop {\lim }\limits_{k \to \infty } {\rm{pr}}\left\{ {p_g^k \in {\mathit{\boldsymbol{B}}_\varepsilon }} \right\} = 1 $ |

证明 由算法可知,Φk+1只与Φk有关,所以Φ1, Φ2, Φ3, …是马尔可夫过程,由马尔可夫过程性质及引理1可知

| $ \begin{array}{*{20}{c}} {{\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \notin {\mathit{\boldsymbol{B}}_\varepsilon }} \right) = {\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^1 \notin {\mathit{\boldsymbol{B}}_\varepsilon }} \right) \cdot }\\ {\prod\limits_{t = 1}^k {{\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{t + 1} \notin {\mathit{\boldsymbol{B}}_\varepsilon }\left| {\mathit{\boldsymbol{P}}_g^t \notin {\mathit{\boldsymbol{B}}_\varepsilon }} \right.} \right)} \le }\\ {\prod\limits_{t = 1}^k {{\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{t + 1} \notin {\mathit{\boldsymbol{B}}_\varepsilon }\left| {\mathit{\boldsymbol{P}}_g^t \notin {\mathit{\boldsymbol{B}}_\varepsilon }} \right.} \right)} } \end{array} $ |

令

| $ \Delta = \left\{ {\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}\left| {{\mathit{\boldsymbol{P}}_g} \notin {\mathit{\boldsymbol{B}}_\varepsilon }} \right.} \right\} \subset {\mathit{\Phi }_{{N_p}}} $ |

则

| $ \begin{array}{*{20}{c}} {{\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{t + 1} \notin {\mathit{\boldsymbol{B}}_\varepsilon }\left| {\mathit{\boldsymbol{P}}_g^t \notin {\mathit{\boldsymbol{B}}_\varepsilon }} \right.} \right) \le }\\ {{\rm{pr}}\left( {\mathit{\boldsymbol{P}}_g^{k + 1} \notin {\mathit{\boldsymbol{B}}_\varepsilon }\left| {{\mathit{\boldsymbol{ \boldsymbol{\varPhi} }}_t} = \mathit{\boldsymbol{ \boldsymbol{\varPhi} }}} \right.} \right) = 1 - \rho } \end{array} $ |

于是pr

| $ \mathop {\lim }\limits_{k \to \infty } {\rm{pr}}\left\{ {p_g^k \in {\mathit{\boldsymbol{B}}_\varepsilon }} \right\} = 1 $ |

为了评价改进算法(简称,IPSO)的性能,我们选取文献[24]中的13个测试函数进行实验研究。前7个函数为高维单峰函数,后6个函数是高维多峰函数。在本文中13个问题的维数都取30。IPSO算法的实验结果与LDIWPSO[2]、CDIWPSO[3]、DAPSO[4]、和SSRDIWPSO[5]实验结果进行比较。所有算法的共同参数设置如下:ωstart=0.9,ωend=0.4,Np=30,c1=c2=2,vmin=0.5xmin,vmax=0.5xmax,Kmax=3 000,IPSO算法的其他参数设置如下:ρ1=2, ρ2=0.1, σmax=1, σmin=0.2。所有算法的程序都是由MATLAB2007实现,且每个实验独立运行30次,实验结果见表 1和表 2。

| 表 1 高维单峰函数的实验结果 Tab.1 Experimental results for unimodal functions |

| 表 2 高维多峰函数的实验结果 Tab.2 Experimental results for multimodal functions |

从表 1可以看出,除了测试函数F1、F5和F6外, IPSO算法与其他4种算法相比,在寻优能力和稳定性方面明显优于其他4种算法,特别是测试函数F3和F4,其他4种算法不能获得到最优解,而IPSO算法能够得到较理想的最优解,且稳定性也非常好。对于测试函数F1来说,IPSO算法不如SSRDIWPSO算法,但是优于其他3种算法。对于测试函数F5来说,IPSO算法的最好收敛值不如其他4种算法,但是对于平均收敛值和方差来说,IPSO算法优于其他4种算法。对于测试函数F6,IPSO算法与其他4种算法获得相同的结果。总之,对于高维单峰函数来说IPSO算法有一定优势,其原因如下:函数F1~F7都单峰函数,由于IPSO算法有较好的局部搜索能力,所以IPSO算法对于单峰函数具有一定优势。函数F1是非线性简单的单峰函数,所以大多数算法都能够找到最优解;函数F5是很难极小化的典型病态二次函数,由于其全局最优解与可达到的局部最优之间有一道狭窄的山谷,所以算法很难辨别搜索方向,找到全局最优解的机会很小,因此5种算法都没有找到全局最优解,而函数F7是含有随机变量的函数,由于目标函数不确定,找到非常理想的全局最优解也是较困难的。

从表 2可以看出,除了测试函数F12和F13外IPSO算法与其他4种算法相比,在寻优能力和稳定性方面明显优于其他4种算法,特别是测试函数F9和F11,其他4种算法不能获得较好最优解,而IPSO算法能够得到非常理想的最优解,且稳定性也非常好。对于测试函数F12来说,关于最好收敛值,IPSO算法不如SSRDIWPSO和CDIWPSO算法,但是优于其他两种算法,而关于最差收敛值、平均收敛值和方差,IPSO算法远好于其他4种算法。对于测试函数F13来说,对于最好收敛值IPSO算法不如SSRDIWPSO和CDIWPSO算法,对于平均收敛值,IPSO算法与SSRDIWPSO算法一样优于其他3种算法。总之,除了函数F8~F13外,对于其他函数IPSO算法都能够得到满意结果,其原因如下:函数F8~F13都是多峰函数且有较多局部最优解,由于IPSO算法按一定概率对适应值较差的粒子实行全局搜索,对于适应值较好的粒子实行局部搜索,所以IPSO算法对于解决多峰函数有一定优势。但是由于函数F8的全局最优解与局部最优相距较远,且有一定的欺骗性,导致算法朝着错误方向搜索,因此不易找到满意的全局最优解;函数F13含有大量深度不同的“坑”,导致算法陷入第一个坑后不易跳出来,因此也不易找到满意的全局最优解。

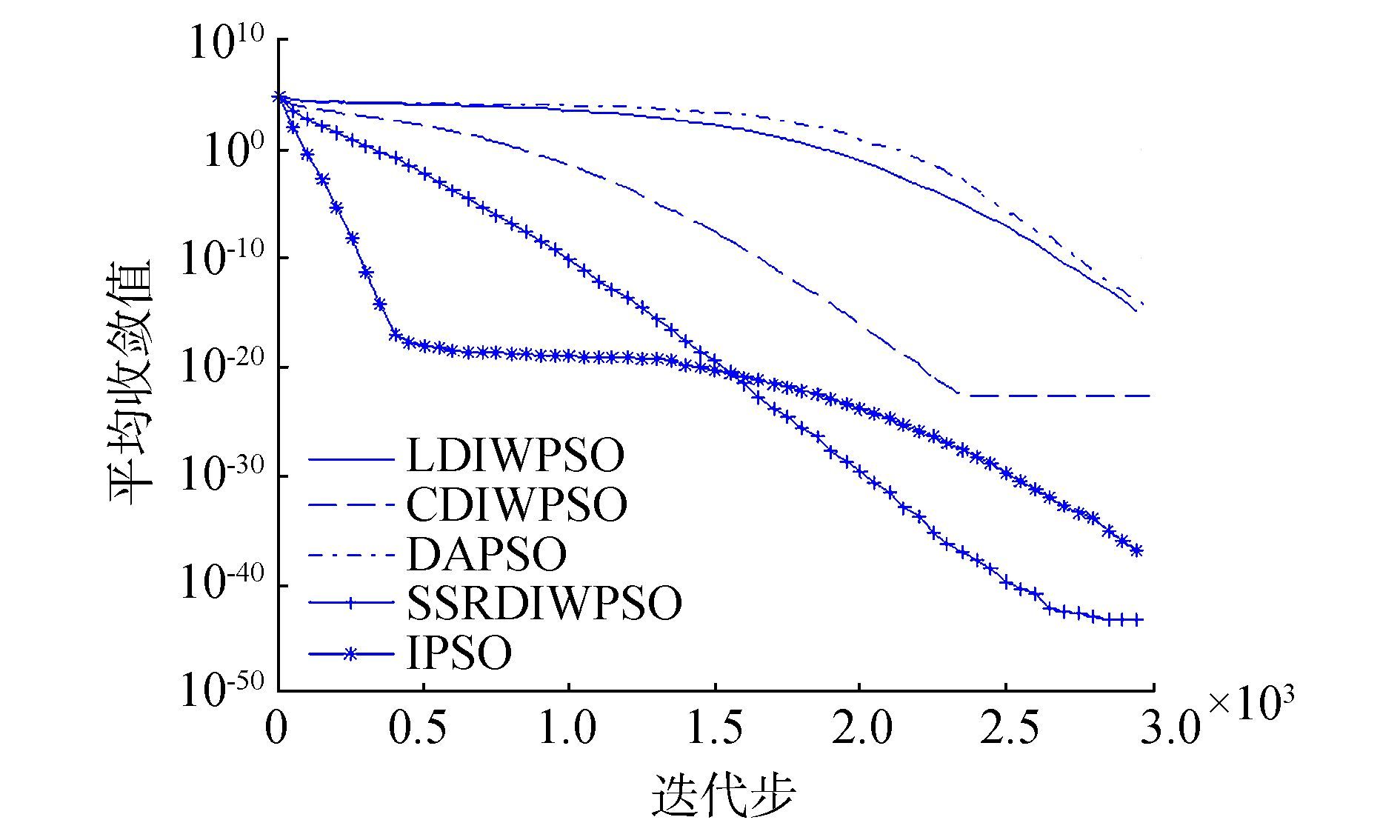

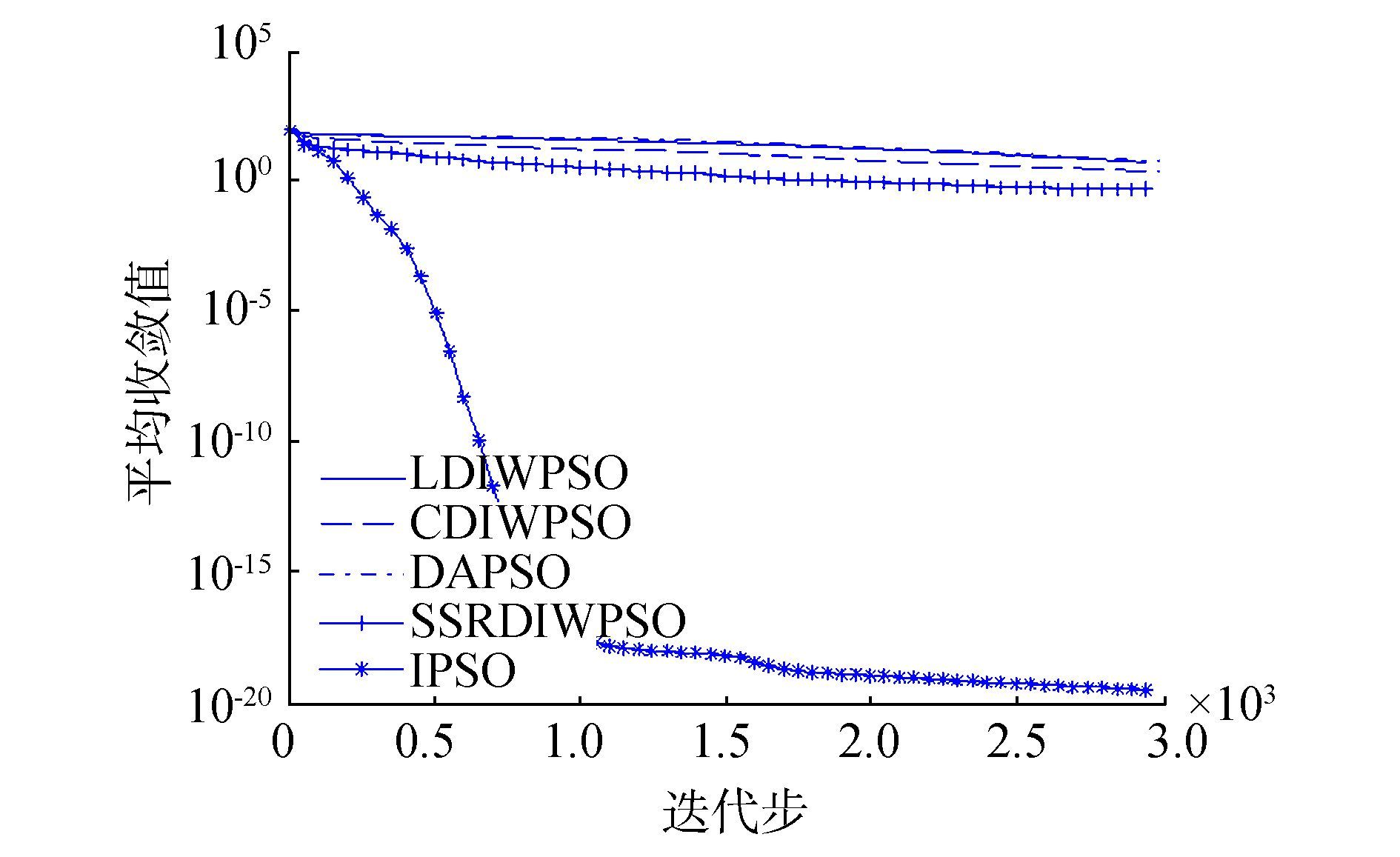

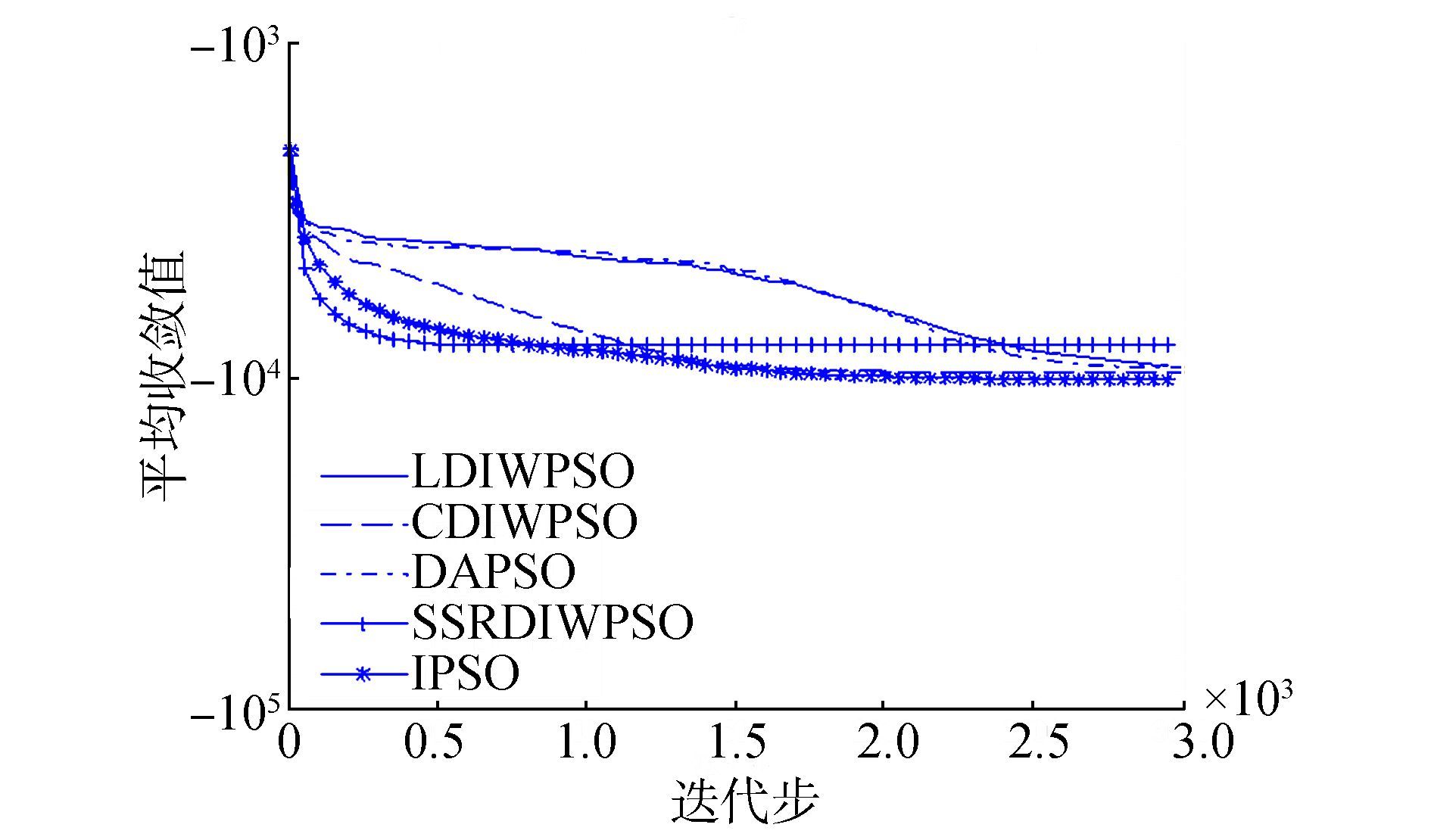

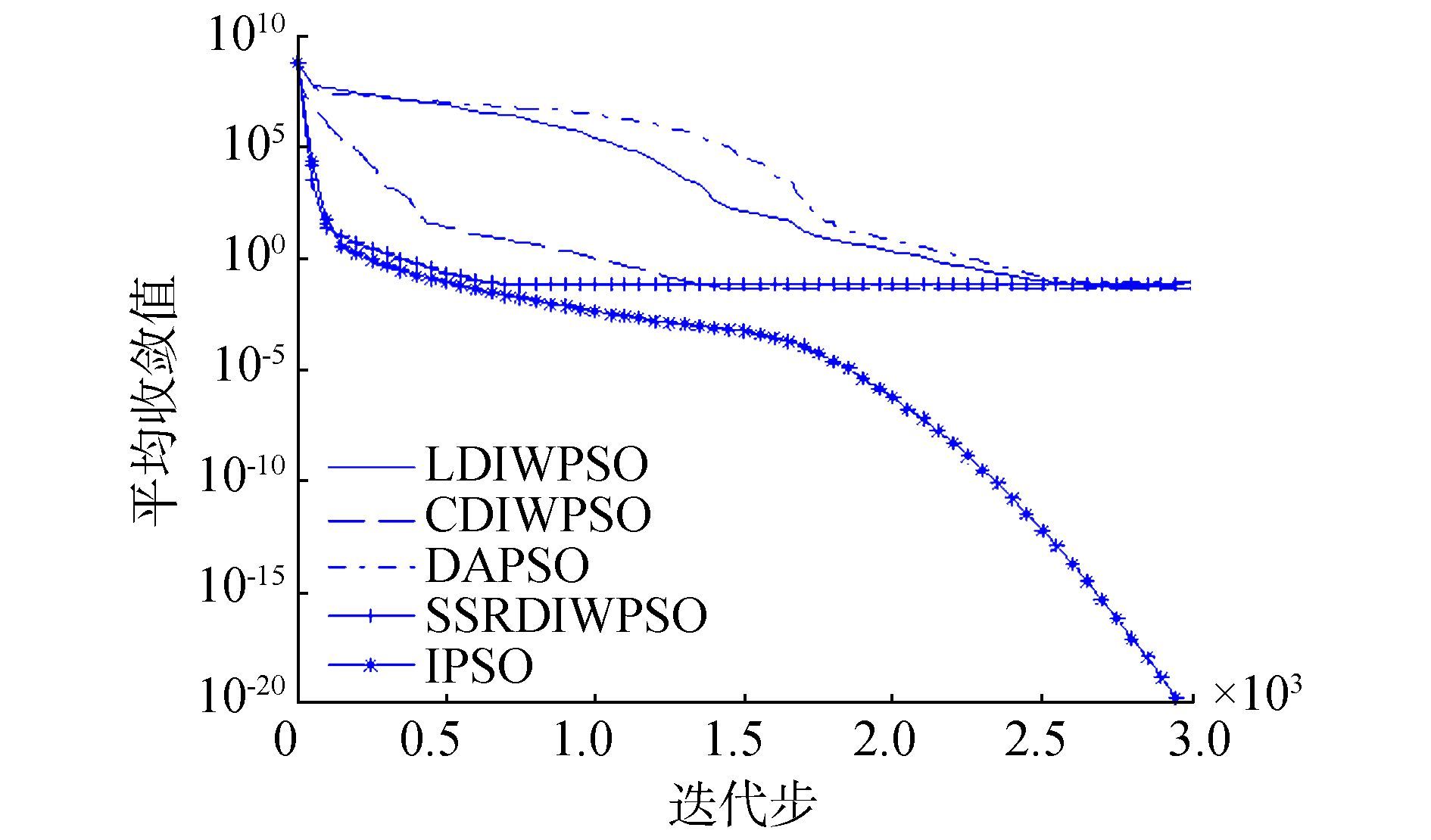

为了更清楚且直观地比较各种算法的收敛性,我们分别从高维单峰函数和高维多峰函数中选取3个函数进行收敛曲线分析,图 1~图 3分别表示测试函数F1、F4、和F6的收敛曲线,其中用100-100代替0。图 4~图 6分别表示测试函数F8、F10和F12的收敛曲线。

|

图 1 函数F1的收敛曲线 Fig.1 Convergence curve of function F1 |

|

图 2 函数F4的收敛曲线 Fig.2 Convergence curve of function F4 |

|

图 3 函数F6的收敛曲线 Fig.3 Convergence curve of function F6 |

从图 1~6中可以看出,对于函数F4、F10和F12,IPSO算法的收敛速度及求解精度明显优于其他4种算法,对于函数F6,虽然求解精度一样,但是IPSO算法的收敛速度明显比其他4种算法收敛快,对于函数F1,虽然收敛精度IPSO算法不如SSRDIWPSO算法,但是他较快收敛到人们比较满意精度,对于函数F8,IPSO算法的求解精度略优于其他4种算法。总之,IPSO算法有较好的收敛速度和求解精度。

|

图 4 函数F8的收敛曲线 Fig.4 Convergence curve of function F8 |

|

图 5 函数F10的收敛曲线 Fig.5 Convergence curve of function F10 |

|

图 6 函数F12的收敛曲线 Fig.6 Convergence curve of function F12 |

针对标准PSO算法不依概率收敛和易出现早熟收敛等缺点,提出了标准粒子群优化算法中增加了具有开发能力和探索能力两个变异算子,其目的是平衡算法的全局探索能力和局部搜索能力。并且从理论上证明所提出算法依概率1收敛到ε-最优解。数值结果表明,所提出的算法较大提高了全局寻优能力且具有较好的鲁棒性。在未来的工作中,将对变异算子中的参数设置进一步研究,以便提高算法收敛精度与收敛速度。

| [1] |

KENNEDY J, EBERHART R C. Particle swarm optimization[C]//IEEE International Conference on Neural Networks. Perth, Australia, 1995:1942-1948.

( 0) 0)

|

| [2] |

SHI Y H, EBERHART R C. Empirical study of particle swarm optimization[C]//IEEE International Conference on Evolutionary Computation. Washington, USA, 1999:1945-1950.

( 0) 0)

|

| [3] |

FENG Y, TENG G F A X, WANG Y, et al. Chaotic inertia weight in particle swarm optimization[C]//Proceedings of the 2nd international conference on innovative computing, information and control. Kumamoto, Japan, 2007:475.

( 0) 0)

|

| [4] |

SHEN X, CHI Z Y, CHEN J C. et al. Particle swarm optimization with dynamic adaptive inertia weight[C]//International conference on challenges in environmental science and computer engineering. Wuhan, China, 2010:287-290.

( 0) 0)

|

| [5] |

ADEWUMI A O, ARASOMWAN A M. An improved particle swarm optimizer based on swarm success rate for global optimization problems[J]. Journal of experimental and theoretical artificial intelligence, 2016, 28(3): 441-483. DOI:10.1080/0952813X.2014.971444 ( 0) 0)

|

| [6] |

PLUHACEK M, SENKERIK R, DAVENDRA D, et al. On the behavior and performance of chaos driven PSO algorithm with inertia weight[J]. Computers and mathematics with applications, 2013, 66: 122-134. DOI:10.1016/j.camwa.2013.01.016 ( 0) 0)

|

| [7] |

YANG C, GAO W, LIU N, et al. Low-discrepancy sequence initialized particle swarm optimization algorithm with high-order nonlinear time-varying inertia weight[J]. Applied soft computing, 2015, 29: 386-394. DOI:10.1016/j.asoc.2015.01.004 ( 0) 0)

|

| [8] |

郭建涛, 刘瑞杰, 陈新武. 用于跳频分量选取的修正适应度距离比粒子群算法[J]. 重庆邮电大学学报:自然科学版, 2015, 27(1): 26-30. GUO Jiantao, LIU Ruijie, CHEN Xinwu. Modified fitness-distance ratio based particle swarm optimizer for selection of frequency hopping components[J]. Journal of chongqing university of posts and telecommunications:natural science edition, 2015, 27(1): 26-30. (  0) 0)

|

| [9] |

ARDIZZON G, CAVAZZINI G, PAVESI G. Adaptive acceleration coefficients for a new search diversification strategy in particle swarm optimization algorithms[J]. Information sciences, 2015, 299: 337-378. DOI:10.1016/j.ins.2014.12.024 ( 0) 0)

|

| [10] |

姜建国, 田旻, 王向前, 等. 采用扰动加速因子的自适应粒子群优化算法[J]. 西安电子科技大学学报, 2012, 39(4): 74-79. JIANG Jianguo, TIAN Min, WANG Xiangqian, et al. Adaptive particle swarm optimization via disturbing acceleration coefficients[J]. Journal of xidian university, 2012, 39(4): 74-79. (  0) 0)

|

| [11] |

任凤鸣, 李丽娟. 改进的PSO算法中学习因子(c1, c2)取值的实验与分析[J]. 广东工业大学学报, 2008, 25(1): 86-89. REN Fengming, LI Lijuan. Experiment and analysis of the value selection of acceleration coefficients (c1, c2) in IPSO method[J]. Journal of Guangdong university of technology, 2008, 25(1): 86-89. (  0) 0)

|

| [12] |

GRIMACCIA F, MUSSETTA M, ZICH R. Genetical swarm optimization:self-adaptive hybrid evolutionary algorithm for electromagnetics[J]. IEEE transactions on antennas and propagation, 2007, 55(3): 781-785. DOI:10.1109/TAP.2007.891561 ( 0) 0)

|

| [13] |

熊伟丽, 徐保国, 吴晓鹏, 等. 带变异算子的改进粒子群算法研究[J]. 计算机工程与应用, 2006, 42(26): 1-3. XIONG Weili, XU Baoguo, WU Xiaopeng, et al. Study on the particle swarm optimization with mutation operator[J]. Computer engineering and applications, 2006, 42(26): 1-3. DOI:10.3321/j.issn:1002-8331.2006.26.001 (  0) 0)

|

| [14] |

段玉红, 高岳林. 基于差分演化的粒子群算法[J]. 计算机仿真, 2009, 26(6): 212-215. DUAN Yuhong, GAO Yuelin. A particle swarm optimization algorithm based on differential evolution[J]. Computer simulation, 2009, 26(6): 212-215. (  0) 0)

|

| [15] |

王丽芳, 曾建潮. 基于微粒群算法与模拟退火算法的协同进化方法[J]. 自动化学报, 2006, 32(4): 630-635. WANG Lifang, ZENG JianChao. A cooperative evolutionary algorithm based on particle swarm optimization and simulated annealing algorithm[J]. Acta automatica sinica, 2006, 32(4): 630-635. (  0) 0)

|

| [16] |

艾兵, 董明刚. 基于高斯扰动和自然选择的改进粒子群优化算法[J]. 计算机应用, 2016, 36(3): 687-691. AI Bing, DONG Minggang. Improved particle swarm optimization algorithm based on Gaussian disturbance and natural selection[J]. Journal of computer applications, 2016, 36(3): 687-691. DOI:10.11772/j.issn.1001-9081.2016.03.687 (  0) 0)

|

| [17] |

刘宏达, 马忠丽. 均匀粒子群算法[J]. 智能系统学报, 2010, 5(4): 336-341. LIU Hongda, MA Zhongli. A particle swarm optimization algorithm based on uniform design[J]. CAAI transactions on intelligent systems, 2010, 5(4): 336-341. (  0) 0)

|

| [18] |

PEHLIVANOGLU Y V. A new particle swarm optimization method enhanced with a periodic mutation strategy and neural networks[J]. IEEE transactions on evolutionary computation, 2013, 17: 436-452. DOI:10.1109/TEVC.2012.2196047 ( 0) 0)

|

| [19] |

LIM W H, MATISA N A. An adaptive two-layer particle swarm optimization with elitist learning strategy[J]. Information sciences, 2014, 273: 49-72. DOI:10.1016/j.ins.2014.03.031 ( 0) 0)

|

| [20] |

WU G, QIU D, YU Y, et al. Superior solution guided particle swarm optimization combined with local search techniques[J]. Expert systems with applications, 2014, 41: 7536-7548. DOI:10.1016/j.eswa.2014.06.005 ( 0) 0)

|

| [21] |

WANG H, SUN H, LI C, et al. Diversity enhanced particle swarm optimization with neighborhood search[J]. Information sciences, 2013, 223: 119-135. DOI:10.1016/j.ins.2012.10.012 ( 0) 0)

|

| [22] |

TIAN D P. A review of convergence analysis of particle swarm optimization[J]. international journal of grid and distributed computing, 2013, 6: 117-128. DOI:10.14257/ijgdc ( 0) 0)

|

| [23] |

LIU Q. Order-2 stability analysis of particle swarm optimization[J]. Evolutionary computation, 2014, 23: 187-216. ( 0) 0)

|

| [24] |

PAN F, LI X T, ZHOU Q, et al. Analysis of standard particle swarm optimization algorithm based on Markov chain[J]. Acta automatica sinica, 2013, 39(4): 81-289. ( 0) 0)

|

| [25] |

戴月明, 朱达祥, 吴定会. 核矩阵协同进化的震荡搜索粒子群优化算法[J]. 重庆邮电大学学报:自然科学版, 2016, 28(2): 247-253. DAI Yueming, ZHU Daxiang, WU Dinghui. Shock search particle swarm optimization algorithm based on kernel matrix synergistic evolution[J]. Journal of chongqing university of posts and telecommunications:natural science edition, 2016, 28(2): 247-253. (  0) 0)

|

| [26] |

YAO X, LIU Y, LIN G. Evolutionary programming made faster[J]. IEEE transactions on evolutionary computation, 1999, 3(2): 81-102. ( 0) 0)

|

2017, Vol. 12

2017, Vol. 12