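Direction of arrival (DOA) estimation is one of the central topics in array signal processing and is widely used in antennas, communications and radar [1-2]. Over the past decades, many DOA estimation methods have been proposed, including beamforming, subspace-based algorithms and maximum likelihood (ML) estimation. Subspace-based algorithms [3-5] offer excellent super-resolution capability, and MUSIC, their best-known representative, is the most successful of the traditional DOA estimation techniques. However, subspace-based algorithms need a large number of snapshots to achieve high resolution, and they may fail when the signals are highly correlated or coherent because of multipath propagation. Weighted subspace fitting (WSF) is a parametric DOA estimation method that is simple in principle and achieves high estimation accuracy, and it has therefore attracted wide attention [6-7].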

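In recent years, spatial sparsity has attracted growing interest in signal processing, which has greatly promoted the use of sparse representation methods in DOA estimation [8-10]. These methods exhibit attractive properties such as improved resolution and robustness to noise. Among them, sparse Bayesian learning (SBL) [11-15] is one of the most popular sparse recovery methods. Its good DOA estimation performance, however, relies on the assumption that the true signals lie on a predefined discrete spatial grid. In practice there is always a gap between the true DOA and its nearest grid point, which is the grid mismatch problem. Reducing the grid spacing greatly increases the computational load, whereas enlarging it degrades estimation performance.

To reduce the modeling error caused by grid mismatch, several improved methods for off-grid DOA estimation have been proposed [16-19]. Reference [16] linearly approximates the true DOA with a first-order Taylor model and proposes an off-grid SBL method, which effectively mitigates grid mismatch and achieves better estimation performance. Reference [17] uses the sample covariance matrix to further propose an improved off-grid SBL method that reduces the influence of the noise variance. Reference [18] proposes a linear interpolation method that approximates the true DOA using two adjacent grid points. These linear approximations do reduce the modeling error caused by the off-grid gap but cannot eliminate it, and a coarse grid still leads to a large modeling error in practice. To this end, reference [19] proposes a root SBL algorithm based on a dynamic grid. It balances computational complexity and estimation accuracy, performs well even with a coarse grid and is robust to the grid spacing, but it is less effective under conditions such as low SNR.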

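1 Subspace fitting model

Assume that $K$ far-field narrowband signals impinge on the array at angles $\theta$, so that the array output is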

| ${{X}}(t) = {{A}}(\theta ){{S}}(t) + {{N}}(t),t = 1,2, \cdots ,L$ |

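where ${{X}}(t)$ is the received data, ${{A}}(\theta)$ is the array manifold matrix, ${{S}}(t)$ is the signal vector, ${{N}}(t)$ is the noise vector, and $L$ is the number of snapshots. The covariance matrix of the received data is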

| ${{{R}}_X} = {{E}}[{{X}}(t){{{X}}^{\rm{H}}}(t)] = {{A}}(\theta ){{{R}}_s}{{{A}}^{\rm{H}}}(\theta ) + \sigma _n^2{{I}}$ |

Eigendecomposition of the matrix ${{{R}}_X}$ gives

| ${{{R}}_X} = \sum\limits_{i = 1}^M {{\mu _i}{{{v}}_i}{{v}}_i^{\rm{H}} = {{{U}}_s}{{{\varLambda }}_s}{{U}}_s^{\rm{H}}} + {{{U}}_n}{{{\varLambda }}_n}{{U}}_n^{\rm{H}}$ |

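where ${\mu _i}$ and ${{{v}}_i}$ are the eigenvalues and eigenvectors of ${{{R}}_X}$, and ${{{U}}_s}$ and ${{{U}}_n}$ span the signal and noise subspaces, respectively. Ideally, the signal subspace and the array manifold span the same space, so there exists a full-rank matrix ${{T}}$ such that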

| ${{{U}}_s} = {{AT}}$ |
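The relations above can be checked numerically. The sketch below builds an exact covariance matrix for a uniform linear array and verifies that the signal subspace coincides with the span of the array manifold. The steering-vector convention $v_\theta = e^{-{\rm j}2\pi (d/\lambda )\sin \theta }$ (with $\lambda$ here the wavelength), the half-wavelength spacing, the source angles and powers, and the helper names `steering_matrix` and `signal_subspace` are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def steering_matrix(theta_deg, M, d_over_lambda=0.5):
    """Array manifold of an M-element ULA (assumed phase convention)."""
    theta = np.deg2rad(np.atleast_1d(theta_deg))
    m = np.arange(M)[:, None]                      # sensor index 0..M-1
    return np.exp(-2j * np.pi * d_over_lambda * m * np.sin(theta)[None, :])

def signal_subspace(R, K):
    """Split the eigenvectors of R into signal (K largest) and noise parts."""
    mu, V = np.linalg.eigh(R)                      # eigenvalues in ascending order
    order = np.argsort(mu)[::-1]                   # reorder to descending
    mu, V = mu[order], V[:, order]
    return V[:, :K], mu[:K], V[:, K:], mu[K:]

M, K = 8, 2
A = steering_matrix([-10.0, 20.0], M)              # hypothetical source angles
Rs = np.diag([1.0, 2.0])                           # hypothetical source powers
sigma2 = 0.1                                       # hypothetical noise variance
R = A @ Rs @ A.conj().T + sigma2 * np.eye(M)       # exact (infinite-snapshot) covariance

Us, lam_s, Un, lam_n = signal_subspace(R, K)
# Projection residual of A onto span(Us): zero when the two subspaces coincide
residual = np.linalg.norm(A - Us @ (Us.conj().T @ A))
# The matrix T with Us = A T, recovered by least squares
T = np.linalg.pinv(A) @ Us
print(residual, np.allclose(A @ T, Us))
```

With a sample covariance estimated from a finite number of snapshots, the residual is no longer zero, which is the mismatch that the fitting criterion in the next paragraph addresses.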

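In practice, however, because of the finite number of snapshots and the presence of noise, the subspace spanned by the array manifold is not exactly the same as the signal subspace. To address this, the classical WSF algorithm [4] constructs a fitting relation that makes the two match as closely as possible in the least-squares sense, i.e.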

| ${{\hat \theta }} = \arg \mathop {\min }\limits_{{\theta }} \left\| {{{{U}}_s}{{{W}}^{1/2}} - {{AT}}} \right\|_F^2$ |

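where ${{W}}$ is the weighting matrix. Writing ${{Y}} = {{{U}}_s}{{{W}}^{1/2}}$, the fitting relation can be expressed as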

| ${{Y}} = {{AT}} + {{E}}$ | (1) |

where ${{E}}$ is the fitting error.

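To solve Eq. (1) within the sparse Bayesian learning framework, a sparse model must first be constructed. The angular space is divided uniformly into $N$ grid points. If the grid spacing is small enough, all incident signals can be assumed to fall on these $N$ discrete angles, each of which corresponds to a potential spatial signal. Eq. (1) then takes the sparse form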

| ${{Y}} = {{\varPhi \hat T}} + {{E}}$ | (2) |

Starting from Eq. (2), the model is rewritten in vector form as

| ${{y}} = {{Ds}} + {{n}}$ | (3) |

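where ${{y}}$, ${{D}}$, ${{s}}$ and ${{n}}$ are the vectorized counterparts of ${{Y}}$, ${{\varPhi }}$, ${{\hat T}}$ and ${{E}}$ in Eq. (2).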

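In practice it is unrealistic for all incident signals to lie exactly on the grid points, and the gap between the true DOAs and the discrete grid points inevitably causes a large estimation error. To handle this grid mismatch, a polynomial rooting method is presented later in this paper: the sampling grid points are treated as dynamic parameters and the discrete grid is updated iteratively.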
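To make the sparse model concrete, the sketch below builds an overcomplete dictionary on a 2° grid, forms a row-sparse coefficient matrix, and checks one common way of vectorizing Eq. (2) into the form of Eq. (3). The choice ${{D}} = {{\varPhi }} \otimes {{{I}}_K}$ with row-wise stacking is an assumption borrowed from the usual block-sparse formulation; the paper's own definitions of ${{y}}$, ${{D}}$ and ${{s}}$ are not recoverable here. The steering-vector convention, grid range and helper name are likewise illustrative.

```python
import numpy as np

def steering_matrix(theta_deg, M, d_over_lambda=0.5):
    """Array manifold of an M-element ULA (assumed phase convention)."""
    theta = np.deg2rad(np.atleast_1d(theta_deg))
    m = np.arange(M)[:, None]
    return np.exp(-2j * np.pi * d_over_lambda * m * np.sin(theta)[None, :])

M, K = 8, 2
grid = np.arange(-90.0, 90.0, 2.0)        # N = 90 grid angles, 2 degree spacing
N = grid.size
Phi = steering_matrix(grid, M)            # M x N overcomplete dictionary

# Row-sparse coefficient matrix: only the rows at the source grid points are nonzero
T_hat = np.zeros((N, K), dtype=complex)
T_hat[np.searchsorted(grid, -10.0)] = [1.0, 0.5j]    # hypothetical values
T_hat[np.searchsorted(grid, 20.0)] = [0.3, -1.0]

Y = Phi @ T_hat                           # noiseless version of Eq. (2)

# Assumed vectorization: stack the rows of Y and of T_hat
y = Y.reshape(-1)                         # length M*K
s = T_hat.reshape(-1)                     # length N*K, block-sparse with block size K
D = np.kron(Phi, np.eye(K))               # (M*K) x (N*K)
print(np.allclose(D @ s, y))
```

Each length-$K$ block of `s` corresponds to one grid angle, which is why a block-sparse prior is a natural fit for this model.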

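2.2 Block sparse Bayesian learning

From the sparse model in Eq. (3), the posterior distribution of ${{s}}$ is complex Gaussian: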

| $p(\left. {{s}} \right|{{y}};{{\gamma }},{{B}},\lambda ) = {\rm{CN}}({{{\mu }}_s},{{{\varSigma }}_s})$ |

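where ${{{\mu }}_s}$ and ${{{\varSigma }}_s}$ are the posterior mean and covariance. The hyperparameters are learned with the following rules: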

| ${\gamma _i} = {{{\rm{tr}}\left[ {{{{B}}^{ - 1}}({{\varSigma }}_s^i + {{\mu }}_s^i{{({{\mu }}_s^i)}^{\rm{H}}})} \right]}/K},i = 1,2, \cdots ,N$ | (4) |

| ${{B}} = \left\{ {\sum\limits_{i = 1}^N {{{({{\varSigma }}_s^i + {{\mu }}_s^i{{({{\mu }}_s^i)}^{\rm{H}}})}/{{\gamma _i}}}} } \right\}$ | (5) |

| $\lambda = \frac{{\left\| {{{y}} - {{D}}{{{\mu }}_s}} \right\|_2^2 + \lambda \left[ {NK - {\rm{tr}}({{{\varSigma }}_s}{{\varSigma }}_0^{ - 1})} \right]}}{{MK}}$ | (6) |

where:

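Note that the learning rules in Eqs. (4) and (5) are high-dimensional, which makes the algorithm slow. Reference [14] points out that the dimension of the learning rules can be reduced by a reasonable approximation, namely by using the MSBL algorithm to simplify them. The MSBL algorithm is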

| ${{\hat T}} = {{\varGamma }}{{{\varPhi }}^{\rm{H}}}{(\lambda {{I}} + {{\varPhi \varGamma }}{{{\varPhi }}^{\rm{H}}})^{ - 1}}{{Y}}$ | (7) |

| ${{{\varSigma }}_{\hat T}} = {\Bigg({{{\varGamma }}^{ - 1}} + \frac{1}{\lambda }{{{\varPhi }}^{\rm{H}}}{{\varPhi }}\Bigg)^{ - 1}}$ | (8) |
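The following is a minimal sketch of Eqs. (7) and (8), assuming ${{\varGamma }} = {\rm{diag}}({\gamma _1}, \cdots ,{\gamma _N})$ with strictly positive entries. The function name `msbl_posterior` and the random test data are illustrative. The final lines check the standard matrix-inversion identity ${{\hat T}} = {{{\varSigma }}_{\hat T}}{{{\varPhi }}^{\rm{H}}}{{Y}}/\lambda$, which links the two equations.

```python
import numpy as np

def msbl_posterior(Phi, Y, gamma, lam):
    """Posterior mean (Eq. 7) and covariance (Eq. 8) for fixed hyperparameters."""
    M, N = Phi.shape
    Gamma_PhiH = gamma[:, None] * Phi.conj().T                # Gamma Phi^H, N x M
    C = lam * np.eye(M) + Phi @ Gamma_PhiH                    # lam I + Phi Gamma Phi^H
    T_hat = Gamma_PhiH @ np.linalg.solve(C, Y)                # Eq. (7)
    Sigma_T = np.linalg.inv(np.diag(1.0 / gamma)
                            + Phi.conj().T @ Phi / lam)       # Eq. (8)
    return T_hat, Sigma_T

# Hypothetical data, used only to exercise the function
rng = np.random.default_rng(0)
M, N, K = 8, 20, 2
Phi = rng.standard_normal((M, N)) + 1j * rng.standard_normal((M, N))
Y = rng.standard_normal((M, K)) + 1j * rng.standard_normal((M, K))
gamma = rng.uniform(0.5, 1.5, N)
lam = 0.1

T_hat, Sigma_T = msbl_posterior(Phi, Y, gamma, lam)
T_alt = Sigma_T @ Phi.conj().T @ Y / lam                      # equivalent form of the mean
print(np.allclose(T_hat, T_alt))
```

Eq. (7) only requires solving an $M \times M$ system, whereas Eq. (8) inverts an $N \times N$ matrix. When $N \gg M$, an implementation may prefer to obtain the covariance from the $M \times M$ inverse as well.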

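Substituting Eqs. (7) and (8), together with the corresponding approximation, into the learning rules yields the simplified updates: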

| ${\gamma _i} = \frac{1}{K}{{{\hat T}}_{i }}{{{B}}^{ - 1}}{{\hat T}}_{i }^{\rm{H}} + {({{{\varSigma }}_{\hat T}})_{ii}}$ | (9) |

| ${{B}} = \sum\limits_{i = 1}^N {\frac{{{{\hat T}}_{i }^{\rm{H}}{{{{\hat T}}}_{i }}}}{{{\gamma _i}}}} + \eta {{I}}$ | (10) |

| $\lambda {\rm{ = }}\frac{1}{{MK}}\left\| {{{Y}} - {{\varPhi \hat T}}} \right\|_F^2 + \frac{\lambda }{M}{\rm{tr}}\left[ {{{\varPhi \varGamma }}{{{\varPhi }}^{\rm{H}}}{{(\lambda {{I}} + {{\varPhi \varGamma }}{{{\varPhi }}^{\rm{H}}})}^{ - 1}}} \right]$ | (11) |

where:

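In this section, to address the modeling error caused by grid mismatch, the discrete grid points are treated as dynamic parameters and updated by polynomial rooting. Let the discrete spatial angles be ${\hat \theta _i}$, $i = 1,2, \cdots ,N$. The grid is refined by maximizing the expected log-likelihood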

| $\begin{array}{l} {{ E}_{p({{y}}|{{\hat s}},\lambda ,{{\gamma }},{{B}})}}\left\{ {\ln (p({{y}}|{{\hat s}},\lambda ))} \right\} = - ||{{y}} - {{D\mu }}||_2^2 - {\rm{tr}}({{D\varSigma }}{{{D}}^{\rm{H}}}) = \\ - \displaystyle\sum\limits_{k = 1}^K {||{{{Y}}_k} - {{\varPhi }}{{{{\hat T}}}_k}||_2^2} - {\rm{tr}}\left( {{{\varPhi }}{{{\varSigma }}_{\hat T}}{{{\varPhi }}^{\rm{H}}}} \right){\rm{tr}}\left( {{B}} \right) \end{array}$ | (12) |

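To update each discrete grid point, the derivatives of the two terms in Eq. (12) with respect to ${{{v}}_{{{\hat \theta }_i}}}$ are computed: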

| $\frac{{\partial \displaystyle\sum_k {\left\| {{{{Y}}_k} - {{\varPhi }}{{{{\hat T}}}_k}} \right\|} _2^2}}{{\partial {{{v}}_{{{\hat \theta }_i}}}}} = {({{{{{\alpha}} '}}_i})^{\rm{H}}}\left( {{{{\alpha }}_i}\sum\limits_{k = 1}^K {{{\left| {{{{{\hat T}}}_{ki}}} \right|}^2} - \sum\limits_{k = 1}^K {\left| {{{\hat T}}_{ki}^*} \right|{{{Y}}_{k - i}}} } } \right)$ | (13) |

| $\frac{{\partial {\rm{tr}}\left( {{{\varPhi }}{{{\varSigma }}_{\hat T}}{{{\varPhi }}^{\rm{H}}}} \right)}}{{\partial {{{v}}_{{{\hat \theta }_i}}}}} = {({{{\alpha '}}_i})^{\rm{H}}}\left( {{\varepsilon _{ii}}{{{\alpha }}_i} + \sum\limits_{j \ne i} {{\varepsilon _{ji}}{{{\alpha }}_j}} } \right)$ | (14) |

Setting the derivative of Eq. (12) to zero gives:

| $\begin{array}{l} {({{{{\alpha '}}}_i})^{\rm{H}}}\left( {{{{\alpha }}_i}\underbrace {\left( {\sum\limits_{k = 1}^K {{{\left| {{{{{\hat T}}}_{ki}}} \right|}^2}} + {\varepsilon _{ii}}{\rm{tr}}\left( {{B}} \right)} \right)}_{ \buildrel \Delta \over = {\phi ^{(i)}}}} + \right.\\ \;\;\;\;\;\;\;\;\left. { \underbrace {{\rm{tr}}\left( {{B}} \right)\sum\limits_{j \ne i} {{\varepsilon _{ji}}{{{\alpha }}_j}} - \sum\limits_{k = 1}^K {\left| {{{\hat T}}_{ki}^*} \right|{{{y}}_{k - i}}} }_{ \buildrel \Delta \over = {\varphi ^{(i)}}}} \right)=0 \end{array}$ | (15) |

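where ${{{\alpha }}_i}$ is the $i$-th column of ${{\varPhi }}$, ${{{\alpha '}}_i}$ is its derivative with respect to ${{{v}}_{{{\hat \theta }_i}}}$, and ${\varepsilon _{ji}}$ is the $(j,i)$-th entry of ${{{\varSigma }}_{\hat T}}$.

Eq. (15) can then be written in the following polynomial form: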

| $\left[ {{v_{{{\hat \theta }_i}}},1,v_{{{\hat \theta }_i}}^{ - 1}, \cdots ,v_{{{\hat \theta }_i}}^{ - (M - 2)}} \right]\left[ {\begin{array}{*{20}{c}} {\dfrac{{M(M - 1)}}{2}{\phi ^{(i)}}} \\ {\varphi _2^{(i)}} \\ {2\varphi _3^{(i)}} \\ \vdots \\ {(M - 1)\varphi _M^{(i)}} \end{array}} \right] = 0$ | (16) |

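where $\varphi _m^{(i)}$ is the $m$-th element of ${\varphi ^{(i)}}$. Denoting the selected root of Eq. (16) by ${z_i}$, the grid point is updated as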

| $\hat \theta _i^{{\rm{new}}} = \arcsin \left( { - \frac{\lambda }{{2 {\text{π}} d}} {\rm{angle}}\left( {{z_i}} \right)} \right)$ | (17) |
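The grid update in Eqs. (16) and (17) can be sketched as follows. Multiplying Eq. (16) by $v^{M-2}$ turns it into an ordinary polynomial of degree $M-1$ whose roots `np.roots` can compute. Two points in this sketch are assumptions rather than statements from the paper: the root closest to the unit circle is selected, and the steering vector follows $v_\theta = e^{-{\rm j}2\pi (d/\lambda )\sin \theta }$ with $\lambda$ the wavelength. The function name `root_grid_update` is hypothetical.

```python
import numpy as np

def root_grid_update(phi, varphi, d_over_lambda=0.5):
    """Solve Eq. (16) for one grid point and map the chosen root to an angle (Eq. 17).

    phi    : scalar phi^(i) from Eq. (15)
    varphi : length-M vector varphi^(i) from Eq. (15)
    """
    varphi = np.asarray(varphi, dtype=complex)
    M = varphi.size
    m = np.arange(1, M)
    # Coefficients, highest power first:
    # M(M-1)/2 * phi, 1*varphi_2, 2*varphi_3, ..., (M-1)*varphi_M
    coeffs = np.concatenate(([M * (M - 1) / 2 * phi], m * varphi[1:]))
    roots = np.roots(coeffs)
    # Assumed selection rule: the root closest to the unit circle
    z = roots[np.argmin(np.abs(np.abs(roots) - 1.0))]
    arg = -np.angle(z) / (2 * np.pi * d_over_lambda)
    return float(np.arcsin(np.clip(arg, -1.0, 1.0))), z

# Noise-free single-source check: with T_hat = 1 and Y = a(theta0),
# Eq. (15) gives phi = 1 and varphi = -a(theta0)
M = 8
theta0 = np.deg2rad(13.7)                            # hypothetical off-grid angle
v0 = np.exp(-2j * np.pi * 0.5 * np.sin(theta0))
a0 = v0 ** np.arange(M)
theta_new, z = root_grid_update(1.0, -a0)
print(np.rad2deg(theta_new))
```

In this idealized case the true $v$ is an exact root on the unit circle, so the update recovers the off-grid angle regardless of where the initial grid point was.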

Note that it is not necessary to update every discrete grid point in each iteration; only selected grid points are updated.

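In summary, the steps of the off-grid DOA estimation algorithm based on subspace fitting and block sparse Bayesian learning are as follows:

1) Eigendecompose the covariance matrix of the array data and construct the weighted signal subspace.

2) Initialize the parameters.

3) Iterate:

a) compute ${{\hat T}}$ and ${{{\varSigma }}_{\hat T}}$ using Eqs. (7) and (8);

b) update ${\gamma _i}$, ${{B}}$, $\lambda$ and ${\hat \theta _i}$ using Eqs. (9), (10), (11) and (17);

c) stop if the convergence criterion is met or the maximum number of iterations is reached; otherwise return to a).

4) Determine the DOA estimates from the results of the final iteration.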

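To verify the effectiveness of the proposed algorithm, this section presents simulation experiments that compare it with the OGSBI (off-grid sparse Bayesian inference) algorithm and the rootSBL (root sparse Bayesian learning) algorithm. In the simulations, the antenna array is an 8-element uniform linear array, the maximum number of iterations is 1000, and an error threshold is used as the stopping criterion.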

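Experiment 1 uses 100 snapshots and a grid spacing of 2°, and compares the root mean square error (RMSE) of the three algorithms as a function of SNR. Experiment 2 uses an SNR of 0 dB and a grid spacing of 2°, and compares the RMSE of the three algorithms for different numbers of snapshots.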
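The RMSE used as the performance metric can be computed as in the sketch below. It assumes the usual definition, averaging the squared angle error over all Monte Carlo trials and all sources; the paper's exact definition and trial count are not stated in the text above. The function name `rmse_deg` is illustrative.

```python
import numpy as np

def rmse_deg(estimates, truth):
    """RMSE in degrees over Q trials and K sources.

    estimates : array-like of shape (Q, K), estimated DOAs per trial
    truth     : array-like of shape (K,), true DOAs
    Estimates and true angles are sorted so that they are paired in ascending order.
    """
    est = np.sort(np.asarray(estimates, dtype=float), axis=1)
    tru = np.sort(np.asarray(truth, dtype=float))
    return float(np.sqrt(np.mean((est - tru[None, :]) ** 2)))

# Two hypothetical trials for sources at -10 and 20 degrees
print(rmse_deg([[-10.5, 20.5], [20.5, -9.5]], [-10.0, 20.0]))  # 0.5
```

Sorting before pairing is a simple association rule that works when the sources are well separated; closely spaced sources may need a more careful matching.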

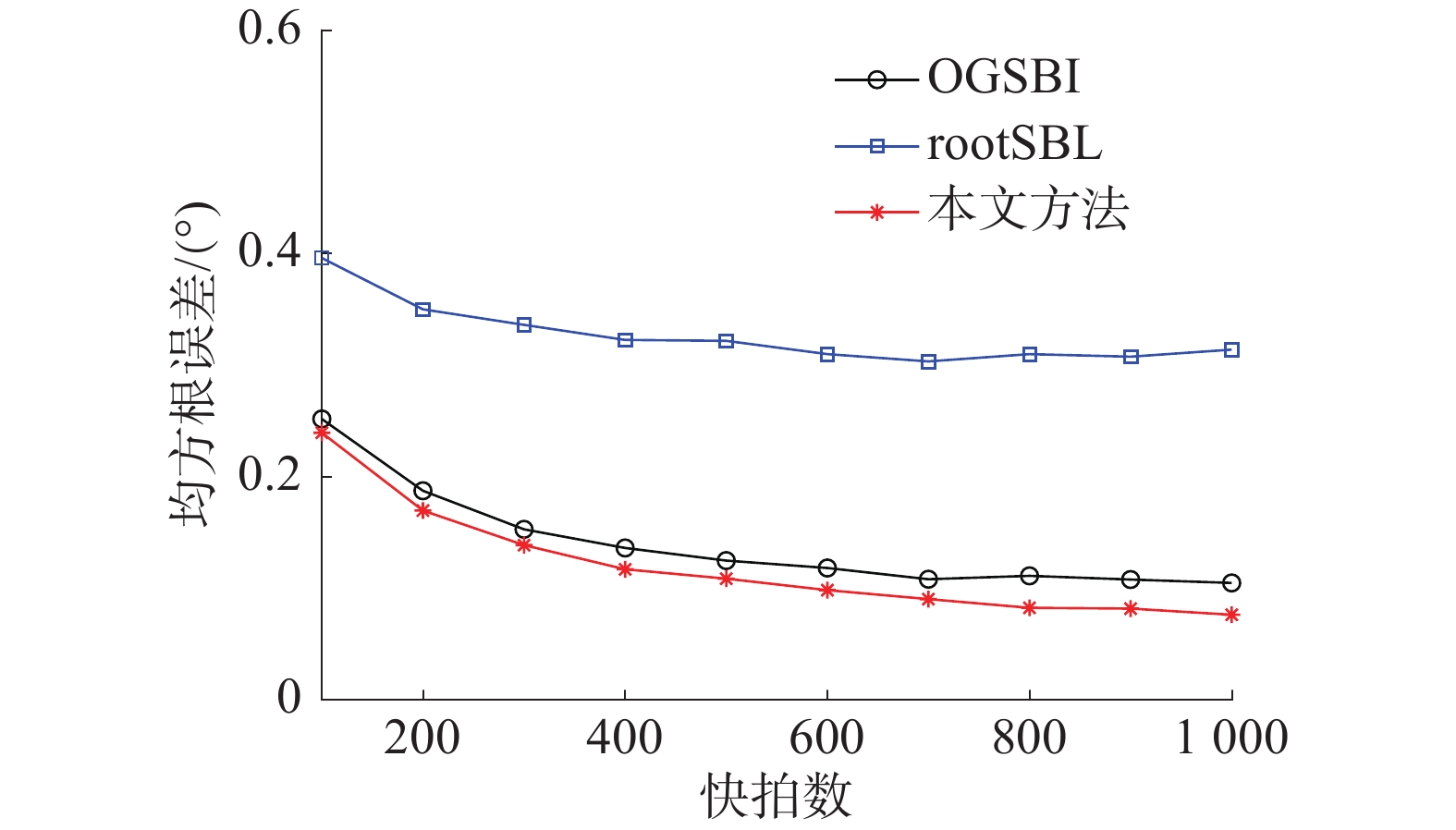

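Fig. 1 shows that the RMSE of all three algorithms decreases as the SNR increases. Compared with rootSBL, OGSBI is more accurate at low SNR, whereas at high SNR the RMSE of rootSBL converges to a smaller value. The proposed algorithm achieves higher estimation accuracy than both across the SNR range tested. Fig. 2 shows that the RMSE of all three algorithms decreases steadily as the number of snapshots grows. Because the proposed algorithm uses subspace fitting, its RMSE converges to a smaller value than those of the two reference algorithms as the number of snapshots increases, giving higher estimation accuracy.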

Fig. 1 Comparison of the algorithms at different SNRs

Fig. 2 Comparison of the algorithms for different numbers of snapshots

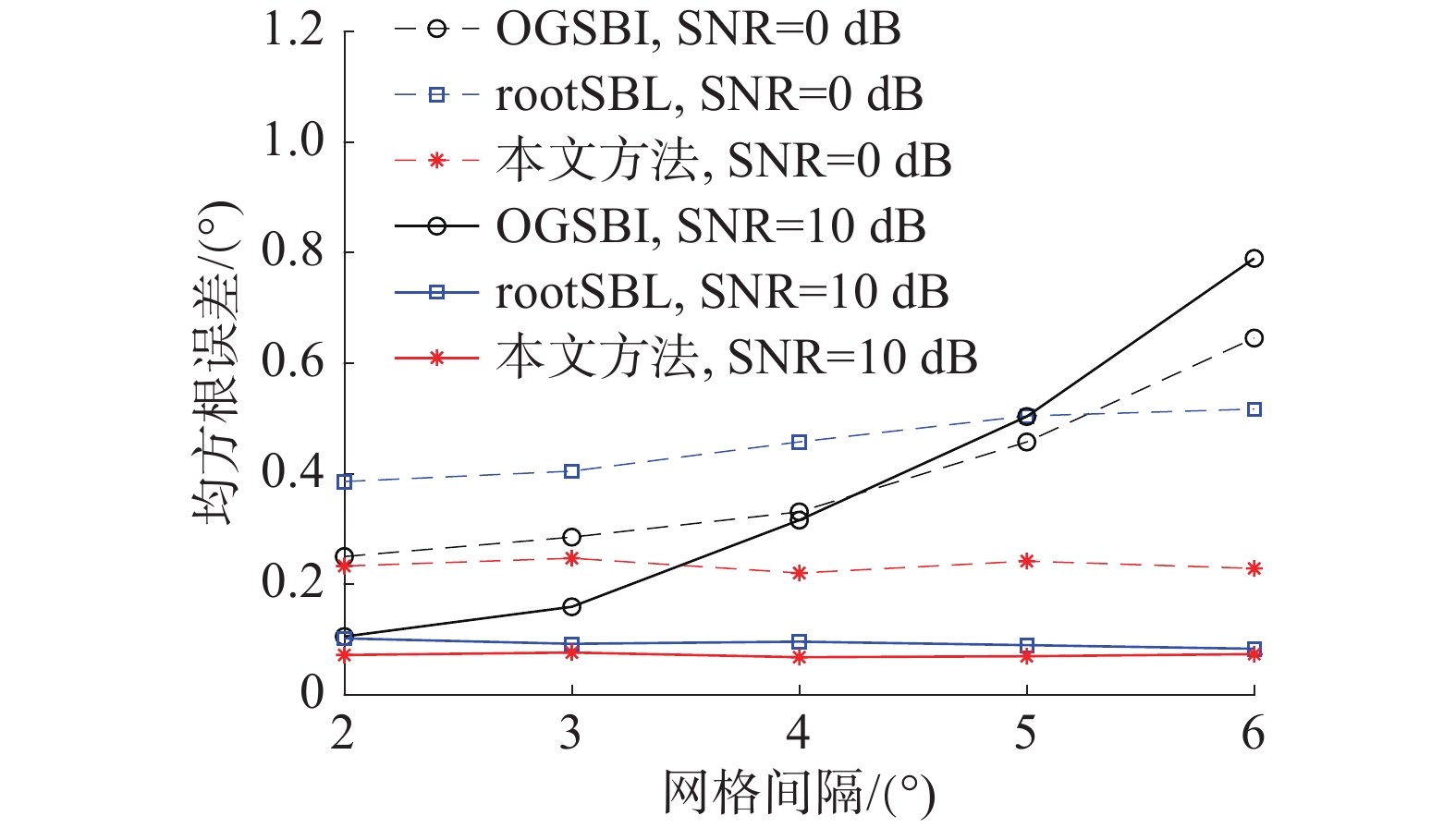

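With 100 snapshots, Experiment 3 compares the RMSE of the three algorithms for different grid spacings. Fig. 3 shows that as the grid spacing increases, the RMSE of OGSBI grows markedly, while that of rootSBL and of the proposed algorithm changes little. OGSBI relies on a linear approximation, so a coarse grid causes a large modeling error and hence a large RMSE. The proposed algorithm treats the sampling points of the coarse grid as adjustable parameters, which reduces the error caused by grid mismatch and makes it robust to the grid spacing.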

Fig. 3 Comparison of the algorithms for different grid spacings

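With an SNR of 10 dB, 100 snapshots and a grid spacing of 2°, and with the angular separation between the two incident signals varied from 4° to 10°, Experiment 4 compares the RMSE of the three algorithms for different angular separations. Fig. 4 shows that when the two signals are closely spaced, both OGSBI and rootSBL have a large RMSE. As the separation increases, the RMSE of all three algorithms decreases and levels off. The proposed algorithm has a smaller RMSE than the other two at every separation tested, indicating better spatial resolution.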

Fig. 4 Comparison of the algorithms for different angular separations

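1) This paper introduces weighted subspace fitting into block sparse Bayesian learning and proposes a new off-grid DOA estimation method.

2) To address the grid mismatch introduced by the model, the sampling points of the discrete grid are treated as dynamically adjustable parameters, and the grid is updated iteratively to remove the modeling error.

3) Simulations show that, compared with conventional sparse Bayesian algorithms under the same conditions, the proposed algorithm substantially reduces the off-grid error and achieves higher DOA estimation accuracy and spatial resolution, and is therefore more reliable in practical applications.

Extending the algorithm to the real domain to improve its real-time performance is the next step of this work.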

[1] KRIM H, VIBERG M. Two decades of array signal processing research: the parametric approach[J]. IEEE transactions on signal processing magazine, 1996, 13(4): 67-94.

[2] WANG Qianli, ZHAO Zhiqin, CHEN Zhuming, et al. Grid evolution method for DOA estimation[J]. IEEE transactions on signal processing, 2018: 2374-2383.

[3] SCHMIDT R O. Multiple emitter location and signal parameter estimation[J]. IEEE transactions on antennas and propagation, 1986, 34(3): 276-280. DOI:10.1109/TAP.1986.1143830

[4] ROY R, KAILATH T. ESPRIT-estimation of signal parameters via rotational invariance techniques[J]. IEEE transactions on acoustics speech and signal processing, 1989, 37(7): 984-995. DOI:10.1109/29.32276

[5] PAL P, VAIDYANATHAN P P. Coprime sampling and the MUSIC algorithm[C]//Digital Signal Processing Workshop and IEEE Signal Processing Education Workshop. Sedona, Arizona, USA, 2011: 289-294.

[6] HU Nan, YE Zhongfu, XU Dongyang, et al. A sparse recovery algorithm for DOA estimation using weighted subspace fitting[J]. Signal processing, 2012, 92(10): 2566-2570.

[7] 孙磊, 王华力, 熊林林, 等. 基于贝叶斯压缩感知的子空间拟合DOA估计方法[J]. 信号处理, 2012, 28(6): 827-833. DOI:10.3969/j.issn.1003-0530.2012.06.010

[8] GERSTOFT P, MECKLENBRAUKER C F, XENAKI A, et al. Multisnapshot sparse Bayesian learning for DOA[J]. IEEE signal processing letters, 2016, 23(10): 1469-1473.

[9] MALIOUTOV D, CETIN M, WILLSKY A S. A sparse signal reconstruction perspective for source localization with sensor arrays[J]. IEEE transactions on signal processing, 2005, 53(8): 3010-3022. DOI:10.1109/TSP.2005.850882

[10] DAS A. Theoretical and experimental comparison of off-grid sparse Bayesian direction-of-arrival estimation algorithms[J]. IEEE access, 2017: 1.

[11] TIPPING M E. Sparse Bayesian learning and the relevance vector machine[J]. Journal of machine learning research, 2001, 1(3): 211-244.

[12] CARLIN M, ROCCA P, OLIVERI G, et al. Directions-of-arrival estimation through Bayesian compressive sensing strategies[J]. IEEE transactions on antennas and propagation, 2013, 61(7): 3828-3838. DOI:10.1109/TAP.2013.2256093

[13] YANG J, YANG Y, LIAO G, et al. A super-resolution direction of arrival estimation algorithm for coprime array via sparse Bayesian learning inference[J]. Circuits, systems, and signal processing, 2018, 37(5): 1907-1934.

[14] YANG J, YANG Y, LU J. A variational Bayesian strategy for solving the DOA estimation problem in sparse array[J]. Digital signal processing, 2019, 90: 28-35.

[15] WANG H, WANG X, WAN L, et al. Robust sparse Bayesian learning for off-grid DOA estimation with non-uniform noise[J]. IEEE access, 2018(6): 64688-64697.

[16] YANG Zai, XIE Lihua, ZHANG Cishen. Off-grid direction of arrival estimation using sparse Bayesian inference[J]. IEEE transactions on signal processing, 2013, 61(1): 38-43.

[17] ZHANG Yi, YE Zhongfu, XU Xu, et al. Off-grid DOA estimation using array covariance matrix and block-sparse Bayesian learning[J]. Signal processing, 2014, 98: 197-201. DOI:10.1016/j.sigpro.2013.11.022

[18] WU Xiaohua, ZHU Weiping, YAN Jun. Direction of arrival estimation for off-grid signals based on sparse Bayesian learning[J]. IEEE sensors journal, 2016, 16(7): 2004-2016. DOI:10.1109/JSEN.2015.2508059

[19] DAI J, BAO X, XU W, et al. Root sparse Bayesian learning for off-grid DOA estimation[J]. IEEE signal processing letters, 2017, 24(1): 46-50. DOI:10.1109/LSP.2016.2636319

[20] ZHANG Z, RAO B D. Sparse signal recovery with temporally correlated source vectors using sparse Bayesian learning[J]. IEEE journal of selected topics in signal processing, 2011, 5(5): 912-926. DOI:10.1109/JSTSP.2011.2159773

2020, Vol. 47
