2. Key Laboratory of Computational Intelligence and Chinese Information Processing of Ministry of Education, Shanxi University, Taiyuan 030006, China

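Metric learning, an important branch of machine learning, has been widely applied in areas such as image retrieval [1-4], target detection [5-7], kinship verification [8], and music recommendation [9]. Its goal is to learn the similarity relations among data so that the distances between similar samples are as small as possible and the distances between dissimilar samples are as large as possible [10].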

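In metric learning, the similarity between samples is usually measured by the Mahalanobis distance, i.e., $d_M(x_i, x_j) = (x_i - x_j)^{\rm T} M (x_i - x_j)$, where $M$ is a symmetric positive semidefinite matrix.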
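As a small illustration of the Mahalanobis distance mentioned above, the sketch below evaluates the usual squared form $(x_i - x_j)^{\rm T} M (x_i - x_j)$ with NumPy. The function name `mahalanobis_sq` and the numeric values are made up for illustration. The squared (not square-rooted) form is an assumption, chosen because it is the one consistent with the closed-form solution in Eq. (3).

```python
import numpy as np

def mahalanobis_sq(x_i, x_j, M):
    """Squared Mahalanobis distance (x_i - x_j)^T M (x_i - x_j)."""
    diff = np.asarray(x_i, dtype=float) - np.asarray(x_j, dtype=float)
    return float(diff @ M @ diff)

x_i = np.array([1.0, 2.0])
x_j = np.array([3.0, 1.0])

# With M = I the distance reduces to the squared Euclidean distance.
d_euclid = mahalanobis_sq(x_i, x_j, np.eye(2))

# A PSD matrix that weights the first feature more heavily (made-up values).
M = np.array([[2.0, 0.0],
              [0.0, 0.5]])
d_weighted = mahalanobis_sq(x_i, x_j, M)
```

Learning the metric amounts to choosing $M$; the identity matrix recovers the Euclidean case as a baseline.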

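Metric learning based on triplet constraints usually relies on prior knowledge and uses various strategies to build fixed constraints. As the iterations proceed, some triplets stop contributing to training, so algorithms that select triplets dynamically have been proposed. Mei et al. [24] proposed LogDet divergence based metric learning with triplet constraints (LDMLT), which selects effective constraints at each iteration and thereby reduces the influence of prior knowledge on metric learning. However, the samples forming its triplet constraints are all chosen from the original samples, so it cannot fully exploit the triplet constraints implied by the data. To address this, researchers have combined adversarial training with metric learning, generating adversarial samples during metric learning to improve performance. Chen et al. [25] proposed adversarial metric learning (AML), which generates adversarial sample pairs to confuse the learned metric and thus improves the robustness of metric learning.

Adversarial metric learning based on pairwise constraints is affected by its parameter and by differences in similarity between sample pairs, which makes it hard to generate adversarial pairs for all pairwise constraints. Triplet constraints resolve the differences between sample pairs and account for both between-class and within-class relations, so they can be combined with adversarial training. The key problem in combining triplet-based metric learning with adversarial training is how to generate the adversarial samples. Borrowing the idea of sample perturbation from adversarial training, this paper generates adversarial samples near the original samples to build adversarial triplet constraints, proposes a new method for constructing triplet constraints, and builds a metric learning model with adversarial sample triplet constraints. The main contributions are:

1) By learning adversarial samples near the impostor samples of the triplets, we construct adversarial sample triplet constraints with smaller margins;

2) The optimization model for learning adversarial samples has a closed-form solution;

3) Experimental results show that the proposed algorithm outperforms representative triplet-based metric learning algorithms.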

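1 Related algorithms for constraint construction

1.1 Triplet constraint construction

Constructing triplets is one of the key problems in metric learning based on triplet constraints. Liu et al. [19] proposed an effective construction method that builds triplet constraints from samples located at class boundaries. It forms a triplet from an arbitrary sample, the same-class sample farthest from it in Euclidean distance, and the different-class sample nearest to it in Euclidean distance, and then randomly selects a subset of these constraints for metric learning. Once constructed and selected, the constraints remain fixed throughout the learning process. However, triplet constraints built from Euclidean distance and selected at random cannot guide a continually updated metric well, which limits performance. To address this, Mei et al. [24] proposed a dynamic triplet selection strategy for metric learning so that every iteration makes effective use of the constraints. Based on the current Mahalanobis matrix $M_t$ (at iteration $t$), the method computes the distance matrix and similarity matrix of the samples, defines the disorder of a sample by how far its nearest neighbors under the current metric deviate from its prior target neighbors, and selects triplet constraints according to this disorder to learn the metric matrix $M_{t+1}$ for the next iteration.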

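Although LDMLT dynamically selects high-disorder constraints at each iteration, which improves the performance of metric learning, it still cannot fully mine the similarity relations implied by the data.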
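The fixed, Euclidean-distance-based construction attributed to Liu et al. [19] above can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `build_boundary_triplets` and the toy data are hypothetical, and the random subset selection step is left out.

```python
import numpy as np

def build_boundary_triplets(X, y):
    """Return (i, j, l) index triplets: for each anchor i, j is the farthest
    same-class sample and l is the nearest different-class sample (Euclidean)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    n = len(X)
    # Pairwise squared Euclidean distances, shape (n, n).
    diff = X[:, None, :] - X[None, :, :]
    dist = np.einsum("ijk,ijk->ij", diff, diff)
    triplets = []
    for i in range(n):
        same = np.flatnonzero((y == y[i]) & (np.arange(n) != i))
        other = np.flatnonzero(y != y[i])
        if same.size == 0 or other.size == 0:
            continue  # no valid triplet for this anchor
        j = same[np.argmax(dist[i, same])]
        l = other[np.argmin(dist[i, other])]
        triplets.append((i, int(j), int(l)))
    return triplets

# Toy one-dimensional layout embedded in 2-D; labels are made up.
X = [[0.0, 0.0], [1.0, 0.0], [3.0, 0.0], [4.0, 0.0], [10.0, 0.0]]
y = [0, 0, 0, 1, 1]
triplets = build_boundary_triplets(X, y)
```

Because the distances here are computed once in the Euclidean metric, the resulting triplets do not change as the learned metric changes, which is the limitation the dynamic strategies aim to remove.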

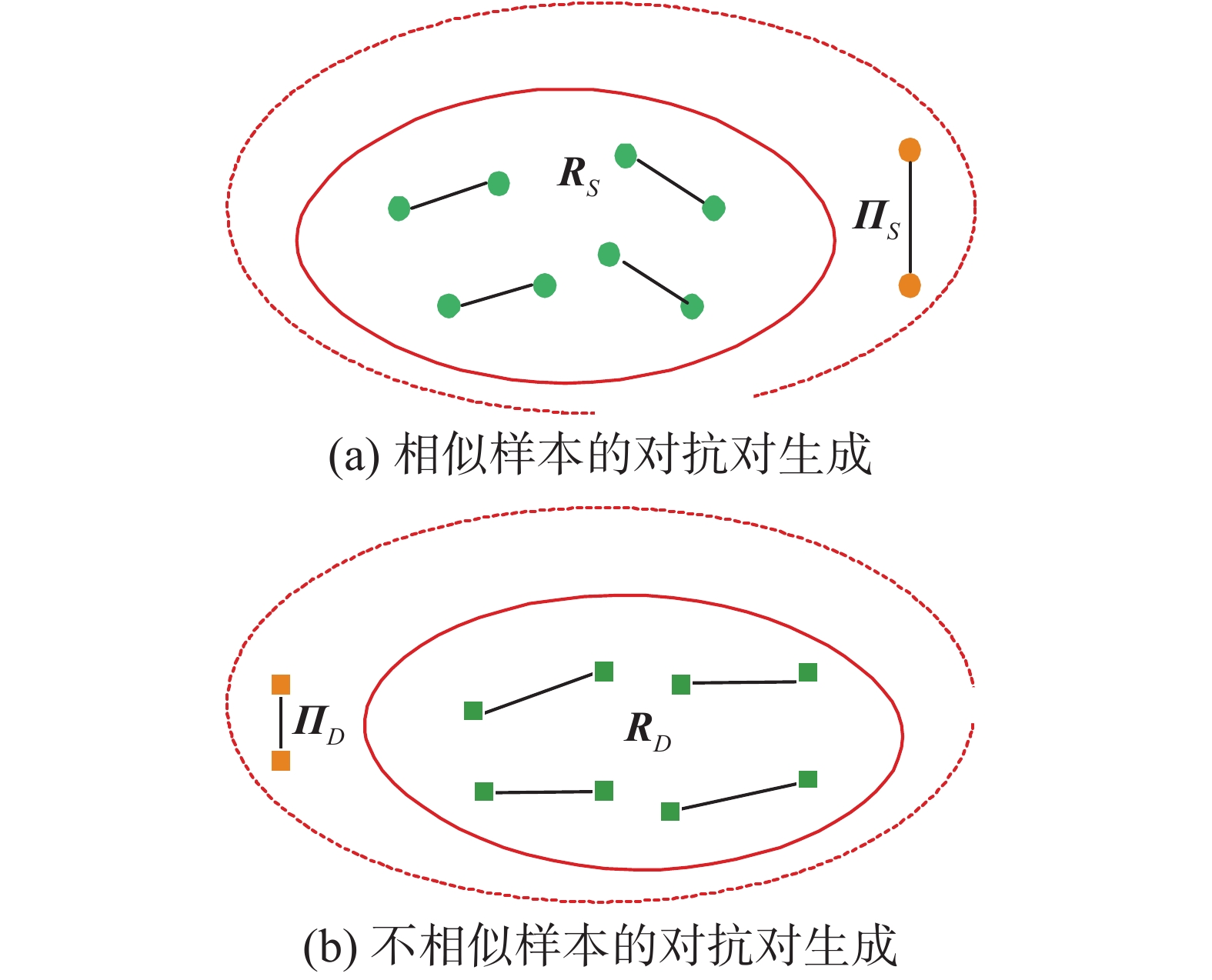

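1.2 Adversarial metric learning

The adversarial metric learning (AML) algorithm [25] constructs pairwise constraints from adversarial samples to improve the robustness of metric learning. AML has two stages: a confusion stage and a distinguishing stage. In the confusion stage, the two endpoints of each constraint are perturbed, that is, an adversarial sample pair is learned, and the distance between the current adversarial samples is repeatedly enlarged or reduced so that the pair is hard to distinguish under the current metric, as shown in Fig. 1. The adversarial sample pairs generated from same-class samples (i.e.,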

Fig. 1 Schematic illustration of adversarial samples

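In Fig. 1, S denotes similar samples, D denotes dissimilar samples, RS denotes similar sample pairs,

AML strengthens robustness by generating, from same-class samples, adversarial pairs that lie far apart and, from different-class samples, adversarial pairs that lie relatively close together. However, because an adversarial pair must be built for every pairwise constraint and a single parameter controls the learning of all adversarial samples, that parameter is hard to tune and the vast majority of the constructed adversarial pairs are ineffective.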

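2 Metric learning with adversarial sample triplet constraints

Existing methods build triplet constraints from Euclidean distance and randomly select a subset of them for metric learning. Although some methods construct triplet constraints dynamically, most of them only select or emphasize constraints already present in the data and do not construct new ones. Inspired by adversarial metric learning (AML), this paper proposes a method for constructing adversarial triplet constraints.

2.1 Model construction

By adjusting a parameter to build triplets dynamically, we propose the metric learning algorithm with adversarial sample triples constraints (ASTCML). The algorithm has two stages: an adversarial stage and a distinguishing stage.

In the adversarial stage, adversarial samples are generated. The initial triplets are constructed as in [15]; for each sample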

Fig. 2 Schematic illustration of adversarial processes

| $\min \sum\limits_{(i,j,l) \in \mathcal{N}} d_M(x_i, \pi_{il}) + \alpha \sum\limits_{(i,j,l) \in \mathcal{N}} d_M(\pi_{il}, x_l)$ | (1) |

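where

In the distinguishing stage, the learned metric should separate the adversarial triplets as well as possible. The generated adversarial samples are substituted into the initial triplets that violate the constraint relation, and new triplets are generated dynamically by adjusting the parameter. In the new triplets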

| $ \begin{array}{l} \min\; (1 - \mu )\sum\limits_{i,j\sim i} d_M(x_i, x_j) + \mu \sum\limits_{i,j\sim i} \sum\limits_l (1 - y_{il})\,\xi_{ijl} \\ \;\;\;\;{\rm s.t.}\;\;\; d_M(x_i, \pi_{il}) - d_M(x_i, x_j) \geqslant 1 - \xi_{ijl},\;\; \xi_{ijl} \geqslant 0,\;\; M \geqslant 0 \end{array} $ | (2) |

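where the first term of the minimized loss is the neighbor loss and the second term is the triplet loss;

The model is a convex optimization problem and can be solved by gradient descent. From Eq. (1), the adversarial sample has the closed-form solution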

| $\pi_{il} = \frac{1}{\alpha + 1} x_i + \frac{\alpha}{\alpha + 1} x_l,\quad (i,j,l) \in \mathcal{N}$ | (3) |
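The closed form in Eq. (3) can be checked numerically. The sketch below assumes the squared Mahalanobis form $d_M(a, b) = (a - b)^{\rm T} M (a - b)$, under which the gradient of the single-triplet objective of Eq. (1), $2M(\pi - x_i) + 2\alpha M(\pi - x_l)$, vanishes at the point given by Eq. (3). The helper names and the random test data are hypothetical.

```python
import numpy as np

def d_M(a, b, M):
    """Squared Mahalanobis distance (assumed form)."""
    diff = a - b
    return float(diff @ M @ diff)

def adversarial_sample(x_i, x_l, alpha):
    """Eq. (3): a point on the segment between x_i and x_l."""
    return (x_i + alpha * x_l) / (alpha + 1.0)

rng = np.random.default_rng(0)
A = rng.normal(size=(3, 3))
M = A @ A.T + 0.1 * np.eye(3)        # random positive definite metric
x_i, x_l = rng.normal(size=3), rng.normal(size=3)
alpha = 2.0

pi_il = adversarial_sample(x_i, x_l, alpha)

def objective(p):
    """Single-triplet version of Eq. (1)."""
    return d_M(x_i, p, M) + alpha * d_M(p, x_l, M)

# Gradient of the objective at the closed-form point; it should be ~0.
grad = 2 * M @ (pi_il - x_i) + 2 * alpha * M @ (pi_il - x_l)

# Since x_i - pi_il = alpha / (alpha + 1) * (x_i - x_l), the distance from x_i
# to the adversarial sample is the original distance scaled by this factor.
shrink = (alpha / (alpha + 1.0)) ** 2
```

The same scaling factor $(\alpha/(\alpha+1))^2$ is the one that appears in the gradient of Eq. (5): a smaller $\alpha$ pulls the adversarial sample closer to $x_i$ and makes the triplet harder to separate.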

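The adversarial samples are substituted into the initial triplets that violate the constraints. The loss function of the distinguishing stage can then be written as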

| $\begin{split} L =& (1 - \mu )\sum\limits_{i,j \sim i} d_M(x_i, x_j) + \\ &\mu \sum\limits_{i,j \sim i} \sum\limits_l (1 - y_{il}) [1 + d_M(x_i, x_j) - d_M(x_i, \pi_{il})]_+ \end{split}$ | (4) |
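A direct evaluation of Eq. (4) might look like the sketch below. It assumes the squared Mahalanobis form for $d_M$, takes the target-neighbor pairs and the triplets as explicit index lists, and applies the adversarial sample of Eq. (3) to every listed triplet, so the list is assumed to contain only triplets whose impostor $x_l$ belongs to a different class (where $1 - y_{il} = 1$). The function name `astcml_loss` and the toy data are hypothetical.

```python
import numpy as np

def d_M(a, b, M):
    """Squared Mahalanobis distance (assumed form)."""
    diff = a - b
    return float(diff @ M @ diff)

def astcml_loss(X, pairs, triplets, M, alpha, mu):
    """Eq. (4): (1 - mu) * neighbor term + mu * hinge term on adversarial triplets.

    pairs    -- (i, j) target-neighbor pairs (j ~ i)
    triplets -- (i, j, l) with l from a different class, so (1 - y_il) = 1
    """
    pull = sum(d_M(X[i], X[j], M) for i, j in pairs)
    push = 0.0
    for i, j, l in triplets:
        pi_il = (X[i] + alpha * X[l]) / (alpha + 1.0)    # Eq. (3)
        margin = 1.0 + d_M(X[i], X[j], M) - d_M(X[i], pi_il, M)
        push += max(margin, 0.0)                          # [.]_+
    return (1.0 - mu) * pull + mu * push

X = np.array([[0.0, 0.0], [1.0, 0.0], [3.0, 0.0]])
M = np.eye(2)

# alpha = 0.5 places the adversarial sample at distance 1 from x_0: hinge active.
loss_hard = astcml_loss(X, [(0, 1)], [(0, 1, 2)], M, alpha=0.5, mu=0.5)
# alpha = 1.0 places it at the midpoint (1.5, 0): margin satisfied, hinge zero.
loss_easy = astcml_loss(X, [(0, 1)], [(0, 1, 2)], M, alpha=1.0, mu=0.5)
```

The two calls show how $\alpha$ controls the difficulty of the triplet: the same original samples produce an active hinge term only when the adversarial sample is moved close enough to the anchor.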

From Eq. (4) we obtain

| $\begin{split} \dfrac{\partial L}{\partial M} =& (1 - \mu )\sum\limits_{i,j \sim i} X_{ij} + \\ &\mu \sum\limits_{(i,j,l) \in \mathcal{J}} \left[ X_{ij} - \left( \left( \left( \dfrac{\alpha}{\alpha + 1} \right)^2 - 1 \right) [\xi_{ijl}^{\rm ori}]_+ + 1 \right) X_{il} \right] \end{split}$ | (5) |

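where

Algorithm 1 Metric learning algorithm with adversarial sample triplet constraints.

Input

Output

Initialization

Compute the adversarial samples by Eq. (3) and substitute them into the initial triplets.

Iterate:

1) Compute the gradient by Eq. (5)

2) Update the gradient

3)

4)

5) Until convergence.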
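Several steps of Algorithm 1 are incomplete in this text, so the following is only a sketch of one plausible reading, not the authors' procedure. It assumes that the update is a plain gradient step on $M$ followed by projection onto the positive semidefinite cone (to satisfy $M \geqslant 0$ in Eq. (2)), that $d_M$ is the squared Mahalanobis form, and that every listed triplet uses its adversarial sample, so an active hinge term contributes $X_{ij} - (\alpha/(\alpha+1))^2 X_{il}$. The step size `lr`, tolerance `tol`, and all function names are hypothetical.

```python
import numpy as np

def outer_diff(X, a, b):
    """X_ab = (x_a - x_b)(x_a - x_b)^T, so that d_M(x_a, x_b) = sum(M * X_ab)."""
    d = X[a] - X[b]
    return np.outer(d, d)

def psd_project(M):
    """Project a symmetric matrix onto the PSD cone by clipping negative eigenvalues."""
    w, V = np.linalg.eigh((M + M.T) / 2.0)
    return (V * np.clip(w, 0.0, None)) @ V.T

def loss_and_subgradient(X, pairs, triplets, M, alpha, mu):
    """Loss of Eq. (4) and one subgradient with respect to M."""
    c2 = (alpha / (alpha + 1.0)) ** 2    # d_M(x_i, pi_il) = c2 * d_M(x_i, x_l)
    loss, G = 0.0, np.zeros_like(M)
    for i, j in pairs:
        X_ij = outer_diff(X, i, j)
        loss += (1.0 - mu) * np.sum(M * X_ij)
        G += (1.0 - mu) * X_ij
    for i, j, l in triplets:
        X_ij, X_il = outer_diff(X, i, j), outer_diff(X, i, l)
        margin = 1.0 + np.sum(M * X_ij) - c2 * np.sum(M * X_il)
        if margin > 0.0:                 # only active hinge terms contribute
            loss += mu * margin
            G += mu * (X_ij - c2 * X_il)
    return loss, G

def fit_metric(X, pairs, triplets, alpha=1.0, mu=0.5, lr=0.01, tol=1e-6, max_iter=100):
    M = np.eye(X.shape[1])               # assumed initialization
    prev, G = loss_and_subgradient(X, pairs, triplets, M, alpha, mu)
    for _ in range(max_iter):
        M = psd_project(M - lr * G)      # gradient step, then enforce M >= 0
        cur, G = loss_and_subgradient(X, pairs, triplets, M, alpha, mu)
        if abs(prev - cur) < tol:        # stop when the loss change is below the threshold
            break
        prev = cur
    return M

X = np.array([[0.0, 0.0], [1.0, 0.0], [3.0, 0.0]])
pairs, triplets = [(0, 1)], [(0, 1, 2)]
M = fit_metric(X, pairs, triplets)
```

The eigenvalue clipping keeps every iterate a valid metric; with a fixed step size the loss of a hinge objective need not decrease monotonically, so a decaying step size may be preferable in practice.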

Suppose the number of samples is

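This section compares the proposed ASTCML algorithm with several representative algorithms on 12 data sets (listed in Table 1) and analyzes the parameter sensitivity and the convergence of the proposed algorithm.

3.1 Experimental data and design

The proposed ASTCML algorithm was compared with the K-nearest neighbor algorithm (KNN), ITML [12], GMML [13], LMNN [16], PFLMNN [18], RVML [21], LDMLT [24], and AML [25].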

Tab. 1 Data sets description

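In the experiments, the Corel_5k data set in Table 1 was first reduced in dimension by principal component analysis (PCA), retaining more than 95% of the information. All data sets except Satellite and Wilt were preprocessed and then split into 80% training data and 20% test data. Five-fold cross-validation was used: the training set was randomly divided into 5 parts that served in turn as the validation set, the results of the 5 runs were averaged, and the parameters achieving the highest classification accuracy on the validation set were then evaluated on the test set. The Satellite data set consists of 4,435 training samples and 2,000 test samples, and the Wilt data set consists of 4,339 training samples and 500 test samples. For these two data sets, the training set was preprocessed first, the normalization fitted on the training set was recorded and then applied to the test set, and the highest classification accuracy over all parameter settings was taken as the accuracy for that data set. The metric matrix is initialized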
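The two preprocessing ideas in this protocol, fitting the normalization on the training set only and keeping enough principal components to retain 95% of the information, can be sketched as follows. The text does not say which normalization was used, so min-max scaling is an assumption here, as is reading "information" as cumulative explained variance; all function names and the toy arrays are hypothetical.

```python
import numpy as np

def fit_minmax(X_train):
    """Record per-feature minimum and range on the training set only."""
    lo = X_train.min(axis=0)
    span = X_train.max(axis=0) - lo
    span[span == 0.0] = 1.0           # avoid division by zero for constant features
    return lo, span

def apply_minmax(X, lo, span):
    """Apply previously recorded statistics (test values may fall outside [0, 1])."""
    return (X - lo) / span

def pca_fit(X_train, keep=0.95):
    """Return (mean, components) with the fewest components whose
    cumulative explained variance reaches `keep`."""
    mean = X_train.mean(axis=0)
    _, s, Vt = np.linalg.svd(X_train - mean, full_matrices=False)
    ratio = np.cumsum(s ** 2) / np.sum(s ** 2)
    k = min(int(np.searchsorted(ratio, keep)) + 1, len(s))
    return mean, Vt[:k]

X_train = np.array([[0.0, 10.0], [2.0, 20.0], [4.0, 30.0]])
X_test = np.array([[1.0, 40.0]])

lo, span = fit_minmax(X_train)
X_train_scaled = apply_minmax(X_train, lo, span)
X_test_scaled = apply_minmax(X_test, lo, span)   # reuses training statistics

# Perfectly collinear toy data: one component already explains all variance.
mean, components = pca_fit(np.array([[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]))
X_reduced = (np.array([[3.0, 3.0]]) - mean) @ components.T
```

Reusing the training statistics on the test set keeps test information out of the preprocessing step, which is the point of recording the normalization before applying it.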

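The results are listed in Table 2, where bold marks the highest classification accuracy and underlining marks the second highest. On Corel_5k, AML takes relatively long to run and is excluded from the comparison. The results show that the proposed algorithm generally outperforms the representative metric learning methods. Compared with LDMLT, which constructs triplets dynamically, it achieves the second-highest accuracy on German and clearly higher accuracy on the other data sets. Compared with LMNN, its accuracy is at worst equal. Compared with AML, which is based on adversarial training, its accuracy is generally higher. These results suggest that, to some extent, learning to separate triplet constraints that are harder to distinguish improves performance.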

Tab. 2 Comparisons of classification accuracy

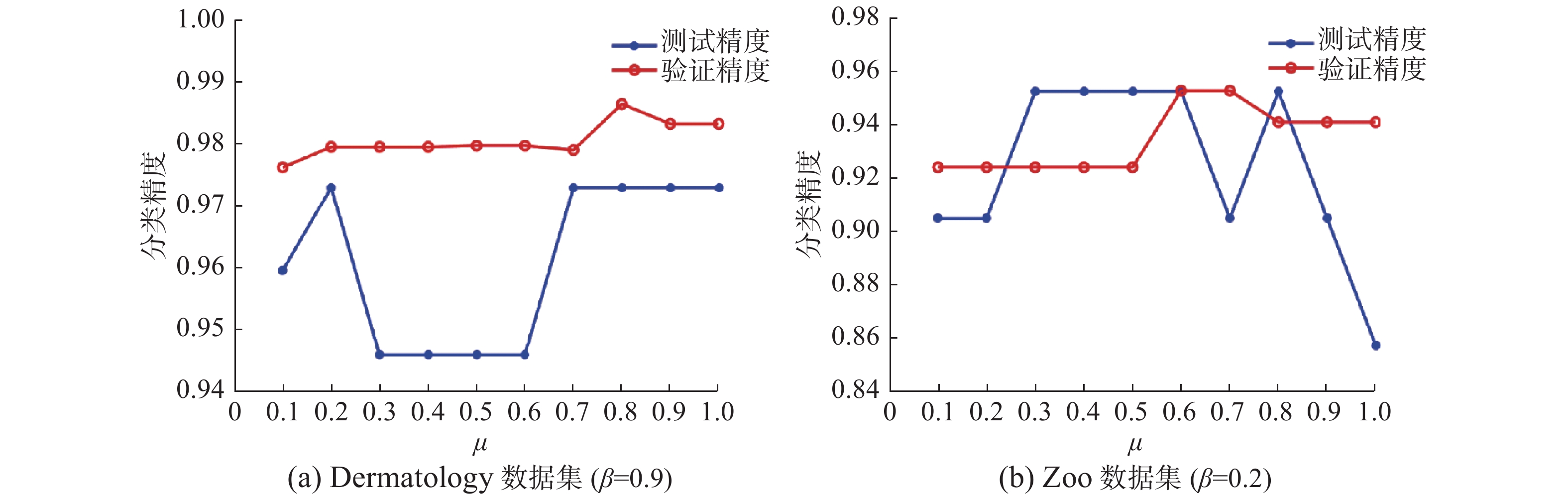

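In the proposed algorithm, the hyperparameters

During the iterative optimization of the metric learning algorithm with adversarial sample triplet constraints, the procedure stops when the difference between two consecutive loss values falls below a set threshold or the number of iterations exceeds the maximum. We analyze the convergence of the proposed algorithm through the change in loss over 100 iterations on the test sets of different data sets. As Fig. 5 shows, the loss decreases as the number of iterations increases, which indicates that the proposed algorithm converges.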

Fig. 3 Different

Fig. 4 Different

Fig. 5 Change of loss value on different data sets

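Borrowing the idea of adversarial training, this paper establishes an optimization model for learning adversarial samples, builds a metric learning model with adversarial sample triplet constraints, and proposes the corresponding metric learning algorithm. In theory, triplet constraints obtained through adversarial training better match the data, the model for learning adversarial samples is simple to solve, and classification accuracy can be improved. The experimental results verify the performance of the proposed algorithm. Although it improves on existing representative algorithms, it is rather sensitive to its parameters. Reducing this sensitivity is a problem worth further study.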

[1] QIAN Qi, JIN Rong, ZHU Shenghuo, et al. Fine-grained visual categorization via multi-stage metric learning[C]//2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Boston, USA, 2015: 3716–3724.

[2] GAO Yue, WANG Meng, JI Rongrong, et al. 3-D object retrieval with Hausdorff distance learning[J]. IEEE transactions on industrial electronics, 2014, 61(4): 2088–2098. DOI:10.1109/TIE.2013.2262760

[3] HOI S C H, LIU W, CHANG S F. Semi-supervised distance metric learning for collaborative image retrieval[C]//2008 IEEE Conference on Computer Vision and Pattern Recognition. Anchorage, AK, USA, 2008: 1–7.

[4] HOI S C H, LIU W, LYU M R, et al. Learning distance metrics with contextual constraints for image retrieval[C]//2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR'06). New York, NY, USA, 2006: 2072–2078.

[5] DONG Yanni, DU Bo, ZHANG Liangpei, et al. Hyperspectral target detection via adaptive information-theoretic metric learning with local constraints[J]. Remote sensing, 2018, 10(9): 1415. DOI:10.3390/rs10091415

[6] DONG Yanni, DU Bo, ZHANG Liangpei. Target detection based on random forest metric learning[J]. IEEE journal of selected topics in applied earth observations and remote sensing, 2015, 8(4): 1830–1838. DOI:10.1109/JSTARS.2015.2416255

[7] DONG Yanni, DU Bo, ZHANG Lefei, et al. Local decision maximum margin metric learning for hyperspectral target detection[C]//2015 IEEE International Geoscience and Remote Sensing Symposium (IGARSS). Milan, Italy, 2015: 397–400.

[8] HU Junlin, LU Jiwen, YUAN Junsong, et al. Large margin multi-metric learning for face and kinship verification in the wild[C]//12th Asian Conference on Computer Vision. Singapore, Singapore, 2015: 252–267.

[9] LU Rui, WU Kailun, DUAN Zhiyao, et al. Deep ranking: triplet MatchNet for music metric learning[C]//2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). New Orleans, USA, 2017: 121–125.

[10] XING E P, NG A Y, JORDAN M I, et al. Distance metric learning, with application to clustering with side-information[C]//Proceedings of the 15th International Conference on Neural Information Processing Systems. Cambridge, MA, USA, 2002: 521–528.

[11] YING Yiming, LI Peng. Distance metric learning with eigenvalue optimization[J]. The journal of machine learning research, 2012, 13(1): 1–26.

[12] DAVIS J V, KULIS B, JAIN P, et al. Information-theoretic metric learning[C]//Proceedings of the 24th International Conference on Machine Learning. Corvallis, Oregon, USA, 2007: 209–216.

[13] ZADEH P H, HOSSEINI R, SRA S. Geometric mean metric learning[C]//Proceedings of the 33rd International Conference on Machine Learning. New York, NY, USA, 2016: 2464–2471.

[14] OMARA I, ZHANG Hongzhi, WANG Faqiang, et al. Metric learning with dynamically generated pairwise constraints for ear recognition[J]. Information, 2018, 9(9): 215. DOI:10.3390/info9090215

[15] WEINBERGER K Q, BLITZER J, SAUL L K. Distance metric learning for large margin nearest neighbor classification[M]. WEISS Y, SCHÖLKOPF B, PLATT J. Advances in Neural Information Processing Systems. Cambridge, MA: MIT Press, 2006: 1473–1480.

[16] WEINBERGER K Q, SAUL L K. Distance metric learning for large margin nearest neighbor classification[J]. The journal of machine learning research, 2009, 10: 207–244.

[17] YANG Liu, YU Jian, JING Liping. An adaptive large interval nearest neighbor classification algorithm[J]. Journal of computer research and development, 2013, 50(11): 2269–2277. DOI:10.7544/issn1000-1239.2013.20120356

[18] SONG Kun, NIE Feiping, HAN Junwei, et al. Parameter free large margin nearest neighbor for distance metric learning[C]//The 31st AAAI Conference on Artificial Intelligence. San Francisco, USA, 2017: 2555–2561.

[19] LIU Meizhu, VEMURI B C. A robust and efficient doubly regularized metric learning approach[C]//12th European Conference on Computer Vision. Florence, Italy, 2012: 646–659.

[20] LE CAPITAINE H. Constraint selection in metric learning[J]. Knowledge-based systems, 2018, 146(15): 91–103.

[21] PERROT M, HABRARD A. Regressive virtual metric learning[C]//Advances in Neural Information Processing Systems. Montréal, Canada, 2015: 1810–1818.

[22] WANG Faqiang, ZUO Wangmeng, ZHANG Lei, et al. A kernel classification framework for metric learning[J]. IEEE transactions on neural networks and learning systems, 2015, 26(9): 1950–1962. DOI:10.1109/TNNLS.2014.2361142

[23] ZUO Wangmeng, WANG Faqiang, ZHANG D, et al. Distance metric learning via iterated support vector machines[J]. IEEE transactions on image processing, 2017, 26(10): 4937–4950. DOI:10.1109/TIP.2017.2725578

[24] MEI Jiangyuan, LIU Meizhu, KARIMI H R, et al. LogDet divergence-based metric learning with triplet constraints and its applications[J]. IEEE transactions on image processing, 2014, 23(11): 4920–4931. DOI:10.1109/TIP.2014.2359765

[25] CHEN Shuo, GONG Chen, YANG Jian, et al. Adversarial metric learning[C]//Proceedings of the Twenty-Seventh International Joint Conference on Artificial Intelligence. Stockholm, Sweden, 2018: 2021–2027.

2021, Vol. 16
