因时序逻辑语言与自然语言高度相似,具有强大的表达力,近年来,越来越多的学者开始做基于时序逻辑理论的路径规划研究[1-3]。文献[4]提出了基于计算树逻辑的智能体规划,文献[5-6]着重从单一的、全局的线性时序逻辑规范上来规划一组机器人的行为。文献[7]已经将一个线性时序逻辑片段作为车辆路径问题的一种规范语言。文献[8]在有时间限制的动态环境中进行线性时序逻辑约束下的路径规划研究。文献[9-10]认可了一种自上而下的线性时序逻辑规划方法,其中,机器人团队被给予一种全局性线性时序逻辑规范,并努力将任务公式分解为独立的局部线性时序逻辑规范,以至于可以根据局部规范单独处理每一个独立的机器人。文献[11]探讨了一种基于线性时序逻辑规范的自下而上的规划算法,提出了一种部分分散性的解决方案,即只是考虑具有相互依赖性的智能体的集群,不再去研究整个团队的智能体行为。

然而,尽管上述方法解决了时序逻辑综合问题,但不能处理依赖于时变参数(动态)的优化问题,此时,可通过在智能体规划上应用滚动时域方法来解决这个问题。其中,滚动时域控制是一种考虑整个固定时域范围的控制方法,对于每次由优化算法得到的最优控制序列,仅取序列中的第一个元素作为当前时刻的控制率。目前,滚动时域控制的应用几乎遍及各个领域[12-16]。同时,在滚动时域控制方法与满足时序逻辑公式的控制策略相结合的应用方面,文献[17]中提出了一种用于控制自动车辆的滚动时域控制方法,该方法必须满足在分区环境区域内所发生的服务请求的丰富任务规范。滚动时域控制也曾被用于文献[18-19]中,使一个单独智能体的线性时序逻辑规划达到一个局部最优行为。此外,文献[20-21]也通过应用滚动时域方法来规划智能体的运动,然而,作者没有考虑要优化任何回报的收集问题。

本文针对有限确定性系统中的路径规划问题,根据给定的回报动态假设和局部回报感知特点,开发出具有高级线性时序逻辑任务规范和局部最优回报收集的智能体运动规划的通用框架,将控制综合问题划分为更小的子问题,并以类似于滚动时域的方式逐渐获得可行的正确控制策略。其中,将智能体的运动环境抽象建模为有限且确定性的加权切换系统。同时,为了保证满足时序逻辑规范,从线性时序逻辑模型检测[22]中获得灵感,在切换系统和满足线性时序逻辑公式的

定义1 滚动时域控制

滚动时域控制的理论思路为:在每个时刻,当前状态的代价函数在有限时域内被优化,并在该时刻,仅取最优控制序列中的第一个控制信号实际作用到系统中,舍去后面各项。接着,在下一时刻,对于新的测量状态重复以上过程。这个过程随着时间的推进反复滚动进行。对于一个复杂环境下的路径规划问题,采用滚动时域策略,路径的每一小段都通过求解一个有限时域约束优化问题得到。因此,滚动时域控制方法能够极大地减少计算时间。

定义2 有限加权确定性切换系统

一个有限加权的确定性切换系统是一个元组

定义3 线性时序逻辑任务公式

线性时序逻辑(linear temporal logic, LTL)语句

定义4

一个

对于

定义5 加权Product自动机

给定一个加权确定性切换系统和一个

| ${S\!\!_P} = Q \times {S\!\!_B}{\text{;}}$ |

| ${S\!\!_{P0}} = {\rm{\{ }}{q_0}{\rm{\} }} \times {S\!\!_{B0}}{\text{;}}$ |

| ${\omega _P}((q,s),(q',s')) = \omega ((q,q')){\text{;}}$ |

其中,

| ${\gamma _\varGamma }({{p}}) = {{q}} = {q_0}{q_1}\cdots$ | (1) |

给定一个有限加权确定性切换系统

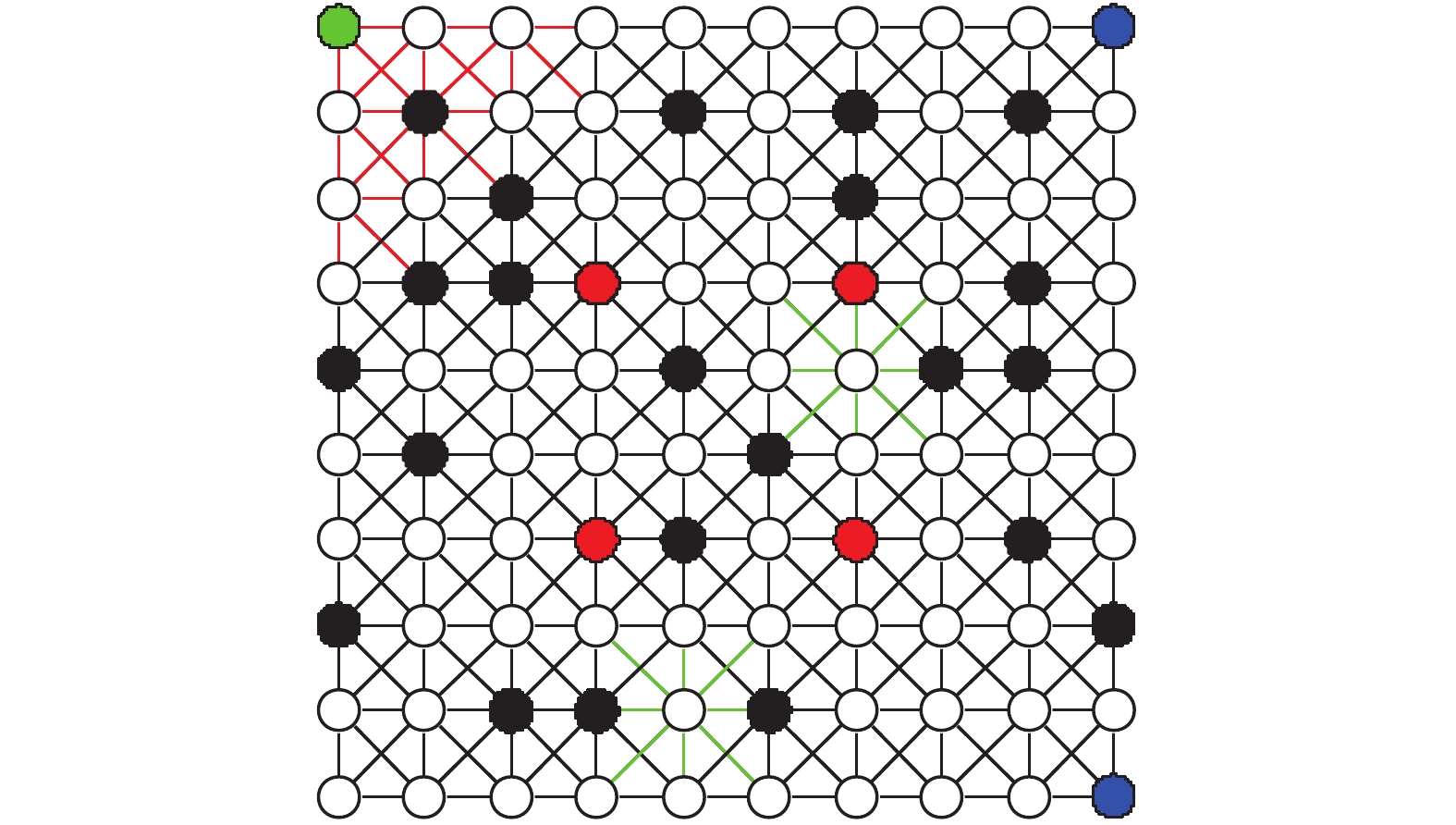

一个确定性加权切换系统的例子如图1所示。观测状态的集合为

|

Download:

|

| 图 1 给定的切换系统 Fig. 1 Given transition system | |

图1模拟了一个智能体在类似图形中运动的环境建模。在这个例子中,

此外,由于智能体的运行环境中各区域地势不可能完全相同,而不同的地势就会影响智能体的运行距离或速度,本文把在不同地势中影响智能体运动的因素称为地势影响因子,并用符号

在本例子中,设定图1切换系统网格中的红色线条区域、黑色线条区域、绿色线条区域分别为不同的地势区域,具有不同的地势影响因子。假设3个区域的地势影响因子

红色线条区域:

| $\rho {\rm{ = }}\left\{ \begin{gathered} 1.5,\;\;\;\;{\rm{10 < }}\omega (q,q') < 15 \\ 1.2,\;\;\;\;{\rm{ 0 < }}\omega (q,q') \leqslant 10{\rm{ }} \\ \end{gathered} \right.{\rm{ }}$ | (2) |

黑色线条区域:

| $\rho {\rm{ = }}\left\{ \begin{gathered} 1.2,\;\;\;\;{\rm{10 < }}\omega (q,q') < 15 \\ 1,\;\;\;\;{\rm{0 < }}\omega (q,q') \leqslant 10{\rm{ }} \\ \end{gathered} \right.{\rm{ }}$ | (3) |

绿色线条区域:

| $\rho {\rm{ = }}\left\{ \begin{gathered} 1.3,\;\;\;\;{\rm{10 < }}\omega (q,q') < 15 \\ 1.1,\;\;\;\;{\rm{0 < }}\omega (q,q') \leqslant 10{\rm{ }} \\ \end{gathered} \right.{\rm{ }}$ | (4) |

若在本例中,不顾及区域之间的地势差别而粗糙地描述智能体的工作环境,则将导致仿真中智能体判断最优路径时出现差错,使最终得到的仿真结果不切合实际情况,这也与智能体可以应用在各种复杂环境的目的相斥。若引入地势影响因子,则可以更精细地描述智能体运动的环境模型,使研究结果更符合实际,更真实接近智能体在实际情况下的最优路径判断。

2.2 线性时序逻辑公式ϕ将下面的LTL公式作为智能体的监视任务:

| $\begin{gathered} {\rm{ }} \phi : ={\rm{G}}\neg a \\ \wedge {\rm{GFb}} \\ {\rm{ }} \wedge {\rm{G(b}} \to {\rm{X}}\neg {\rm{bUc)}} \\ {\rm{ }} \wedge {\rm{G(c}} \to {\rm{X}}\neg {\rm{cUd}}){\rm{ }} \\ \end{gathered} $ | (5) |

假定本文描述的系统在具有回报的环境中操作,令在

算法1 回报值定义算法

1) 定义回报最大值为25

2) 定义回报最小值为10

3) 在

4) 在

根据上述定义算法,回报最大值设置为25,最小值设置为10。在

本文的目的是合成一个控制器,在满足时序逻辑任务的同时,使回报值以滚动时域的方式最大化。其中,本文提出的滚动时域控制策略的主要组成部分如下:在时刻

本文在Product自动机的状态上引入一个正值势函数

首先,令

其次,为一个有限路径

| $L({{p}}) = \sum\limits_{k = 1}^{n - 1} {\rho {\omega _P}} ({p_k},{p_{k + 1}})$ | (6) |

其中,地势影响因子

此外,定义从状态

| $d(p,p') = \left\{ \begin{array}{l} \mathop {\min }\limits_{{{p}} \;\in D(p,\;p')} L({{p}}) \;{\text{若}}\;D({p_i},{p_j}) \ne {\text{Ø}} \\ \infty\;\;\;\;\;\;\;{\text{若}}\;D({p_i},{p_j}) = {\text{Ø}} \end{array} \right.$ | (7) |

因为

定义6 P中一个状态的势函数

状态点的势函数

| $V(p) = \left\{ \begin{array}{l} \mathop {\min }\limits_{p' \in F_P^*} {\rm{ }}d(p,p')\;\;{\text{若}}p \notin F_P^*\\ 0\;\;{\text{若}}p \in F_P^*{\rm{ }} \end{array} \right.$ | (8) |

式中:

本文通过提出算法2来获得对应于任务公式

算法2 势函数计算算法

1) 构建

2) 构建Product自动机:

3) 对于所有

4) 设置

5) 若存在

6) 若

7) 对于所有

控制设计的核心组成部分是一个运行在Product自动机上的状态反馈控制器,该控制器在一定的约束条件下,在预定的固定时域内优化有限轨迹。同时,这些约束确保了Product自动机状态上的势函数在有限时间内减少,从而保证在满足LTL公式方面取得进展。

为了说明控制器的工作原理,首先,将

| $ {R_k}({{{p}}_k}) = \sum\limits_{i{\rm{ = }}1}^N {{R_k}({\gamma _\varGamma }({p_{i\;|k}}))} $ | (9) |

根据Product自动机和滚动时域控制的特点,可以将滚动时域控制器的设计分为两部分,即k = 0时刻和

在

| $\begin{array}{l} {{p}}_0^* = {{\mathop{\rm RH}\nolimits} ^0}({S_{P0}})\\ {\rm{ : = }}\mathop {{\rm{agrmax}}}\limits_{{p_0} \;\in {{P}}({p_0},N)} {R_0}({{{p}}_0}) \end{array}$ | (10) |

约束条件:

控制器从势函数有限的初始状态

| ${{p}}_k^* = \operatorname{RH} ({p_k},{{p}}_{k - 1}^{\rm{*}})$ | (11) |

即,它取决于当前状态

| $ {p_k} = p_{1|k - 1}^{\rm{*}},{\rm{ }}k = 1,2,\cdots $ | (12) |

为满足线性时序逻辑任务,

情况1

滚动时域控制器被定义如下:

| $ \begin{array}{l} {{p}}_k^* = {\mathop{\rm RH}\nolimits} ({p_k},{{p}}_{k - 1}^{\rm{*}})\\ {\rm{ : = }}\mathop {{\bf{argmax}}}\limits_{{{{p}}_k} \in {{P}}({p_k},N)} {R_k}({{{p}}_k}) \end{array} $ | (13) |

约束条件:

在此情况下,保证

情况2

若定义

| $\begin{array}{l} {{p}}_k^* = {\mathop{\rm RH}\nolimits} ({p_k},{{p}}_{k - 1}^{\rm{*}})\\ {\rm{ : = }}\mathop {{\bf{argmax}}}\limits_{{{{p}}_k} \in {{P}}({p_k},N)} {R_k}({{{p}}_k}) \end{array}$ | (14) |

约束条件:

其中,若先前的预测轨迹包含这种状态,则强制最优预测轨迹中的一个状态具有0值的势函数。

情况3

控制器定义如下:

| $\begin{array}{l} {{p}}_k^* = {\mathop{\rm RH}\nolimits} ({p_k},{{p}}_{k - 1}^{\rm{*}})\\ {\rm{ : = }}\mathop {{\bf{argmax}}}\limits_{{p_k} \in {{P}}({p_k},N)} {R_k}({{{p}}_k}){\rm{ }} \end{array}$ | (15) |

约束条件:

此种情况的终端约束为:终端状态的势函数数值大小是有限的。

4.2 控制算法切换系统

算法3 滚动时域控制算法

输入

输出 控制策略

离线初始化:

1) 构建对应于

| $B = {\rm{(}}{S_B},{S_{B0}},{2^\varPi }{\rm{,}}{\delta _B}{\rm{,}}{F_B}{\rm{)}};$ |

2) 构建Product自动机:

| $P = \varGamma \otimes B = {\rm{(}}{S_P},{S_{P0}},{\Delta _P},{\omega _P},{F_P}{\rm{)}};$ |

3) 计算所有状态

在线滚动时域控制

1) 若存在

2) 观测

3) 获得

4) 在Product自动机

5) 设置

6) 观测

7) 获得

8) 在Product自动机

9) 设置

10) 结束循环;

11) 否则,将无源于

12) 结束。

5 仿真结果分析将有限切换系统

在本案例研究中,选择时域

|

Download:

|

| 图 2 在滚动时域控制律下系统轨迹的截图 Fig. 2 Screenshots of the system trajectory under the receding horizon control law | |

在图2中,具有回报量的状态(回报感知状态)都用青色标出,青色圆圈的大小与相应状态点的回报值大小成正比。各子图的含义如下:

图2(a):在

图2(b):滚动时域控制器

图2(c):第一次切换

图2(d): 滚动时域控制器

同时,将仿真时间设置为100个时间步长,并将势函数与时间步长的关系以及回报值总和与时间步长的关系绘制在图3中。

在每个时刻上绘制了势函数

从图3可以看出回报值在每个离散时刻变化,收集到的回报量总和在稳步增长。其实,控制器通常选择满足终端约束,而不是选择增加势函数以便获得更多回报。因此,在这种情况下,对势函数的约束控制了局部回报量的最大化。

|

Download:

|

| 图 3 100个时间步长下的势函数和回报值总和 Fig. 3 Potential function and total reward for 100 time-step | |

此外,本文对未引入地势影响因子的情况进行了仿真,其中,将式(6)表述的路径长度函数重新定义为

| $ L({{p}}) = \sum\limits_{k = 1}^{n - 1} {{\omega _P}} ({p_k},{p_{k + 1}}) $ | (16) |

由于式(6)与式(8)所定义的势函数严格相关,而式(6)与式(16)存在差异,因此,未引入地势影响因子与引入地势影响因子的区别实质为势函数定义的差异。根据算法1和算法2,以及滚动时域控制算法3,通过MATLAB仿真,绘制了未引入地势影响因子情况下的势函数以及回报值总和与时间步长的关系,如图4所示。

|

Download:

|

| 图 4 未引入地势影响因子时100个时间步长下的势函数和回报值总和 Fig. 4 Potential function and total reward for 100 time-step without terrain influence factor | |

对比图3和图4的势函数仿真结果,可知,在两图中的每一相同时刻,状态点的势函数大小不同(当前状态到Product自动机接受状态的最大自我可达集合

然而,本文只是比较客观的加入了几个假设的地势影响因子来阐述不同地势对智能体在状态点之间运行距离的影响,所假设的因子数值大小与智能体的实际工作环境无关,即本文只是在一个模拟的复杂多变地势环境下,通过假设的地势影响因子来实现满足控制目标的路径规划。在实际状况下,便可通过人为经验获得地势影响因子的大小来准确规划每一时刻的最优路径以获得最大累积回报值。

6 结束语本文提出了一种线性时序逻辑约束下的在线实时求解滚动时域控制的新方法来解决有限确定性系统中的路径规划问题。该方法描述了保证无限轨迹满足给定线性时序逻辑公式的情况下,局部优化有限确定性系统轨迹的滚动时域控制框架,其优化标准被定义为最大化与系统状态相关联的时变回报值,同时,也开发了基于类能量函数定义的强化自动机接受条件的可证明正确的控制策略。

有关线性时序逻辑约束下的滚动时域控制问题,将来会有多个研究方向。例如,可以将所提出的框架扩展到有限概率系统,马尔可夫决策过程和部分可观测马尔可夫决策过程。还可以尝试将研究成果扩展到其他有限系统模型,如非确定性系统。

| [1] |

Fainekos G E, Girard A, Kress-Gazit H, et al. Temporal logic motion planning for dynamic robots[J]. Automatica, 2009, 45(2): 343-352. DOI:10.1016/j.automatica.2008.08.008 ( 0) 0)

|

| [2] |

Plaku E, Karaman S. Motion planning with temporal logic specifications: progress and challenges[J]. AI communications, 2015, 29(1): 151-162. DOI:10.3233/AIC-150682 ( 0) 0)

|

| [3] |

Saha S, Julius A A. Task and motion planning for manipulator arms with metric temporal logic specifications[J]. IEEE robotics and Automation letters, 2018, 3(1): 379-386. DOI:10.1109/LRA.2017.2755078 ( 0) 0)

|

| [4] |

Andersen M S, Jensen R S, Bak T, et al. Motion planning in multi-robot systems using timed automata[J]. Promoting health for working women, 2004, 37(8): 597-602. ( 0) 0)

|

| [5] |

Kloetzer M, Xuchu Ding, Belta C. Multi-robot deployment from LTL specifications with reduced communication[C]. The 50th IEEE conference on decision and control and European control conference. Orlando, USA, 2011: 4867–4872.

( 0) 0)

|

| [6] |

Shital S. Chiddarwar, N. Ramesh Babu. Multi-agent system for off-line coordinated motion planning of multiple industrial robots[J]. International journal of advanced robotic systems, 2011, 8(1): 102-112. ( 0) 0)

|

| [7] |

Karaman S, Frazzoli E. Vehicle routing with linear temporal logic specifications: applications to multi-UAV mission planning[J]. International journal of robust & Nonlinear control, 2011, 21(12): 1372-1395. ( 0) 0)

|

| [8] |

Zhou Y, Maity D, Baras J S. Optimal mission planner with timed temporal logic constraints[C]. IEEE 2015 European control conference. Linz, Austria, 2015: 759-764.

( 0) 0)

|

| [9] |

Chen Y, Ding X C, Stefanescu A, et al. Formal approach to the deployment of distributed robotic teams[J]. IEEE transactions on robotics, 2012, 28(1): 158-171. DOI:10.1109/TRO.2011.2163434 ( 0) 0)

|

| [10] |

Ulusoy A, Smith S L, Ding X C, et al. Optimality and robustness in multi-robot path planning with temporal logic constraints[J]. International journal of robotics research, 2013, 32(8): 889-911. DOI:10.1177/0278364913487931 ( 0) 0)

|

| [11] |

Guo M, Dimarogonas D V. Multi-agent plan reconfiguration under local LTL specifications[J]. International journal of robotics research, 2015, 34(2): 218-235. DOI:10.1177/0278364914546174 ( 0) 0)

|

| [12] |

Dunbar W B, Caveney D S. Distributed receding horizon control of vehicle platoons: stability and string stability[J]. IEEE transactions on automatic control, 2012, 57(3): 620-633. DOI:10.1109/TAC.2011.2159651 ( 0) 0)

|

| [13] |

李佩杰, 林颂晨, 白晓清, 等. 计及配电网三相模型的电动汽车充电滚动时域控制[J]. 中国电机工程学报, 2016, 36(17): 4533-4543. LI Peijie, LIN Songchen, BAI Xiaoqing, et al. Receding-horizon-control-based charging method of electric vehicle considering three-phase model of distribution network[J]. Proceedings of the CSEE, 2016, 36(17): 4533-4543. (  0) 0)

|

| [14] |

Grema A S, Cao Y. Receding horizon control for oil reservoir waterflooding process[J]. Systems science & Control engineering an open access journal, 2017, 5(1): 449-461. ( 0) 0)

|

| [15] |

王文彬, 秦小林, 张力戈, 等. 基于滚动时域的无人机动态航迹规划[J]. 智能系统学报, 2018, 13(04): 524-533. WANG Wenbin, QIN Xiaolin, ZHANG Lige, et al. Dynamic UAV trajectory planning based on receding horizon[J]. CAAI transactions on intelligent systems, 2018, 13(04): 524-533. (  0) 0)

|

| [16] |

段海滨, 杨之元. 基于柯西变异鸽群优化的大型民用飞机滚动时域控制[J]. 中国科学: 技术科学, 2018, 48(03): 277-288. DUAN Haibin, YANG Zhiyuan. Large civil aircraft receding horizon control based on cauthy mutation pigeon inspired optimization[J]. Scientia Sinica technologica, 2018, 48(03): 277-288. (  0) 0)

|

| [17] |

Ulusoy A, Belta C. Receding horizon temporal logic control in dynamic environments[J]. The international journal of robotics research, 2014, 33(12): 1593-1607. DOI:10.1177/0278364914537008 ( 0) 0)

|

| [18] |

Ding X C, Belta C, Cassandras C G. Receding horizon surveillance with temporal logic specifications[C]. The 49th IEEE conference on decision and control. Atlanta, USA, 2010: 256–261.

( 0) 0)

|

| [19] |

Tumova J, Dimarogonas D V. A Receding horizon approach to multi-agent planning from local LTL specifications[C]. 2014 American control conference. Portland, USA, 2014: 1775–1780.

( 0) 0)

|

| [20] |

Wongpiromsarn T, Topcu U, Murray R M. Receding horizon temporal logic planning for dynamical systems[C]. Proceedings of the 48th IEEE conference on decision and control. Shanghai, China, 2009, 57 (11): 5997–6004.

( 0) 0)

|

| [21] |

Wongpiromsarn, Topcu, Ufuk, et al. Receding horizon control for temporal logic Specifications[C]. Proceedings of the 13th ACM international conference on hybrid systems. Stockholm, Sweden, 2010: 101–110.

( 0) 0)

|

| [22] |

朱创营, 常亮, 徐周波, 等. 含有合取查询的时态描述逻辑ALC-LTL模型检测[J]. 智能系统学报, 2014, 9(06): 714-722. ZHU Chuangying, CHANG Liang, XU Zhoubo, et al. Research on the model checking of temporal description logic ALC-LTL containing conjunctive query[J]. CAAI transactions on intelligent systems, 2014, 9(06): 714-722. (  0) 0)

|

| [23] |

郭建, 边明明, 韩俊岗. LTL公式到自动机的转换[J]. 计算机科学, 2014, 9(06): 714-722. GUO Jian, BIAN Mingming, HAN Jungang. Translation from LTL formula into automata[J]. Computer science, 2014, 9(06): 714-722. (  0) 0)

|

2020, Vol. 15

2020, Vol. 15