2. Smart City College, Beijing Union University, Beijing 100101, China

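Unlike English and other languages written in Latin script, Chinese text has no explicit word boundaries, which makes word segmentation considerably more challenging. As Chinese is used ever more widely, a number of researchers and teams have studied Chinese word segmentation and produced segmentation algorithms and software (e.g., jieba) [1-8]. However, with the rapid development of information technology, and of the Internet in particular, large numbers of new Internet words keep emerging, and conventional segmentation algorithms perform worse on them. Designing effective Chinese word segmentation methods therefore remains an important research problem in natural language processing.

Traditional Chinese word segmentation methods fall into three categories: dictionary-based, statistics-based, and character-based (word-formation-based) methods. Dictionary-based methods scan the text in a certain order and match it against a segmentation dictionary according to certain rules, such as forward maximum matching or backward maximum matching [3-4]. These methods are simple, easy to implement, and usually efficient, but they suffer from segmentation ambiguity and their performance depends on the dictionary. Statistics-based methods first enumerate all possible segmentations of the input string, then use a statistical language model (e.g., an n-gram model) to compute the probability of each candidate and select the most probable one as the result [5-7]. These methods do not rely on a dictionary and generalize better, but they require a large-scale corpus for training, and their performance depends on the choice of corpus. Character-based methods treat Chinese word segmentation as a character tagging (sequence labeling) problem and train a machine learning model, such as a maximum entropy model, to produce the segmentation [8-10]. These methods improve on the first two categories, but cases that do not occur in the training corpus are usually ignored entirely, and training is prone to overfitting, which can result in a locally optimal solution.

Traditional methods perform worse on Internet text such as microblogs, mainly because Internet language is free in form, nonstandard, and diverse in content, which makes it hard for traditional algorithms to extract sufficient features, especially the hidden contextual information of words. Moreover, newly coined Internet words vary more than conventional vocabulary [11]. All of these factors make Chinese word segmentation difficult.

To overcome these problems, this paper proposes a Chinese word segmentation scheme based on a deep neural network model [12]. Compared with conventional models such as the recurrent neural network (RNN), the long short-term memory (LSTM) network better mitigates problems such as vanishing gradients during training [13] and can therefore capture the context of a text more effectively. The encoder-decoder model (EDM) is an important model in natural language processing. This paper trains a segmentation model with an LSTM-based EDM and then uses the trained model for Chinese word segmentation. To further improve performance, a word-vector-based correction method is proposed to correct the segmentation results of this model.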
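To illustrate the dictionary-based approach mentioned above, the following is a minimal Python sketch of forward maximum matching. The toy lexicon, the function name `forward_max_match`, and the maximum word length of 4 are illustrative assumptions, not part of any cited system. The example also shows the ambiguity problem: the string 研究生命起源 ("study the origin of life") is cut as 研究生/命/起源 because the longer entry 研究生 ("graduate student") is matched first.

```python
def forward_max_match(text: str, lexicon: set, max_len: int = 4) -> list:
    """Segment text by greedy forward maximum matching against a lexicon.

    At each position the longest lexicon entry (up to max_len characters)
    is taken; if none matches, a single character is emitted.
    """
    words = []
    i = 0
    while i < len(text):
        # Try candidate lengths from longest to shortest.
        for size in range(min(max_len, len(text) - i), 0, -1):
            candidate = text[i:i + size]
            if size == 1 or candidate in lexicon:
                words.append(candidate)
                i += size
                break
    return words


if __name__ == "__main__":
    lexicon = {"研究", "研究生", "生命", "起源"}
    print("/".join(forward_max_match("研究生命起源", lexicon)))  # 研究生/命/起源
```

Backward maximum matching is the mirror image (scan from the end of the string), and comparing the two outputs is a common cheap way to detect ambiguous spans.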

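1 LSTM-based EDM model

1.1 LSTM model

LSTM is a recurrent neural network model proposed to solve the vanishing gradient problem of the conventional RNN [13]. Compared with the conventional RNN structure, an LSTM network introduces a memory cell C and three gates, namely the input gate (i), the forget gate (f), and the output gate (o), which control which information is passed on and which is discarded, so that historical information is handled better.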
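The gate equations are not spelled out in the text, so the minimal pure-Python sketch below assumes the commonly used LSTM formulation with a forget gate: $i_t, f_t, o_t = \sigma(W[h_{t-1}, x_t] + b)$ with separate weights per gate, candidate memory $\tilde{C}_t = \tanh(W_C[h_{t-1}, x_t] + b_C)$, $C_t = f_t \odot C_{t-1} + i_t \odot \tilde{C}_t$, and $h_t = o_t \odot \tanh(C_t)$. The parameter layout (a `params` dict keyed "i", "f", "o", "c") and the random initialization are illustrative assumptions.

```python
import math
import random


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def affine(W, b, v):
    """Return W v + b, where W is a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + bias for row, bias in zip(W, b)]


def lstm_step(x, h_prev, c_prev, params):
    """One LSTM time step; returns the new output h_t and memory cell C_t.

    params maps "i", "f", "o" (gates) and "c" (candidate memory) to (W, b),
    where each W has shape hidden x (hidden + input) and acts on [h_prev, x].
    """
    z = h_prev + x  # list concatenation [h_{t-1}, x_t]
    i = [sigmoid(v) for v in affine(*params["i"], z)]        # input gate
    f = [sigmoid(v) for v in affine(*params["f"], z)]        # forget gate
    o = [sigmoid(v) for v in affine(*params["o"], z)]        # output gate
    c_hat = [math.tanh(v) for v in affine(*params["c"], z)]  # candidate memory
    # Keep part of the old memory and write part of the candidate.
    c = [ft * cp + it * ch for ft, cp, it, ch in zip(f, c_prev, i, c_hat)]
    h = [ot * math.tanh(ct) for ot, ct in zip(o, c)]
    return h, c


def init_params(input_size, hidden_size, seed=0):
    """Small random weights and zero biases (an arbitrary illustrative choice)."""
    rng = random.Random(seed)

    def matrix():
        return [[rng.uniform(-0.1, 0.1) for _ in range(hidden_size + input_size)]
                for _ in range(hidden_size)]

    return {g: (matrix(), [0.0] * hidden_size) for g in ("i", "f", "o", "c")}


if __name__ == "__main__":
    params = init_params(input_size=3, hidden_size=2)
    h, c = [0.0, 0.0], [0.0, 0.0]
    for x in ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]):  # two one-hot "characters"
        h, c = lstm_step(x, h, c, params)
    print(h, c)
```

The additive update of $C_t$ (rather than repeated multiplication by a weight matrix, as in a plain RNN) is what lets gradients pass through many time steps.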

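In Chinese word segmentation, both the characters before and the characters after the current character help determine its label. This paper therefore uses a bidirectional LSTM: the text is fed in the forward and in the reverse direction, the two passes produce the outputs $h_t$ and $h'_t$, respectively, in the manner described above, and the two outputs are concatenated as $h = [h_t, h'_t]$.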
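The step that is easiest to get wrong in a bidirectional network is aligning the two directions, so the sketch below shows only that part: the backward pass runs over the reversed sequence, and its states are reversed again so that $h'_t$ summarizes positions t to the end and lines up with $h_t$. The function names are hypothetical, and the running-sum `step` in the usage example is a stand-in for an LSTM cell; in practice each direction has its own LSTM parameters.

```python
from typing import Callable, List

Vector = List[float]
Step = Callable[[Vector, Vector], Vector]  # (x_t, h_prev) -> h_t


def run_direction(step: Step, xs: List[Vector], h0: Vector) -> List[Vector]:
    """Apply a recurrent step left to right and collect the state at every position."""
    states: List[Vector] = []
    h = h0
    for x in xs:
        h = step(x, h)
        states.append(h)
    return states


def bidirectional(step_fwd: Step, step_bwd: Step,
                  xs: List[Vector], h0: Vector) -> List[Vector]:
    """Return h = [h_t, h'_t] for every position t."""
    forward = run_direction(step_fwd, xs, h0)
    # Run over the reversed input, then reverse the states back so that
    # backward[t] summarizes positions t..end and lines up with forward[t].
    backward = run_direction(step_bwd, xs[::-1], h0)[::-1]
    return [hf + hb for hf, hb in zip(forward, backward)]  # list concatenation


if __name__ == "__main__":
    def running_sum(x, h):
        # Hypothetical stand-in for an LSTM cell on 1-d inputs.
        return [h[0] + x[0]]

    print(bidirectional(running_sum, running_sum, [[1.0], [2.0], [3.0]], [0.0]))
    # [[1.0, 6.0], [3.0, 5.0], [6.0, 3.0]]
```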

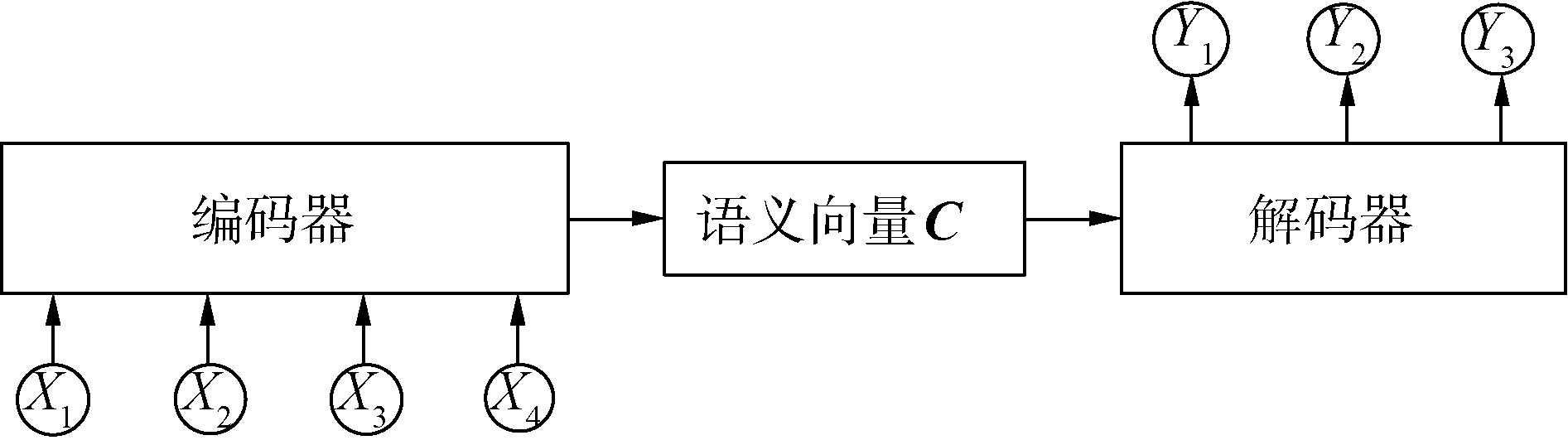

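1.2 LSTM-based EDM

The encoder-decoder model (EDM) is a basic model in machine translation [14]; its principle is shown in Fig. 1. As Fig. 1 shows, the EDM uses two neural networks (in this paper, both are LSTM networks). The first acts as an encoder that encodes an input symbol sequence into a fixed-length vector C representing the sequence; the second acts as a decoder that decodes this fixed-length vector into an output symbol sequence.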

Fig. 1 Encoder-decoder model

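Let $X=[X_1, X_2, \cdots, X_m]$ and $Y=[Y_1, Y_2, \cdots, Y_m]$ be the input and output symbol sequences, respectively. The encoder maps X through a nonlinear transformation f into a semantic vector C:

| $\mathit{\boldsymbol{C}} = f\left( {{X_1}, {X_2}, \cdots , {X_m}} \right) $ | (1) |

The decoder estimates the symbol $Y_t$ at the current time step from the semantic vector C and the previously generated symbols $Y_1, Y_2, \cdots, Y_{t-1}$:

| ${Y_t} = g\left( {\mathit{\boldsymbol{C}}, {Y_1}, {Y_2}, \cdots , {Y_{t - 1}}} \right) $ | (2) |

The function g is chosen so that, given C and $Y_1, Y_2, \cdots, Y_{t-1}$, the probability of the output symbol $Y_t$ is maximized. The two neural networks are trained jointly, and the output symbol sequence Y is then obtained.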
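To make the roles of Eqs. (1) and (2) concrete, the minimal sketch below separates the two stages: `encode` folds the whole input into one vector C (the final recurrent state), and `greedy_decode` picks, at each step, the most probable symbol given only C and the symbols already emitted. The running-sum encoder step and the B/M/E/S rule `toy_next_probs` are hypothetical stand-ins for the two trained LSTM networks, and greedy argmax decoding is one simple way to approximate the maximization described above, not necessarily the paper's decoding procedure. For segmentation as tagging, the output length equals the input length m.

```python
from typing import Callable, Dict, List, Sequence

Vector = List[float]


def encode(xs: Sequence[Vector], step: Callable[[Vector, Vector], Vector],
           h0: Vector) -> Vector:
    """Eq. (1): fold the whole input sequence into one fixed-length vector C."""
    h = h0
    for x in xs:
        h = step(x, h)
    return h  # only the final state is passed on to the decoder


def greedy_decode(C: Vector, length: int,
                  next_probs: Callable[[Vector, List[str]], Dict[str, float]]
                  ) -> List[str]:
    """Eq. (2): at each step pick the symbol maximizing P(Y_t | C, Y_1..Y_{t-1})."""
    ys: List[str] = []
    for _ in range(length):
        probs = next_probs(C, ys)
        ys.append(max(probs, key=probs.get))
    return ys


def toy_next_probs(C: Vector, history: List[str]) -> Dict[str, float]:
    """Hypothetical stand-in for the decoder network g over B/M/E/S tags."""
    if history and history[-1] == "B":
        return {"B": 0.1, "M": 0.2, "E": 0.6, "S": 0.1}  # close the open word
    probs = {"B": 0.1, "M": 0.1, "E": 0.1, "S": 0.1}
    probs["B" if C[0] > 0 else "S"] = 0.7  # C decides how words start
    return probs


if __name__ == "__main__":
    def running_sum(x, h):
        return [h[0] + x[0]]

    C = encode([[1.0], [2.0]], running_sum, [0.0])
    print(C, greedy_decode(C, 4, toy_next_probs))  # [3.0] ['B', 'E', 'B', 'E']
```

Note that the decoder never sees an individual $X_t$, only C; this is the bottleneck discussed next.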

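The above analysis shows that, in the EDM, the only link between encoding and decoding is the fixed-length semantic vector C; that is, the encoder must compress all the information of the input sequence X into C. The drawback is that information from earlier inputs tends to be "overwritten" by later inputs during encoding, so the decoder cannot obtain sufficient information, which reduces decoding accuracy. To address this problem, Ref. [15] introduced the attention mechanism, as shown in Fig. 2.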

Fig. 2 Attention-based encoder-decoder model

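As Fig. 2 shows, the attention-based EDM does not encode all input information into a single fixed-length vector; instead, it computes a separate context vector $C_i$ for each decoding step, so that the decoder can make full use of the information carried by the input sequence.

Let $\{h_j\}$ be the encoder hidden states (one per input position) and $s_i$ the decoder state at step i. The encoding and decoding process of the attention-based EDM is as follows: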

| ${e_{ij}} = {\mathop{\rm score}\nolimits} \left( {{s_{i - 1}}, {h_j}} \right) $ | (3) |

| ${\alpha _{ij}} = \frac{{\exp \left( {{e_{ij}}} \right)}}{{\sum\limits_k {\exp } \left( {{e_{ik}}} \right)}} $ | (4) |

| ${C_i} = \sum\limits_j {{\alpha _{ij}}} {h_j} $ | (5) |

| ${s_i} = f\left( {{s_{i - 1}}, {y_1}, \cdots , {y_{i - 1}}, {C_i}} \right) $ | (6) |

| ${y_i} = g\left( {{y_1}, \cdots , {y_{i - 1}}, {s_i}, {C_i}} \right) $ | (7) |

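where score is a chosen alignment (scoring) function that measures how well $s_{i-1}$ matches $h_j$; Ref. [15], for example, uses a feed-forward network with a tanh activation.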
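The sketch below computes Eqs. (3)-(5) for one decoder step. For brevity it uses a dot-product score $e_{ij} = s_{i-1}^{\mathrm T} h_j$, which is a substitute for, not the same as, the additive tanh-based score of Ref. [15]; it assumes $s_{i-1}$ and $h_j$ have the same dimension, and any other `score` function with the same signature can be passed in. Subtracting the maximum score before exponentiating leaves the weights of Eq. (4) unchanged but avoids overflow.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    """Eq. (4); subtracting the maximum avoids overflow and leaves the result unchanged."""
    m = max(scores)
    exps = [math.exp(e - m) for e in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_context(s_prev: Vector, hs: List[Vector],
                      score: Callable[[Vector, Vector], float] = dot
                      ) -> Tuple[List[float], Vector]:
    """Return the weights alpha_ij and the context vector C_i for one decoder step."""
    e = [score(s_prev, h) for h in hs]  # Eq. (3): one score per encoder state h_j
    alpha = softmax(e)                  # Eq. (4)
    C = [sum(a * h[k] for a, h in zip(alpha, hs))  # Eq. (5): weighted sum of h_j
         for k in range(len(hs[0]))]
    return alpha, C


if __name__ == "__main__":
    hs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    alpha, C = attention_context([2.0, 0.0], hs)
    print([round(a, 3) for a in alpha], [round(c, 3) for c in C])
```

Because the weights $\alpha_{ij}$ are recomputed from $s_{i-1}$ at every step, each output position can draw on a different part of the input.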

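2 Word vector model

Word vectors represent the words of a vocabulary as vectors and are one of the basic tools of natural language processing [16]. The most widely used word vectors today are distributed representations (e.g., the CBOW model and the GloVe model), which map every word in the vocabulary to a fixed-length vector, forming a word vector space. Training word vectors with a chosen model yields a representation for every word in the vocabulary. This paper uses the GloVe model to train word vectors.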

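Let $w_i$ and $w_j$ be words in the vocabulary and $\boldsymbol{W}_i$ and $\boldsymbol{W}_j$ their word vectors. Let $X_{ij}$ be the number of times word $w_j$ occurs in the context of word $w_i$, and $X_i$ the total number of occurrences of all words in the context of $w_i$:

| ${X_i} = \sum\limits_j {{X_{ij}}} $ | (8) |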

The objective function is defined as

| $J(\boldsymbol{W})=\sum\limits_{i, j} f\left(X_{i j}\right)\left(\boldsymbol{W}_{i}^{\mathrm{T}} \boldsymbol{W}_{j}-\log_{2} X_{i j}\right)^{2} $ | (9) |

where W is the matrix formed by the word vectors, and the weighting function $f(X_{ij})$ is

| $f\left( {{X_{ij}}} \right) = \left\{ {\begin{array}{*{20}{l}} {{{\left( {{X_{ij}}/{X_{\max }}} \right)}^\alpha }, }&{{X_{ij}} < {X_{\max }}}\\ {1, }&{{X_{ij}} \ge {X_{\max }}} \end{array}} \right. $ | (10) |

where $X_{\max}$ and α are preset parameters; this paper uses $X_{\max}=10$ and α = 0.75. Minimizing the objective J(W) in Eq. (9) yields the word vector of each word.
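The minimal sketch below evaluates the weighting function of Eq. (10) and the objective of Eq. (9), taken here as $\sum_{i,j} f(X_{ij})(\boldsymbol{W}_i^{\mathrm T}\boldsymbol{W}_j - \log_2 X_{ij})^2$ over pairs with $X_{ij} > 0$ (pairs with $X_{ij} = 0$ contribute nothing because $f(0) = 0$). Like the equation in the text, it has no bias terms and no separate context vectors, unlike the original GloVe formulation; storing X as a sparse dictionary keyed by (i, j) is an assumption. Training would then minimize this loss with a gradient method.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def glove_weight(x: float, x_max: float = 10.0, alpha: float = 0.75) -> float:
    """Eq. (10): down-weight rare pairs and cap the weight of frequent pairs at 1."""
    return (x / x_max) ** alpha if x < x_max else 1.0


def glove_loss(cooc: Dict[Tuple[int, int], float], W: List[Vector]) -> float:
    """Weighted least-squares objective J(W), without bias terms.

    cooc holds the nonzero co-occurrence counts X_ij keyed by (i, j);
    W[i] is the word vector of word w_i.
    """
    loss = 0.0
    for (i, j), x in cooc.items():
        if x <= 0:
            continue  # log2(0) is undefined, and f(0) = 0 anyway
        inner = sum(a * b for a, b in zip(W[i], W[j]))
        loss += glove_weight(x) * (inner - math.log2(x)) ** 2
    return loss


if __name__ == "__main__":
    W = [[1.0, 0.0], [2.0, 0.0]]
    print(glove_loss({(0, 1): 16.0}, W))  # f = 1, (2 - 4)^2 = 4.0
```

The cap at $X_{\max}$ keeps very frequent pairs (often function words) from dominating the loss.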

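3 Design and implementation of the segmentation scheme

3.1 Chinese word segmentation scheme

Some of the errors made by the LSTM-based EDM consist of wrongly splitting a word into single characters (e.g., segmenting "一带一路" (Belt and Road) as "一/带/一/路"). This paper therefore uses word vectors to correct the segmentation results of the LSTM-based EDM; Fig. 3 shows the flowchart of the proposed segmentation system. The basic principle of word-vector-based correction is as follows [17]: if words $w_i$ and $w_j$ frequently occur together, they are likely to form a new word $w_iw_j$.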

Fig. 3 The diagram of the proposed scheme for Chinese word segmentation

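In the word vector training objective of Eq. (9), minimizing J(W) drives $\boldsymbol{W}_i^{\mathrm T}\boldsymbol{W}_j$ toward $\log_2 X_{ij}$. Hence, if the above condition holds, the word vectors $\boldsymbol{W}_i$ and $\boldsymbol{W}_j$ of $w_i$ and $w_j$ tend to have a large inner product, and the cosine of the angle θ between them tends to be close to 1 (i.e., θ is small), where

| $\cos \theta=\frac{\left\langle\boldsymbol{W}_{i}, \boldsymbol{W}_{j}\right\rangle}{\left|\boldsymbol{W}_{i}\right|\left|\boldsymbol{W}_{j}\right|} $ | (11) |

where $\langle\boldsymbol{W}_i, \boldsymbol{W}_j\rangle$ denotes the inner product of $\boldsymbol{W}_i$ and $\boldsymbol{W}_j$, and $|\boldsymbol{W}|$ denotes the norm of the vector W.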

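Based on this principle, the segmentation output of the LSTM-based EDM can be corrected greedily as follows. Starting from the beginning of the sentence, compute the cosine of the angle between the word vector $\boldsymbol{W}_i$ of the current word $w_i$ and the word vector $\boldsymbol{W}_{i+1}$ of the next word $w_{i+1}$. If the cosine exceeds a preset threshold λ, merge $w_i$ and $w_{i+1}$ into the word $w_iw_{i+1}$, whose word vector $\boldsymbol{W}_{\rm out}$ is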

| ${\mathit{\boldsymbol{W}}_{{\rm{out}}}} = \frac{{{\mathit{\boldsymbol{W}}_i} + {\mathit{\boldsymbol{W}}_{i + 1}}}}{{\left| {{\mathit{\boldsymbol{W}}_i} + {\mathit{\boldsymbol{W}}_{i + 1}}} \right|}} $ | (12) |

This process is repeated until the end of the sentence is reached.
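A minimal sketch of the greedy correction of Eqs. (11) and (12) follows. Two details are assumptions because the text does not state them: after a merge, the merged word and its normalized vector $\boldsymbol{W}_{\rm out}$ become the current word, so that a run such as 一/带/一/路 can be merged step by step; and words without a trained vector are never merged. The function names and the two-dimensional vectors in the usage example are made up for illustration.

```python
import math
from typing import Dict, List, Optional

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Eq. (11); returns 0.0 if either vector has zero norm."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def correct_segmentation(words: List[str], vectors: Dict[str, Vector],
                         lam: float = 0.85) -> List[str]:
    """Greedily merge adjacent words whose vectors have cosine above lam (0 < lam < 1)."""
    if not words:
        return []
    out: List[str] = []
    cur: str = words[0]
    cur_vec: Optional[Vector] = vectors.get(cur)
    for nxt in words[1:]:
        nxt_vec = vectors.get(nxt)
        if cur_vec is not None and nxt_vec is not None and cosine(cur_vec, nxt_vec) > lam:
            summed = [a + b for a, b in zip(cur_vec, nxt_vec)]
            norm = math.sqrt(sum(x * x for x in summed))
            # Eq. (12): the merged word gets the normalized sum as its vector.
            cur, cur_vec = cur + nxt, [x / norm for x in summed]
        else:
            out.append(cur)
            cur, cur_vec = nxt, nxt_vec
    out.append(cur)
    return out


if __name__ == "__main__":
    # Made-up 2-d vectors, for illustration only.
    vecs = {"一": [1.0, 0.1], "带": [1.0, 0.0], "路": [0.9, 0.2], "好": [0.0, 1.0]}
    print(correct_segmentation(["一", "带", "一", "路", "好"], vecs))  # ['一带一路', '好']
```

Since the cosine must exceed a positive λ before merging, the summed vector can never be zero, so the normalization in Eq. (12) is always defined.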

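To evaluate the proposed Chinese word segmentation scheme, the Chinese word segmentation dataset of the NLPCC 2015 shared task [18] is used. The dataset consists of microblog text and contains 17 308 labeled samples. Each character is labeled with one of B (beginning of a word), M (middle of a word), E (end of a word), and S (single-character word). This paper selects 3 500 sentences longer than 3 characters as the test samples for the LSTM-based EDM segmentation model and uses the rest as its training samples. The dataset also contains 58 384 unlabeled background samples. For word vector correction, the background data are also segmented and then merged with the labeled data to train vectors for all words.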
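The B/M/E/S labels turn segmentation into per-character tagging. The sketch below converts a segmented sentence into tags and back; the function names are hypothetical, and treating a trailing B or M without a closing E as the end of a word is an assumption about how malformed tag sequences are handled.

```python
from typing import List


def words_to_bmes(words: List[str]) -> List[str]:
    """Map a segmented sentence to per-character B/M/E/S tags."""
    tags: List[str] = []
    for w in words:
        if not w:
            continue  # ignore empty tokens
        if len(w) == 1:
            tags.append("S")
        else:
            tags.extend(["B"] + ["M"] * (len(w) - 2) + ["E"])
    return tags


def bmes_to_words(chars: str, tags: List[str]) -> List[str]:
    """Inverse mapping: cut after every E or S tag."""
    words: List[str] = []
    start = 0
    for k, t in enumerate(tags):
        if t in ("E", "S"):
            words.append(chars[start:k + 1])
            start = k + 1
    if start < len(chars):  # tolerate an unterminated final word
        words.append(chars[start:])
    return words


if __name__ == "__main__":
    tags = words_to_bmes(["一带一路", "是", "倡议"])
    print(tags)  # ['B', 'M', 'M', 'E', 'S', 'B', 'E']
    print(bmes_to_words("一带一路是倡议", tags))  # ['一带一路', '是', '倡议']
```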

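3.2 Tests and analysis

To evaluate the proposed scheme quantitatively, precision P, recall R, and the F-measure are used as evaluation metrics. Let D be the total number of words in the reference segmentation of the text, $D_1$ the number of correctly segmented words, and $D_0$ the total number of words produced by the segmentation. The metrics are defined as

| $P = \frac{{{D_1}}}{{{D_0}}} \times 100\% $ | (13) |

| $R = \frac{{{D_1}}}{D} \times 100\% $ | (14) |

| $F = \frac{{P \times R}}{{(1 - \alpha) \times P + \alpha \times R}} $ | (15) |

where α = 0.5 [19], in which case F reduces to the harmonic mean 2PR/(P+R). In the experiments, the word vector dimension is 64, the LSTM state size is 64, and the LSTM model is trained for 40 iterations.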
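The following minimal sketch computes Eqs. (13)-(15) for one sentence. It counts a word as correctly segmented when its character span (start and end offsets) matches a span in the reference segmentation, which is the usual convention for segmentation scoring but is an assumption here; values are returned as fractions rather than percentages.

```python
from typing import List, Set, Tuple


def to_spans(words: List[str]) -> Set[Tuple[int, int]]:
    """Represent a segmentation by the (start, end) character offsets of its words."""
    spans, pos = set(), 0
    for w in words:
        spans.add((pos, pos + len(w)))
        pos += len(w)
    return spans


def prf(gold: List[str], pred: List[str], alpha: float = 0.5) -> Tuple[float, float, float]:
    """Eqs. (13)-(15) as fractions: D = len(gold), D0 = len(pred), D1 = matched words."""
    g, p = to_spans(gold), to_spans(pred)
    d1 = len(g & p)
    if d1 == 0:
        return 0.0, 0.0, 0.0  # also avoids dividing by zero for empty input
    P = d1 / len(p)
    R = d1 / len(g)
    F = P * R / ((1 - alpha) * P + alpha * R)
    return P, R, F


if __name__ == "__main__":
    gold = ["一带一路", "是", "倡议"]
    pred = ["一", "带", "一", "路", "是", "倡议"]
    print(prf(gold, pred))  # P = 2/6, R = 2/3, F = 4/9
```

Splitting 一带一路 into four single characters costs precision twice (four wrong words in $D_0$) and recall once (one missed word in D), which is exactly the error pattern the word vector correction targets.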

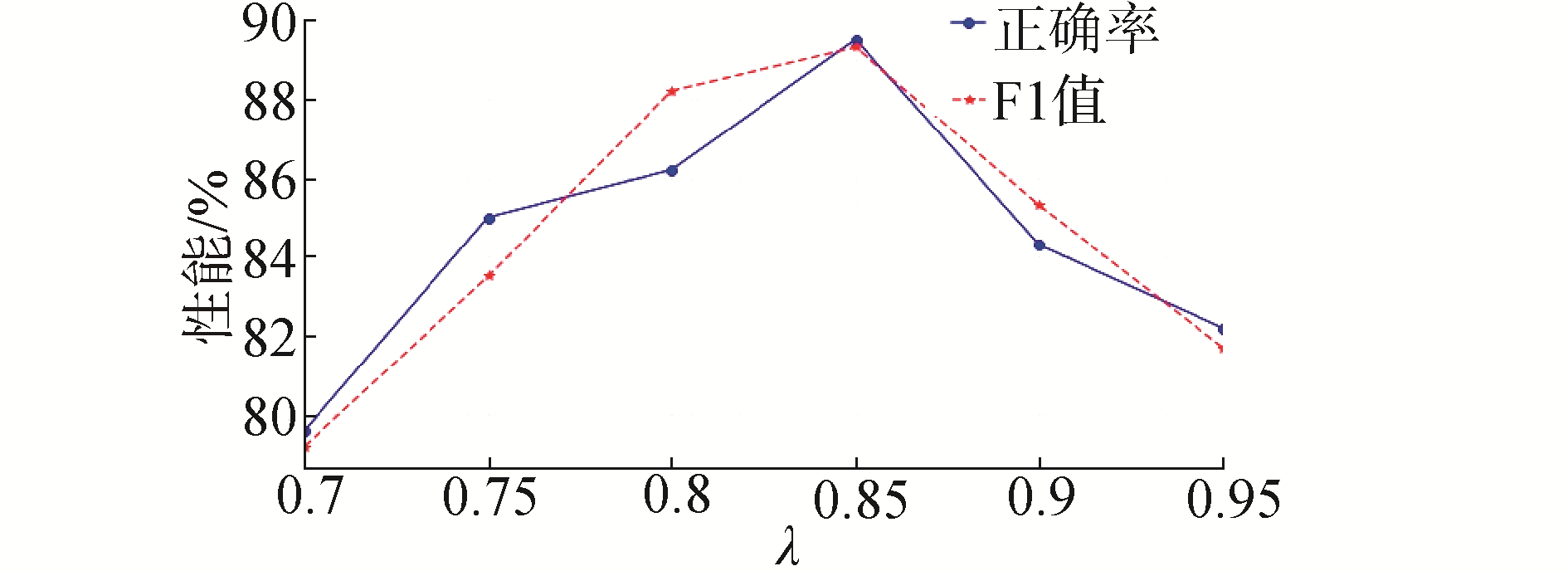

Fig. 4 shows the correction performance under different values of the threshold λ. The corrected performance is best when λ = 0.85, so this paper sets λ to 0.85.

Fig. 4 Correction performance under different thresholds λ

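Table 1 lists the performance of the proposed segmentation scheme. For comparison, it also gives the performance of the jieba segmentation software on the same dataset. In addition, Ref. [17] uses word vector correction to improve jieba's segmentation, so jieba followed by word vector correction is also evaluated, with the correction parameters set to the values given in Ref. [17].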

Table 1 Performance comparisons of different Chinese word segmentation schemes

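Table 1 shows that segmentation with the proposed LSTM-based EDM outperforms both jieba and jieba with correction. For example, the F value of the LSTM-based EDM scheme is 23.5% and 12.8% higher than those of jieba and corrected jieba, respectively. Moreover, correcting the LSTM-based EDM output with word vectors improves the precision and the F value by 0.42% and 0.13%, respectively, over the uncorrected output. These results indicate that the proposed scheme segments Internet text more effectively.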

Table 2 gives examples of the results produced by the proposed scheme on the test dataset.

Table 2 Examples of the segmentation results

4 Conclusions

1) The proposed LSTM-based EDM segmentation model performs substantially better than the conventional jieba segmentation software.

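2) After the proposed word vector correction, the segmentation precision and F value are slightly higher than without correction. These results verify the effectiveness of the proposed LSTM-based Chinese word segmentation scheme and of the word-vector-based correction method.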

[1] LUO Gang, ZHANG Zixian. Principles and technical implementation of natural language processing[M]. Beijing: Publishing House of Electronics Industry, 2016.

[2] HUANG Changning, ZHAO Hai. Chinese word segmentation: a decade review[J]. Journal of Chinese information processing, 2007, 21(3): 8-19. DOI:10.3969/j.issn.1003-0077.2007.03.002

[3] HUANG Changning. The problem of word segmentation in Chinese information processing[J]. Applied linguistics, 1997(1): 72-78.

[4] WU Andi, JIANG Zixin. Word segmentation in sentence analysis[C]//Proceedings of the 1998 International Conference on Chinese Information Processing. Beijing, 1998: 169-180.

[5] UTIYAMA M, ISAHARA H. A statistical model for domain-independent text segmentation[C]//Proceedings of the 39th Annual Meeting on Association for Computational Linguistics. Toulouse, France, 2001: 499-506.

[6] LOW J K, NG H T, GUO Wenyuan. A maximum entropy approach to Chinese word segmentation[C]//Proceedings of the 4th SIGHAN Workshop on Chinese Language Processing. Jeju Island, Korea, 2005: 161-164.

[7] ZHAO Hai, HUANG Changning, LI Mu. An improved Chinese word segmentation system with conditional random field[C]//Proceedings of the 5th SIGHAN Workshop on Chinese Language Processing. Sydney, 2006: 162-165.

[8] XUE Nianwen. Chinese word segmentation as character tagging[J]. Computational linguistics and Chinese language processing, 2003, 8(1): 29-48.

[9] TSENG H, CHANG Pichuan, ANDREW G, et al. A conditional random field word segmenter for SIGHAN bakeoff 2005[C]//Proceedings of the 4th SIGHAN Workshop on Chinese Language Processing, Association for Computational Linguistics. 2005: 168-171.

[10] CHANG Pichuan, GALLEY M, MANNING C D. Optimizing Chinese word segmentation for machine translation performance[C]//Proceedings of the 3rd Workshop on Statistical Machine Translation. Columbus, Ohio, 2008: 224-232.

[11] LIU Ying. Linguistic variation of netspeak: phenomenon, reasons and future developments[D]. Fuzhou: Fujian Normal University, 2012.

[12] HINTON G E, SALAKHUTDINOV R R. Reducing the dimensionality of data with neural networks[J]. Science, 2006, 313(5786): 504-507. DOI:10.1126/science.1127647

[13] HOCHREITER S, SCHMIDHUBER J. Long short-term memory[J]. Neural computation, 1997, 9(8): 1735-1780. DOI:10.1162/neco.1997.9.8.1735

[14] CHO K, VAN MERRIENBOER B, GÜLÇEHRE Ç, et al. Learning phrase representations using RNN encoder-decoder for statistical machine translation[C]//Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing. Doha, Qatar, 2014: 1724-1734.

[15] BAHDANAU D, CHO K, BENGIO Y. Neural machine translation by jointly learning to align and translate[C]//Proceedings of 2015 International Conference on Learning Representations. 2015: 1-15.

[16] LAI Siwei, LIU Kang, HE Shi, et al. How to generate a good word embedding?[J]. IEEE intelligent systems, 2016, 31(6): 5-14. DOI:10.1109/MIS.2016.45

[17] SHEN Xiangxiang, LI Xiaoyong. Improving Chinese word segmentation via unsupervised learning[J]. Journal of Chinese computer systems, 2017, 38(4): 744-748. DOI:10.3969/j.issn.1000-1220.2017.04.016

[18] QIU Xipeng, QIAN Peng, YIN Liusong, et al. Overview of the NLPCC 2015 shared task: Chinese word segmentation and POS tagging for micro-blog texts[C]//Proceedings of the 4th CCF Conference on Natural Language Processing and Chinese Computing. Nanchang, China, 2015: 541-549.

[19] MIKOLOV T, CHEN Kai, CORRADO G, et al. Efficient estimation of word representations in vector space[C]//Proceedings of Workshop at International Conference on Learning Representations. 2013: 1-12.

2019, Vol. 40