Many real-world optimization problems involve more than one goal to be optimized. This type of problems is known as multi-objective optimization problems (MOPs), and their solution involves the design of algorithms different from those adopted for dealing with single-objective optimization problems. For MOPs, in most cases, we can not find a single solution to optimize all the objectives at the same time. Therefore, we have to balance them and find a set of optimal tradeoffs, i.e., Pareto set in the decision space and Pareto front in the objective space, respectively. It seems to be natural to use evolutionary algorithms (EAs) for solving MOPs, because EAs can dealwith a group of candidate solutions, and obtain different compromising solutions in the population simultaneously at each run. In the last decade, a number of different EAs have been widely developed to solve MOPs, such as NSGA-Ⅱ[1], GDE[2], SPEA2[3], MOPSO[4], MOEP[5], MOEA/D[6], IPSO[7], etc. Estimation of distribution algorithms (EDAs)[8] are a class of novel evolutionary algorithm. They are based on the idea of building the probabilistic model of solution in the population, and sampling from it to generate new solution. It has been proven that EDAs has some special characteristics of concise concepts, good global searching ability. Many researchesin EDAs focus on different approaches to probabilistic modeling and sampling, such as directed graphical models[9], Markov networks[10], Copulas[11], etc. Recently, EDAs handling multi-objective optimizations have been proposed[12].

Most available multi-objective evolutionary algorithms utilize the concept of Pareto dominance to classify the solutions. However, as shown theoretically in [13] and empirically evidenced in[14], the proportion of Pareto optimal solutions in a non-dominated set grows with the number of objectives. Thus, these algorithms, based on Pareto dominance ranking procedure, become ineffective in sorting out the quality of solutions when the number of objectives increases. Favour ranking method was proposed by Drechsler in [15] and consists of a new relation called favour. It provides a way to designate some Pareto solutions superior to others when the size of the non-dominated solution set is very large. Cloud model is the innovation and an effective tool in uncertain transforming between qualitative concepts and their quantitative expressions[16]. In this paper, a multi-objective optimization algorithm using cloud model and favour ranking is proposed. The algorithm estimates three digital characteristics of individuals in the current population by the backward cloud generator and generates offsprings according to three digital characteristics by the forward cloud generator. And instead of Pareto dominance, favour ranking is used to identify the best individuals in order to guidethe search process. The population with the current population and current offsprings population is sorted using favour ranking, and the best individuals are selected to form the next population. The proposed algorithm is tested and compared with NSGA-II[1], GDE[2], SPEA2[3], MOPSO[4]and MOEP[5] using a set of benchmark functions. Convergence metric, diversity metric and maximum spread metric are used to evaluate the performance of thealgorithm. The experimental results show that the algorithm is effectiveon the benchmark functions.

1 Multi-objective Optimization ProblemThe multi-objective optimization problem can be formally defined as the problem of finding all x =(x1, x2, …, xn)which satisfy the p inequality constraints:

| $ {g_i}\left( \mathit{\boldsymbol{x}} \right) \le 0, i = 1, 2, \cdots, p. $ |

q equality constraints:

| $ {h_i}\left( \mathit{\boldsymbol{x}} \right) = 0, i = 1, 2, \cdots, q. $ |

and optimize M-dimensional vector function:

| $ \min \mathit{\boldsymbol{F}}\left( \mathit{\boldsymbol{x}} \right) = \left( {{f_1}\left( \mathit{\boldsymbol{x}} \right), {f_2}\left( \mathit{\boldsymbol{x}} \right), \cdots, {f_M}\left( \mathit{\boldsymbol{x}} \right)} \right). $ |

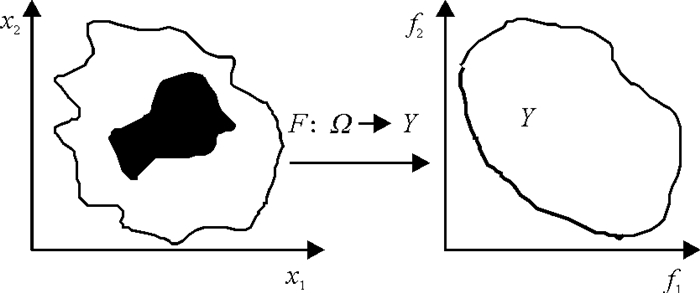

where x =(x1, x2, …, xn)is an n-dimensional decision variable vector. The constraints define the feasible region Ω and any vector x in the feasible region Ω is called a feasible solution. Y= {y|y=F(x), x∈Ω }is referred to as objective space.

When there are several objective functions, the concept of optimum changes, because in MOPs the purpose is to find "trade-off" solutions rather than a single solution. The concept of optimum commonly adopted in multi-objective optimization is Pareto dominance.

A feasible solution x1∈Ω is said to strictly dominate another feasible solution x2∈Ω, denoted by x1≤x2, if and only if (iff) ∀i∈{1, 2, …, M}: fi(x1)≤fi(x2) and ∃j∈{1, 2, …, k}: fj(x1) < fj(x2).

A feasible solution x∈Ω is said to be Pareto optimal with respect to Ω if there is no other feasible solution that dominates x in Ω. The set of all Pareto optimal solutions in the feasible region Ω is called Pareto optimal set and the corresponding set of objective vector is called Pareto optimal front. An illustrative example of a multi-objective minimization problem with two objectives, f1 and f2, that are plotted in the objective space ∏ mapped from the feasible region Ω is shown in Figure 1. The bold part in the feasible region Ω indicates the Pareto optimal set. The bold curve in the objective space ∏ indicates the Pareto front.

|

图 1 说明性示例 Figure 1 An illustrative example |

Cloud model is a conversion model with uncertainty between a quality concept which is expressed by natural language and its quantity number expression[16]. If U is a quantity domain expressed with accurate numbers, and C is a quality concept in U, if the quantity value, x∈U and x is a random realization of the quality concept C, μ(x) is the membership degree of x to C, μ(x)∈[0, 1], it is the random number which has the steady tendency:

| $ \mu :U \to \left[{0, 1} \right], \forall x \in U, x \to \mu \left( x \right). $ |

The distribution of x in domain is called cloud model, which is briefly called cloud, each x is called a cloud drop.The numerical characteristics of cloud model are expressed with Expectation Ex, Entropy En and Hyper-entropy He, and they reflect the whole characteristics of the quality conception C. Expectation Ex of the cloud drops, distribution in domain, is the point which can best represent the quality concept, reflect the cloud centre of gravity of cloud drops of the concept[16]. Entropy En is an uncertainty measurement of the qualitative concept. It not only represents fuzziness of the concept but also randomness and their relations. Also En represents the granularity of the concept. Generally, the larger En is, the higher abstraction the concept is.The hyper-entropy He is the uncertain measurement of entropy, namely the entropy of the entropy. It reflects the coagulation of uncertainty of all points which represents the concept in the number domain, namely the coagulation degree of cloud drop. The size of hyper-entropy reflects the discrete degree and thickness of cloud indirectly. The hyper-entropy is decided by the random and fuzziness of entropy together.

3 Scheme of AlgorithmThe proposed algorithm is designed based on EDA scheme and preference order. EDA estimates a probability distribution over the search space, and then samplesthe offspring individuals from this distribution. We use information obtained during optimization to estimate cloud model of good solution regions and use this cloud model to produce new solutions. As we saw in Section 1, the solution set of a problem with multiple objectives does not consist of a single solution. Instead, in multi-objective optimization, we aim to find Pareto optimal set. In our algorithm, the favour ranking is used for selecting the next population. The algorithm steps are as follows:

t=0, INITIALIZATION

(1) Generate initial population P(0)with size N;

(2) Estimate three digital characteristicsfrom P(0) by backward cloud generator;

(3) Generate P′(0)with size N according to three digital characteristics by forward cloud generator;

REPEAT

(1)S(t)←P(t)∪P′(t)

(2) Sort S(t)using favour ranking;

(3) Select N best individuals from S(t)to form P(t);

(4) Estimate three digital characteristicsfrom P(t)by backward cloud generator;

(5) Generate P′(t)with size N according to three digital characteristics by forward cloud generator;

(6) t←t+1

UNTIL end condition met

The population size N, M and the maximum number of iterations T are initialized according to the problem concerned. The individuals in P(0) are initialized randomly. The individuals in Q(0) are selected from P(0) according to preference order ranking. The individuals in P′(0) are generatedby forward cloud generator.

3.2 Favour rankingFavour ranking method was proposed by Drechsler[15] and consists of a new relation called favour. This technique requires no user interaction and can handle infeasible solutions.

| $ \begin{array}{l} x{ < _f}y \Leftrightarrow \\ \left| {i:{f_i}\left( x \right) < {f_i}\left( y \right), 1 \le i \le n} \right| > \left| {j:{f_j}\left( y \right) < {f_j}\left( x \right), 1 \le j \le n} \right|. \end{array} $ |

This means that x is favoured to y(x < fy) iff i components of x are better than the corresponding components of y and only j components of y are better than the corresponding components of x.

Also, in this model, the authors proposed that the solutions are divided into so called Satisfiability Classes (SCs) depending on their quality. Solutions of the same quality belong to the same SC. This property helps the mechanism in using a graph representation to describe properly the relation favour (< f), in which each element is a node and preferences are given by edges. The relation < f is not transitive, thus the relation < f can generate cycles in the graph, causing elements that describe a cycle to be denoted as not comparable and they are included in the same SC giving the same rank to each of the elements in that cycle. The graph that contains all the populations (G) needs to be a directed graph without internal cycles, so all the cycles have to be identified and replaced by a single node representing the single cycle.

Once we have the final directed graph (G), we know that (G) is acyclic, and it is possible to determine a level sorting of the nodes. For each node in G we define a SC. Level sorting of the nodes in G determines the ranking of the SCs; each level contains at least one node of G. Then each level corresponds to exactly one SC. Using the level sorting, it is possible to group nodes (sets of solutions) that are not connected by an edge in the same SC. These solutions are not comparable with respect to relation < f and thus they should be ranked in the same level of quality.

For the case n=2 it holds that < f and < d are equal, where < d is the Pareto dominance relation. So, when n=2, the favour relation is exactly the same as the Pareto dominance relation. Relation < f can handle infeasible solutions. When an element is infeasible, the element is considered as the worst possible value.

3.3 Estimate three digital characteristics by the backward cloud generatorBackward cloud generator is a conversion model which can convert quantity numbers into a quality concept. It can convert the accurate data (x1, x2, …, xn) with the membership degree (μ1, μ2, …, μn) into the quality cloud concept expressed by numerical characteristic (Ex, En, He). The algorithm of backward cloud generator can be summed as follows:

(1) According to the samples (x1, x2, …, xn), calculate mean value Ex=Mean(x1, x2, …, xn);

(2) According to the samples (x1, x2, …, xn), calculate standard deviation value En=Stdev(x1, x2, …, xn);

(3) calculate

(4) According to the En′i, calculate standard deviation value He=Stdev(En′1, En′2, …, En′n);

3.4 Generate individuals by the forward cloud generatorAccording to three digital characteristics values(Ex, En, He), the forward cloud generator can generate the cloud drops x, μ, where x is the quantity values, μ is the membership degree of x. The algorithm of the forward cloud generator can be summed as follows:

(1) Generate a normally distributed random number En′ with mean En and standard deviation He;

(2) Generate a normally distributed random number x, with mean Ex and standard deviation absolute value of En′;

(3) Calculate

(4) μ is certain degree of x, belongs to the qualitative concept, and the cloud drop (x, μ) reflects the whole contents of this qualitative quantitative transform;

(5) Repeat step (1)-(4) until N cloud drops are generated.

4 Experimental ResultsThe performance of the proposed algorithm is compared with that of some multi-objective optimization algorithms. Three different quantitative performance metrics for multi-objective optimization are employed. These metrics are capable of evaluating non-dominated individuals in several nontrivial aspects and have been widely used in the studies of multi-objective evolutionary algorithms.

(1) Diversity metric Δ was proposed by Deb[1]. It measures the extent of spread achieved among the obtained solutions and is defined as

(2) Maximum spread metric χ [17]: The metric of maximum spread measurement is defined as

| $ \chi = \sqrt {\frac{1}{M}\sum\limits_{i = 1}^M {{{\left( {\frac{{\min \left( {f_i^{\max }, F_i^{\max }} \right)-\max \left( {f_i^{\min }, F_i^{\min }} \right)}}{{F_i^{\max }-F_i^{\min }}}} \right)}^2}} }, $ |

where m is the number of objectives, fimax and fimin are the maximum and minimum of the ith objective in the non-dominated solution found so far; the Fimax and Fimin are the maximum and minimum of the ith objective in Pareto optimal set.

(3) Convergence metric γ was introduced by Van Veldhuizen and Lamont[18]. It is a way to estimate the Euclidean distance between the member in the non-dominated solution found so far and its nearest member in the Pareto optimal set and is defined as

| $ \gamma = \frac{{\sqrt {\sum\limits_{i = 1}^L {{d_i}} } }}{L}, $ |

where L is the number of non-dominated solutions found by the algorithm being analyzed and di is the minimum Euclidean distance (measured in the objective space) between the ith solution of NF and the solutions in PF.

The smaller the value of convergence metric γ and diversity metric Δ is, the better the performance of the algorithm is. The best value of maximum spread metric χ should be approximate to one.

Some of the following well-knownbenchmark functions were taken from the specialized literature [19] to compare our approach.

(1) T1:f1(x)=x1,

| $ \begin{array}{l} {f_2}\left( \mathit{\boldsymbol{x}} \right) = g\left( \mathit{\boldsymbol{x}} \right)\left( {1- \sqrt {\frac{{{x_1}}}{{g\left( \mathit{\boldsymbol{x}} \right)}}} } \right), \\ g\left( \mathit{\boldsymbol{x}} \right) = 1 + 9\frac{{\left( {\sum\nolimits_{i = 2}^n {{x_i}} } \right)}}{{\left( {n- 1} \right)}}, {x_i} \in \left[{0, 1} \right]. \end{array} $ |

(2) T2: f1(x)=x1,

| $ \begin{array}{l} {f_2}\left( \mathit{\boldsymbol{x}} \right) = g\left( \mathit{\boldsymbol{x}} \right)\left( {1- {{\left( {\frac{{{\mathit{\boldsymbol{x}}_1}}}{{g\left( \mathit{\boldsymbol{x}} \right)}}} \right)}^2}} \right), \\ g\left( \mathit{\boldsymbol{x}} \right) = 1 + 9\frac{{\left( {\sum\nolimits_{i = 2}^n {{x_i}} } \right)}}{{\left( {n- 1} \right)}}, {x_i} \in \left[{0, 1} \right]. \end{array} $ |

(3) T3: f1(x)=x1,

| $ \begin{array}{l} {f_2}\left( \mathit{\boldsymbol{x}} \right) = g\left( \mathit{\boldsymbol{x}} \right)\left( {1- \sqrt {\frac{{{\mathit{\boldsymbol{x}}_1}}}{{g\left( \mathit{\boldsymbol{x}} \right)}}}- \frac{{{x_1}}}{{g\left( \mathit{\boldsymbol{x}} \right)}}\sin \left( {10\pi {x_1}} \right)} \right), \\ g\left( \mathit{\boldsymbol{x}} \right) = 1 + 9\frac{{\left( {\sum\nolimits_{i = 2}^n {{x_i}} } \right)}}{{\left( {n- 1} \right)}}, {x_i} \in \left[{0, 1} \right]. \end{array} $ |

(4) T4: f1(x)=x1,

| $ \begin{array}{l} {f_2}\left( \mathit{\boldsymbol{x}} \right) = g\left( \mathit{\boldsymbol{x}} \right)\left( {1- \sqrt {\frac{{{x_1}}}{{g\left( \mathit{\boldsymbol{x}} \right)}}} } \right), \\ g\left( \mathit{\boldsymbol{x}} \right) = 1 + 10\left( {D- 1} \right) + \sum\nolimits_{i = 2}^n {\left( {x_i^2- } \right.} \\ \left. {10\cos \left( {4\pi {x_i}} \right)} \right), x1 \in \left[{0, 1} \right], xi \in \left[{-5, 5} \right], i = 2, \cdots, n. \end{array} $ |

(5) T5: f1(x)=1-exp(-4x1)sin6(6πx1),

| $ \begin{array}{l} {f_2}\left( \mathit{\boldsymbol{x}} \right) = g\left( \mathit{\boldsymbol{x}} \right)\left( {1- {{\left( {\frac{{{f_1}\left( x \right)}}{{g\left( \mathit{\boldsymbol{x}} \right)}}} \right)}^2}} \right), \\ g\left( \mathit{\boldsymbol{x}} \right) = 1 + 9{\left( {\frac{{\sum\nolimits_{i = 2}^n {{x_i}} }}{{\left( {n- 1} \right)}}} \right)^{0.25}}, {x_i} \in \left[{0, 1} \right]. \end{array} $ |

The proposed algorithm(MOEDACF) is compared with NSGA-II[1], GDE[2], SPEA2[3], MOPSO[4], MOEP[5] in the multi-objective benchmark functions T1, T2, T3, T4 and T5. The initial population with size 150 was generated from a uniform distribution in the ranges specified below. In all the experiments, we report the results obtained from performing 50 independent runs of each algorithm compared. The maximum number of iterations is set to 4000 in each running. The total number of fitness function evaluations was set to 10000. Table 1 showsthe comparison of results(mean and variances) among the six algorithms considering the metrics previously described. It can be seen that the performance of the proposed algorithm is more effective and better in the benchmark functions.

| 表 1 5个测试函数的3个标测试结果 Table 1 Results of three metrics for the five test functions |

We proposed a multi-objective estimation of distribution algorithm using cloud-based and favour ranking in this paper. The proposed algorithm employs backward cloud generator to estimate three digital characteristics from the current population, and the current offsprings population are generated according to three digital characteristics by the forward cloud generator. The population with the current population and current offsprings population is sorted based on favour ranking, and the best individuals are selected to form the next population. The proposed algorithm is tested and compared with NSGA-II[1], SPEA2[2], GDE[3], MOEP[4] and MOPSO[5] using a set of multi-objective benchmark functions T1, T2, T3, T4 and T5. Convergence metric, diversity metric and maximum spread metric are used to evaluate the performance of the algorithm. The experimental results show that the algorithm is effectiveon the benchmark functions.

| [1] |

Deb K, Agrawal S, Pratap A, et al. A fast and elitist multi-objective genetic algorithm: NSGA-Ⅱ[J]. IEEE Transactions on Evolutionary Computation, 2002, 6(2): 182-197. DOI:10.1109/4235.996017 |

| [2] |

Kukkonen S, Lampinen J. Performance assessment of generalized differentia evolution with a given set of constrained multi-objective test problems[C]// Proceedings ofIEEE Congress on Evolutionary Computation. Trondheim: IEEE, 2009: 1943-1950. http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=4983178

|

| [3] |

Zitzler E, Laumanns M, Thiele L. SPEA2: Improving the strength pareto evolutionary algorithm for multi-objective optimization[C]//Proceedings of the EUROGEN2001 Conference. Barcelona, Spain: CIMNE, 2001: 95-100.

|

| [4] |

Coello Coello C A, Pulido G T, Lechuga M S. Handling multiple objectives with particle swarm optimization[J]. IEEE Transactions on Evolutionary Computation, 2004, 8(3): 256-279. DOI:10.1109/TEVC.2004.826067 |

| [5] |

Qu B Y, Suganthan P N. Multi-objective evolutionary programming without non-domination sorting is up to twenty times faster[C]//Proceeding of Congress on Evolutionary Computation. Trondheim: IEEE, 2009: 2934-2939. https://ieeexplore.ieee.org/document/4983312/

|

| [6] |

Zhang Q, Liu W, Li H. The performance of a new version of MOEA/D on CEC09 unconstrained mop test instances[C]//Proceeding of Congress on Evolutionary Computation. Trondheim: IEEE, 2009: 203-208. https://ieeexplore.ieee.org/document/4982949/

|

| [7] |

Agrawal S, Dashora Y, Tiwari M K, et al. Interactive particle swarm: a Pareto-adaptive metaheuristic to multiobjective optimization[J]. IEEE Transactions on Systems, Man, and Cybernetics, Part A (Systems and Humans), 2008, 38(2): 258-277. DOI:10.1109/TSMCA.2007.914767 |

| [8] |

Larranaga P, Lozano J A. Estimation of Distribution Algorithms: A New Tool for Evolutionary Computation[M]. DordechtKluwer Academic Publishers, 2002.

|

| [9] |

Etxeberria R, Larranaga P. Global optimization using Bayesian networks[C]//Proceedings of the Second Symposium on Artificial Intelligence (CIMAF-99), [s. l], 1999, 151-173.

|

| [10] |

Shakya S, McCall J. Optimisation by Estimation of Distribution with DEUM framework based on Markov Random Fields[J]. International Journal of Automation and Computing, 2007, 4: 262-272. DOI:10.1007/s11633-007-0262-6 |

| [11] |

Gao Y. Multivariate Estimation of Distribution Algorithm with Laplace Transform Archimedean Copula[J]. IEEE International Conference on Information Engineering and Computer Science, 2009, 1(12): 273-277. |

| [12] |

Sastry K, Pelikan M, Goldberg D E. Limits of scalability of multiobjective estimation of distribution algorithms[C]// Proceedings of the Congress on Evolutionary Computation. [s. l. ]: IEEE, 2005: 217-2224.

|

| [13] |

Farina M, Amato P. A fuzzy definition of"optimality" for many-criteria optimization problems[J]. IEEE Transactions on Systems, Man and Cybernetics, Part A, 2004, 34(3): 315-326. DOI:10.1109/TSMCA.2004.824873 |

| [14] |

Deb K. Multi-Objective Optimization Using Evolutionary Algorithms[M]. VS: John Wiley & Sons, 2001.

|

| [15] |

Drechsler N, Drechsler R, Becker B. Multi-Objected optimization in evolutionary algorithms using satisfyability classes[C]//Reusch, B. (ed. ) Fuzzy Days 1999. LNCS. Heidelberg: Springer, 1999, 1625: 108-117.

|

| [16] |

Deyi L, Yi D. Artificial intelligence with uncertainty[M]. London: Chapman & Hall, 2005: 376.

|

| [17] |

Schott J R. Fault tolerant design using single and multicriteria genetic algorithm optimization[D]. Canbridge: Dept Aeronautics and Astronautics, Massachusetts Inst Technol, 1995. http://dspace.mit.edu/handle/1721.1/11582

|

| [18] |

Van Veldhuizen D A, Lamont G B. Multiobjective evolutionary algorithm research: A history and analysis[R]. US OH: Air Force Institute of Technology Wright-Patterson, Department of Electrical and Computer Engineering, 1998.

|

| [19] |

Zitzler E, Deb K, Thiele L. Comparison of multi-objective evolutionary algorithms: Empirical results[J]. Evolutionary Computation, 2008, 8: 173-195. |

2014, Vol. 31

2014, Vol. 31