Rotating machinery is an important part of industrial production, and its overall performance often depends on the condition of its rolling bearings. Fault diagnosis of rolling bearings has therefore long been an active and challenging topic in equipment condition monitoring and fault diagnosis.

Pattern recognition is a basic technology of artificial intelligence, with applications in medical imaging, speech recognition and mechanical fault diagnosis. To make fault diagnosis more intelligent, a series of artificial intelligence methods have been proposed in recent years, such as the back propagation (BP) neural network and support vector machines (SVMs). However, both neural networks and SVMs face some challenging issues, namely ① intensive human intervention, ② slow learning speed and ③ poor computational scalability[1].

In 2006, Huang et al.[1-4] proposed the extreme learning machine (ELM), which offers fast learning and strong generalization. ELM is a simple single-hidden-layer feedforward network (SLFN) with three layers: an input layer, a hidden layer and an output layer. It only needs to set the input layer weights and the hidden layer thresholds randomly and then calculate the output layer weights analytically. Since its introduction, ELM has developed rapidly, with work aimed at improving its capacity, reducing the impact of the random parameters and demonstrating its advantages, and various ELM variants have been introduced[4-5]. However, how to achieve acceptable accuracy with a suitably small number of hidden neurons remains a challenging problem.

Previous research has shown that, compared with SLFNs, two-hidden-layer feedforward networks (TLFNs)[6-7] require fewer nodes to achieve a desired generalization performance. In addition, the output neurons of ELM receive information only from the hidden neurons, which limits the performance of the network, even though the input layer itself carries much information that is directly relevant to the output. In the double parallel feedforward neural network (DPFNN)[8-16], a direct channel is therefore built between the input layer and the output layer. The output layer of the DPFNN thus receives information through both the direct and the indirect channel, which is likely to improve the learning capacity.

In this study, pattern recognition is used to solve the fault diagnosis problem of rolling bearings. To achieve satisfactory accuracy, a double parallel two-hidden-layer extreme learning machine (DPT-ELM) is proposed. DPT-ELM extends the single-hidden-layer structure of ELM to two hidden layers; to highlight the effect of the two-hidden-layer network, other multilayer ELM algorithms are used for comparison. In addition, a double parallel structure is used to improve the generalization performance of DPT-ELM: a direct channel is built between the input layer and the output layer[8], so that the input layer and the two hidden layers act on the output layer in parallel. The final output is thus made up of two parts, one from the input layer through the direct channel and one from the two hidden layers through the indirect channel.

In summary, although ELM has strong generalization ability, many problems remain to be solved. Ensuring the classification accuracy and the stability of the model while using fewer hidden nodes is an urgent problem. The combination of the two-hidden-layer feedforward scheme and the double parallel scheme proposed in this paper aims to reduce the network error and improve the accuracy with fewer hidden nodes.

To construct the feature vectors for rolling bearing fault diagnosis, time-domain and frequency-domain signal analysis is performed. Because the original signal is often contaminated by heavy noise, the cause of a fault is difficult to detect, so adaptive waveform decomposition (AWD) is used to denoise the data. The experimental results show that DPT-ELM achieves better accuracy and faster speed than the other methods. The whole process consists of three parts: data preprocessing, feature extraction and pattern recognition. In data preprocessing, two accelerometers collect the vibration signal and AWD is used to denoise it. In feature extraction, time-domain and frequency-domain features are used as the characteristic parameter vectors. In pattern recognition, the characteristic parameter vectors are divided into training data and testing data, which are used to train and test the DPT-ELM model.

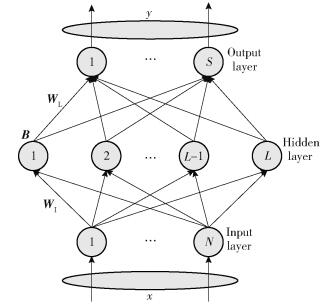

1 The traditional extreme learning machine

ELM is a single-hidden-layer feedforward neural network consisting of three layers. The traditional ELM is a supervised learning algorithm that uses a series of labeled samples to train its parameters. In ELM, the input layer weights and the hidden layer thresholds are assigned randomly and do not need to be tuned during learning. The training process of ELM is therefore divided into two stages: random assignment of the hidden layer parameters and calculation of the output layer weights[17].

The structure of the traditional ELM is shown in Fig. 1. The input layer weights $a_i$ and the hidden layer thresholds $b_i$ are chosen randomly, so the output of the $i$-th hidden node for an input $x$ is $G(a_i, b_i, x)$. After the input layer weights ($\boldsymbol{W}_{\rm I}$) and the hidden layer thresholds ($\boldsymbol{B}$) are initialized, a suitable number of hidden nodes $L$ is selected according to the influence of the number of hidden neurons on the accuracy. The activation function $g(x)$ of the hidden nodes is then used to calculate the hidden layer output matrix $\boldsymbol{H}$.

Fig. 1 The structure of the basic extreme learning machine

$$ \mathit{\boldsymbol{H}} = G\left( {{a_i},{b_i},x} \right) = g\left( {{a_i}x + {b_i}} \right) \tag{1} $$

where the sigmoid function is used as the activation function $g(\cdot)$. Once the hidden layer output matrix $\boldsymbol{H}$ is obtained, the output layer weights $\beta$ are calculated from

$$ f\left( x \right) = \sum\limits_{i = 1}^L {{\beta _i}G\left( {{a_i},{b_i},x} \right)} \tag{2} $$

$$ \mathit{\boldsymbol{H}}\beta = \mathit{\boldsymbol{T}} \tag{3} $$

where $\boldsymbol{T}$ is the expected output. The output layer weights are obtained as the least-squares solution of Eq. (3), which is unique when $\boldsymbol{H}$ has full column rank:

$$ \hat \beta = \arg \min\limits_{\beta} \left\| {\mathit{\boldsymbol{H}}\beta - \mathit{\boldsymbol{T}}} \right\| \tag{4} $$

$$ \hat \beta = {\mathit{\boldsymbol{H}}^\dagger }\mathit{\boldsymbol{T}} \tag{5} $$

where H† is the Moore-Penrose generalized inverse of matrix H.
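Equations (1)-(5) translate into a few lines of NumPy. The sketch below is an illustration, not the authors' code: it assumes one sample per row of `X`, one-hot class labels as rows of `T`, and draws $a_i$ from $(-1, 1)$ and $b_i$ from $(0, 1)$, borrowing the ranges this paper uses for DPT-ELM in Section 2. The function names `elm_train` and `elm_predict` are hypothetical.

```python
import numpy as np


def sigmoid(z):
    """Logistic activation g(z) = 1 / (1 + e^{-z}) used by the hidden nodes."""
    return 1.0 / (1.0 + np.exp(-z))


def elm_train(X, T, L, rng):
    """Basic ELM training, Eqs. (1)-(5).

    X: (N, n) inputs, one sample per row; T: (N, S) targets (e.g. one-hot labels);
    L: number of hidden nodes; rng: numpy Generator for the random parameters.
    """
    n = X.shape[1]
    # Random input weights a_i (one column per hidden node) and thresholds b_i.
    # The ranges (-1, 1) and (0, 1) are an assumption borrowed from Section 2.
    a = rng.uniform(-1.0, 1.0, size=(n, L))
    b = rng.uniform(0.0, 1.0, size=L)
    H = sigmoid(X @ a + b)        # Eq. (1): hidden layer output matrix, shape (N, L)
    beta = np.linalg.pinv(H) @ T  # Eq. (5): beta = H^dagger T, shape (L, S)
    return a, b, beta


def elm_predict(X, a, b, beta):
    """Eq. (2): f(x) = sum_i beta_i g(a_i x + b_i), evaluated for every row of X."""
    return sigmoid(X @ a + b) @ beta
```

Class labels can be read off with `Y.argmax(axis=1)` when `T` holds one-hot rows. `np.linalg.pinv` computes the Moore-Penrose inverse by SVD, so the sketch also runs when $\boldsymbol{H}$ is not of full column rank.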

2 The proposed intelligent fault diagnosis method

To improve the accuracy with fewer hidden nodes, an improved method, namely the double parallel two-hidden-layer extreme learning machine (DPT-ELM), is proposed in this paper. In 2003, Huang[6] demonstrated that a two-hidden-layer feedforward neural network can achieve an arbitrarily high accuracy when learning from training samples by using

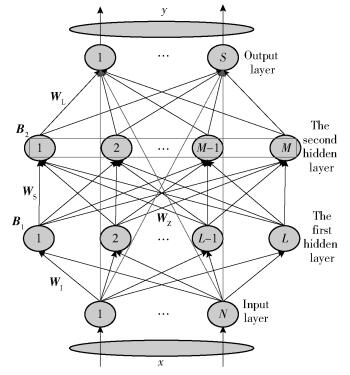

The structure of DPT-ELM is shown in Fig. 2. It has four layers: the input layer, the first hidden layer, the second hidden layer and the output layer. In addition, a direct connection links the input layer to the output layer, so the output neurons receive information through two channels, the direct channel and the indirect channel. Following the implementation of ELM, the improved method is derived as follows.

Fig. 2 The structure of the double parallel two-hidden-layer extreme learning machine

Consider a set of training samples $(x_i, t_i)$, $i = 1, 2, \ldots, N$. DPT-ELM is built on the traditional ELM: the connection weights $\boldsymbol{W}_{\rm I}$ between the input layer and the first hidden layer and the thresholds $\boldsymbol{B}_1$ of the first hidden layer are selected randomly. The first hidden layer has $L$ neurons, the second hidden layer has $M$ neurons and the output layer has $S$ neurons.

$$ {\mathit{\boldsymbol{W}}_{\rm{I}}} = \left[ {\begin{array}{*{20}{c}} {{\omega _{11}}}& \cdots &{{\omega _{1N}}}\\ \vdots&\ddots&\vdots \\ {{\omega _{L1}}}& \cdots &{{\omega _{LN}}} \end{array}} \right] \tag{6} $$

$$ {\mathit{\boldsymbol{B}}_1} = {\left[ {\begin{array}{*{20}{c}} {{b_1}}&{{b_2}}& \cdots &{{b_L}} \end{array}} \right]^{\rm{T}}} \tag{7} $$

where every weight $\omega_{ij} \in (-1, 1)$ and every threshold $b_i \in (0, 1)$. To keep the whole model simple, the connection weights $\boldsymbol{W}_{\rm S}$ between the first and the second hidden layer and the thresholds $\boldsymbol{B}_2$ of the second hidden layer are selected randomly in the same way, so no parameters need to be tuned.

$$ {\mathit{\boldsymbol{W}}_{\rm{S}}} = \left[ {\begin{array}{*{20}{c}} {{\upsilon _{11}}}& \cdots &{{\upsilon _{1L}}}\\ \vdots&\ddots&\vdots \\ {{\upsilon _{M1}}}& \cdots &{{\upsilon _{ML}}} \end{array}} \right] \tag{8} $$

$$ {\mathit{\boldsymbol{B}}_2} = {\left[ {\begin{array}{*{20}{c}} {b{b_1}}&{b{b_2}}& \cdots &{b{b_M}} \end{array}} \right]^{\rm{T}}} \tag{9} $$

where every weight $\upsilon_{kl} \in (-1, 1)$ and every threshold $bb_k \in (0, 1)$. To obtain the outputs of the two hidden layers, an activation function is selected for the hidden neurons; in this paper it is the sigmoid function:

$$ g\left( x \right) = \frac{1}{{1 + {{\rm{e}}^{ - x}}}} \tag{10} $$

$$ {\mathit{\boldsymbol{H}}_1} = \left[ {\begin{array}{c} {h\left( {{x_1}} \right)}\\ \vdots \\ {h\left( {{x_N}} \right)} \end{array}} \right] = \left[ {\begin{array}{ccc} {G\left( {{\boldsymbol{\omega} _1},{b_1},{x_1}} \right)}& \cdots &{G\left( {{\boldsymbol{\omega} _L},{b_L},{x_1}} \right)}\\ \vdots&\ddots&\vdots \\ {G\left( {{\boldsymbol{\omega} _1},{b_1},{x_N}} \right)}& \cdots &{G\left( {{\boldsymbol{\omega} _L},{b_L},{x_N}} \right)} \end{array}} \right] \tag{11} $$

$$ {\mathit{\boldsymbol{H}}_2} = \left[ {\begin{array}{c} {{h_2}\left( {{h_1}} \right)}\\ \vdots \\ {{h_2}\left( {{h_N}} \right)} \end{array}} \right] = \left[ {\begin{array}{ccc} {G\left( {{\boldsymbol{\upsilon} _1},b{b_1},{h_1}} \right)}& \cdots &{G\left( {{\boldsymbol{\upsilon} _M},b{b_M},{h_1}} \right)}\\ \vdots&\ddots&\vdots \\ {G\left( {{\boldsymbol{\upsilon} _1},b{b_1},{h_N}} \right)}& \cdots &{G\left( {{\boldsymbol{\upsilon} _M},b{b_M},{h_N}} \right)} \end{array}} \right],\quad {h_i} = h\left( {{x_i}} \right),\ i = 1,2, \cdots ,N \tag{12} $$

where $G(\boldsymbol{\omega}, b, x) = g(\boldsymbol{\omega} x + b)$, $\boldsymbol{\omega}_i$ is the $i$-th row of $\boldsymbol{W}_{\rm I}$, $\boldsymbol{\upsilon}_k$ is the $k$-th row of $\boldsymbol{W}_{\rm S}$, and $h_2(\cdot)$ denotes the mapping of the second hidden layer. With these, the output matrix $\boldsymbol{H}_1$ ($N \times L$) of the first hidden layer and the output matrix $\boldsymbol{H}_2$ ($N \times M$) of the second hidden layer are calculated.
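A minimal NumPy sketch of the random initialization in Eqs. (6)-(9) and of the two hidden layer output matrices in Eqs. (11)-(12), assuming one sample per row of `X` and the sigmoid of Eq. (10). Row $j$ of $\boldsymbol{H}_1$ is computed as $g(\boldsymbol{W}_{\rm I} x_j + \boldsymbol{B}_1)$ and row $j$ of $\boldsymbol{H}_2$ as $g(\boldsymbol{W}_{\rm S} h_j + \boldsymbol{B}_2)$; the helper names are hypothetical.

```python
import numpy as np


def sigmoid(z):
    """Eq. (10): g(z) = 1 / (1 + e^{-z})."""
    return 1.0 / (1.0 + np.exp(-z))


def init_two_hidden_layers(n_inputs, L, M, rng):
    """Draw W_I, B_1, W_S, B_2 at random (Eqs. (6)-(9)); they are never tuned afterwards."""
    W_I = rng.uniform(-1.0, 1.0, size=(L, n_inputs))  # omega_ij in (-1, 1)
    B1 = rng.uniform(0.0, 1.0, size=L)                # b_i in (0, 1)
    W_S = rng.uniform(-1.0, 1.0, size=(M, L))         # upsilon_kl in (-1, 1)
    B2 = rng.uniform(0.0, 1.0, size=M)                # bb_k in (0, 1)
    return W_I, B1, W_S, B2


def hidden_outputs(X, W_I, B1, W_S, B2):
    """Return H1 (N x L) and H2 (N x M); X holds one sample per row."""
    H1 = sigmoid(X @ W_I.T + B1)   # row j = g(W_I x_j + B_1)
    H2 = sigmoid(H1 @ W_S.T + B2)  # row j = g(W_S h_j + B_2), h_j = row j of H1
    return H1, H2
```

Storing samples as rows lets each layer be a single matrix product, which is how $\boldsymbol{H}_1$ and $\boldsymbol{H}_2$ are laid out in Eqs. (11)-(12).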

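The output layer receives two sets of weights: $\boldsymbol{W}_{\rm Z}$ in Eq. (13) connects the input layer directly to the output layer (the direct channel), and $\boldsymbol{W}_{\rm L}$ in Eq. (14) connects the second hidden layer to the output layer.

$$ {\mathit{\boldsymbol{W}}_{\rm{Z}}} = \left[ {\begin{array}{*{20}{c}} {{\rho _{11}}}& \cdots &{{\rho _{1S}}}\\ \vdots&\ddots&\vdots \\ {{\rho _{N1}}}& \cdots &{{\rho _{NS}}} \end{array}} \right] \tag{13} $$

$$ {\mathit{\boldsymbol{W}}_{\rm{L}}} = \left[ {\begin{array}{*{20}{c}} {{\theta _{11}}}& \cdots &{{\theta _{1S}}}\\ \vdots&\ddots&\vdots \\ {{\theta _{M1}}}& \cdots &{{\theta _{MS}}} \end{array}} \right] \tag{14} $$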

After the output $\boldsymbol{H}_2$ of the second hidden layer is obtained, the network output is the sum of the direct-channel and indirect-channel contributions:

$$ \mathit{\boldsymbol{T}} = \mathit{\boldsymbol{X}}{\mathit{\boldsymbol{W}}_{\rm{Z}}} + {\mathit{\boldsymbol{H}}_2}{\mathit{\boldsymbol{W}}_{\rm{L}}} = \left[ {\begin{array}{cc} \mathit{\boldsymbol{X}}&{{\mathit{\boldsymbol{H}}_2}} \end{array}} \right]\left[ {\begin{array}{c} {{\mathit{\boldsymbol{W}}_{\rm{Z}}}}\\ {{\mathit{\boldsymbol{W}}_{\rm{L}}}} \end{array}} \right] = \mathit{\boldsymbol{H}}\beta \tag{15} $$

$$ \hat \beta = \left[ {\begin{array}{c} {{\mathit{\boldsymbol{W}}_{\rm{Z}}}}\\ {{\mathit{\boldsymbol{W}}_{\rm{L}}}} \end{array}} \right] = {\mathit{\boldsymbol{H}}^\dagger }\mathit{\boldsymbol{T}} = {\left[ {\begin{array}{cc} \mathit{\boldsymbol{X}}&{{\mathit{\boldsymbol{H}}_2}} \end{array}} \right]^\dagger }\mathit{\boldsymbol{T}} \tag{16} $$

where $\boldsymbol{X}$ is the input matrix whose $i$-th row is $x_i$, $\boldsymbol{T}$ is the target matrix whose $i$-th row is $t_i$, $\boldsymbol{H} = [\boldsymbol{X}\ \ \boldsymbol{H}_2]$ combines the direct and the indirect channel, and $\boldsymbol{H}^\dagger$ is its Moore-Penrose generalized inverse. Eqs. (15) and (16) thus have the same form as Eqs. (3) and (5) of the basic ELM.
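The complete training and prediction steps can be sketched as a small NumPy class. This is an illustrative reading of Eqs. (6)-(16), not the authors' implementation: it assumes samples are stored as rows of `X` and one-hot targets as rows of `T`, so that the stacked output weights are $[\boldsymbol{W}_{\rm Z}; \boldsymbol{W}_{\rm L}] = [\boldsymbol{X}\ \boldsymbol{H}_2]^\dagger \boldsymbol{T}$. The class name `DPTELM` is hypothetical, and its default layer sizes follow the 2 and 4 hidden nodes chosen in Section 3.3.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class DPTELM:
    """Sketch of a double parallel two-hidden-layer ELM (hypothetical class name)."""

    def __init__(self, L=2, M=4, seed=None):
        self.L, self.M = L, M  # nodes in the first and second hidden layer
        self.rng = np.random.default_rng(seed)

    def _hidden2(self, X):
        H1 = sigmoid(X @ self.W_I.T + self.B1)     # first hidden layer, (N, L)
        return sigmoid(H1 @ self.W_S.T + self.B2)  # second hidden layer, (N, M)

    def fit(self, X, T):
        n = X.shape[1]
        # Random, untuned hidden parameters, Eqs. (6)-(9).
        self.W_I = self.rng.uniform(-1.0, 1.0, size=(self.L, n))
        self.B1 = self.rng.uniform(0.0, 1.0, size=self.L)
        self.W_S = self.rng.uniform(-1.0, 1.0, size=(self.M, self.L))
        self.B2 = self.rng.uniform(0.0, 1.0, size=self.M)
        # Direct channel (X) and indirect channel (H2) side by side: H = [X  H2].
        H = np.hstack([X, self._hidden2(X)])
        beta = np.linalg.pinv(H) @ T             # least-squares output weights
        self.W_Z, self.W_L = beta[:n], beta[n:]  # input->output, hidden2->output
        return self

    def predict(self, X):
        # Output = direct-channel term + indirect-channel term.
        return X @ self.W_Z + self._hidden2(X) @ self.W_L
```

For example, `DPTELM(L=2, M=4, seed=0).fit(X_train, T_train).predict(X_test).argmax(axis=1)` gives predicted class indices. Because the columns of `X` are part of the least-squares design matrix, the training residual cannot be larger (up to round-off) than that of a plain linear least-squares fit on `X`, which is one way to see what the direct channel adds.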

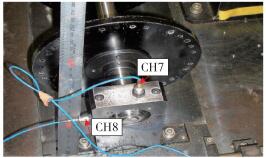

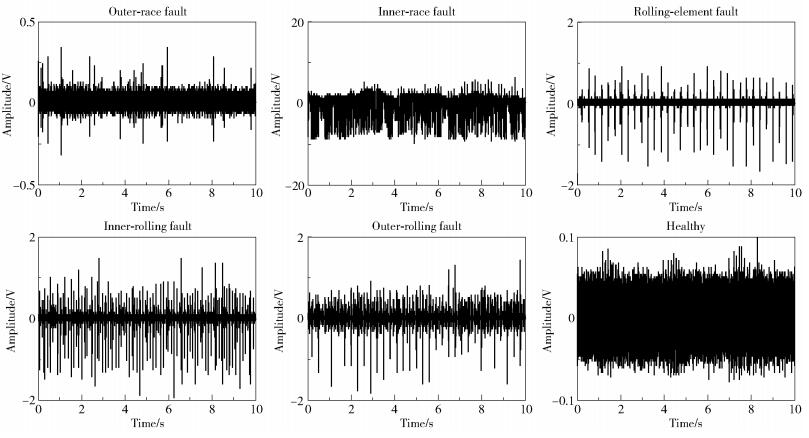

3 Experimental verification

3.1 Signal acquisition

In this section, the DPT-ELM, DP-ELM, TELM and BP models are evaluated on experimental data collected from a real rolling bearing experimental platform with model number NTN204 (Fig. 3). The data collected from channel CH7 are used for fault diagnosis. To verify the effectiveness of the methods, data from six bearing states (inner-race fault, outer-race fault, rolling-element fault, inner-race with rolling-element fault, outer-race with rolling-element fault, and healthy) are used, recorded at two different speeds. The parameters of the experimental platform are listed in Table 1. Data at relatively low speeds and with relatively weak faults are chosen to test the methods: two groups of original data, at 500 r/min and 900 r/min (Fig. 4), are used to train and test the classification models.

Fig. 3 The real experimental platform

Table 1 The real experimental platform parameters

Fig. 4 Six state waveforms of the bearing signal (500 r/min)

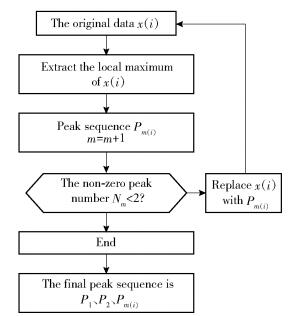

To improve the signal-to-noise ratio (SNR), the AWD algorithm is used to denoise the vibration signal. AWD was put forward because the sub-signals produced by empirical mode decomposition (EMD) and local mean decomposition (LMD) are harmonic signals[19-22]. Here, EMD, LMD and AWD are compared. After EMD and LMD, the resulting signal shows no significant change from the original signal and still contains much noise, whereas after AWD the curve is smooth and the useful information is retained. AWD is therefore selected to denoise the vibration signal. AWD, which has greater practical value in engineering applications, combines the advantages of global frequency and instantaneous frequency, and decomposes the original vibration signal into several waveform components and a residual. The flow chart of the AWD algorithm is shown in Fig. 5. The AWD can be expressed as follows:

$$ x\left( t \right) = \sum\limits_{m = 1}^N {{s_m}\left( t \right)} + W\left( t \right) \tag{17} $$

Fig. 5 The flow chart of AWD

where $s_m(t)$ is the $m$-th waveform component of the original signal and $W(t)$ is the residual component.

To denoise with AWD, the correlation coefficient between each waveform component and the original signal is used to identify the noise components: a component is kept if its correlation coefficient is greater than or equal to 0.3 and discarded otherwise, and the denoised signal is then obtained from the retained components. Because the vibration records are long, they are processed in segments: each record is divided into 100 segments of 10 000 points, and one segment of 10 000 points is processed per cycle.
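The correlation-based selection and the segmentation can be sketched as follows. The AWD decomposition itself is not reproduced: `decompose` is a hypothetical stand-in that must return the waveform components $s_m(t)$ of Eq. (17) as rows of an array. The sketch also assumes that the denoised segment is the sum of the retained components, with the residual $W(t)$ left out, since the text does not say how the residual is treated.

```python
import numpy as np


def select_components(x, components, threshold=0.3):
    """Sum the waveform components whose correlation with x is >= threshold.

    components: array of shape (K, len(x)) holding s_1(t), ..., s_K(t) from Eq. (17).
    Assumption: the denoised signal is the sum of the retained components.
    """
    kept = [s for s in components if np.corrcoef(s, x)[0, 1] >= threshold]
    return np.sum(kept, axis=0) if kept else np.zeros_like(x, dtype=float)


def denoise_in_segments(signal, decompose, segment_len=10_000, n_segments=100):
    """Denoise a long record block by block (100 segments of 10 000 points by default).

    decompose(segment) is a hypothetical stand-in for AWD returning a
    (K, segment_len) array of waveform components.
    """
    out = []
    for k in range(n_segments):
        seg = signal[k * segment_len:(k + 1) * segment_len]
        out.append(select_components(seg, decompose(seg)))
    return np.concatenate(out)
```

A component that is essentially noise has a low correlation with the recorded signal, so it falls below the 0.3 threshold, while the dominant vibration components are kept.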

3.2.2 Feature parameter construction

Features must be extracted from the data before the pattern recognition can proceed. In this study, traditional time-domain and frequency-domain feature extraction methods are applied to the rolling bearing data. Time-domain feature extraction is one of the most direct methods: the extracted parameters have a clear physical meaning and reflect the characteristics of the rolling bearing signal waveform. A total of 15 time-domain parameters[23] are used in this paper, including the waveform index and the effective value. However, fault information is reflected not only in how the signal changes with time but also in its frequency content, so frequency-domain features are also needed. A total of 7 frequency-domain parameters[24] are used in this paper, which can be seen in reference[14].
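The full lists of 15 time-domain and 7 frequency-domain parameters are not given here, so the NumPy sketch below computes only a few widely used indicators as an illustration, including the two named above (the effective value, i.e. the RMS, and the waveform index). The frequency-domain set (frequency centre, RMS frequency, frequency standard deviation) is an assumption and is not necessarily the set from reference [24].

```python
import numpy as np


def time_domain_features(x):
    """A few common time-domain indicators (illustrative subset, not the paper's 15)."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    abs_mean = np.mean(np.abs(x))
    rms = np.sqrt(np.mean(x ** 2))         # effective value
    peak = np.max(np.abs(x))
    var = np.mean(d ** 2)
    return {
        "mean": x.mean(),
        "rms": rms,
        "peak": peak,
        "waveform_index": rms / abs_mean,  # also called shape factor
        "crest_factor": peak / rms,
        "impulse_factor": peak / abs_mean,
        "skewness": np.mean(d ** 3) / var ** 1.5,
        "kurtosis": np.mean(d ** 4) / var ** 2,
    }


def frequency_domain_features(x, fs):
    """A few spectrum-based indicators (assumed set) from the one-sided amplitude spectrum.

    fs is the sampling frequency in Hz.
    """
    x = np.asarray(x, dtype=float)
    spec = np.abs(np.fft.rfft(x - x.mean()))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    p = spec / spec.sum()                  # spectrum normalised to unit sum
    centre = np.sum(freqs * p)             # frequency centre (spectral centroid)
    return {
        "mean_amplitude": spec.mean(),
        "frequency_centre": centre,
        "rms_frequency": np.sqrt(np.sum(freqs ** 2 * p)),
        "frequency_std": np.sqrt(np.sum((freqs - centre) ** 2 * p)),
    }
```

Concatenating the values of both dictionaries for one signal segment gives one row of the feature matrix used for pattern recognition.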

Because of various random influences, the amplitude of the rolling bearing vibration signal is generally close to normally distributed. Once pitting, spalling or other local failures occur, however, the amplitude distribution deviates from the normal distribution. In other words, compared with the healthy state, the amplitude and phase of the vibration signal deviate to some degree in a fault state, in both the time domain and the frequency domain. Fig. 4 shows that, compared with the healthy state, the five fault states deviate to different degrees, yet the states cannot be distinguished directly by eye. In this paper, 15 time-domain features and 7 frequency-domain features are therefore extracted to characterize the signals of the six states.
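The deviation from the normal distribution can be made concrete with kurtosis, a common time-domain indicator that equals 3 for normally distributed data and grows when sparse impacts appear. The sketch below uses synthetic signals only: Gaussian noise stands in for a healthy bearing, and the same noise with hypothetical periodic impacts stands in for a local defect.

```python
import numpy as np


def kurtosis(x):
    """Fourth standardized moment; equals 3 for a normal distribution."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    return np.mean(d ** 4) / np.mean(d ** 2) ** 2


rng = np.random.default_rng(0)
healthy = rng.normal(size=100_000)  # synthetic stand-in for a healthy-bearing signal
faulty = healthy.copy()
faulty[::1000] += 10.0              # hypothetical periodic impacts from a local defect
```

`kurtosis(healthy)` stays close to 3, while the sparse impacts in `faulty` raise it well above 3, which is the kind of deviation the time-domain features are meant to capture.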

3.3 Comparison of results

After time-domain and frequency-domain feature extraction, 50 sets of characteristic parameters are extracted from each state, giving a 300×22 matrix (6 states × 50 sets, 15 + 7 features). Both the signal without AWD and the signal preprocessed by AWD denoising are tested. All 300 sets of input data are randomly shuffled with the randperm function; 180 sets are used to train the model and the remaining 120 sets are used for testing. Because the random parameters and the random split make the result vary slightly from run to run, each method is evaluated by its accuracy and running time averaged over 10 runs. The sigmoid function is used as the activation function for DPT-ELM, DP-ELM and TELM.
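The evaluation protocol (shuffle 300 samples, train on 180, test on 120, average over 10 runs) can be sketched in plain Python. `random.Random.shuffle` plays the role of `randperm` here, and `evaluate` is a hypothetical callback that trains one of the models on the training indices and returns its test accuracy and running time.

```python
import random
from statistics import mean


def random_split(n_samples, n_train, rng):
    """Shuffle the sample indices (the role of randperm) and cut them into train/test."""
    idx = list(range(n_samples))
    rng.shuffle(idx)
    return idx[:n_train], idx[n_train:]


def average_over_runs(evaluate, n_runs=10, n_samples=300, n_train=180, seed=0):
    """Average (accuracy, seconds) over n_runs independent random splits.

    evaluate(train_idx, test_idx) is a hypothetical callback that trains a model on
    the training indices and returns (test accuracy, running time in seconds).
    """
    rng = random.Random(seed)
    results = [evaluate(*random_split(n_samples, n_train, rng)) for _ in range(n_runs)]
    return mean(r[0] for r in results), mean(r[1] for r in results)
```

Drawing a fresh split in every run means the averaged accuracy reflects both the random hidden parameters and the random choice of test samples.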

To choose the number of hidden nodes, the accuracy of each method is computed in a loop over different numbers of hidden nodes, and a suitable number is selected from the observed influence of the hidden neurons on the accuracy. For DPT-ELM, the two hidden layers have 2 and 4 nodes, respectively. For comparison, DP-ELM uses 6 hidden nodes (the same total as DPT-ELM), and TELM(1) uses the same 2 and 4 nodes as DPT-ELM. Because TELM achieves a higher accuracy with 35 and 40 nodes in its two hidden layers, this configuration is also tested as TELM(2). BP uses 25 hidden nodes.
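The loop over hidden-node numbers amounts to a grid search. The sketch below assumes a hypothetical `accuracy_of` callback (for example, an average test accuracy over repeated random splits) and breaks ties in favour of the smallest total number of hidden nodes; that tie rule matches the paper's aim of using fewer hidden nodes but is an assumption, not a rule stated in the text.

```python
from itertools import product


def sweep_hidden_nodes(accuracy_of, candidates):
    """Grid search over hidden-layer sizes.

    accuracy_of(config) is a hypothetical callback returning the mean test accuracy
    of a model built with `config`, e.g. an (L, M) tuple for DPT-ELM or TELM.
    Among configurations with the best accuracy, the one with the fewest hidden
    nodes in total is returned.
    """
    scores = {cfg: accuracy_of(cfg) for cfg in candidates}
    best = max(scores.values())
    tied = [cfg for cfg, s in scores.items() if s == best]
    return min(tied, key=sum), scores


# Candidate (L, M) pairs: 1..10 nodes in each of the two hidden layers.
CANDIDATES = list(product(range(1, 11), repeat=2))
```

The returned `scores` dictionary can also be plotted to show how accuracy depends on the number of hidden neurons.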

The average running time and accuracy of the four groups of experiments are listed in Tables 2-5. The results, such as those in Table 3, show that DPT-ELM achieves a higher accuracy of 96.7% with only a few hidden nodes. Its learning time is also very short: it learns hundreds of times faster than BP. Comparing Table 2 with Table 3, or Table 4 with Table 5, shows that AWD denoising clearly improves the accuracy; for example, the accuracy of DPT-ELM at 500 r/min rises from 92.1% to 96.7%.

Table 2 Performance comparison of DPT-ELM, DP-ELM, TELM and BP (500 r/min, without AWD denoising)

Table 3 Performance comparison of DPT-ELM, DP-ELM, TELM and BP (500 r/min, with AWD denoising)

Table 4 Performance comparison of DPT-ELM, DP-ELM, TELM and BP (900 r/min, without AWD denoising)

Table 5 Performance comparison of DPT-ELM, DP-ELM, TELM and BP (900 r/min, with AWD denoising)

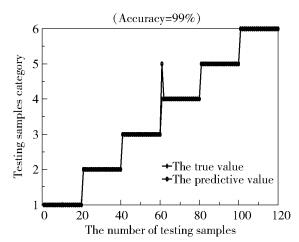

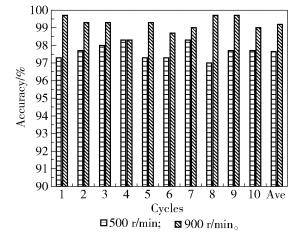

The experiments show that DPT-ELM achieves a higher accuracy. The fault classification results for the 900 r/min data in Fig. 6 show that DPT-ELM generalizes well, with an accuracy of up to 99%. However, this alone is not enough to prove the effectiveness of DPT-ELM, so 10-fold cross-validation is also used to verify it.

Fig. 6 The results of prediction by DPT-ELM (900 r/min)

For the 10-fold cross-validation, the data of each state in each speed data set are divided equally into 10 parts. Each part is used in turn as the test set while the other nine parts form the training set, and the average over these 10 rounds is the result of one 10-fold cross-validation. The 10-fold cross-validation is then run 10 times, and the average of the 10 runs is taken as the final result; "10 times" in Fig. 7 refers to these 10 runs. The final accuracy is 97.7% for the 500 r/min data and 99% for the 900 r/min data, which supports the effectiveness of DPT-ELM.

Fig. 7 The effectiveness verification of DPT-ELM by the 10-fold cross-validation
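The repeated, per-state 10-fold cross-validation described above can be sketched in plain Python. Each state's samples are split into 10 near-equal parts so that every fold contains all six states; `evaluate` is a hypothetical callback returning the test accuracy for one train/test split, and the seed handling is an assumption.

```python
import random
from statistics import mean


def stratified_folds(labels, k, rng):
    """Split each class's indices into k near-equal parts; fold f takes part f of every class."""
    folds = [[] for _ in range(k)]
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    for idx in by_class.values():
        rng.shuffle(idx)
        for pos, i in enumerate(idx):
            folds[pos % k].append(i)
    return folds


def repeated_kfold_accuracy(labels, evaluate, k=10, repeats=10, seed=0):
    """Run k-fold CV `repeats` times and return the mean of the per-repeat means.

    evaluate(train_idx, test_idx) is a hypothetical callback returning test accuracy.
    """
    rng = random.Random(seed)
    per_repeat = []
    for _ in range(repeats):
        folds = stratified_folds(labels, k, rng)
        accs = []
        for f in range(k):
            test = folds[f]
            train = [i for g in range(k) if g != f for i in folds[g]]
            accs.append(evaluate(train, test))
        per_repeat.append(mean(accs))  # result of one 10-fold cross-validation
    return mean(per_repeat)            # final result over the repeats
```

With 50 samples per state and 6 states, each fold holds 5 samples of every state, i.e. 30 test samples and 270 training samples per round.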

The results show that the proposed DPT-ELM performs better than the other methods: its accuracy is higher and its running time is very short. The AWD algorithm further improves the pattern recognition accuracy, and the 10-fold cross-validation confirms the effectiveness of DPT-ELM. These results indicate that the proposed method is effective for diagnosing rolling bearing faults.

4 Conclusions

This paper proposes an improved ELM method, the double parallel two-hidden-layer extreme learning machine (DPT-ELM), which improves the generalization performance. Compared with ELM, DPT-ELM has two hidden layers and a direct channel between the input layer and the output layer. The AWD algorithm is used to improve the SNR of the vibration signal. Time-domain and frequency-domain features are extracted from two groups of rolling bearing data, recorded at different speeds in six different states, and the resulting feature vectors are used to test the performance.

The results show that, compared with DP-ELM, TELM and BP, the proposed DPT-ELM performs better in dealing with weak faults. Moreover, although the method uses two hidden layers, each hidden layer needs very few nodes and the learning time is very short. The whole model also appears relatively simple.

Last but not least, among the variants of ELM, the sparse Bayesian extreme learning machine (SBELM)[25-26] has attracted our attention. The sparse Bayesian approach estimates the probability distribution of the model output and automatically prunes unnecessary samples. SBELM trains the model with a small amount of labeled data and achieves very good performance. In future research, we will therefore combine the proposed method with the sparse Bayesian method to obtain a more practical and effective method for rolling bearing fault diagnosis.

[1] HUANG G B, WANG D H, LAN Y. Extreme learning machine: a survey[J]. International Journal of Machine Learning and Cybernetics, 2011, 2(2): 107-122. DOI:10.1007/s13042-011-0019-y

[2] HUANG G B, ZHU Q Y, SIEW C K. Extreme learning machine: theory and applications[J]. Neurocomputing, 2006, 70(1): 489-501.

[3] HUANG G B. What are extreme learning machines? Filling the gap between Frank Rosenblatt's dream and John von Neumann's puzzle[J]. Cognitive Computation, 2015, 7(3): 263-278. DOI:10.1007/s12559-015-9333-0

[4] HUANG G, HUANG G B, SONG S J, et al. Trends in extreme learning machines: a review[J]. Neural Networks, 2015, 61(C): 32-48.

[5] CAO J W, LIN Z P. Extreme learning machines on high dimensional and large data applications: a survey[J]. Mathematical Problems in Engineering, 2015, 2015: 103796.

[6] HUANG G B. Learning capability and storage capacity of two-hidden-layer feedforward networks[J]. IEEE Transactions on Neural Networks, 2003, 14(2): 274-281. DOI:10.1109/TNN.2003.809401

[7] QU B Y, LANG B F, LIANG J J, et al. Two-hidden-layer extreme learning machine for regression and classification[J]. Neurocomputing, 2016, 175: 826-834. DOI:10.1016/j.neucom.2015.11.009

[8] HE Y L, GENG Z Q, ZHU Q X. Positive and negative correlation input attributes oriented subnets based double parallel extreme learning machine (PNIAOS-DPELM) and its application to monitoring chemical processes in steady state[J]. Neurocomputing, 2015, 165: 171-181. DOI:10.1016/j.neucom.2015.03.007

[9] WANG J, WU W, LI Z X, et al. Convergence of gradient method for double parallel feedforward neural network[J]. International Journal of Numerical Analysis and Modeling, 2011, 8(3): 484-495.

[10] HE M Y, HUANG R. Feature selection for hyperspectral data classification using double parallel feedforward neural networks[M]//WANG L, JIN Y. Fuzzy systems and knowledge discovery. Berlin: Springer-Verlag, 2005: 58-66.

[11] HUANG R, HE M Y. Feature selection using double parallel feedforward neural networks and particle swarm optimization[C]//IEEE Congress on Evolutionary Computation. Singapore, 2007: 692-696.

[12] MOULTON D, GHENDRIH P, FUNDAMENSKI W, et al. Quasineutral plasma expansion into infinite vacuum as a model for parallel ELM transport[J]. Plasma Physics & Controlled Fusion, 2013, 55: 085003.

[13] YAO M C, LI W T, LIU Y. Double parallel extreme learning machine[J]. Energy Procedia, 2011, 13: 7413-7418. DOI:10.1016/S1876-6102(14)00454-8

[14] KHAN A, YANG J, WU W. Double parallel feedforward neural network based on extreme learning machine with L1/2 regularizer[J]. Neurocomputing, 2014, 128(5): 113-118.

[15] HE Y L, GENG Z Q, ZHU Q X. A data-attribute-space-oriented double parallel (DASODP) structure for enhancing extreme learning machine: applications to regression datasets[J]. Engineering Applications of Artificial Intelligence, 2015, 41: 65-74. DOI:10.1016/j.engappai.2015.02.001

[16] YAO M C, ZHANG C, WU W. Online sequential double parallel extreme learning machine for classifications[J]. Journal of Mathematical Research with Applications, 2016, 36(5): 621-630.

[17] SHEN W, WU L T, ZHANG X, et al. Multi-fault diagnosis for roller bearing based on extreme learning machine[J]. International Journal of Comprehensive Engineering, 2015, 4(1): 1-7. DOI:10.14270/IJCE2015.4.1.a1

[18] TAMURA S, TATEISHI M. Capabilities of a four-layered feedforward neural network: four layers versus three[J]. IEEE Transactions on Neural Networks, 1997, 8(2): 251-255. DOI:10.1109/72.557662

[19] TIAN Y, MA J, LU C, et al. Rolling bearing fault diagnosis under variable conditions using LMD-SVD and extreme learning machine[J]. Mechanism and Machine Theory, 2015, 90: 175-186. DOI:10.1016/j.mechmachtheory.2015.03.014

[20] HUANG N E, SHEN Z, LONG S R, et al. The empirical mode decomposition and the Hilbert spectrum for nonlinear and non-stationary time series analysis[J]. Proc R Soc Lond A, 1998, 454: 903-995. DOI:10.1098/rspa.1998.0193

[21] SMITH J S. The local mean decomposition and its application to EEG perception data[J]. Journal of the Royal Society Interface, 2005, 2: 443-454. DOI:10.1098/rsif.2005.0058

[22] ZHANG C, YANG L D, LI J J. The performance contrast between local mean decomposition and empirical mode decomposition[J]. Machine Design and Research, 2012, 28(3): 38-40. (in Chinese)

[23] CUI S. Application of time domain indexes in the fault diagnosis of rolling bearing[J]. Mechanical Engineering & Automation, 2008(1): 101-102. (in Chinese)

[24] ZHANG R. Intelligent fault diagnosis method research of gearbox based on vibration signal[D]. Beijing: Beijing University of Chemical Technology, 2013. (in Chinese) http://www.wanfangdata.com.cn/details/detail.do?_type=degree&id=Y2393231

[25] WONG P K, ZHONG J H, YANG Z X, et al. Sparse Bayesian extreme learning committee machine for engine simultaneous fault diagnosis[J]. Neurocomputing, 2016, 174: 331-343. DOI:10.1016/j.neucom.2015.02.097

[26] VONG C M, TAI K I, PUN C M, et al. Fast and accurate face detection by sparse Bayesian extreme learning machine[J]. Neural Computing and Applications, 2015, 26(5): 1149-1156. DOI:10.1007/s00521-014-1803-x