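Human development is inseparable from communication. Studies have shown that rational human learning depends on emotion [1]. With the rise of the Internet, people have become accustomed to communicating online and expressing their views on events, and the relative anonymity of the network lets them express their feelings more candidly. Analyzing these opinions, which carry users' sentiment, is therefore valuable for applications such as public opinion monitoring and marketing strategy. Sentiment analysis, also known as opinion mining or opinion analysis, is the process of using computers to help people access and organize online opinions and to analyze, process, summarize, and reason about subjective text [2].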

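Sentiment classification is the most important technique in sentiment analysis research. It identifies and classifies the sentiment expressed in a text, for example as positive or negative, in order to obtain the underlying information. The techniques studied most fall into three groups. The first is the lexicon-based method [3], which finds words of different parts of speech in the sentences of a document and computes a score for each. This method relies too heavily on the sentiment lexicon, is strongly domain-specific, and does not perform well [4]. The second is classification based on manually extracted features, a traditional machine learning approach that requires a large amount of pre-labeled data and then applies algorithms such as support vector machines [5], naive Bayes, and conditional random fields (CRF) to classify sentiment. The CRF is a discriminative probabilistic model that performs well in sequence labeling, named entity recognition, and Chinese word segmentation. The third is the deep learning method [6]. Without manually engineered features, a deep learning model can fully mine the sentiment information in a text and achieve good classification results.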

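The convolutional neural network (CNN) was first widely used in digital image processing and was soon applied to sentiment analysis. Collobert et al. [7] were the first to propose using a CNN for NLP problems such as part-of-speech tagging. Kim [8] applied a CNN to sentiment analysis and obtained good results. As CNNs became widely used for sentiment classification, their shortcomings became more apparent: a CNN captures only local information in a text and is weak at capturing long-distance dependencies. The recurrent neural network (RNN) makes up for this deficiency [9]. Unlike a CNN, an RNN has memory and can capture dynamic information in sequential data, and it has achieved good results in sentiment classification. Tang et al. [10] modeled document-level text and proposed a hierarchical RNN model. Although an RNN is suited to processing context, it suffers from vanishing or exploding gradients when handling long-distance dependencies. To address this problem, Hochreiter et al. [11] proposed the LSTM model, which optimizes the internal structure of the RNN. Zhu et al. [12] used an LSTM to model text, dividing it into word sequences and then classifying sentiment. A conventional LSTM can use only the preceding context and ignores the following context, which limits the accuracy of sentiment classification to some extent. Bai et al. [13] used a Bi-LSTM for modeling, passing word vectors through a Bi-LSTM and a CNN separately and then adding an attention mechanism; this achieved better results but at a high computational cost. To reduce computation, the structurally simpler GRU was proposed. Liu [14] applied the GRU to time-series tasks and obtained good results.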

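The researchers above recognized that these models suffer from long training time, large data requirements, and a short range over which context is captured, and they proposed improvements, but they did not overcome the limitations of deep learning models. To address these problems, this paper proposes a sentiment analysis model that combines a hybrid neural network with a CRF. The proposed model takes into account the context of the sentences in a document and obtains semantic information faster and more fully while preserving word order. It divides a text into regions by sentence and uses a CNN and a Bi-GRU, trained in parallel, to obtain more semantic information and structural features from each sentence. A conditional random field is then used as the classifier to compute the sentiment probability distribution over the whole text and thus predict the sentiment category.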

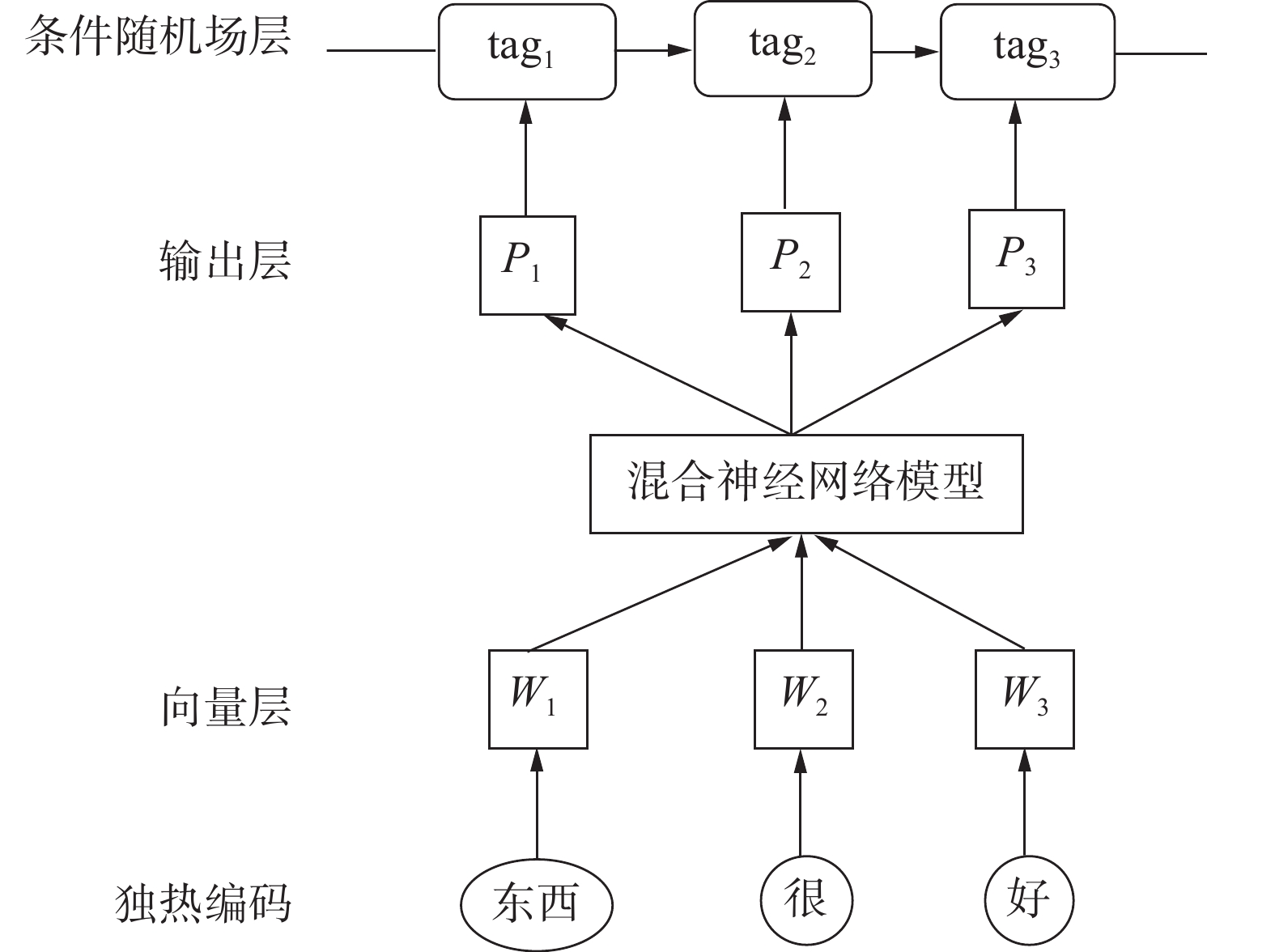

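1 Sentiment analysis process

Sentiment analysis has been an active research area in NLP since the early 2000s [15]. It is a multifaceted and fairly complex problem that involves many interrelated contextual relationships. For the same sentence, the sentiment category expressed in the context of the whole document may differ greatly from the category expressed by the sentence in isolation. The conventional sentiment analysis process is shown in Fig. 1.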

Fig. 1 Process diagram of sentiment analysis

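Sentiment analysis is applied mainly to reviews and opinions, which tend to be short and irregularly formatted. Punctuation, Internet slang, and user nicknames, for example, all make the task harder. Sentiment analysis therefore begins by preprocessing the text to reduce noise. This paper uses NLPIR, the Chinese word segmentation system developed by the Institute of Computing Technology, Chinese Academy of Sciences, to segment the review text [16], and then removes noise, mainly punctuation and strings such as user nicknames. A word vector representation is a mapping from words to a real-valued vector space. The most common word vector training models are CBOW and Skip-gram, which are structurally similar. Mikolov et al. [17] analyzed these two word vector extraction methods in depth and pointed out that their prediction range is limited.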
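The noise-removal step described above can be sketched as follows. This is a minimal illustration only: the regular expressions (an `@nickname` mention pattern and a catch-all punctuation pattern) and the function name `denoise` are assumptions, not the rules used in the paper, and word segmentation with NLPIR is not shown.

```python
import re

# Assumed noise patterns (hypothetical, for illustration only):
# "@nickname" mentions, optionally followed by a colon, and any
# character that is neither a word character nor whitespace.
MENTION = re.compile(r"@[\w\-]+[::]?")
PUNCT = re.compile(r"[^\w\s]")


def denoise(text: str) -> str:
    """Strip user mentions and punctuation, then collapse whitespace."""
    text = MENTION.sub(" ", text)
    text = PUNCT.sub(" ", text)
    return " ".join(text.split())


if __name__ == "__main__":
    print(denoise("@小明: 这个手机很好!!!"))
```

In a full pipeline the cleaned string would then be passed to the segmenter; the order of cleaning and segmentation is a design choice that the text leaves open.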

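This paper trains the language model and the sentiment analysis model jointly. Semantic information is obtained through the language model: the hybrid neural network serves as the language model, producing vector representations of the segmented text and extracting features, and every output feature of the hybrid network is scored. With this approach the model no longer depends on a separate word vector training model. Training becomes a single end-to-end, data-driven process with higher accuracy.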

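2 Text sentiment analysis model combining a hybrid neural network and a conditional random field

Most existing work on text sentiment analysis suffers from long training time and insufficient learning of context. This paper combines a hybrid neural network with a conditional random field: the hybrid network first extracts feature information from the text, which is then fed into the CRF classifier. The proposed model obtains richer features, trains faster than previous models, and classifies better.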

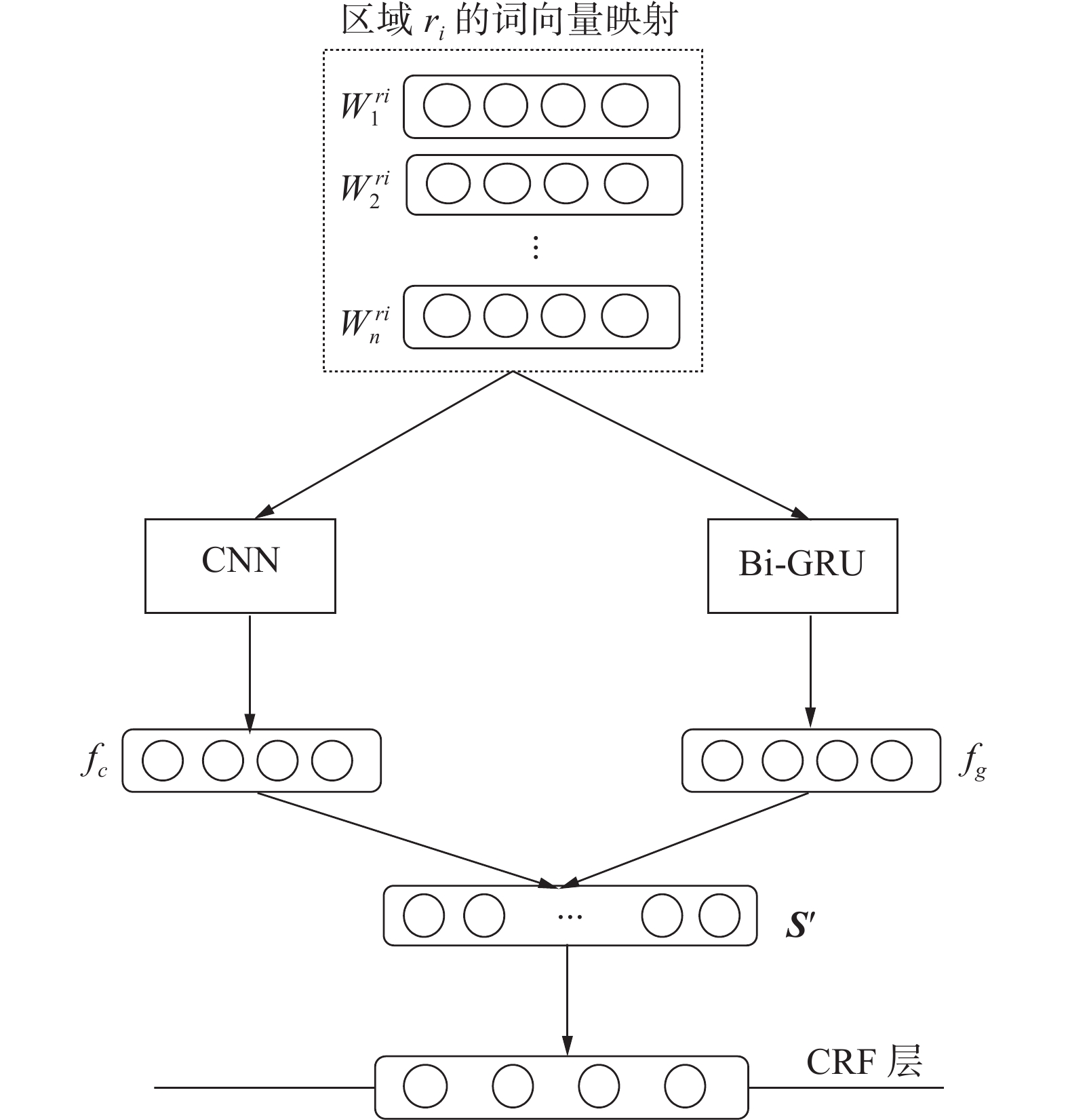

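The discrete one-hot representation of each segmented sentence is mapped to a low-dimensional space to form dense word vectors, which serve as the model input. After convolution and pooling, the structural features that the Bi-GRU learns from the same word vectors are merged in at the output. The model concatenates the sentence feature representations obtained from the two branches of the hybrid network and scores each output feature. Because the labels that the hybrid network generates for words may form illegal sequences and so invalidate the data, a CRF layer is attached to the hybrid network: the scored output features are used as the emission probabilities of the CRF, and the Viterbi algorithm is used to obtain the sentiment category with the highest probability. The overall process is illustrated in Fig. 2.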
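The mapping from a one-hot representation to a dense low-dimensional word vector amounts to selecting one row of an embedding table. The sketch below illustrates this with plain Python lists; the helper names, the random initialization range, and the toy dimension are assumptions for illustration, and in the actual model the table would be learned during training.

```python
import random


def build_vocab(sentences):
    """Assign an integer index to every distinct word."""
    vocab = {}
    for sentence in sentences:
        for word in sentence:
            vocab.setdefault(word, len(vocab))
    return vocab


def init_embeddings(vocab_size, dim, seed=0):
    """Random embedding table (assumed initialization, normally learned)."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.1, 0.1) for _ in range(dim)]
            for _ in range(vocab_size)]


def one_hot(index, size):
    """Discrete one-hot vector with a single 1 at `index`."""
    return [1.0 if i == index else 0.0 for i in range(size)]


def embed(one_hot_vec, table):
    """Multiply a one-hot row vector by the embedding table."""
    dim = len(table[0])
    return [sum(one_hot_vec[i] * table[i][d] for i in range(len(table)))
            for d in range(dim)]


if __name__ == "__main__":
    vocab = build_vocab([["我", "很", "开心"]])
    table = init_embeddings(len(vocab), dim=4)
    print(embed(one_hot(vocab["很"], len(vocab)), table))
```

Because the product with a one-hot vector only picks out a row, practical implementations replace the multiplication with a direct index lookup.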

Fig. 2 Schematic diagram of basic process

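The structure of the hybrid neural network is shown in Fig. 3. The model divides a text into regions by sentence and takes the low-dimensional word vectors of each region as input. The word vectors pass through the two neural networks separately; the sentence feature output by the CNN is

The idea is to divide a text into regions by sentence, use the low-dimensional word vectors of each sentence as the model input, and output the result after convolution and pooling in the CNN.

Word embedding layer: suppose a region contains M words and each word vector has k dimensions; then all word vectors lie in the region matrix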

Fig. 3 Structure diagram of hybrid neural network

Convolution layer: each region

| $y_n^d = f\left( {{W^d} \circ {x_{n:n + \delta - 1}} + {b^d}} \right)$ | (1) |

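where:

Commonly used activation functions include ReLU, Tanh, and Sigmoid. Because of their shapes, Sigmoid and Tanh tend to suffer from vanishing gradients near their saturation regions, which slows convergence. To improve the network's adaptability and speed up convergence, the model uses the ReLU activation function. As the filter slides over the region, the output matrix is obtained: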

| ${{{y}}^d} =[ y_1^d\;\;y_2^d\;\;\cdots\;\;y_{N - \delta + 1}^d]$ | (2) |
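Equations (1) and (2) can be illustrated with a small sketch. It assumes that the operator in Eq. (1) is an element-wise product summed over the window, that the filter $W^d$ spans $\delta$ consecutive word vectors, and that $f$ is ReLU; the function names and the toy numbers are illustrative only.

```python
def relu(value):
    """ReLU activation, the choice of f stated in the text."""
    return max(0.0, value)


def conv1d(x, W, b):
    """Slide one filter over a region, following Eq. (1) and Eq. (2).

    x: list of N word vectors, each with k components.
    W: filter of shape delta x k (assumed layout).
    b: scalar bias.
    Returns the N - delta + 1 activations y_1 ... y_{N-delta+1}.
    """
    delta = len(W)
    outputs = []
    for n in range(len(x) - delta + 1):
        total = b
        for i in range(delta):
            for j in range(len(W[i])):
                total += W[i][j] * x[n + i][j]
        outputs.append(relu(total))
    return outputs


if __name__ == "__main__":
    region = [[1, 0], [0, 1], [1, 1], [2, 0]]   # N = 4 words, k = 2
    filt = [[1, -1], [0.5, 0.5]]                 # delta = 2
    print(conv1d(region, filt, 0.0))
```

With N = 4 and δ = 2 the output has N − δ + 1 = 3 entries, matching the length given in Eq. (2).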

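Regions differ in text length, so the corresponding

Pooling layer: max-pooling filters the text features globally, reduces the number of parameters, filters noise, reduces redundant information, and effectively mitigates overfitting. It also extracts the local dependencies within each region and keeps the most salient information. Through pooling, each filter yields a single value, and the text feature vector finally produced by the pooling layer is

The pooling strategy of the CNN in this paper is max-pooling, which makes the features invariant to position (translation). For sentiment classification, however, the position of a feature is crucial, and max-pooling discards it. To compensate, this paper introduces a bidirectional GRU network (Bi-GRU) as a second way of extracting features from the word vectors, complementing the CNN output.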
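The effect of max-pooling, including the loss of position information noted above, can be seen in a few lines. This is a minimal sketch assuming one feature map per filter; the function name `max_pool` is illustrative.

```python
def max_pool(feature_maps):
    """Keep the largest activation of each filter's feature map."""
    return [max(feature_map) for feature_map in feature_maps]


if __name__ == "__main__":
    # Feature maps of different lengths collapse to one value per filter.
    print(max_pool([[0.1, 0.9, 0.2], [0.3, 0.0]]))
    # The same peak at two different positions gives the same pooled
    # value, so the position of the feature is no longer recoverable.
    print(max_pool([[0.9, 0.2, 0.1]]) == max_pool([[0.1, 0.2, 0.9]]))
```

Because the output has one entry per filter regardless of sentence length, regions of different lengths yield feature vectors of the same size.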

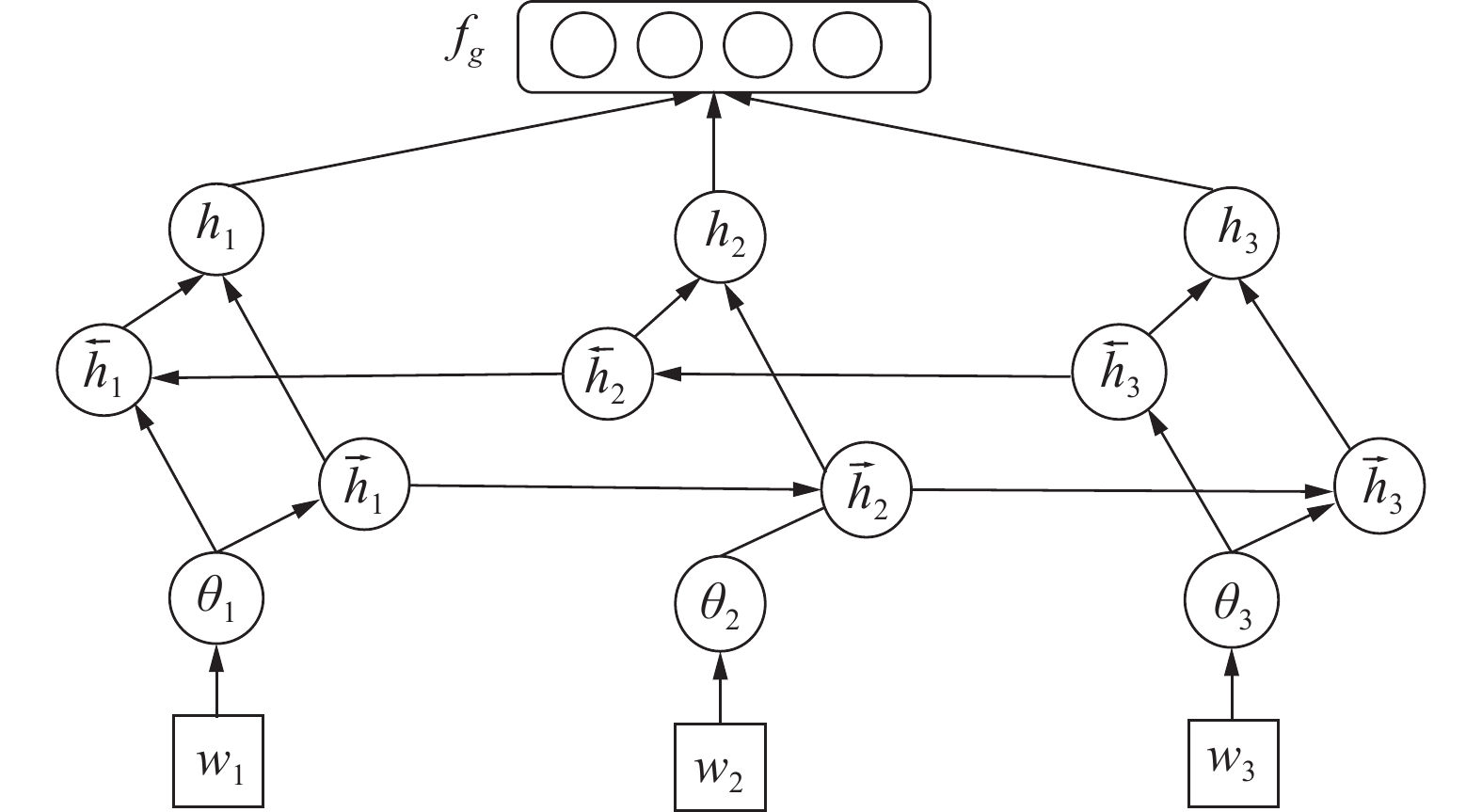

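The Bi-GRU is an extension of the GRU that captures context in the forward and backward directions at the same time, and it achieves higher accuracy than a GRU. It also has low complexity, little dependence on character vectors, and a fast response time. In the Bi-GRU structure, one recurrent network processes each training sequence in the forward direction and another processes it in the backward direction. At time t, the activation of the Bi-GRU unit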

| ${\tilde h_t} = {\rm{tanh}}\left( {{{W}}{x_t} + {{U}}\left( {{r_t} \odot {h_{t - 1}}} \right)} \right)$ | (3) |

| $h_t^j = \left( {1 - z_t^j} \right)h_{t - 1}^j + z_t^j\tilde h_t^j$ | (4) |
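Equations (3) and (4) can be traced in code with a scalar toy version of one GRU unit. The update gate $z_t$ and reset gate $r_t$ are not defined in the surviving text, so the sketch assumes the standard sigmoid gates; the parameter names (`Wz`, `Uz`, `Wr`, `Ur`), the zero initial state, and the scalar input are all simplifying assumptions.

```python
import math


def sigmoid(value):
    return 1.0 / (1.0 + math.exp(-value))


def gru_step(x_t, h_prev, p):
    """One GRU update for a single hidden unit (scalar input and state)."""
    z = sigmoid(p["Wz"] * x_t + p["Uz"] * h_prev)   # update gate (assumed form)
    r = sigmoid(p["Wr"] * x_t + p["Ur"] * h_prev)   # reset gate (assumed form)
    h_cand = math.tanh(p["W"] * x_t + p["U"] * (r * h_prev))  # Eq. (3)
    return (1.0 - z) * h_prev + z * h_cand                      # Eq. (4)


def run_gru(xs, p):
    """Run the unit over a sequence, starting from a zero state."""
    h, states = 0.0, []
    for x_t in xs:
        h = gru_step(x_t, h, p)
        states.append(h)
    return states


def bi_gru(xs, p_fwd, p_bwd):
    """Pair forward and backward states for every time step."""
    forward = run_gru(xs, p_fwd)
    backward = run_gru(xs[::-1], p_bwd)[::-1]
    return list(zip(forward, backward))


if __name__ == "__main__":
    params = {name: 0.5 for name in ("Wz", "Uz", "Wr", "Ur", "W", "U")}
    print(bi_gru([1.0, -1.0, 0.5], params, params))
```

Each time step ends up with one state summarizing the words before it and one summarizing the words after it, which is the bidirectional context the text describes.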

For the sequence "我很开心" ("I am very happy"), the process of obtaining features with the Bi-GRU is shown in Fig. 4.

Fig. 4 Process diagram of Bi-GRU feature extraction

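In this paper, the feature $f_c$ output by the CNN and the feature $f_g$ output by the Bi-GRU are concatenated to form the sentence representation $S'$: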

| ${{S}}' = {f_c} \oplus {f_g}$ | (5) |

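After a linear transformation of $S'$, the score $k$ of the sentiment category to which the corresponding word belongs is obtained: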

| $k = {{{U}}_1}{{S}}' + b$ | (6) |

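where $U_1$ is the weight matrix and $b$ is the bias. The score of the sentiment category to which the current word belongs is

The score $k$ of each word's sentiment category is fed into the Softmax function. Because the large vocabulary makes the vectors high-dimensional and the computation expensive, this paper uses noise contrastive estimation [18] to approximate maximum likelihood estimation of the Softmax function. The model is trained efficiently by sampling, with the goal of maximizing the probability of positive samples while making the probability of negative samples as small as possible. The loss functions of the bidirectional network are defined as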

| $\vec {{E}} = \mathop \sum \limits_{t = 1}^{m - 1} - \log\sigma \left( {{{\overrightarrow {{{{h}}_t}} }^{\rm{T}}}{v_{{w_{t + 1}}}}} \right) + \mathop \sum \limits_{i = 1,{w_i} \in N}^l \log\sigma \left( {{{\overrightarrow {{{{h}}_t}} }^{\rm{T}}}{v_{{w_i}}}} \right)$ | (7) |

| $\overleftarrow{E} = \sum\limits_{t = 1}^{m - 1} - \log\sigma \left( {\overleftarrow{h_t}}^{\rm{T}} v_{w_{t - 1}} \right) + \sum\limits_{i = 1,{w_i} \in N}^l \log\sigma \left( {\overleftarrow{h_t}}^{\rm{T}} v_{w_i} \right)$ | (8) |

where the text

| $N = \left\{ {{w_j}|{w_j} \in V\& {w_j} \notin \left\{ {{w_t},{w_{t - 1}}, \cdots ,{w_1}} \right\}} \right\}$ | (9) |

The probability obtained after feeding the sentiment category scores into the Softmax function is then

| ${p_{{w_t}}}\left( {y{\rm{|}}S'} \right) = \left[ \!\!{\begin{array}{*{20}{c}} {p({y_1} = 1|S')} \\ {p({y_2} = 1|S')} \\ {\begin{array}{*{20}{c}} \vdots \\ {p({y_m} = 1|S')} \end{array}} \end{array}} \!\!\right] = \frac{1}{{\displaystyle\sum \limits_{i = 1}^m {e^{{k_i}}}}}\left[\!\! {\begin{array}{*{20}{c}} {{e^{{k_1}}}} \\ {{e^{{k_2}}}} \\ {\begin{array}{*{20}{c}} \vdots \\ {{e^{{k_m}}}} \end{array}} \end{array}} \!\!\right]$ | (10) |

where:
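The chain from Eq. (5) through Eq. (6) to Eq. (10) can be sketched as concatenation, a linear scoring layer, and a Softmax. The sketch assumes that $f_c$ and $f_g$ are the CNN and Bi-GRU feature vectors and that $b$ is a vector with one bias per category; the toy weights are illustrative and the noise contrastive estimation used for training is not shown.

```python
import math


def concat(f_c, f_g):
    """Eq. (5): join the two feature vectors into S'."""
    return list(f_c) + list(f_g)


def linear(U1, s, b):
    """Eq. (6): one score per sentiment category."""
    return [sum(u * v for u, v in zip(row, s)) + bias
            for row, bias in zip(U1, b)]


def softmax(scores):
    """Eq. (10): normalize scores into a probability distribution."""
    peak = max(scores)                      # subtract the max for stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    s_prime = concat([1.0, 2.0], [3.0])
    k = linear([[1, 0, 0], [0, 0, 1]], s_prime, [0.0, 0.0])
    print(k, softmax(k))
```

Subtracting the largest score before exponentiating leaves the result of Eq. (10) unchanged but avoids overflow for large scores.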

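CRF classifiers and neural network classifiers each have strengths and weaknesses. A CRF model requires the corpus to be annotated manually in advance and features such as part of speech and degree to be designed by hand, whereas a neural network model learns feature vectors automatically from the training data and achieves better results [19]. However, a neural network usually needs longer training, and some of its outputs are not legal label sequences in named entity recognition, so a CRF is needed afterwards to add the labeling rules to the sequence tagging process. This paper combines the CRF and the neural network according to their respective characteristics to obtain a joint model with better performance [20].

A CRF model learns and predicts over multiple features of each sample. The CRF itself can generate feature vectors and classify; in this paper the features extracted by the hybrid neural network are used as intermediate quantities that replace the vector values in the original formula.

The emission probability in the CRF classifier is the probability that a word in the sequence belongs to each sentiment category, that is,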

| ${p_{{w_t}}}\left( {w{\rm{|}}S',y} \right) = \frac{1}{{\displaystyle \sum \limits_{i = 1}^m {e^{{k_i}}}}}\left[ {\begin{array}{*{20}{c}} {{e^{{k_1}}}} \\ {{e^{{k_2}}}} \\ {\begin{array}{*{20}{c}} \vdots \\ {{e^{{k_m}}}} \end{array}} \end{array}} \right]$ | (11) |

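Multiplying each word's emission probability by the transition probability gives the probability of a label sequence, computed as follows: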

| ${p_{tw}} = \mathop \prod \limits_{t = 1}^n {\mathit{\Phi }}\left( {{y_{{w_{t - 1}}}},{y_{{w_t}}}} \right)*{p_{{w_t}}}\left( {y{\rm{|}}S'} \right)$ | (12) |

where

| ${\rm{loss}} = {y_{tw}} - \max\left( {{p_{tw}}} \right)$ | (13) |

where:
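Finding the label sequence that maximizes the product in Eq. (12) is what the Viterbi algorithm does. The sketch below works directly with probabilities rather than logarithms to stay close to Eq. (12); the `start` weights for the first position are an assumption, since the predecessor of the first label is not specified in the surviving text, and all numbers are toy values.

```python
def viterbi(emissions, transition, start):
    """Most probable label path under Eq. (12).

    emissions[t][j]: emission probability of label j at position t.
    transition[i][j]: transition weight from label i to label j.
    start[j]: assumed weight of beginning in label j.
    Returns (best_path, best_score).
    """
    n_labels = len(start)
    score = [start[j] * emissions[0][j] for j in range(n_labels)]
    backpointers = []
    for t in range(1, len(emissions)):
        new_score, pointers = [], []
        for j in range(n_labels):
            best_i = max(range(n_labels),
                         key=lambda i: score[i] * transition[i][j])
            new_score.append(score[best_i] * transition[best_i][j]
                             * emissions[t][j])
            pointers.append(best_i)
        score = new_score
        backpointers.append(pointers)
    last = max(range(n_labels), key=lambda j: score[j])
    path = [last]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    path.reverse()
    return path, score[last]


if __name__ == "__main__":
    emissions = [[0.7, 0.3], [0.4, 0.6], [0.7, 0.3]]
    transition = [[0.9, 0.1], [0.1, 0.9]]
    print(viterbi(emissions, transition, [0.5, 0.5]))
```

In this toy case, picking the most likely label at each position independently would give the path 0, 1, 0, whereas the transition weights make 0, 0, 0 the best sequence overall. This is the kind of correction the CRF layer contributes on top of the per-word scores.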

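To verify the effectiveness of the proposed model, this paper uses the Chinese text from Task 2 of the 2014 NLPCC (Natural Language Processing and Chinese Computing) conference as the dataset. The dataset consists mainly of product reviews of moderate length. To compare the classification performance of the trained models, the dataset is split into a training set and a test set at a ratio of 8:2. The experiments are repeated several times, and the average is taken as the final result for evaluating model performance. Details of the dataset are given in Table 1.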
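The 8:2 split and the averaging over repeated runs can be sketched as below. Whether the data were shuffled before splitting, and how the runs were seeded, is not stated in the text, so the shuffling and the `seed` parameter are assumptions; `split_dataset` and `mean` are illustrative names.

```python
import random


def split_dataset(samples, train_ratio=0.8, seed=0):
    """Shuffle (assumed) and split into training and test sets."""
    rng = random.Random(seed)
    shuffled = list(samples)
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]


def mean(values):
    """Average of the scores from repeated experiments."""
    return sum(values) / len(values)


if __name__ == "__main__":
    train, test = split_dataset(range(10))
    print(len(train), len(test))
```

Changing `seed` for each repetition gives a different split per run, and the per-run scores can then be passed to `mean`.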

Table 1 Experimental data information

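Because the dataset consists mainly of product reviews, the texts tend to be short and irregularly formatted. Punctuation, Internet slang, and user nicknames, for example, all make sentiment analysis harder. To reduce noise effectively, the text is first preprocessed. The review text is segmented with NLPIR, the Chinese word segmentation system developed by the Institute of Computing Technology, Chinese Academy of Sciences, and noise is then removed, mainly punctuation and strings such as user nicknames. A word vector representation is a mapping from words to a real-valued vector space. The discrete one-hot representation of each segmented sentence is mapped to a low-dimensional space to form dense word vectors, giving the word vector mapping of the text, which is then fed into the proposed model for further feature extraction and sentiment classification.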

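3.2 Evaluation metrics

This paper uses accuracy A, recall R, and the F value as evaluation metrics. Accuracy A is the proportion of test samples that are classified correctly. Recall R is the number of samples correctly assigned to a category divided by the number of samples that truly belong to that category. The F value is a weighted harmonic mean derived from accuracy A and recall R and is commonly used to assess overall model performance. The metrics are computed as follows: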

| $ A=\frac{m+n}{m+n+l+p} $ | (14) |

| $ R=\frac{m}{m+p} $ | (15) |

| $ F=\frac{2m}{2m+l+p} $ | (16) |

Table 2 is the confusion matrix built from the classification results; it defines the letters used in the metrics above.
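The metrics can be computed directly from the four confusion matrix counts. Since the body of Table 2 is not reproduced here, the sketch assumes the reading under which Eq. (15) and Eq. (16) are the usual recall and F1: m = true positives, n = true negatives, l = false positives, p = false negatives. Accuracy is then taken as correct predictions over all predictions. The counts in the example are made up for illustration and are not results from the paper.

```python
def accuracy(m, n, l, p):
    """Correct predictions over all predictions (assumed reading)."""
    return (m + n) / (m + n + l + p)


def recall(m, p):
    """Eq. (15): true positives over all actual positives."""
    return m / (m + p)


def precision(m, l):
    """True positives over all predicted positives (not in the text)."""
    return m / (m + l)


def f_value(m, l, p):
    """Eq. (16): harmonic mean of precision and recall."""
    return 2 * m / (2 * m + l + p)


if __name__ == "__main__":
    m, n, l, p = 40, 45, 5, 10   # hypothetical counts
    print(accuracy(m, n, l, p), recall(m, p), f_value(m, l, p))
```

Under this reading, Eq. (16) equals 2PR/(P + R) with P the precision, which is why `precision` is included even though the text does not define it.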

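Table 2 Classification discrimination confusion matrix

The experiments ran on Windows 10 with 8 GB of memory. The implementation uses TensorFlow as the backend and Keras to build the deep learning model, with JetBrains PyCharm as the development environment. The network uses ReLU as the activation function and Adam as the gradient update method, with the learning rate set to 0.05.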

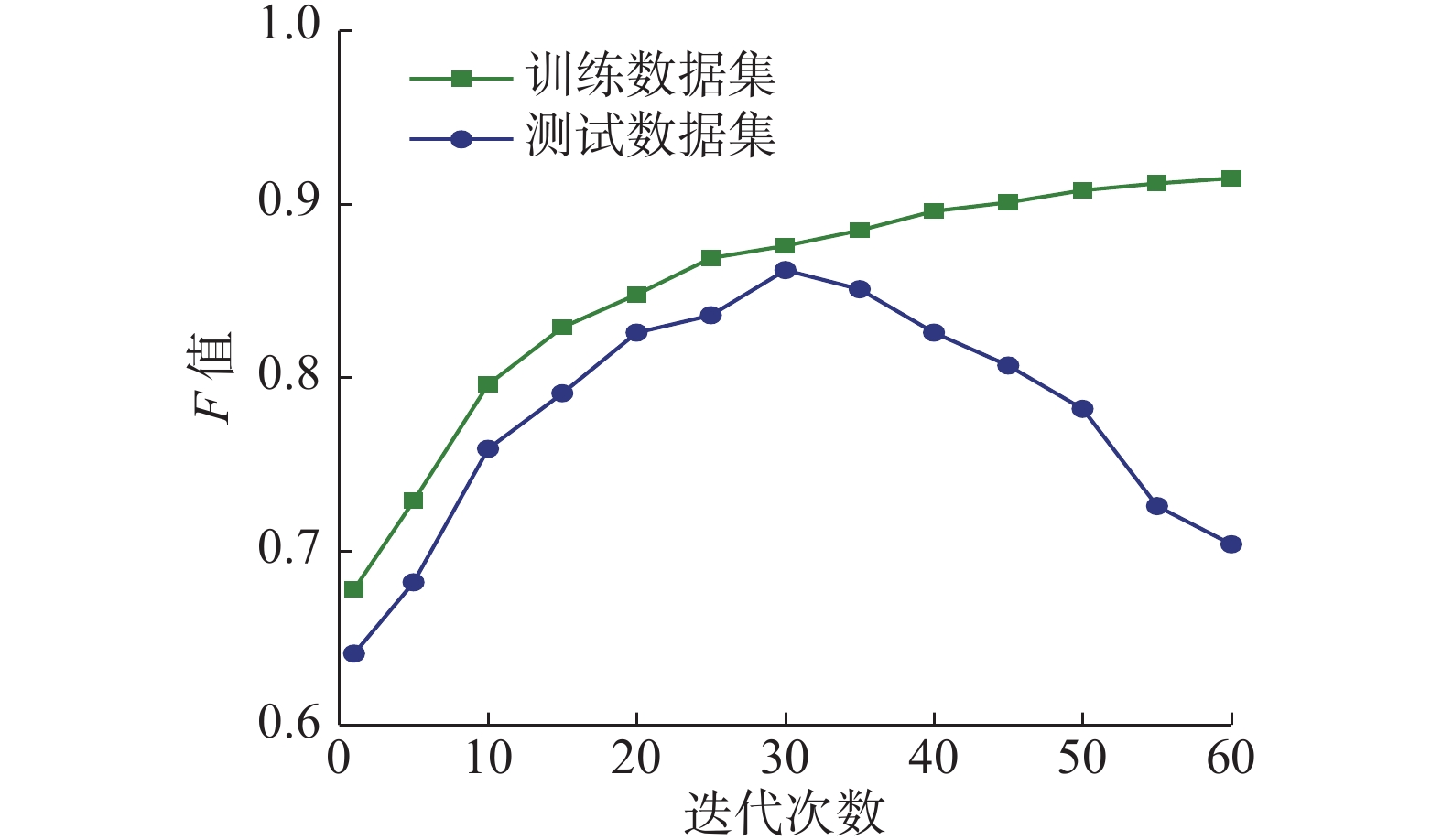

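The number of training iterations has a large effect on the results: the more iterations, the better the model fits the training data, which can eventually lead to overfitting. A single-factor experiment was therefore carried out on the number of iterations. As shown in Fig. 5, with 30 iterations the F value is at a high level on both the training set and the test set. The number of iterations is therefore set to 30, and the Dropout rate is set to 0.5 to prevent overfitting.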

Fig. 5 Trends in F values based on iterations

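The word vector dimension is an important parameter because it affects the number of parameters in the model. If the dimension is too high, the model overfits easily and does not reach the expected performance; if it is too low, the vectors cannot hold all the information needed. The optimal dimension is determined with a single-factor experiment, with results shown in Fig. 6. The dimension starts at 30 and is increased step by step. With all other variables held fixed, the F value is highest at a dimension of 80, so 80 is used as the word vector dimension during training. The parameters and functions used to train the model are listed in Table 3.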
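The single-factor procedure used for both the number of iterations and the word vector dimension can be sketched as a sweep over one parameter with everything else fixed. Here `evaluate` is a hypothetical callable that would train the model with the given value and return the test F value; the quadratic stand-in in the example is a toy function, not a result from the paper.

```python
def single_factor_sweep(candidates, evaluate):
    """Evaluate each candidate value and return the best one.

    candidates: values of the one parameter being varied.
    evaluate: hypothetical callable mapping a value to an F value.
    Returns (best_value, all_results).
    """
    results = {value: evaluate(value) for value in candidates}
    best = max(results, key=results.get)
    return best, results


if __name__ == "__main__":
    # Toy stand-in for "train the model and return its F value".
    def toy_f_value(dim):
        return 1.0 - ((dim - 80) / 100.0) ** 2

    best_dim, scores = single_factor_sweep(range(30, 131, 10), toy_f_value)
    print(best_dim)
```

The sweep changes only one factor at a time, so it does not account for interactions between parameters. A joint search would cover those at a higher training cost.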

Fig. 6 Trends in F values based on word vector dimensions

Table 3 Experimental parameter settings

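To demonstrate the effectiveness of the proposed model, it is compared with the CNN, Bi-GRU, CRF, CNN+Bi-GRU, and Bi-GRU+CRF models on sentiment analysis performance.

CNN: word vectors are fed into a CNN for classification.

Bi-GRU: word vectors are fed into a bidirectional gated recurrent unit network for classification.

CRF: word vectors are fed into a conditional random field for classification.

CNN+Bi-GRU: the CNN and Bi-GRU are trained jointly; word vectors are fed into both networks, the outputs are fused, and Softmax is used for classification.

Bi-GRU+CRF: the Bi-GRU and CRF models are chained; the trained word vectors are the input of the Bi-GRU, whose output is the input of the CRF, which produces the final sentiment analysis result.

C-BG+CRF: the sentiment analysis model proposed in this paper, combining the hybrid neural network with a conditional random field.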

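The results are shown in Fig. 7. The comparison shows that the standalone CRF model has lower accuracy and F value than the neural network models, confirming that traditional machine learning methods lag behind deep learning in sentiment analysis. The convergence speed of the proposed model is close to that of the standalone CRF model, and it outperforms the other models in accuracy, F value, and the other metrics, which demonstrates its effectiveness.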

Fig. 7 Comparison of F value in six models

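The test results of the comparison experiments are given in Table 4. The proposed model outperforms the standalone CNN, Bi-GRU, and CRF models in accuracy, recall, and F value. The experiments show that the proposed model performs better on sentiment analysis than any single model.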

Table 4 Test results of different models

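Compared with the CNN+Bi-GRU model, the proposed model classifies better. Because it uses a CRF rather than the Softmax function as the classifier, it handles abnormal labels more accurately, which improves the performance of the sentiment classifier. Compared with the Bi-GRU+CRF model, the proposed model has a higher F value and converges faster. The features obtained by training the two neural networks together are richer than those of a single network, which improves accuracy. The experiments show that the proposed model also performs better on sentiment analysis than the other combined models.

4 Conclusion

This paper analyzes document-level text and proposes a sentiment analysis model that combines a hybrid neural network with a CRF. A text is divided into regions by sentence, and the semantic information and structural features obtained by the CNN and the Bi-GRU are combined. The sentence vector representations obtained from the two networks are concatenated, and a conditional random field is used as the classifier. The model takes full account of context and learns richer features. Training and testing on the NLPCC 2014 dataset show that, overall, the method classifies the sentiment of document-level text effectively and achieves good results. Future work will consider incorporating topic word information into the word vectors to better handle sentiment analysis of documents with multiple topics.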

[1] CAMBRIA E. Affective computing and sentiment analysis[J]. IEEE intelligent systems, 2016, 31(2): 102-107.

[2] CHEN Long, GUAN Ziyu, HE Jinhong, et al. A survey on sentiment classification[J]. Journal of computer research and development, 2017, 54(6): 1150-1170. DOI:10.7544/issn1000-1239.2017.20160807

[3] YANG Ligong, ZHU Jian, TANG Shiping. Survey of text sentiment analysis[J]. Journal of computer applications, 2013, 33(6): 1574-1578, 1607. DOI:10.3724/SP.J.1087.2013.01574

[4] TABOADA M, BROOKE J, TOFILOSKI M, et al. Lexicon-based methods for sentiment analysis[J]. Computational linguistics, 2011, 37(2): 267-307. DOI:10.1162/COLI_a_00049

[5] DING Shengchun, WU Jingchanyuan, LI Hongmei. Chinese micro-blogging opinion recognition based on SVM model[J]. Journal of the China society for scientific and technical information, 2016, 35(12): 1235-1243. DOI:10.3772/j.issn.1000-0135.2016.012.001

[6] LIANG Jun, CHAI Yumei, YUAN Huibin, et al. Deep learning for Chinese micro-blog sentiment analysis[J]. Journal of Chinese information processing, 2014, 28(5): 155-161. DOI:10.3969/j.issn.1003-0077.2014.05.019

[7] COLLOBERT R, WESTON J, BOTTOU L, et al. Natural language processing (almost) from scratch[J]. Journal of machine learning research, 2011, 12: 2493-2537.

[8] KIM Y. Convolutional neural networks for sentence classification[C]//Proceedings of 2014 Conference on Empirical Methods in Natural Language Processing. Doha, Qatar, 2014: 1746-1751.

[9] YIN Wenpeng, KANN K, YU Mo, et al. Comparative study of CNN and RNN for natural language processing[J]. 2017.

[10] TANG Duyu, QIN Bing, LIU Ting. Document modeling with gated recurrent neural network for sentiment classification[C]//Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing. Lisbon, Portugal, 2015: 1422-1432.

[11] HOCHREITER S, SCHMIDHUBER J. Long short-term memory[J]. Neural computation, 1997, 9(8): 1735-1780. DOI:10.1162/neco.1997.9.8.1735

[12] ZHU Xiaodan, SOBHANI P, GUO Hongyu. Long short-term memory over recursive structures[C]//Proceedings of the 32nd International Conference on Machine Learning. Lille, France, 2015: 1604-1612.

[13] BAI Jing, LI Fei, JI Donghong. Attention based BiLSTM-CNN Chinese microblogging position detection model[J]. Computer applications and software, 2018, 35(3): 266-274. DOI:10.3969/j.issn.1000-386x.2018.03.051

[14] LIU Yang. The research of time series prediction based on GRU neural network[D]. Chengdu: Chengdu University of Technology, 2017.

[15] WEI Wei, XIANG Yang, CHEN Qian. Survey on Chinese text sentiment analysis[J]. Journal of computer applications, 2011, 31(12): 3321-3323.

[16] QI Xiaoying. Semantic intelligence analysis of artificial intelligence news events based on NLPIR[J]. China computer & communication, 2019, 31(20): 104-107.

[17] MIKOLOV T, CHEN Kai, CORRADO G, et al. Efficient estimation of word representations in vector space[C]//Proceedings of Workshop at ICLR. [S.l.], 2013.

[18] MNIH A, TEH Y W. A fast and simple algorithm for training neural probabilistic language models[C]//Proceedings of the 29th International Conference on Machine Learning. Edinburgh, UK, 2012: 419-426.

[19] WANG Hao, DENG Sanhong. Comparative study on HMM and CRFs applying in information extraction[J]. New technology of library and information service, 2007(12): 57-63.

[20] WANG Hongfei. Research of sentiment analysis for Chinese micro blog based on conditional random field[D]. Guangzhou: Guangdong University of Technology, 2013.

2021, Vol. 16
