2. College of Computer Science and Technology, Huaqiao University, Xiamen 361021, China

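Visual tracking is an active research topic in computer vision, with important research and application value in virtual reality, human-computer interaction, intelligent surveillance, augmented reality, and machine perception. By analyzing a video image sequence, visual tracking matches the detected candidate target regions and locates the tracked target in the sequence. Tracking algorithms have produced many research results, but they still face major challenges in complex real-world scenes. How to track more robustly and accurately under occlusion, deformation, and low video resolution remains the core research problem[1].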

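A visual tracking algorithm generally has two parts: target appearance modeling and a tracking strategy.

1) Target appearance modeling

Appearance models fall into two classes, discriminative and generative[2-3]. Discriminative models treat tracking as a binary classification problem that separates foreground from background. B. Babenko et al.[4] proposed the multiple instance learning (MIL) algorithm, which addresses the shortage of training samples in tracking by introducing a multiple instance learning mechanism and effectively suppresses tracker drift. Reference [5] proposed a metacognitive particle filter (MCPF) tracking algorithm that monitors abrupt changes and quickly adjusts its decision mechanism to achieve stable tracking. Generative models model the target directly without considering background information. Reference [6] proposed the L1 tracker, which represents the target sparsely but has high computational complexity. K. Zhang et al.[7] proposed compressive tracking (CT), which extracts features with a sparse measurement matrix to build a sparse, robust appearance model and achieves fast, effective, and robust tracking. Reference [8] introduced wavelet texture features to compensate for the poor adaptability of color features alone to environmental change, giving more stable tracking than a single feature.

2) Tracking strategy

A motion model estimates the likely position of the target, and prior knowledge narrows the search range. Representative methods include the hidden Markov model[9], the Kalman filter[10], mean shift[11], and the particle filter[12]. Among these, the particle filter is widely used because it is relatively insensitive to local minima and computationally efficient. In recent years, correlation filter trackers have also performed well in the tracking field. D.S. Bolme et al.[13] first introduced correlation filtering into tracking by designing a minimum output sum of squared error (MOSSE) filter and locating the target at the maximum filter response. The CSK algorithm of J.F. Henriques et al.[14] uses a circulant matrix structure to compute the correlation between adjacent frames and improves accuracy using grayscale features. Reference [15] builds on CSK: it constructs the classifier training samples by cyclic shifts so that the data matrix becomes circulant, and it introduces multi-channel HOG, color, and grayscale features, improving both speed and accuracy.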

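Most traditional tracking algorithms build their models directly on pixel-value features from the video sequence. Such shallow pixel-level features cope poorly when complex scenes pose major challenges during tracking. Because convolutional neural networks are powerful feature extractors, this paper designs a training-free convolutional feature extraction method and, within a particle filter framework, uses a kernel function to accelerate the convolution, yielding a fast convolutional neural network tracking algorithm. Comparison with other algorithms verifies the effectiveness of the proposed algorithm.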

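1 Related work

Since 2013, deep learning has made considerable progress in tracking. Deep learning methods such as deep neural networks and convolutional neural networks can mine multi-layer representations of data, and higher-level representations better reflect the underlying nature of the data. Compared with traditional shallow learned features, tracking algorithms based on high-level features can improve tracking efficiency[16].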

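1.1 CNN feature extraction structure

The structure of a convolutional neural network (CNN) resembles that of a biological neural network. Local connections, weight sharing, and spatio-temporal subsampling reduce the network complexity and the number of weights, which gives CNNs an advantage on high-dimensional images.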

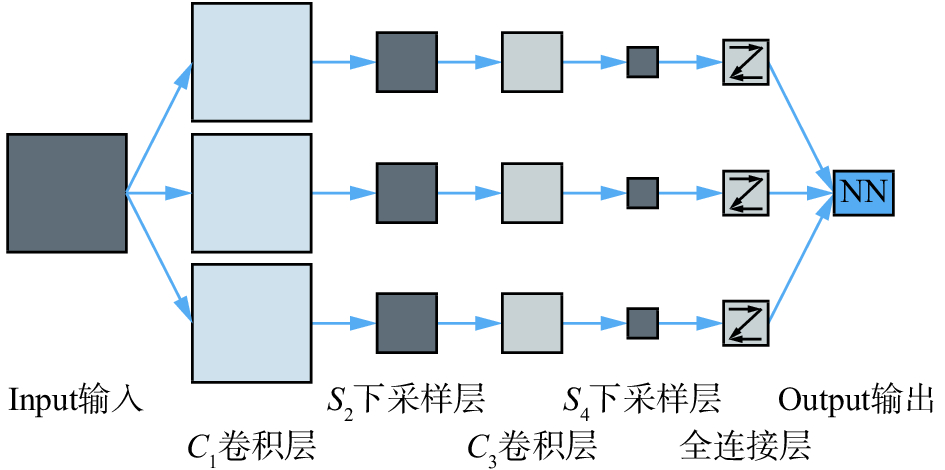

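A CNN is multi-layered: to a traditional neural network it adds convolutional layers for feature extraction and subsampling layers that provide shift invariance. Each layer consists of several two-dimensional feature maps, and each map consists of many independent neurons. To extract convolutional features, the original input image is first cut into a large number of small local patches. A convolutional network model is then trained on these patches, which yields the convolution filters of the neurons in each convolutional layer. These filters are convolved with a new input sample image to extract its abstract convolutional features, giving the deep features of the original image. Fig. 1 shows a simple convolutional feature extraction structure. Convolving the input image produces several feature maps in layer C1. The pixels in each group of a feature map are then summed, weighted, offset by a bias, and passed through an activation function (Sigmoid or ReLU) to give the feature maps of layer S2. These maps are filtered again to give layer C3, which produces S4 in the same way that S2 was produced. Finally, the pixel values are concatenated into a vector and fed to a conventional neural network to produce the output.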

Fig. 1 Convolution feature extraction structure
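The C1-to-S2 step described above (sum each group of pixels, apply a weight and a bias, then an activation function) can be sketched as follows. This is only an illustration: the function name `subsample`, the non-overlapping 2×2 grouping, the scalar weight and bias, and the choice of the sigmoid are assumptions, not details given in the paper.

```python
import math


def subsample(feature_map, weight, bias, group=2):
    """Turn one C-layer map into one S-layer map (illustrative sketch).

    Each non-overlapping group x group block is summed, scaled by
    `weight`, shifted by `bias`, and passed through a sigmoid.
    """
    size = len(feature_map) // group
    out = []
    for r in range(size):
        row = []
        for c in range(size):
            # Sum the pixels of one group.
            total = sum(feature_map[r * group + a][c * group + b]
                        for a in range(group) for b in range(group))
            # Weight, bias, then sigmoid activation.
            row.append(1.0 / (1.0 + math.exp(-(weight * total + bias))))
        out.append(row)
    return out
```

Applied to a 4×4 map, the sketch returns a 2×2 map, which matches the halving of resolution between a convolutional layer and the subsampling layer that follows it.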

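Deep learning faces two major problems in tracking: 1) a deep network needs many samples during training, whereas tracking provides only the target in the first frame, so training samples are scarce; 2) deep network models have high computational time complexity, so real-time performance is poor.

To address these problems, N.Y. Wang et al.[17] proposed DLT, the first algorithm to apply deep learning to tracking. It pre-trains a stacked denoising autoencoder offline on the ImageNet dataset to gain a generic object representation and updates the autoencoder during tracking. K. Zhang et al.[18], drawing on the human visual processing system, simplified the convolutional network structure and used normalized image patches randomly extracted from the target region as the filters of the network, achieving fast feature extraction without training a convolutional neural network. The MDNet algorithm of Reference [19] interleaves the training procedure and the training data and focuses on hard examples in the background during detection, which markedly reduces tracker drift.

Deep learning algorithms require a dedicated deep learning hardware platform and extensive pre-training to learn deep features. They suffer from scarce samples, high time complexity, demanding hardware requirements, and poor real-time tracking performance. Convolutional neural networks gain a degree of translation, scale, and rotation invariance during feature extraction and can greatly reduce network size. Exploiting these properties, this paper adopts the training-free convolutional feature extraction method of Reference [18], simplifies the network to a two-layer feed-forward structure, extracts high-dimensional abstract features of the target by hierarchical filter convolution, and accelerates the computation with a Gaussian kernel function, yielding a fast convolutional neural network tracking algorithm.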

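2 Convolutional neural network tracking algorithm with a Gaussian kernel function

To address the high time complexity of convolution, this paper introduces a Gaussian kernel function transform that accelerates the convolution operations. To address the shortage of training samples for deep learning, it uses a simple two-layer feed-forward network as a training-free feature extractor.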

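2.1 Kernel function convolution

The convolution in this paper is accelerated by a Gaussian kernel function transform. Reference [15] uses the sub-window Gaussian kernel function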

| ${k({{x,\,x'}})} = \exp ( - \frac{1}{{{{{\sigma}} ^2}}}({\left\| {{x}} \right\|^2} + {\left\| {{{x}}'} \right\|^2} - 2{F^{ - 1}}(\sum\limits_d {{{{\hat{ x}}}^*}} \odot {\hat{ x}}')))$ | (1) |

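where "*" denotes the complex conjugate, $\hat{x}$ is the discrete Fourier transform of $x$, $F^{-1}$ is the inverse discrete Fourier transform, and $\odot$ is the element-wise product.

Assume the classifier is trained by kernel ridge regression as in Reference [15]; its solution is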

| ${{\alpha }} = {({{K}} + \lambda {{I}})^{ - 1}}{{y}}$ | (2) |

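where $K$ is the kernel matrix, $I$ is the identity matrix, $\lambda$ is the regularization parameter, and $y$ is the vector of regression targets. When $K$ is circulant, the solution can be computed in the Fourier domain: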

| ${{\hat{ \alpha }}^*} = {\hat{ y}} \times {({{\hat{ k}}^{xx'}} + \lambda )^{ - 1}}$ | (3) |

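where $\hat{k}^{xx'}$ is the discrete Fourier transform of the kernel correlation in Eq. (1), and the inverse is taken element-wise.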
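To make Eqs. (1) and (3) concrete, here is a minimal sketch for single-channel one-dimensional signals, using a naive DFT from the standard library. The paper works with two-dimensional, multi-channel features (the sum over $d$) and would use a fast FFT; the function names and the 1-D simplification are assumptions made for illustration only.

```python
import cmath
import math


def dft(x):
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(xf):
    """Naive inverse discrete Fourier transform."""
    n = len(xf)
    return [sum(xf[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def gaussian_kernel_correlation(x, xp, sigma):
    """Eq. (1) for 1-D real signals: kernel value for every cyclic shift."""
    xf, xpf = dft(x), dft(xp)
    # conj(x_hat) * x'_hat in the Fourier domain is the cyclic cross-correlation.
    cross = idft([a.conjugate() * b for a, b in zip(xf, xpf)])
    sq_norms = sum(v * v for v in x) + sum(v * v for v in xp)
    return [math.exp(-(sq_norms - 2.0 * c.real) / sigma ** 2) for c in cross]


def alpha_hat(k, y, lam):
    """Right-hand side of Eq. (3): y_hat / (k_hat + lambda), element-wise."""
    return [yf / (kf + lam) for yf, kf in zip(dft(y), dft(k))]


x = [1.0, 2.0, 0.5, -1.0]
x_shifted = [-1.0, 1.0, 2.0, 0.5]  # x rotated by one position
k = gaussian_kernel_correlation(x, x_shifted, sigma=2.0)
peak = max(range(len(k)), key=k.__getitem__)  # index of the best-matching shift
```

The point of the Fourier form is that one transform pair evaluates the kernel for all cyclic shifts at once, instead of one Gaussian evaluation per shifted sample. In the example, `peak` identifies the shift that aligns the two signals.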

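This paper uses a convolutional network to design a hierarchical representation of the target. In the first frame, the target is normalized to $n \times n$ pixels, and an initial filter bank is extracted from it by sliding window and K-means clustering.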

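Simple-layer features. Preprocessing normalizes the image to $n \times n$. The $i$-th target filter $F_i^o$, of size $w \times w$, is convolved with the input image $I$ to give the target feature map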

| ${{S}}_i^o = {{F}}_i^o \otimes {{I}},\,\,{{S}}_i^o \in {R^{{{(n - w + 1)}^2}}}$ | (4) |

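The context around the tracked target provides much useful information for separating the target from the background. The region around the target is randomly sampled to obtain the background filters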

| ${F_l} = \left\{ {{{F}}_1^b,{{F}}_2^b, \cdots ,{{F}}_l^b} \right\}$ | (5) |

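Finally, the background filter is subtracted from the target filter, and the difference is convolved with the input image $I$ to give the simple-layer feature map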

| ${{{S}}_i} = {{S}}_i^o - {{S}}_i^b = ({{F}}_i^o - {{F}}_i^b) \otimes {{I}},\,\,i \in \{ 1,2, \cdots ,d\} $ | (6) |
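Eqs. (4) and (6) can be illustrated with a small valid-mode filtering routine. This is a sketch under stated assumptions: the names `filter_valid` and `simple_layer` are hypothetical, images and filters are plain nested lists, and the filter is applied by sliding-window correlation (no kernel flip), which the paper does not specify.

```python
def filter_valid(image, kernel):
    """Slide a w x w filter over an n x n image, keeping only full overlaps.

    The result has (n - w + 1) x (n - w + 1) entries, as in Eq. (4).
    """
    n, w = len(image), len(kernel)
    out = n - w + 1
    return [[sum(kernel[a][b] * image[r + a][c + b]
                 for a in range(w) for b in range(w))
             for c in range(out)]
            for r in range(out)]


def simple_layer(image, target_filters, background_filters):
    """Eq. (6): filter the image with (F_i^o - F_i^b) for each i."""
    maps = []
    for f_o, f_b in zip(target_filters, background_filters):
        w = len(f_o)
        diff = [[f_o[a][b] - f_b[a][b] for b in range(w)] for a in range(w)]
        maps.append(filter_valid(image, diff))
    return maps
```

Because filtering is linear, subtracting the filters first costs one pass over the image per filter pair rather than two, and gives the same map as computing $S_i^o$ and $S_i^b$ separately and subtracting them.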

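Complex-layer features. To strengthen the feature representation of the target, this paper stacks the $d$ simple-layer feature maps into a three-dimensional tensor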

| ${{C}} \in {R^{(n - w + 1) \times (n - w + 1) \times d}}$ | (7) |

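These features are translation invariant. Because the image is normalized, the features are robust to target scale, and the complex-layer features preserve the local geometric information of targets at different scales. Reference [20] indicates that tracking can be achieved with a shallow neural mechanism, so this paper does not use a high-level object model. Instead it uses a simple template matching scheme combined with a particle filter.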

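2.3 Particle filter

The algorithm is built on the particle filter framework. Let $S_t$ denote the target state and $Z_t$ the observation at frame $t$. The posterior probability of the state is estimated recursively as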

| $\begin{array}{c} p({{{S}}_t}|{{{Z}}_t}) \propto \\ p({{{Z}}_t}|{{{S}}_t})\int {p({{{S}}_t}|{{{S}}_{t - 1}})p({{{S}}_{t - 1}}|{{{Z}}_{t - 1}}){\text{d}}{{{S}}_{t - 1}}} \\ \end{array} $ | (8) |

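where $p(Z_t|S_t)$ is the observation model and $p(S_t|S_{t-1})$ is the motion model, which is taken to be Gaussian: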

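| $p({{{S}}_t}|{{{S}}_{t - 1}}) = N({{{S}}_t}|{{{S}}_{t - 1}},\Sigma )$ | (9) |

where $\Sigma$ is the covariance matrix of the state parameters. The observation model of the $i$-th candidate state $S_t^i$ is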

| $p({{{Z}}_t}|{{S}}_t^i) \propto {{\text{e}}^{ - \left| {{\text{vec}}({{{C}}_t}) - {\text{vec}}({{C}}_t^i)} \right|_2^1}}$ | (10) |

The whole tracking process therefore reduces to finding the maximum response:

| ${{\hat{ S}}_t} = \arg {\max _{\{ {{S}}_t^i\} _{i = 1}^{\text{N}}}}p({{{Z}}_t}|{{S}}_t^i)p({{S}}_t^i)$ | (11) |
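One tracking step built from Eqs. (9) to (11) might look like the sketch below. Several things here are assumptions for illustration: the state is reduced to a short tuple of numbers, the covariance is diagonal (one standard deviation per state parameter), `extract_feature` is a hypothetical callable that returns the vectorized feature $\text{vec}(C_t^i)$ of a candidate, and the prior term in Eq. (11) is treated as uniform over particles drawn from the motion model.

```python
import math
import random


def propagate(prev_state, sigmas, n_particles, rng):
    """Draw candidate states from the Gaussian motion model of Eq. (9)."""
    return [tuple(rng.gauss(s, sd) for s, sd in zip(prev_state, sigmas))
            for _ in range(n_particles)]


def likelihood(template_vec, candidate_vec):
    """Eq. (10): exp(-||vec(C_t) - vec(C_t^i)||_2)."""
    dist = math.sqrt(sum((a - b) ** 2 for a, b in zip(template_vec, candidate_vec)))
    return math.exp(-dist)


def track_step(prev_state, sigmas, template_vec, extract_feature,
               n_particles=100, seed=0):
    """Eq. (11): return the candidate with the largest response."""
    rng = random.Random(seed)
    particles = propagate(prev_state, sigmas, n_particles, rng)
    weights = [likelihood(template_vec, extract_feature(p)) for p in particles]
    best = max(range(n_particles), key=weights.__getitem__)
    return particles[best], weights[best]
```

In a real tracker `extract_feature` would crop and normalize the candidate region and run it through the two-layer network. The seed is fixed only to keep the sketch reproducible.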

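The preceding sections described feature extraction with a simple feed-forward convolutional network, accelerated by a Gaussian kernel function, to obtain a deep, complex representation of the target. Fig. 2 shows the flow of the tracking algorithm that combines these convolutional features with a particle filter.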

Fig. 2 Tracking flow chart

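The main steps of the tracking algorithm are:

1) Input: read the video sequence and the given tracking target.

2) Initialization: set the parameters for normalization, the particle filter, the network size, and the sample capacity.

3) Initial filter extraction: from the target in the first frame, extract an initial filter bank by sliding window and K-means clustering. The subsequent network uses it as its filters.

4) Convolutional feature extraction: use the convolutional network structure described above to extract the deep abstract features of each candidate sample, accelerated by the Gaussian kernel function.

5) Particle filtering: following the particle filter algorithm, generate a normalized candidate image sample set of the specified size, then identify and match the target.

6) Network update: a threshold is applied. When the highest confidence among all particles falls below the threshold, the target appearance is considered to have changed substantially and the current network can no longer adapt, so it is updated. The initial filter bank and the foreground filter bank obtained during tracking are combined by weighted averaging to give the new filters of the convolutional network.

7) Template update: positive samples are drawn at equal size within ±1 pixel of the target center in the first frame. Negative samples are drawn at two ranges of distance, near and far, from the target in the current frame. To reduce drift during tracking, an update threshold f=5 is preset, and the target template is updated every 5 frames.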
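The two update rules in steps 6) and 7) can be sketched as below. The function names, the flattened list representation of a filter, and the weighting parameter `rho` are assumptions: the paper states that the two filter banks are combined by weighted averaging but does not say which bank receives which weight.

```python
def needs_network_update(confidences, threshold):
    """Step 6: update when even the best particle is below the threshold."""
    return max(confidences) < threshold


def update_filters(initial_bank, current_bank, rho):
    """Step 6: weighted average of the initial and current filter banks.

    Each bank is a list of flattened filters; rho weights the initial bank
    (assumed convention).
    """
    return [[rho * a + (1.0 - rho) * b for a, b in zip(f_init, f_cur)]
            for f_init, f_cur in zip(initial_bank, current_bank)]


def needs_template_update(frame_index, interval=5):
    """Step 7: refresh the target template every `interval` frames."""
    return frame_index % interval == 0
```

Keeping the initial filter bank in the average anchors the network to the first-frame appearance, which is one way to limit drift when the foreground filters have adapted to a wrong region.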

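3 Experimental results and analysis

The algorithm was implemented in MATLAB 2014a on a PC with an Intel Core i3-3220 at 3.3 GHz and 8 GB of memory, and was evaluated on the test video sequences of the OTB2013 database[3,21]. The simulation parameters were: sliding-window patch size 6×6, 100 filters, normalized size 32×32, learning factor 0.95, and the standard deviations of the particle filter target state set to: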

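Because of space limits, only a few representative tracking results are given. Fig. 3(a)-(d) compares CT[7], KCF[15], CNT[18], and the proposed algorithm on four sequences: Crossing, Football, Walking, and Walking2. All four involve target deformation. Crossing, Walking, and Walking2 are low-resolution scenes, and Football, Walking, and Walking2 contain partial occlusion. In Crossing, a low-resolution surveillance scene, the scale of the target changes as it moves. At frame 45 the target meets lighting interference, and a moving vehicle causes background clutter. The compared algorithms also track successfully, and the proposed algorithm performs best among them. In Football, the target deforms heavily throughout its motion, and the many similar targets in the sequence cause background clutter. At frame 150 the target enters a crowd and is partially occluded. The proposed algorithm performs best among all algorithms. In Walking, a low-resolution surveillance scene, the scale of the target changes during its motion, and at frame 90 a pillar occludes the target. The proposed algorithm again performs best. In Walking2, a low-resolution surveillance scene, the target undergoes scale change, occlusion, and background clutter. At frames 190 and 360 the target faces both background clutter from a similar target and occlusion. The proposed algorithm again performs best.

The proposed algorithm therefore tracks effectively under deformation, occlusion, low resolution, and other complex background interference.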

Fig. 3 Examples of the tracking results on video sequences

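To measure performance, the center location error and the distance precision are reported for some of the sequences[3,21]. The center location error (CLE) is the Euclidean distance between the tracked target center and the ground-truth center, $\varepsilon = \sqrt{(x - x_g)^2 + (y - y_g)^2}$, where $(x, y)$ is the estimated center and $(x_g, y_g)$ is the ground-truth center.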

Table 1 Center location error (pixels)

Table 2 Distance precision (DP)

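For speed, the proposed algorithm was compared in the same experimental environment with CNT, which also extracts features with a convolutional network structure. CNT does not use Gaussian kernel acceleration and runs at 1~2 f/s. The proposed algorithm uses it and averages 5 f/s. The experiments show that Gaussian kernel acceleration raises the speed without reducing tracking accuracy.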

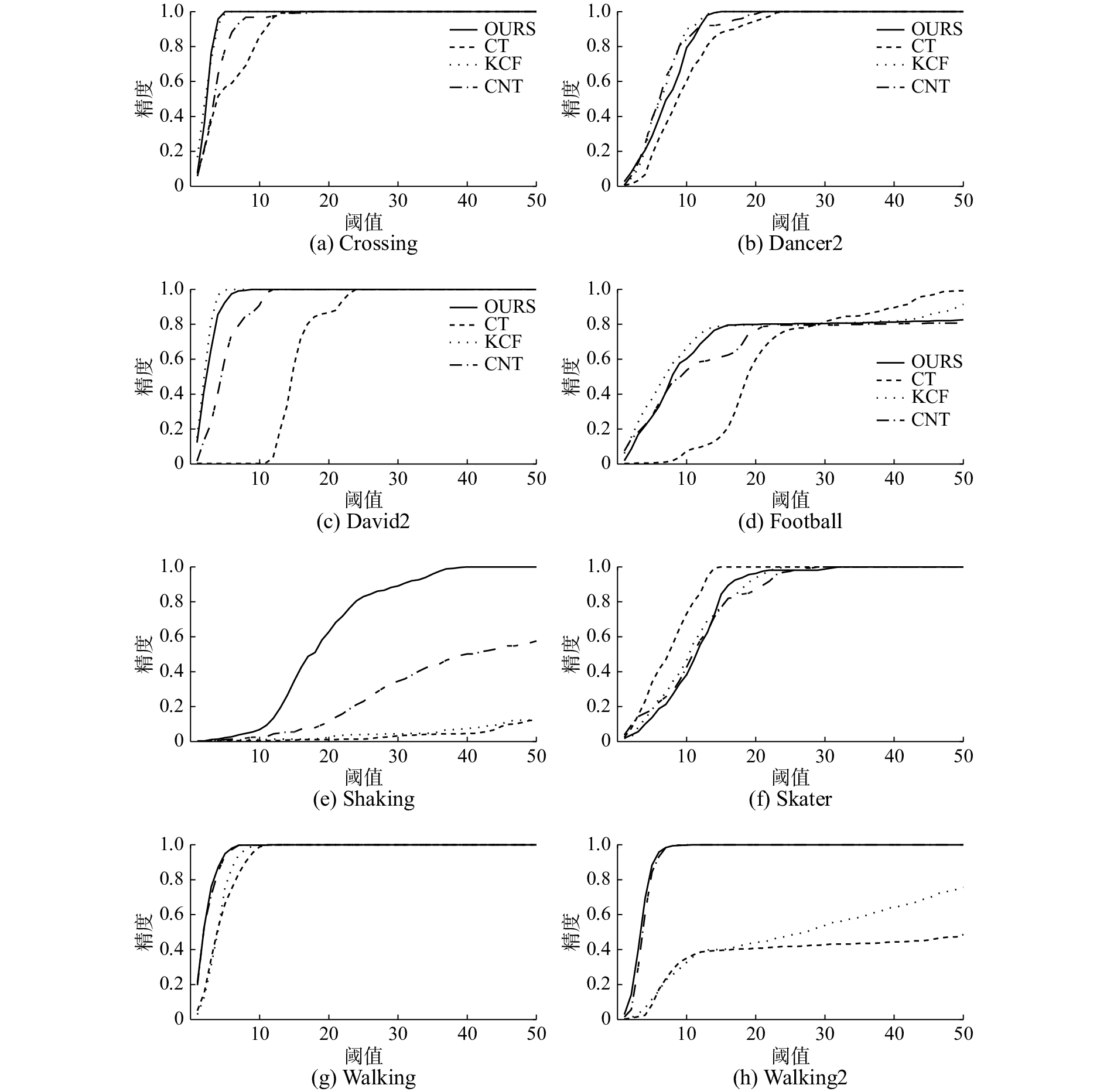

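Tracking precision curves[4] were plotted for the four algorithms. A precision curve fixes a threshold on the distance between the estimated and true target positions, counts the frames in which that distance is below the threshold, and reports the count as a percentage of all frames in the video. Fig. 4 shows the precision curves of the four algorithms on eight video sequences. The horizontal axis is the threshold and the vertical axis is the precision. A tracker that reaches higher precision at a lower threshold performs better. The curves clearly show that the proposed algorithm has high tracking precision.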

Fig. 4 Tracking accuracy curve
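The two evaluation measures, center location error and distance precision, and the precision curve built from them, can be computed as in this sketch. The function names and the representation of centers as `(x, y)` tuples are assumptions. The strict "below the threshold" comparison follows the description in the text.

```python
import math


def center_location_error(pred, truth):
    """Euclidean distance between estimated and ground-truth centers."""
    return math.hypot(pred[0] - truth[0], pred[1] - truth[1])


def distance_precision(preds, truths, threshold):
    """Fraction of frames whose center error is below the threshold."""
    errors = [center_location_error(p, g) for p, g in zip(preds, truths)]
    return sum(e < threshold for e in errors) / len(errors)


def precision_curve(preds, truths, thresholds):
    """Distance precision evaluated at each threshold (one curve point each)."""
    return [distance_precision(preds, truths, t) for t in thresholds]
```

Plotting `precision_curve` against its thresholds gives one curve per tracker and sequence. The mean of `center_location_error` over the frames of a sequence gives a per-sequence CLE value of the kind reported in a table such as Table 1.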

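To address the long training time and demanding hardware of deep learning trackers, this paper uses a Gaussian kernel function to accelerate computation and a simple two-layer feed-forward convolutional network to extract robust target features, and proposes a tracking algorithm based on this simplified convolutional neural network. The first layer uses K-means to extract normalized image patches from the first frame as a filter bank that produces the simple-layer features of the target. The second layer stacks the simple-cell feature maps into a complex feature map that encodes the local structural position information of the target. Within a particle filter framework, the algorithm tracks well under target deformation, occlusion, and low resolution without the complex hardware environment of deep learning. Because the feature extraction relies on convolutional neural network features, the algorithm still faces major challenges under fast motion and when the target leaves the view. Future work will focus on tracking in such scenes.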

[1] YANG Ge, LIU Hong. Survey of visual tracking algorithms[J]. CAAI transactions on intelligent systems, 2010, 5(2): 95-105.

[2] HUANG Kaiqi, CHEN Xiaotang, KANG Yunfeng, et al. Intelligent visual surveillance: a review[J]. Chinese journal of computers, 2015, 38(6): 1093-1118. DOI:10.11897/SP.J.1016.2015.01093

[3] WU Yi, LIM J, YANG M H. Online object tracking: a benchmark[C]//Proceedings of 2013 IEEE Conference on Computer Vision and Pattern Recognition. Portland, OR, USA, 2013: 2411-2418.

[4] BABENKO B, YANG M H, BELONGIE S. Robust object tracking with online multiple instance learning[J]. IEEE transactions on pattern analysis and machine intelligence, 2011, 33(8): 1619-1632. DOI:10.1109/TPAMI.2010.226

[5] CHEN Zhen, WANG Zhao. Object tracking algorithm with metacognitive model-based particle filters[J]. CAAI transactions on intelligent systems, 2015, 10(3): 387-392.

[6] MEI Xue, LING Haibin. Robust visual tracking using ℓ1 minimization[C]//Proceedings of the 12th IEEE International Conference on Computer Vision. Kyoto, Japan, 2009: 1436-1443.

[7] ZHANG Kaihua, ZHANG Lei, YANG M H. Real-time compressive tracking[C]//Proceedings of the 12th European Conference on Computer Vision. Berlin, Germany, 2012: 864-877.

[8] HAN Hua, DING Yongsheng, HAO Kuangrong. An immune particle filter video tracking method based on color and wavelet texture[J]. CAAI transactions on intelligent systems, 2011, 6(4): 289-294.

[9] RABINER L R. A tutorial on hidden Markov models and selected applications in speech recognition[J]. Proceedings of the IEEE, 1989, 77(2): 257-286. DOI:10.1109/5.18626

[10] BAR-SHALOM Y, FORTMANN T E, CABLE P G. Tracking and data association[J]. The journal of the acoustical society of America, 1990, 87(2): 918-919. DOI:10.1121/1.398863

[11] COMANICIU D, RAMESH V, MEER P. Real-time tracking of non-rigid objects using mean shift[C]//Proceedings of 2000 IEEE Conference on Computer Vision and Pattern Recognition. Hilton Head Island, SC, USA, 2000: 142-149.

[12] ISARD M, BLAKE A. CONDENSATION-conditional density propagation for visual tracking[J]. International journal of computer vision, 1998, 29(1): 5-28. DOI:10.1023/A:1008078328650

[13] BOLME D S, BEVERIDGE J R, DRAPER B A, et al. Visual object tracking using adaptive correlation filters[C]//Proceedings of 2010 IEEE Conference on Computer Vision and Pattern Recognition. San Francisco, CA, USA, 2010: 2544-2550.

[14] HENRIQUES J F, CASEIRO R, MARTINS P, et al. Exploiting the circulant structure of tracking-by-detection with kernels[C]//Proceedings of the 12th European Conference on Computer Vision. Berlin, Germany, 2012: 702-715.

[15] HENRIQUES J F, CASEIRO R, MARTINS P, et al. High-speed tracking with kernelized correlation filters[J]. IEEE transactions on pattern analysis and machine intelligence, 2015, 37(3): 583-596. DOI:10.1109/TPAMI.2014.2345390

[16] YU Kai, JIA Lei, CHEN Yuqiang, et al. Deep learning: yesterday, today, and tomorrow[J]. Journal of computer research and development, 2013, 50(9): 1799-1804. DOI:10.7544/issn1000-1239.2013.20131180

[17] WANG Naiyan, YEUNG D Y. Learning a deep compact image representation for visual tracking[C]//Proceedings of the 26th International Conference on Neural Information Processing Systems. Lake Tahoe, USA, 2013: 809-817.

[18] ZHANG Kaihua, LIU Qingshan, WU Yi, et al. Robust visual tracking via convolutional networks without training[J]. IEEE transactions on image processing, 2016, 25(4): 1779-1792.

[19] NAM H, HAN B. Learning multi-domain convolutional neural networks for visual tracking[C]//Proceedings of 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, NV, USA, 2016: 4293-4302.

[20] ROSS D A, LIM J, LIN R S, et al. Incremental learning for robust visual tracking[J]. International journal of computer vision, 2008, 77(1/2/3): 125-141.

[21] WU Yi, LIM J, YANG M H. Object tracking benchmark[J]. IEEE transactions on pattern analysis and machine intelligence, 2015, 37(9): 1834-1848.

2018, Vol. 13
