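Speech is the most primitive and most effective way for people to exchange information, and it plays an irreplaceable role in social development. With the rapid progress of science and technology, the world has entered the information age, and speech communication technology is now used in every field. In real life, however, noise is everywhere. Speech signals are often corrupted by environmental noise during transmission, which degrades speech quality. Speech enhancement extracts the useful speech signal from a noise-corrupted signal. It reduces the interference of the noise and improves the quality and intelligibility of the enhanced speech, which makes it important in speech communication systems. Because different environmental noises have different characteristics, different enhancement methods can be chosen in practice to obtain better results.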

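Traditional unsupervised speech enhancement methods remove stationary noise well [1-2], but they perform poorly on non-stationary noise. This is the main limitation of traditional speech enhancement techniques. In recent years, deep learning has increasingly been applied to speech signal processing, for example to voice activity detection [3], emotion recognition [4-7], hearing aids [8], and speech enhancement [9-15].

Deep-learning-based speech enhancement makes no assumption about the stationarity of the noise. What matters instead is:

- how the training features and training targets are represented;
- the loss function used in training;
- the topology of the neural network.

Xu et al. [9-10] used a regression-based multilayer deep neural network (DNN) framework. It learns a nonlinear mapping between the log-power spectra of clean and noisy speech, and it successfully separates speech from background noise. Refs. [11-12] built denoising autoencoders from convolutional neural networks (CNNs) for speech denoising. Ref. [13] trained a fully convolutional network (FCN) to learn the relationship between noisy and clean speech spectra, improving speech quality and intelligibility.

Ref. [14] proposed an end-to-end speech enhancement model based on convolutional and recurrent neural networks. Its convolutional part exploits local information along the frequency and time axes of the spectrogram. A bidirectional recurrent network then models the dynamic correlation between consecutive frames, and a final fully connected layer predicts the clean spectrogram. Ref. [15] proposed a progressive learning framework based on a causal convolutional recurrent neural network. It combines the strengths of convolutional and recurrent networks, greatly reducing the number of parameters while improving speech quality and intelligibility. Ref. [16] designed an SNR-aware CNN speech enhancement model that predicts the signal-to-noise ratio (SNR) of the speech, which improves generalization to mismatched noise.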

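Most of these models process only the magnitude spectrum or the spectrogram, and they reuse the phase of the original noisy signal. Studies have confirmed that phase information matters when the time-domain waveform is reconstructed, especially as the Fourier transform length and the window overlap increase [17]. Some recent studies therefore take phase into account [18-19]. These methods, however, still map features between the time and frequency domains so that the signal can be analyzed and resynthesized with the (inverse) Fourier transform, and some phase information is lost along the way. Oord et al. [20] proposed WaveNet, which models raw audio waveforms directly in the time domain through sample-by-sample prediction and dilated convolutions.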

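This paper proposes a speech enhancement algorithm based on a time-domain fully convolutional network.

- Each branch of the network consists of six convolutional layers, and the network contains no fully connected layers.
- The network takes a waveform as input and produces a waveform as output.
- Unlike enhancement networks trained on magnitude spectra, the method performs no Fourier transform. The network is trained directly on time-domain signals, so the original phase of the signal is preserved.
- The network learns the nonlinear relationship between noisy and clean speech in the time domain, which effectively reduces the influence of phase on speech synthesis.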

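1 Basic Principles

1.1 Convolutional Neural Networks

A convolutional neural network (CNN) is generally built from convolutional layers, pooling layers, upsampling layers, and fully connected layers.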

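A convolutional layer extracts features by convolution. The neurons of the previous layer are convolved with a kernel, a bias is added, and the result is the feature map of the current layer. Kernel weights are shared, so the same kernel is applied across all neurons of the current layer, which greatly reduces the number of network parameters. A convolutional layer usually uses several kernels to capture more complete information. The update formula is [21]: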

| $ x_j^m = f\left( \sum_{i \in M_j} x_i^{m-1} * k_{i,j}^m + b_j^m \right). $ | (1) |

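The symbols in Eq. (1) are:

- $x_j^m$: the $j$-th feature map of layer $m$;
- $M_j$: the set of input feature maps taken from layer $m-1$;
- $*$: convolution;
- $k_{i,j}^m$: the kernel that connects input map $i$ to output map $j$;
- $b_j^m$: the bias;
- $f(\cdot)$: the activation function.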
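A minimal pure-Python sketch of Eq. (1) for one-dimensional signals, the case used by the time-domain network in this paper. It rests on a few assumptions:

- zero "same" padding with an odd kernel length;
- convolution implemented as cross-correlation (the kernel is not flipped), as common deep learning frameworks do;
- illustrative function names, not taken from the paper.

```python
import math
from typing import Callable, List, Sequence


def conv1d_same(x: Sequence[float], k: Sequence[float]) -> List[float]:
    """Slide kernel k over x with zero 'same' padding (odd len(k)); output length == len(x)."""
    half = len(k) // 2
    out = []
    for n in range(len(x)):
        acc = 0.0
        for j, w in enumerate(k):
            idx = n + j - half            # input sample under kernel tap j
            if 0 <= idx < len(x):         # taps outside the signal see zero padding
                acc += x[idx] * w
        out.append(acc)
    return out


def conv_layer_output(
    inputs: Sequence[Sequence[float]],        # x_i^{m-1} for i in M_j
    kernels: Sequence[Sequence[float]],       # k_{i,j}^m, one kernel per input map
    bias: float,                              # b_j^m
    f: Callable[[float], float] = math.tanh,  # activation f
) -> List[float]:
    """Eq. (1): x_j^m = f(sum_i x_i^{m-1} * k_{i,j}^m + b_j^m)."""
    total = [0.0] * len(inputs[0])
    for x_i, k_ij in zip(inputs, kernels):
        for n, v in enumerate(conv1d_same(x_i, k_ij)):
            total[n] += v
    return [f(v + bias) for v in total]
```

With identity kernels `[0, 1, 0]` and `f` set to the identity, the output map is simply the sum of the input maps plus the bias. This makes the "sum over $i \in M_j$" in Eq. (1) easy to check by hand.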

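Pooling layers are generally divided into max pooling (MaxPooling) and average pooling (AveragePooling). A region is selected and its values are replaced by their maximum or their mean. This shrinks the input, reducing dimensionality and speeding up computation. It also retains the main features of the signal and improves the robustness of the model.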
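A minimal sketch of non-overlapping 1-D pooling over a single channel, covering both variants:

- Pool size and stride are equal, as in the 2×1 max pooling of the proposed network.
- Dropping a trailing partial window is an assumption. It matches the default `padding='valid'` of Keras `MaxPooling1D`.

```python
from typing import List, Sequence


def pool1d(x: Sequence[float], size: int = 2, mode: str = "max") -> List[float]:
    """Non-overlapping 1-D pooling (stride == size); a trailing partial window is dropped."""
    if mode not in ("max", "avg"):
        raise ValueError("mode must be 'max' or 'avg'")
    out = []
    for start in range(0, len(x) - size + 1, size):
        window = x[start:start + size]
        # Max pooling keeps the largest value, average pooling the mean of the window.
        out.append(max(window) if mode == "max" else sum(window) / size)
    return out
```

A 1 000-sample frame becomes 500 values after one 2×1 pooling layer and 250 after two.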

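In a CNN, fully connected (FC) layers are usually placed at the end of the network. They compute weighted sums of the preceding features to produce a fixed-length feature vector, and they can act as a classifier. In speech enhancement, however, FC layers suffer from parameter redundancy and introduce a large number of trainable parameters.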

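An upsampling layer (UpSampling) enlarges feature maps and increases their resolution, usually by interpolation. Upsampling restores the feature maps to the size of the input, so that a prediction can be made for every element.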
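Nearest-neighbour upsampling, the method used in the proposed network, simply repeats every sample. Keras `UpSampling1D` does the same along the time axis. The sketch below shows that pooling followed by upsampling restores the length but not the detail discarded by pooling. That detail has to be recovered by the layers that follow.

```python
from typing import List, Sequence


def upsample_nearest(x: Sequence[float], factor: int = 2) -> List[float]:
    """Nearest-neighbour upsampling: repeat every sample `factor` times."""
    return [v for v in x for _ in range(factor)]


pooled = [3.0, 5.0]              # e.g. 2x1 max-pooled values of [1, 3, 2, 5]
print(upsample_nearest(pooled))  # [3.0, 3.0, 5.0, 5.0]
```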

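1.2 Activation Functions

Activation functions are essential to neural network learning. The weighted sums and convolutions computed by a neural network are linear, whereas most real-world data distributions are nonlinear. Adding activation functions introduces nonlinearity into the network and strengthens its learning ability.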

Commonly used activation functions are the Sigmoid, tanh, and ReLU functions, given respectively by:

| $ f(x) = 1/(1 + {e^{ - x}}) ,$ | (2) |

| $ f(x) = ({e^x} - {e^{ - x}})/({e^x} + {e^{ - x}}),$ | (3) |

| $ f(x) = \max(0, x). $ | (4) |
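For reference, Eqs. (2)-(4) in plain Python.

- The sigmoid uses a numerically stable two-branch form. This is an implementation detail, not part of the text.
- `tanh_eq3` follows Eq. (3) literally and is checked against `math.tanh`.
- The output ranges are (0, 1) for Sigmoid, (−1, 1) for tanh (the activation used in the proposed network), and [0, ∞) for ReLU.

```python
import math


def sigmoid(x: float) -> float:
    """Eq. (2); the two branches avoid overflow of exp(-x) for large negative x."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def tanh_eq3(x: float) -> float:
    """Eq. (3) written literally; overflows for |x| > ~709, where math.tanh should be used."""
    ep, en = math.exp(x), math.exp(-x)
    return (ep - en) / (ep + en)


def relu(x: float) -> float:
    """Eq. (4)."""
    return max(0.0, x)
```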

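Batch normalization (BN) normalizes the output data to zero mean and unit variance. It is generally placed before the activation function. BN speeds up network training and alleviates vanishing and exploding gradients.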

BN proceeds as in Eqs. (5)-(8) [22]. Consider a training mini-batch $B = \{x_1, \dots, x_m\}$ of $m$ samples. Its mean is:

| $ \mu_B = \frac{1}{m}\sum_{i=1}^{m} x_i. $ | (5) |

The variance of the training samples is:

| $ \sigma_B^2 = \frac{1}{m}\sum_{i=1}^{m} \left(x_i - \mu_B\right)^2. $ | (6) |

Each element of the training samples is then normalized:

| $ \hat{B} = \frac{B - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}}. $ | (7) |

In Eq. (7), $\epsilon$ is a small positive constant that prevents division by zero.

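Finally, to restore the representational capacity of the data, the trainable parameters $\gamma$ and $\beta$ are introduced: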

| $ y = \gamma \hat{B} + \beta. $ | (8) |
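A sketch of the training-time BN forward pass of Eqs. (5)-(8), for one feature over a mini-batch.

- The default `eps=1e-3` mirrors the default of Keras `BatchNormalization`. It is an assumption, since the text does not give ε.
- At inference time, frameworks normalize with running averages of μ_B and σ_B² instead. This sketch omits that step.

```python
import math
from typing import List, Sequence


def batch_norm(batch: Sequence[float], gamma: float = 1.0, beta: float = 0.0,
               eps: float = 1e-3) -> List[float]:
    m = len(batch)
    mu = sum(batch) / m                                       # Eq. (5): batch mean
    var = sum((x - mu) ** 2 for x in batch) / m               # Eq. (6): biased (1/m) variance
    x_hat = [(x - mu) / math.sqrt(var + eps) for x in batch]  # Eq. (7): normalize
    return [gamma * v + beta for v in x_hat]                  # Eq. (8): scale and shift
```

With `gamma=1`, `beta=0` and `eps=0`, the output has exactly zero mean and unit variance. Learned values of `gamma` and `beta` let the network undo the normalization where that helps.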

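A CNN usually ends with several FC layers, which map the feature maps to a feature vector of fixed size. A fully convolutional network (FCN) builds on the CNN by replacing its FC layers with convolutional layers, so that the whole network consists of convolutional layers.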

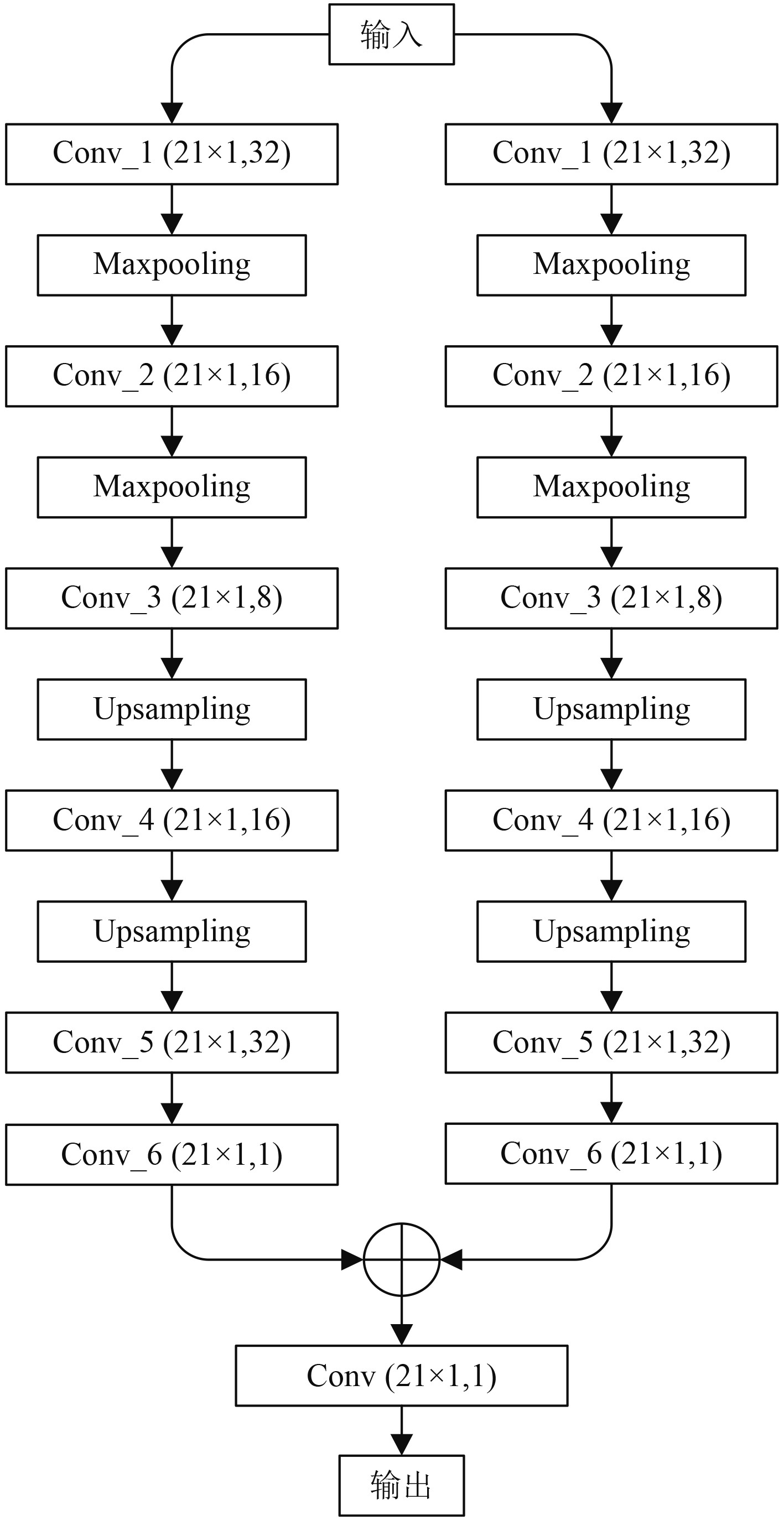

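Ref. [23] compared several deep-learning-based speech enhancement methods. It found that FC layers do not preserve the high-frequency information of the original signal well, and it recommended convolutional networks instead. In this paper, the FC layers of the CNN are replaced with convolutional layers and upsampling layers are added. The result is a speech enhancement method based on a time-domain fully convolutional network, whose structure is shown in Fig. 1.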

Fig. 1 Structure of the time-domain fully convolutional network

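Both the input and the output of the network are one-dimensional 1 000×1 time-domain signals, so the network maps a waveform directly to a waveform. As Fig. 1 shows, the fully convolutional network consists of convolutional, pooling, and upsampling layers.

The input signal is fed through two network branches. Their output feature maps are summed by an adder and passed to a final convolutional layer, which serves as the output layer and produces the enhanced signal. The left and right branches have the same structure, each consisting of six convolutional layers, two pooling layers, and two upsampling layers. The layer settings are:

- Convolutional layers: 21×1 kernels, stride 1×1, "same" padding. Each is followed by BN and a tanh activation. The numbers of kernels are 32, 16, 8, 16, 32, and 1, respectively.
- Pooling layers: max pooling (MaxPooling) with a pool size of 2×1.
- Upsampling layers: nearest-neighbor interpolation, each with a size of 2×1. The two pooling layers in the first half of the network reduce the signal length to 1/4 of the original, and the two upsampling layers restore the output to the input length.

The two branches are joined by the adder and fed to the output convolutional layer, yielding a 1 000×1 output signal.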
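The following pure-Python sketch traces the signal length through one branch for a 1 000-sample input. It also counts the convolution weights and biases.

- From the text: the filter counts, kernel size, pooling size, and upsampling size.
- Assumed: the order in which pooling and upsampling layers are interleaved with the convolutions, and a single 21×1 filter in the output layer.
- Not counted: BN parameters.

```python
from typing import List, Tuple

KERNEL = 21  # 21x1 kernels, stride 1, 'same' padding (from the text)

# Assumed layer order for one branch: the text gives the filter counts
# (32, 16, 8, 16, 32, 1) and two pooling plus two upsampling layers of size 2,
# but the exact interleaving below is a guess.
BRANCH: List[Tuple[str, int]] = [
    ("conv", 32), ("pool", 2), ("conv", 16), ("pool", 2), ("conv", 8),
    ("up", 2), ("conv", 16), ("up", 2), ("conv", 32), ("conv", 1),
]


def trace_branch(length: int, channels: int = 1) -> Tuple[int, int, int]:
    """Return (output length, output channels, conv weights + biases) for one branch."""
    params = 0
    for kind, arg in BRANCH:
        if kind == "conv":
            params += KERNEL * channels * arg + arg  # kernel weights + one bias per filter
            channels = arg                           # 'same' padding keeps the length
        elif kind == "pool":
            length //= arg                           # max pooling, stride = pool size
        else:
            length *= arg                            # nearest-neighbour upsampling
    return length, channels, params


length, channels, branch_params = trace_branch(1000)
# Two identical branches are summed element-wise; the output layer is assumed
# to be a single 21x1 filter applied to the summed map.
total = 2 * branch_params + (KERNEL * channels * 1 + 1)
dense_1000 = 1000 * 1000 + 1000  # one 1000 -> 1000 fully connected layer, for comparison
print(length, channels, branch_params, total, dense_1000)  # 1000 1 28329 56680 1001000
```

Under these assumptions, the whole network has fewer than 57 000 convolution parameters. A single 1 000-to-1 000 fully connected layer would need about one million. The trace also shows why the frame length matters: a length that is not a multiple of 4 does not round-trip (1 002 samples come out as 1 000). In Keras, the same branch could be stacked from `Conv1D`, `BatchNormalization`, `Activation("tanh")`, `MaxPooling1D` and `UpSampling1D`, with the branches joined by an `Add` layer.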

2.2 Algorithm Flow

Assume the noisy speech signal is:

| $ m(n) = s(n) + d(n). $ | (9) |

In Eq. (9), $m(n)$ is the noisy speech, $s(n)$ the clean speech, and $d(n)$ the additive noise.

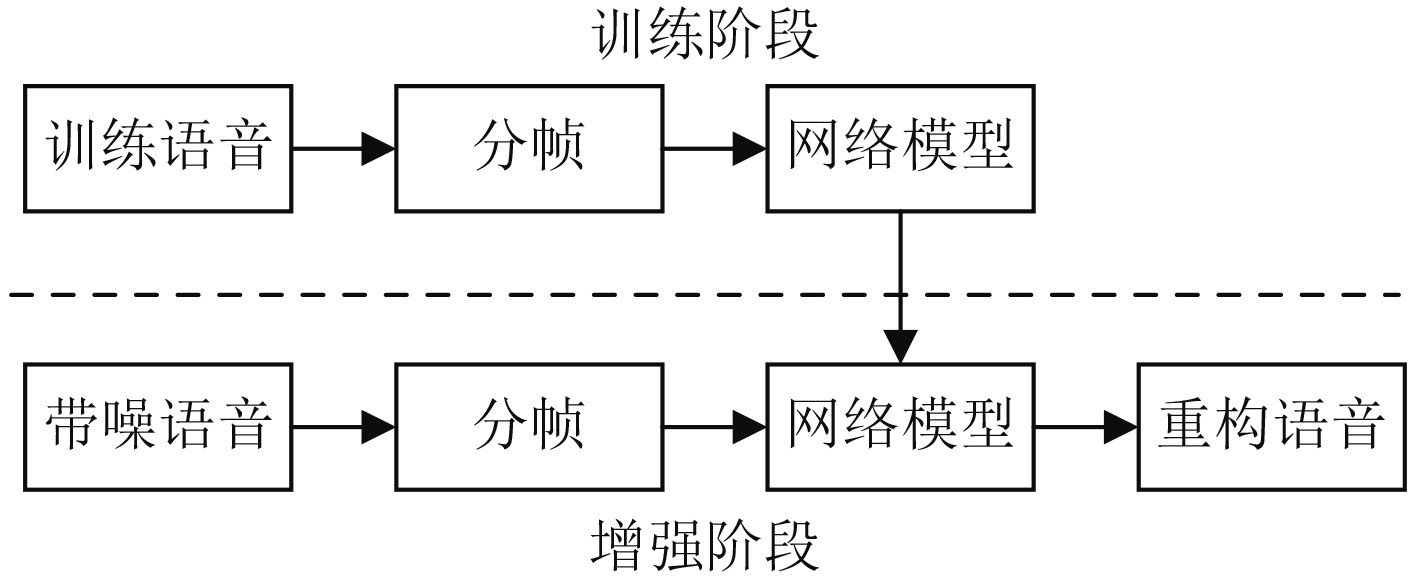

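Fig. 2 shows the flow of the proposed speech enhancement method. It consists of a training stage and an enhancement stage:

- Training stage: the time-domain waveforms of clean and noisy speech are used directly as training data, so no Fourier transform is needed. The training data are split into frames and fed into the neural network. Training yields a model of the nonlinear mapping between noisy and clean speech.
- Enhancement stage: the noisy test speech is split into frames and fed into the trained model, which outputs the enhanced speech.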

Fig. 2 Flowchart of speech enhancement based on the time-domain fully convolutional network
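Both stages frame the waveform directly, without a Fourier transform. Below is a minimal sketch of the enhancement stage. It assumes non-overlapping 1 000-sample frames with the last frame zero-padded, because the text states neither the frame shift nor how the final partial frame is handled. `model` is a hypothetical stand-in for the trained network: any function that maps a 1 000-sample frame to a 1 000-sample frame.

```python
from typing import Callable, List, Sequence

FRAME_LEN = 1000  # samples per frame: 125 ms at 8 000 Hz (from the text)


def split_frames(signal: Sequence[float], frame_len: int = FRAME_LEN) -> List[List[float]]:
    """Cut a waveform into non-overlapping frames, zero-padding the last one."""
    frames = []
    for start in range(0, len(signal), frame_len):
        frame = list(signal[start:start + frame_len])
        frame += [0.0] * (frame_len - len(frame))
        frames.append(frame)
    return frames


def enhance(noisy: Sequence[float], model: Callable[[List[float]], List[float]],
            frame_len: int = FRAME_LEN) -> List[float]:
    """Frame the noisy waveform, map each frame with the model, re-join, and trim the padding."""
    out: List[float] = []
    for frame in split_frames(noisy, frame_len):
        out.extend(model(frame))
    return out[:len(noisy)]
```

With an identity function as `model`, `enhance` returns its input unchanged. This is a quick way to check that framing and re-joining lose no samples.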

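The simulations were run in Python with the TensorFlow 2.3 framework, using the following training settings:

- learning rate: 0.001;
- loss function: mean squared error (MSE);
- optimizer: adaptive moment estimation (Adam);
- number of iterations: 50.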

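3.1 Data Processing

Both the training speech and the test speech come from the TIMIT corpus, at a sampling rate of 8 000 Hz. For training, the 3 696 sentences of the TIMIT training set were split into frames of 1 000 samples, i.e. 125 ms per frame. From these, 100 000 frames were selected at random. The noises buccaneer1, factory1, hfchannel, pink, and white from NOISEX-92 were used. For each clean frame, the SNR of the added noise was drawn at random from −5 to 15 dB.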
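Eq. (9) and the data description above can be combined into a per-frame mixing routine. The noise is scaled by a factor $a$ so that the energy ratio of $s(n)$ to $a\,d(n)$ equals the target SNR. This sketch rests on the following assumptions:

- The SNR is drawn uniformly from [−5, 15] dB.
- A noise excerpt of the same length as the clean frame has already been cut from a NOISEX-92 recording.
- The helper names are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def mix_at_snr(clean: Sequence[float], noise: Sequence[float], snr_db: float) -> List[float]:
    """Eq. (9) with scaled noise: m(n) = s(n) + a*d(n), where a makes the SNR equal snr_db."""
    p_s = sum(v * v for v in clean)  # clean-speech energy
    p_d = sum(v * v for v in noise)  # noise energy (must be non-zero)
    a = math.sqrt(p_s / (p_d * 10 ** (snr_db / 10)))
    return [s + a * d for s, d in zip(clean, noise)]


def make_training_pair(clean_frame: Sequence[float], noise_frame: Sequence[float],
                       rng: random.Random) -> Tuple[List[float], List[float]]:
    """Return (noisy input, clean target) for one frame; the SNR is drawn per frame."""
    snr_db = rng.uniform(-5.0, 15.0)  # assumed uniform draw from the [-5, 15] dB range
    return mix_at_snr(clean_frame, noise_frame, snr_db), list(clean_frame)
```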

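3.2 Results Analysis

The simulation results are evaluated objectively with three metrics: the SNR, the perceptual evaluation of speech quality (PESQ), and the log spectral distance (LSD) [12]. PESQ is an objective measure of speech quality standardized by the International Telecommunication Union as ITU-T P.862. Its scores range from −0.5 to 4.5, and higher scores indicate better speech quality.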

The SNR measures speech distortion in the time domain and the LSD measures it in the frequency domain. They are defined as:

| $ \text{SNR} = 10\lg\frac{\sum_{n=1}^{N} s^2(n)}{\sum_{n=1}^{N} \left| m(n) - s(n) \right|^2}, $ | (10) |

| $ \text{LSD} = \frac{1}{T}\sum_{t=0}^{T-1}\sqrt{\frac{1}{L/2+1}\sum_{k=0}^{L/2}\left[10\lg\frac{P(k)}{\hat{P}(k)}\right]^2}. $ | (11) |
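A stdlib-only sketch of Eqs. (10) and (11).

- `snr_db` takes the clean signal $s(n)$ and whichever signal is being compared against it.
- In `lsd`, $T$ is read as the number of frames, $L$ as the frame (DFT) length, and $P(k)$, $\hat{P}(k)$ as the power spectra of the clean and compared frames. This reading is assumed, since the text does not define these symbols.
- The rectangular window, the naive DFT, and the small `floor` that avoids taking the log of zero are also assumptions.

```python
import cmath
import math
from typing import List, Sequence


def snr_db(clean: Sequence[float], other: Sequence[float]) -> float:
    """Eq. (10): 10*lg(sum s^2 / sum |other - s|^2)."""
    num = sum(s * s for s in clean)
    den = sum((o - s) ** 2 for s, o in zip(clean, other))
    return 10 * math.log10(num / den)


def power_spectrum(frame: Sequence[float]) -> List[float]:
    """|DFT|^2 for bins k = 0..L/2 (naive O(L^2) DFT, rectangular window)."""
    L = len(frame)
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * n / L) for n, x in enumerate(frame))) ** 2
            for k in range(L // 2 + 1)]


def lsd(clean_frames: Sequence[Sequence[float]], other_frames: Sequence[Sequence[float]],
        floor: float = 1e-10) -> float:
    """Eq. (11): mean over T frames of the RMS dB difference of the power spectra."""
    total = 0.0
    for c, o in zip(clean_frames, other_frames):
        P, P_hat = power_spectrum(c), power_spectrum(o)
        sq = [(10 * math.log10((p + floor) / (q + floor))) ** 2 for p, q in zip(P, P_hat)]
        total += math.sqrt(sum(sq) / len(sq))  # len(sq) == L/2 + 1
    return total / len(clean_frames)
```

Doubling a signal's amplitude multiplies its power spectrum by 4, so the LSD against the original is 10 lg 4 ≈ 6.02 dB. Identical signals give an LSD of 0.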

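To evaluate the effectiveness of the proposed time-domain FCN method, it is compared with three baselines:

- A CNN-based method with the same structure as one branch of the proposed network, except that its output layer is an FC layer.
- The network of Ref. [11], which consists of four groups of convolutional layers and one FC layer.
- The network of Ref. [12], which consists of two convolutional layers, one pooling layer, and three FC layers.

All methods are trained on the same training set, and their performance is then compared.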

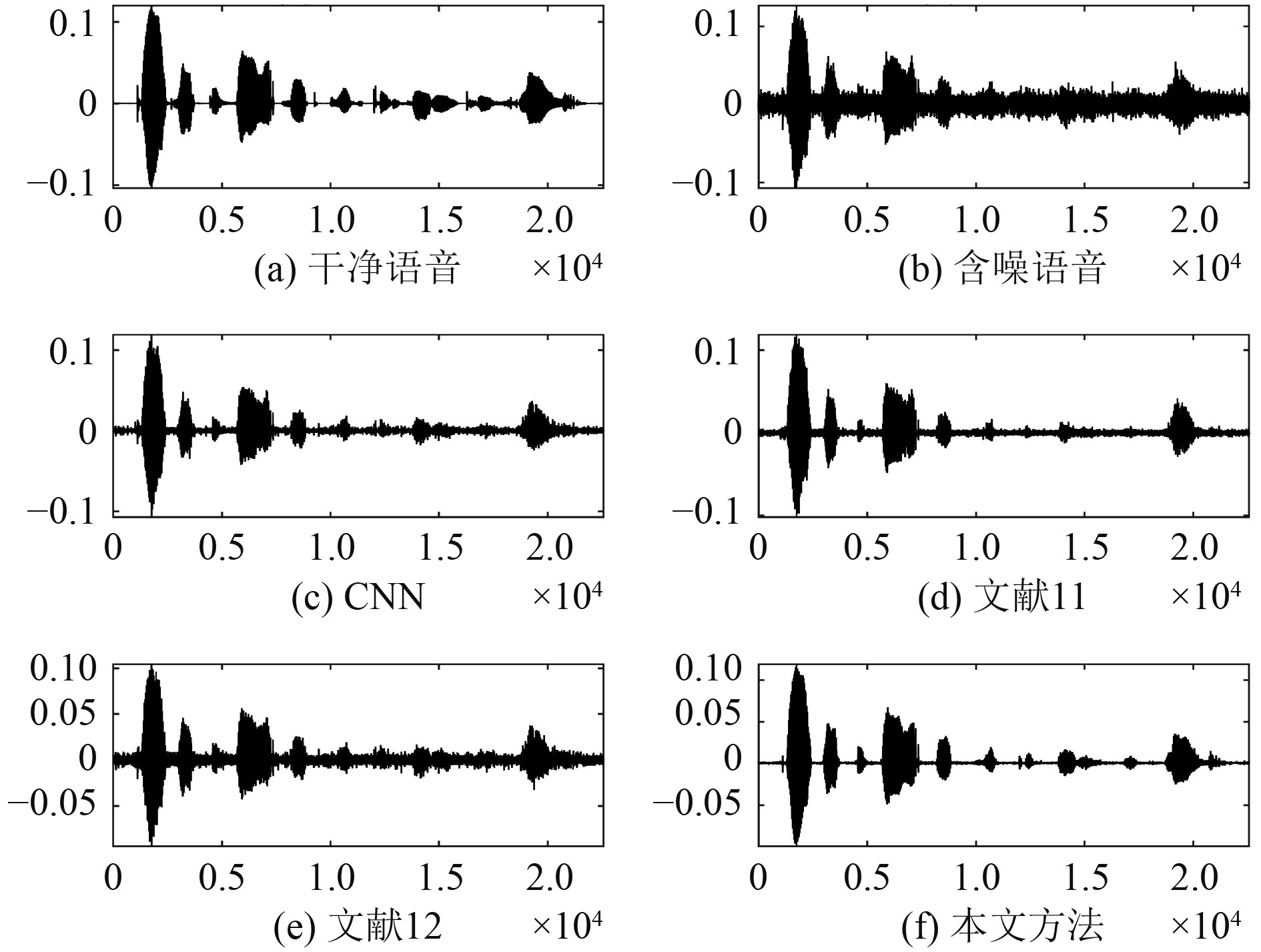

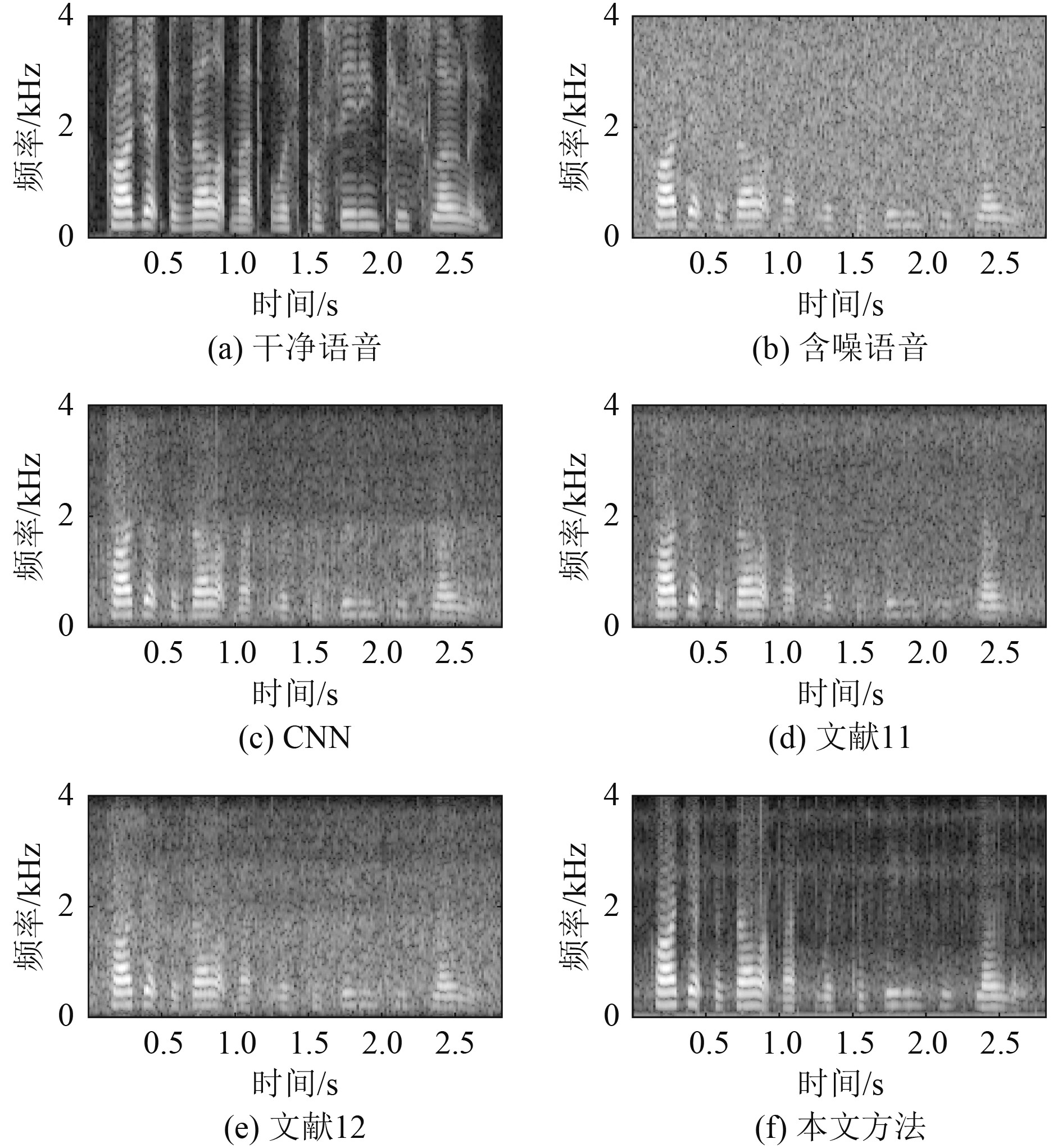

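To compare the four methods more directly, a speech segment from the test set was corrupted with white noise at 5 dB. In Figs. 3 and 4, panels (a) and (b) show the waveforms and spectrograms of the clean and noisy speech. Panels (c)-(f) show those of the speech enhanced by the CNN, Ref. [11], Ref. [12], and the proposed method. The proposed method clearly performs better than the other three.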

Fig. 3 Time-domain waveforms under white noise (5 dB)

Fig. 4 Spectrograms of the enhanced speech under white noise (5 dB)

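Tables 1 and 2 give the scores of the four enhancement methods on test speech corrupted by white noise, which matches the training noise, at 0 dB and 5 dB, respectively.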

Table 1 Performance under matched white noise (0 dB)

Table 2 Performance under matched white noise (5 dB)

Under matched white noise, the proposed algorithm scores better than the other methods overall.

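To assess the generalization ability of the proposed model, mismatched f16 noise was added to the test speech at 0 dB and 5 dB. The results are shown in Tables 3 and 4.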

Table 3 Performance under mismatched f16 noise (0 dB)

Table 4 Performance under mismatched f16 noise (5 dB)

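The results show that the proposed method also performs well under mismatched noise. It achieves the best SNR and PESQ scores, and its PESQ scores in particular are clearly higher than those of the other three methods. This indicates that the method also enhances speech well in unseen noise environments.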

Overall, the proposed time-domain FCN method effectively removes noise at low SNRs, improves speech quality, and generalizes well.

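4 Conclusion

Most current deep-learning-based speech enhancement algorithms process the magnitude spectrum of the speech signal in the frequency domain, so phase information is lost. This paper proposes a speech enhancement method based on a time-domain fully convolutional network. The network is trained directly on time-domain signals, which preserves the phase information, and it learns the nonlinear mapping between noisy and clean speech. Its waveform-in, waveform-out design performs speech enhancement entirely in the time domain. Simulation results show that at low SNRs the method significantly improves speech quality and outperforms the compared methods. It also generalizes well, which confirms its effectiveness.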

[1] PALIWAL K, SCHWERIN B, WÓJCICKI K. Speech enhancement using a minimum mean-square error short-time spectral modulation magnitude estimator[J]. Speech Communication, 2012, 54(2): 282-305. DOI:10.1016/j.specom.2011.09.003

[2] KUMAR M A, CHARI K M. Noise reduction using modified wiener filter in digital hearing aid for speech signal enhancement[J]. Journal of Intelligent Systems, 2020, 29(1): 1360-1378.

[3] TACHIOKA Y. DNN-based voice activity detection using auxiliary speech models in noisy environments[C]//IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2018: 5529-5533.

[4] FAYEK H M, LECH M, CAVEDON L. Evaluating deep learning architectures for speech emotion recognition[J]. Neural Networks: The Official Journal of the International Neural Network Society, 2017, 92: 60-68. DOI:10.1016/j.neunet.2017.02.013

[5] ZHANG S, CHEN A, GUO W, et al. Learning deep binaural representations with deep convolutional neural networks for spontaneous speech emotion recognition[J]. IEEE Access, 2020, 8: 23496-23505. DOI:10.1109/ACCESS.2020.2969032

[6] ZHANG S, ZHANG S, HUANG T, et al. Speech emotion recognition using deep convolutional neural network and discriminant temporal pyramid matching[J]. IEEE Transactions on Multimedia, 2018, 20: 1576-1590. DOI:10.1109/TMM.2017.2766843

[7] FAYEK H M, LECH M, CAVEDON L. Evaluating deep learning architectures for speech emotion recognition[J]. Neural Networks, 2017, 92: 60-68. DOI:10.1016/j.neunet.2017.02.013

[8] WANG D L. Deep learning reinvents the hearing aid[J]. IEEE Spectrum, 2017, 54(3): 32-37. DOI:10.1109/MSPEC.2017.7864754

[9] XU Y, DU J, DAI L R, et al. A regression approach to speech enhancement based on deep neural networks[J]. IEEE/ACM Transactions on Audio, Speech, and Language Processing, 2015, 23: 7-19. DOI:10.1109/TASLP.2014.2364452

[10] XU Y, DU J, DAI L R, et al. An experimental study on speech enhancement based on deep neural networks[J]. IEEE Signal Processing Letters, 2013, 21(1): 65-68.

[11] ZHANG M L, CHEN Y. Speech enhancement algorithm based on fully convolutional neural network[J]. Application Research of Computers, 2020, 37(S1): 135-137. (in Chinese)

[12] KOUNOVSKY T, MALEK J. Single channel speech enhancement using convolutional neural network[C]//IEEE International Workshop of Electronics, Control, Measurement, Signals and their Application to Mechatronics (ECMSM), 2017: 1-5.

[13] PARK S R, LEE J. A fully convolutional neural network for speech enhancement[C]//Interspeech 2017: 1993-1997.

[14] ZHAO H, ZARAR S, TASHEV I, et al. Convolutional-recurrent neural networks for speech enhancement[C]//IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2018: 2401-2405.

[15] LI A, YUAN M, ZHENG C, et al. Speech enhancement using progressive learning-based convolutional recurrent neural network[J]. Applied Acoustics, 2020, 166: 107347. DOI:10.1016/j.apacoust.2020.107347

[16] FU S W, TSAO Y, LU X. SNR-aware convolutional neural network modeling for speech enhancement[C]//Interspeech 2016: 3768-3772.

[17] PALIWAL K K, WÓJCICKI K K, SHANNON B J. The importance of phase in speech enhancement[J]. Speech Communication, 2011, 53(4): 465-494. DOI:10.1016/j.specom.2010.12.003

[18] YIN D, LUO C, XIONG Z, et al. PHASEN: A phase-and-harmonics-aware speech enhancement network[J]. arXiv:1911.04679, 2019.

[19] WILLIAMSON D S, WANG Y, WANG D L. Complex ratio masking for monaural speech separation[J]. IEEE/ACM Transactions on Audio, Speech, and Language Processing, 2016, 24(3): 483-492. DOI:10.1109/TASLP.2015.2512042

[20] OORD A, DIELEMAN S, ZEN H, et al. WaveNet: A generative model for raw audio[J]. arXiv:1609.03499, 2016.

[21] MIAO Y Q, ZOU W, LIU T L, et al. Speech emotion recognition based on parameter transfer and convolutional recurrent neural network[J]. Computer Engineering and Applications, 2019, 55(10): 135-140. DOI:10.3778/j.issn.1002-8331.1802-0089 (in Chinese)

[22] LUO R Z, WANG R J, ZHANG K, et al. Image denoising method based on residual convolutional autoencoder network[J]. Computer Simulation, 2021, 38(5): 455-461. DOI:10.3969/j.issn.1006-9348.2021.05.093 (in Chinese)

[23] FU S W, TSAO Y, LU X, et al. Raw waveform-based speech enhancement by fully convolutional networks[C]//2017 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA ASC), 2017: 6-12.

2022, Vol. 44
